Part 3 of 3 in the AI Copyright Wars series.

Paul McCartney, Elton John, and Sir Ian McKellen do not typically share a political cause. On March 19, 2026, they shared a victory. UK Science Minister Liz Kendall confirmed the government “no longer has a preferred option” on UK AI copyright training rules — killing a broad exception that would have let AI companies train on copyrighted creative works by default.

Reversing course on copyright policy is not a creator victory — it is an abdication dressed as a decision. By retreating to “market-led licensing” without enforcement teeth, without a timeline, and without penalties, the government handed a £146 billion creative industry a press release instead of a law.

Across the Channel, the EU wrote penalties into the AI Act — up to 7% of global turnover for violations. Britain wrote “best practices” and promised to “keep under review.” Between those two responses lies the definition of what this reversal actually means for creators, AI firms, and anyone building a licensing business on British intellectual property.

When 88% Isn’t Enough

Over 11,500 people and organizations responded to a consultation under the Data (Use and Access) Act 2025, which, according to the government’s progress statement, required a formal update on AI and copyright. Of online respondents, 88% demanded mandatory licensing: a requirement that AI companies pay before training on copyrighted works. Just 3% supported the opt-out model Downing Street had championed, and another 7% wanted no change to existing law.(https://ipwatchdog.com/2025/12/16/respondents-uk-ai-consultation-overwhelmingly-want-ai-companies-license-copyrighted-works-all-cases/)

Ellie Peers, General Secretary of the Writers’ Guild of Great Britain, demanded enforcement over erosion: “UK copyright needs to be enforced not weakened” to end “the industrial-scale theft of writers’ and other creators’ work.” UK Music chief executive Tom Kiehl called the reversal “a major victory for campaigners”.

Victory implies a new legal position. None exists. Keeping the option 88% rejected alongside the option 88% demanded is not consultation; it is postponement dressed as process.

UK AI Copyright: What Enforcement Looks Like Elsewhere

Cross-jurisdictional comparison exposes a structural problem. Under the EU AI Act, Article 99 sets three fine tiers depending on the violation: €7.5 million or 1% of worldwide annual turnover, €15 million or 3%, and €35 million or 7%, in each case whichever is higher. For the largest companies the percentage is the binding figure, so the upper tiers scale to 3% and 7% of worldwide annual turnover. For a company the size of Alphabet or Meta, that percentage translates to billions in potential exposure. Penalties at that scale shape corporate behavior before any regulator files a case.
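The tier structure described above can be made concrete with a small calculation. The following is a minimal sketch, assuming the "whichever is higher" rule that Article 99 applies to large undertakings; the tier labels are shorthand invented for this example, and the €300 billion turnover is a hypothetical round number, not any company's reported figure.

```python
# Fine tiers: (fixed amount in euros, share of worldwide annual turnover).
# Tier names are shorthand labels chosen for this sketch, not the Act's wording.
TIERS = {
    "incorrect_information": (7_500_000, 0.01),
    "other_obligations": (15_000_000, 0.03),
    "prohibited_practices": (35_000_000, 0.07),
}


def max_fine(tier: str, turnover_eur: float) -> float:
    """Upper bound on a fine, assuming the higher of the two amounts applies."""
    fixed, share = TIERS[tier]
    return max(fixed, share * turnover_eur)


# Hypothetical turnover of EUR 300 billion, used only to show the scale.
HYPOTHETICAL_TURNOVER = 300e9

for tier in TIERS:
    ceiling = max_fine(tier, HYPOTHETICAL_TURNOVER)
    print(f"{tier}: up to EUR {ceiling / 1e9:.1f} billion")
```

For a small firm the fixed amount dominates instead: at a hypothetical €100 million turnover, 1% is only €1 million, so the lowest tier stays at €7.5 million under this rule.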

EU legislators wrote penalties into the AI Act with precision. Japan chose the opposite approach with equal clarity. Article 30-4 of the Japanese Copyright Act grants AI developers a broad commercial training exception, provided outputs do not reproduce copyrighted “enjoyment.” Permissive, yes — but defined. AI companies operating in Japan know exactly where the boundaries sit.

Britain occupies a third position: neither Brussels’ enforcement muscle nor Tokyo’s permissive clarity. Existing UK copyright law permits text and data mining only for non-commercial research, meaning commercial AI training on copyrighted works technically requires authorization. Authorization from whom, on what terms, enforced by what mechanism? McCartney and Elton John can retain King’s Counsel; a session musician, a romance novelist, or a children’s book illustrator cannot. What this analysis terms the Enforcement Vacuum — a regime where the right exists in statute but the remedy is priced out of reach — now defines British AI copyright policy by default.

Here is the reversal’s deepest irony, and the detail the victory narrative obscures: the opt-out exception that creators spent a year fighting would have been better for them than what replaced it. An opt-out creates an administrative framework — a defined mechanism where rights holders register objections, AI companies demonstrate compliance, and regulators audit both. That framework, however creator-hostile in principle, generates a compliance record, a legal foothold, and a basis for enforcement when violations occur.

That vacuum generates none of those things. It does not protect creators — no mechanism exists to enforce their rights at scale. It does not liberate AI companies — no safe harbor exists to shield their training. That reversal produced the one outcome worse for both sides than the policy it defeated.

For creators, that vacuum means rights without remedies. For AI companies, it means operational ambiguity — training that may or may not be lawful depending on a legal interpretation no court has tested. For the government, it means deferring the core UK AI copyright question to judges who never ran for office and will have no industrial strategy brief when they rule.

What a Press Release Costs Per Month

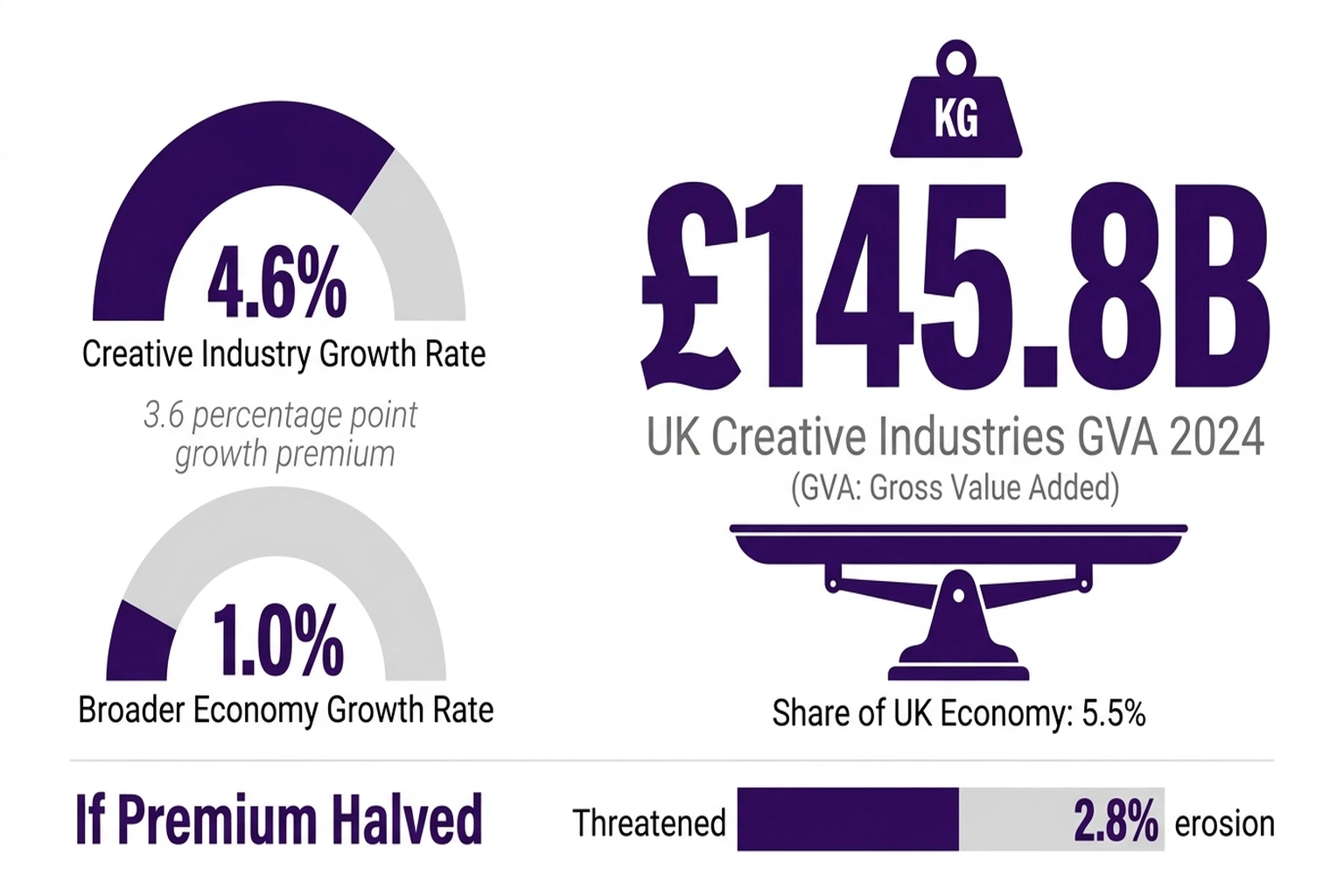

Celebration arithmetic collapses on contact with real numbers. UK creative industries generated £145.8 billion in gross value added in 2024 — 5.5% of the entire UK economy.(https://www.gov.uk/government/statistics/dcms-economic-estimates-gva-2024-provisional/dcms-sectors-economic-estimates-gross-value-added-2024-provisional) Growth hit 4.6% between 2023 and 2024, more than four times the broader economy’s 1.0% rate,(https://www.gov.uk/government/statistics/dcms-economic-estimates-gva-2024-provisional/dcms-sectors-economic-estimates-gross-value-added-2024-provisional) continuing a decade-long pattern of outperformance.

That 3.6-percentage-point growth premium is itself at stake. Creative industries outperform the broader economy precisely because they produce high-value intellectual property, the asset class now being absorbed into AI training sets without compensation. If unlicensed training erodes that premium by even half, narrowing creative industry growth from 4.6% to 2.8%, the cumulative cost over three years is roughly £16 billion in lost GVA relative to the current trajectory. If the premium collapses entirely, with creative industries growing at the economy-wide 1.0%, the three-year gap exceeds £32 billion. No one in government has published either figure.
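The three-year figures can be reproduced with a short calculation. This sketch assumes constant annual growth rates from the 2024 base of £145.8 billion and sums the yearly shortfall against the 4.6% trajectory over three years; that cumulative reading is an assumption, chosen because it yields figures in line with the £16 billion and £32 billion cited here.

```python
BASE_GVA = 145.8  # GBP billion, 2024 creative industries GVA


def cumulative_gap(baseline_rate: float, scenario_rate: float, years: int = 3) -> float:
    """Sum of yearly GVA shortfalls (GBP billion) between two constant growth paths."""
    gap = 0.0
    for year in range(1, years + 1):
        baseline = BASE_GVA * (1 + baseline_rate) ** year
        scenario = BASE_GVA * (1 + scenario_rate) ** year
        gap += baseline - scenario
    return gap


half_premium = cumulative_gap(0.046, 0.028)  # growth premium halved
no_premium = cumulative_gap(0.046, 0.010)    # growth premium gone

print(f"Premium halved: GBP {half_premium:.1f} billion over three years")
print(f"Premium gone:   GBP {no_premium:.1f} billion over three years")
```

Changing `years` or the scenario rate shows how sensitive the gap is to each assumption; the shortfall widens every year because the two paths diverge.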

No one, whether in government, the AI industry, or the collecting societies, disputes that AI models are already training on British copyrighted material. The consultation ran for more than a year, and throughout that period training continued with no licensing framework in place. Every month without that framework is a month where the compensation owed to creators is zero.

£145.8 billion divided by 12 equals roughly £12.15 billion in creative output each month. Absent transparency requirements — which also do not yet exist — no one can quantify what share enters AI training pipelines.

If even 2% of annual creative output feeds those pipelines without licensing, the result is approximately £2.9 billion per year in unlicensed value transfer — a figure neither the government nor any published industry analysis has calculated. That assumption is illustrative; absent mandatory disclosure, the real number is unknowable. Halving it to 1% still yields £1.45 billion — and either figure dwarfs the cost of building a licensing framework.
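The arithmetic behind these figures is simple enough to check directly. The sketch below treats the share of output entering training pipelines as a free parameter, since the 1% and 2% values are illustrative assumptions rather than measurements.

```python
ANNUAL_GVA = 145.8  # GBP billion, 2024 creative industries GVA

# Average creative output per month.
monthly_output = ANNUAL_GVA / 12


def unlicensed_transfer(share: float) -> float:
    """Annual value (GBP billion) of output used for training at an assumed share."""
    return ANNUAL_GVA * share


print(f"Monthly output: GBP {monthly_output:.2f} billion")
for share in (0.01, 0.02):  # illustrative shares, not measured values
    annual = unlicensed_transfer(share)
    print(f"{share:.0%} share: GBP {annual:.2f} billion per year, "
          f"GBP {annual / 12:.3f} billion per month")
```

Because the relationship is linear, any disclosed share can be dropped into `unlicensed_transfer` once transparency rules make the real figure available.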

Run those numbers against the enforcement regimes that actually exist. Under the EU AI Act’s penalty ceiling of 7% of global turnover, unauthorized training on £2.9 billion worth of copyrighted material would expose a large AI company to penalties in the hundreds of millions. In London, the same conduct faces a progress statement and a promise to “keep under review.” That is not a policy difference — it is a deterrence ratio approaching infinity.

The Strongest Case for Doing Nothing

Strict copyright enforcement, AI industry advocates contend, pushes training to less regulated jurisdictions. If Britain writes tough rules while competitors write none, British startups lose and British creators still gain nothing because the training happens elsewhere.

Cambridge University Press president Mandy Hill welcomed the reversal but warned against reintroduction of similarly restrictive proposals. Even creator advocates acknowledge that shrinking the British AI market serves no one — the dispute is over how to grow it fairly.

Both sides have a genuine claim, and current UK law resolves the dispute for neither. But jurisdictional evidence undermines the flight-risk argument from both ends of the regulatory spectrum. The EU wrote penalties of up to €35 million or 7% of worldwide turnover into the AI Act(https://ai-act-service-desk.ec.europa.eu/en/ai-act/article-99) and remains the world’s largest single market for AI applications. Japan granted broad commercial exceptions through Article 30-4, a framework associated with growing domestic AI investment. One chose maximum enforcement, the other maximum permissiveness, and both attracted capital. What they share is not a policy preference but a policy position: defined rules that let companies model costs before committing resources.

Clear rules — strict or permissive — attract capital. Regulatory silence generates uncertainty, and uncertainty is what corporate legal departments price into investment decisions. That vacuum does not merely fail creators; it fails the AI companies who cite it to justify inaction. A firm that cannot determine whether its training is lawful cannot securitize its models, cannot indemnify its enterprise clients, cannot price its risk. That vacuum is not deregulation — it is unpriced risk, which is the one thing capital avoids more reliably than regulation.

When Nippon Life sued ChatGPT for practicing law without a license, it demonstrated what fills regulatory gaps: courts, with less predictable outcomes for everyone involved. Across the North Sea, Germany’s GEMA has already filed against Suno over AI training on copyrighted music — testing whether collecting societies can enforce on behalf of individual creators too small to litigate alone. That case addresses the precise question the UK’s Enforcement Vacuum leaves open.

McCartney and Elton John won the political argument. Kendall confirmed they were right. Then the government wrote no law, set no timeline, and created no enforcement mechanism.

By Q4 2026, the first significant UK copyright claim against an AI training company will reach a British courtroom. When it does, a judge — not Parliament — will set the licensing terms that 88% of consultation respondents asked their elected officials to write.(https://ipwatchdog.com/2025/12/16/respondents-uk-ai-consultation-overwhelmingly-want-ai-companies-license-copyrighted-works-all-cases/)

Three positions are worth taking before that ruling arrives. Creators should catalog digital works and file rights reservation notices under the EU Digital Single Market Directive’s opt-out mechanism — a step that costs nothing, takes an afternoon, and establishes cross-border precedent should a licensing dispute reach an EU member state. AI companies operating in Britain should publish voluntary training data transparency reports now, before legislation makes disclosure mandatory and retrospective. Collecting societies should prepare test case strategies under the Copyright, Designs and Patents Act 1988, targeting high-profile training uses where the evidence trail is clearest.

No Enforcement Vacuum stays empty. Courts fill what legislatures leave.

References

- UK backs off default AI training on copyrighted material. The Register coverage of the UK government’s March 2026 copyright reversal, including Minister Kendall’s statement and creative industry GVA context.

- Article 99: Penalties (EU AI Act). Official penalty provisions under the EU AI Act, detailing fine tiers from €7.5 million to €35 million by violation category.

- Respondents to UK AI Consultation Overwhelmingly Want Licensing. IPWatchdog analysis of 11,500+ consultation responses showing 88% support for mandatory licensing.

- Government retreats on AI copyright plan after criticism. Computing.co.uk coverage with industry reaction from UK Music, Society of Authors, and Cambridge University Press.

- DCMS Sectors Economic Estimates: Gross Value Added 2024. Official UK government statistics on creative industries contributing £145.8 billion in GVA.

- AI & Copyright Law: Comparing Global Approaches. VWV Solicitors’ cross-jurisdictional analysis covering UK, EU, Japan, and US copyright frameworks for AI training.