Anthropic’s announcement for Claude Opus 4.6 leads with one stat: highest score on Terminal-Bench 2.0, the agentic coding benchmark. Read the page, and the conclusion seems clear: best coding model available. BenchLM.ai, an independent leaderboard that ranks models across nine evaluations, disagrees. GPT-5.4 Pro holds its top spot at 86.0%, and Claude’s highest entry is Opus 4.5, at rank five.

Both claims check out against their respective data sources. What neither company publishes is the cost of achieving those scores. Artificial Analysis’s standardized evaluation, which reports cross-model token consumption as part of its Intelligence Index benchmarks, ran identical prompts through both models and measured something benchmark tables omit: output token volume. According to Artificial Analysis, Opus 4.6 generated 160 million output tokens where GPT-5.4 generated 120 million, a 33% premium on the same workload. Anthropic’s flagship doesn’t just score differently. It bills differently.
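
The two token totals support two different percentages, and it helps to keep them apart. A minimal sketch using only Artificial Analysis’s reported totals: the 33% premium is measured against GPT-5.4’s volume, while the same gap expressed as a share of Opus 4.6’s own output is 25%.

```python
# Artificial Analysis's reported output-token totals for the same evaluation run.
OPUS_46_TOKENS = 160_000_000
GPT_54_TOKENS = 120_000_000

gap = OPUS_46_TOKENS - GPT_54_TOKENS

# Premium relative to the cheaper baseline: how much more Opus 4.6 emits.
premium_vs_baseline = gap / GPT_54_TOKENS

# The same gap expressed as a share of Opus 4.6's own output.
excess_share_of_opus = gap / OPUS_46_TOKENS

print(f"premium vs GPT-5.4: {premium_vs_baseline:.1%}")
print(f"excess share of Opus output: {excess_share_of_opus:.1%}")
```

The distinction matters for cost math: a 33% premium applies cleanly to a baseline volume, not to a volume Opus 4.6 has already generated.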

At $25 per million output tokens, that premium compounds. Opus 4.6’s headline feature, agent teams, gives each autonomous agent its own context window — so a three-agent workflow doesn’t add 33% overhead once. It adds it three times. Score versus actual cost-per-task: that’s the calculation both vendors leave to the customer.

When “Thinks Longer” Means “Bills Higher”

Jeff Wang, CEO of Windsurf, described the mechanism in Anthropic’s own announcement: “We’ve noticed Opus 4.6 thinks longer, which pays off when deeper reasoning is needed.” Wang builds coding environments. For his users, longer thinking is a product differentiator. For every engineering team paying per output token, it’s an invoice multiplier.

Wang’s claim that thinking longer “pays off” assumes the payoff shows up across workloads. BenchLM’s composite suggests otherwise: across nine evaluations, the model that spends 33% more output tokens than GPT-5.4 ranks three positions below its own predecessor, eighth to Opus 4.5’s fifth. Extended reasoning pays off on Terminal-Bench 2.0 but exacts a net penalty across the broader evaluation surface. Wang is right that depth matters for hard problems, but wrong to imply the trade-off is universally positive.

Anthropic’s pricing structure compounds the effect. Prompts under 200,000 tokens bill at the standard rate. Cross that threshold — routine when agent teams maintain separate context windows — and output pricing jumps 50% to $37.50 per million. A surcharge that activates precisely when the 1M-token context window sees genuine use.
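
A minimal sketch of the threshold rule as described here: the output rate is keyed on prompt size, not on how many output tokens the call produces. The two rates and the 200,000-token threshold come from the text; treating a prompt of exactly 200,000 tokens as standard-rate is an assumption, and `output_rate_per_million` and `output_cost` are hypothetical helper names, not part of any Anthropic SDK.

```python
STANDARD_OUTPUT_RATE = 25.00       # USD per million output tokens
LONG_CONTEXT_OUTPUT_RATE = 37.50   # USD per million, the 50% surcharge
LONG_CONTEXT_THRESHOLD = 200_000   # prompt tokens


def output_rate_per_million(prompt_tokens: int) -> float:
    """Return the output rate for one call, keyed on prompt size.

    Assumption: a prompt at exactly the threshold still bills at the standard rate.
    """
    if prompt_tokens > LONG_CONTEXT_THRESHOLD:
        return LONG_CONTEXT_OUTPUT_RATE
    return STANDARD_OUTPUT_RATE


def output_cost(prompt_tokens: int, output_tokens: int) -> float:
    """Dollar cost of the output side of one call."""
    return output_tokens / 1_000_000 * output_rate_per_million(prompt_tokens)


# The same 40,000-token answer, before and after the prompt crosses 200K.
print(output_cost(150_000, 40_000))
print(output_cost(250_000, 40_000))
```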

Jerry Tsui, a staff software engineer at Ramp, captures the developer side: “I’m more comfortable giving it a sequence of tasks across the stack and letting it run” (Anthropic). Comfort is a feature when debugging a gnarly race condition at 2 AM. Comfort is a cost center when 500 engineers run routine tasks through the same pipeline.

One Benchmark Crown, Eight Consolation Prizes

BenchLM.ai ranks models across nine coding evaluations. As of March 2026, Opus 4.6 lands at rank eight with a 77.3% weighted score — behind GPT-5.3 Codex (85.0%), Kimi K2.5 (82.9%), and its own predecessor Opus 4.5 at rank five (80.9%).

Anthropic’s newest, most expensive model scores lower on the broadest independent coding benchmark composite than the model it replaced. That sentence deserves a second read.

BenchLM’s composite rests on two evaluations: SWE-bench Pro and LiveCodeBench — chosen as “the most trustworthy mainstream coding signal” because they resist data contamination. On those two, Opus 4.6 posts 39.6% and 76% respectively. Terminal-Bench 2.0, the evaluation Anthropic headlined? Not among the two that determine the composite.

The paradox has an architectural explanation. Opus 4.6’s extended-thinking engine generates reasoning chains on every query — the same mechanism that powers its Terminal-Bench 2.0 victory. On hard agentic tasks, that depth finds edge cases other models miss. On the routine tasks that dominate composites — autocomplete, single-function generation, test scaffolding — it over-reasons. The model spends tokens deliberating about problems that don’t require deliberation, and occasionally over-thinks its way into worse answers. Opus 4.5, without the extended-thinking overhead, moves faster and scores cleaner on the bread-and-butter evaluations that weight the composite.

The efficiency gap is quantifiable. Using Artificial Analysis’s data: Opus 4.6 spends 160 million tokens to achieve 77.3 composite points — roughly 2.07 million tokens per point. GPT-5.4 Pro spends 120 million tokens for 86.0 points — 1.40 million tokens per point. Opus 4.6 requires 48% more tokens per composite-score-point than the model at rank one. It’s not just scoring lower. It’s paying more per point earned.
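
The per-point arithmetic is easy to reproduce. This sketch uses the same inputs as the paragraph above, so it inherits that paragraph’s pairing of Artificial Analysis’s GPT-5.4 token count with GPT-5.4 Pro’s composite score.

```python
# Figures quoted in this article: Artificial Analysis output-token totals (millions)
# and BenchLM composite scores.
models = {
    "Claude Opus 4.6": {"tokens_m": 160, "composite": 77.3},
    "GPT-5.4 Pro": {"tokens_m": 120, "composite": 86.0},
}


def tokens_per_point(tokens_m: float, composite: float) -> float:
    """Millions of output tokens spent per composite-score point."""
    return tokens_m / composite


efficiency = {name: tokens_per_point(**m) for name, m in models.items()}
for name, value in efficiency.items():
    print(f"{name}: {value:.2f}M tokens per point")

# Relative gap: how many more tokens Opus 4.6 spends per point earned.
gap = efficiency["Claude Opus 4.6"] / efficiency["GPT-5.4 Pro"] - 1
print(f"Opus 4.6 spends {gap:.0%} more tokens per point")
```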

The Feature That Bills You Three Times

Here is where the benchmark story becomes a budget story. Every advantage Anthropic advertises for Opus 4.6 — extended thinking, the 1M context window, agent teams — is simultaneously a billing multiplier. They aren’t separate features with separate costs. They compound.

Start with what the data shows: Artificial Analysis’s standardized runs (same prompts, same evaluation suite) indicate that the 33% token premium is structural. Opus 4.6’s extended-thinking architecture generates reasoning tokens on every query, whether or not the problem demands deep analysis.

Analysis of reasoning cost multipliers in extended-thinking models has documented how these architectures generate tokens that inflate output volume without improving output utility: the model reasons aloud, and the meter runs. Opus 4.6 makes this structural. Anthropic’s own guidance recommends dialing effort from the default “high” to “medium” for simpler tasks, which assumes someone at scale is classifying task complexity before each API call. In practice, few teams do.
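
What that guidance asks of a team can be sketched as a pre-call classifier. Everything here except the “high” default and the “medium” setting for simpler tasks is hypothetical: the task categories, the `Task` fields, the `choose_effort` helper, and the one-file scope heuristic are illustrations, not Anthropic’s API or recommendations.

```python
from dataclasses import dataclass

# Hypothetical task categories a team might treat as "simple".
SIMPLE_TASK_KINDS = {"autocomplete", "single_function", "test_scaffold"}


@dataclass
class Task:
    kind: str           # hypothetical label assigned by the calling tool
    files_touched: int  # rough proxy for scope


def choose_effort(task: Task) -> str:
    """Pick a reasoning-effort label before the API call is made.

    Defaults to "high"; simple, single-file tasks drop to "medium".
    The one-file cutoff is an illustrative assumption.
    """
    if task.kind in SIMPLE_TASK_KINDS and task.files_touched <= 1:
        return "medium"
    return "high"


queue = [
    Task("autocomplete", 1),
    Task("test_scaffold", 1),
    Task("race_condition_debug", 6),
]
print([choose_effort(t) for t in queue])
```

The heuristic is beside the point; the bookkeeping is the cost. Every call needs a label before it is sent.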

That 33% isn’t a quality premium. It’s an architecture tax.

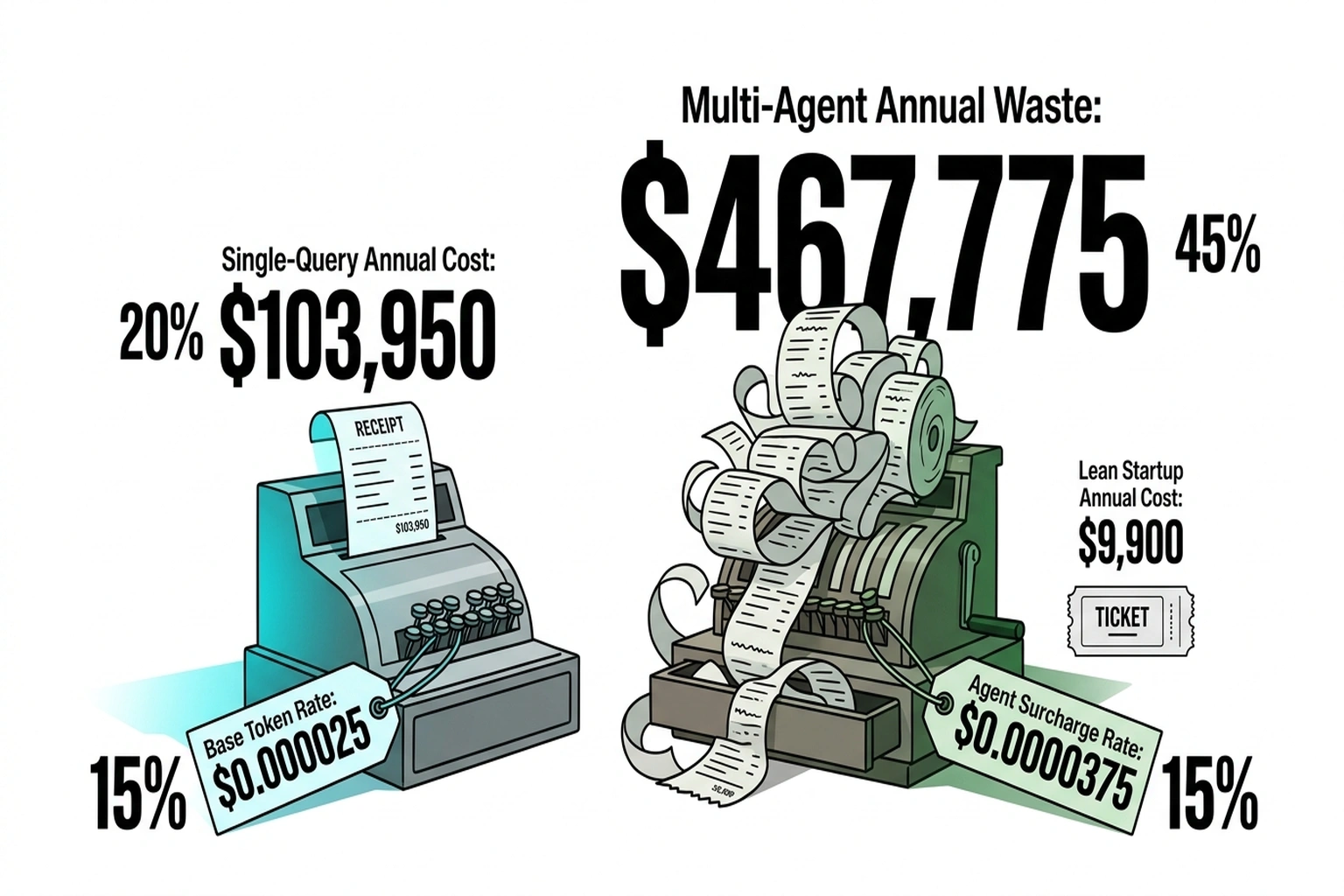

Now compound it. Take a team of 500 developers whose workload would consume an estimated average of roughly 2.1 million output tokens per developer per month on a comparably scoring model: 1.05 billion baseline tokens monthly. Opus 4.6’s 33% premium on that baseline adds 346.5 million excess tokens.

Layer one: the base tax. At $25 per million output tokens, that is $8,663 per month, or $103,950 per year. Same tasks. Same deliverables.

Layer two: agent multiplication. Opus 4.6’s headline feature gives each agent its own context window. A three-agent workflow triples the excess: 346.5 million × 3 = 1,039.5 million (about 1.04 billion) excess tokens per month. Annual waste climbs to $311,850.

Layer three: the surcharge. Agent teams with separate context windows routinely cross the 200K-token threshold, triggering the 50% price jump from $25 to $37.50 per million. Apply that rate to the agent-multiplied excess: 1,039.5 million × $37.50 per million × 12 months = $467,775 per year.
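
The three layers can be reproduced step by step. A minimal sketch, assuming the same inputs as the layers above: a baseline of 2.1 million output tokens per developer per month, a 33% premium on that baseline, three agents, and the surcharge applied to all agent-multiplied excess.

```python
DEVS = 500
TOKENS_PER_DEV_MONTH = 2_100_000     # assumed baseline output volume per developer
PREMIUM = 0.33                       # Opus 4.6 token premium from Artificial Analysis's run
AGENTS = 3
STANDARD_RATE = 25.00 / 1_000_000    # USD per output token
SURCHARGE_RATE = 37.50 / 1_000_000   # USD per output token for prompts above 200K
MONTHS = 12

excess_tokens = DEVS * TOKENS_PER_DEV_MONTH * PREMIUM  # 346.5M per month

layer_one = excess_tokens * STANDARD_RATE * MONTHS              # base tax
layer_two = excess_tokens * AGENTS * STANDARD_RATE * MONTHS     # agent multiplication
layer_three = excess_tokens * AGENTS * SURCHARGE_RATE * MONTHS  # surcharge on top

for label, value in [("base", layer_one), ("x agents", layer_two), ("x surcharge", layer_three)]:
    print(f"{label:>12}: ${value:,.0f}")
print(f"compound / base = {layer_three / layer_one:.1f}x")
```

The 4.5× ratio is just the product of the two multipliers: three agents times the 1.5× surcharge.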

Call the total the Context Capacity Tax: $467,775 a year, 4.5 times the layer-one figure, for a model that ranks eighth on the broadest independent coding composite. That’s what the three-layer compound looks like when every advertised feature activates simultaneously.

A team that changes nothing — no workload routing, no effort adjustment, no model selection per task — absorbs that cost as a silent default. Inaction is the most expensive configuration.

For organizations already running Opus 4.5, the upgrade math is perverse: switch to 4.6 for better agentic coding, and every non-agentic query (the vast majority of API calls) gets lower scores at higher cost. The predecessor outperforms the successor on the composite while generating fewer tokens per answer.

These figures use Artificial Analysis’s evaluation as a proxy; the team’s actual workload will differ. Not every query will use agent teams or cross 200K context. But Anthropic’s default reasoning effort is “high”, and agent teams are the feature Anthropic is pushing hardest — which means the question isn’t whether the compound tax exists. It’s how many of the three layers your workload triggers.

Verdict by Workload

Debating “best model” without specifying the workload is arguing about nothing.

| Dimension | Opus 4.6 | GPT-5.4 Pro | Edge |
|---|---|---|---|
| Agentic coding (Terminal-Bench 2.0) | Highest reported | Not reported | Opus |
| Composite coding (BenchLM, 9 evals) | 77.3%, rank 8 | 86.0%, rank 1 | GPT-5.4 |
| Output tokens, full AA evaluation run | 160M | 120M | GPT-5.4 |
| Tokens per composite point | 2.07M | 1.40M | GPT-5.4 |
| Context window | 1M tokens, beta | Not listed | Opus |
| Legal reasoning (BigLaw Bench) | 90.2% | Not listed | Opus |
| Full Context Capacity Tax (500 devs, 3 agents) | $467,775/yr | Not computed | GPT-5.4 |
| Verdict | Long-context, multi-step investigation | High-volume, cost-sensitive pipelines | Workload-dependent |
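
The verdict row translates into a routing rule. A minimal sketch, assuming a team tags each request as multi-step or not and knows its prompt size; the `Request` fields, the reuse of the 200K pricing threshold as the long-context cutoff, and the model labels (display names, not API model IDs) are all illustrative assumptions.

```python
from dataclasses import dataclass

LONG_CONTEXT_TOKENS = 200_000  # assumption: reuse the pricing threshold as the long-context cutoff


@dataclass
class Request:
    multi_step: bool    # hypothetical tag: investigation or multi-agent coordination
    prompt_tokens: int  # size of the assembled context


def route(req: Request) -> str:
    """Return a model label per the workload verdict; labels are display names only."""
    if req.multi_step or req.prompt_tokens > LONG_CONTEXT_TOKENS:
        return "Opus 4.6"
    return "GPT-5.4 Pro"


requests = [
    Request(multi_step=False, prompt_tokens=8_000),    # routine completion
    Request(multi_step=True, prompt_tokens=60_000),    # multi-step investigation
    Request(multi_step=False, prompt_tokens=450_000),  # long-context review
]
print([route(r) for r in requests])
```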

Stian Kirkeberg, Head of AI & ML at NBIM, ran a telling comparison: “Across 40 cybersecurity investigations, Claude Opus 4.6 produced the best results 38 of 40 times in a blind ranking” (Anthropic). A 95% win rate on complex, multi-step security analysis. NBIM manages Norway’s sovereign wealth fund — an organization where investigation depth determines the outcome and token cost is a rounding error. Kirkeberg’s team validates exactly the scenario where paying the Context Capacity Tax makes sense.

Kirkeberg’s result doesn’t contradict the composite ranking. It explains it. Opus 4.6 dominates tasks that reward extended reasoning and deep tool use. It underperforms where thinking longer buys nothing — the routine queries that make up the majority of production coding calls. And as analysis of accuracy degradation at 1M tokens has shown, context rot erodes reliability at the window’s outer range regardless.

A solo developer debugging a distributed system pays the tax gladly — the 90.2% BigLaw Bench score hints at cross-domain reasoning depth that cheaper models can’t match. A CFO reviewing the API line item asks a blunter question: does cost-per-task go up or down when the model roster changes?

One caveat on Kirkeberg: forty investigations is a signal, not statistical proof. And Terminal-Bench 2.0 — the newer, less battle-tested evaluation where contamination verification is weakest — happens to be the one Anthropic chose to headline. Skepticism is earned, not cynical.
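
How wide is “a signal”? One way to size it is a Wilson score interval on 38 wins out of 40. This is a sketch under an assumption the source does not state: that the 40 investigations behave like independent trials with a fixed win probability.

```python
import math


def wilson_interval(wins: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    p_hat = wins / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = (z / denom) * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2))
    return center - half_width, center + half_width


low, high = wilson_interval(38, 40)
print(f"observed {38 / 40:.0%}, plausible range {low:.0%} to {high:.0%}")
```

Under that assumption, the result is consistent with a true win rate from roughly 83% to 99%: strong, but a range rather than a point.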

Critics of this framing point out that token count is a proxy metric, not a value metric: if Opus 4.6’s extended reasoning catches a single production bug that would have cost a week of engineering time, the entire annual Context Capacity Tax pays for itself on one incident. The strongest version of this argument holds that composite benchmark rankings, which weight routine tasks heavily, systematically undervalue models purpose-built for the complex, high-stakes work where the cost of a wrong answer dwarfs the cost of extra tokens.
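
The critics’ argument reduces to a break-even count: how many avoided incidents of a given cost cover the annual tax. A minimal sketch; the $50,000 incident cost is a hypothetical placeholder, not a figure from any source, and a team would substitute its own incident data.

```python
import math


def incidents_to_break_even(annual_tax: float, cost_per_incident: float) -> int:
    """Smallest whole number of avoided incidents whose combined cost covers the tax."""
    if cost_per_incident <= 0:
        raise ValueError("cost_per_incident must be positive")
    return math.ceil(annual_tax / cost_per_incident)


HYPOTHETICAL_INCIDENT_COST = 50_000  # placeholder; substitute real incident costs

for label, tax in [("base tax", 103_950), ("compound tax", 467_775)]:
    n = incidents_to_break_even(tax, HYPOTHETICAL_INCIDENT_COST)
    print(f"{label}: {n} avoided incidents per year")
```

If the model prevents fewer incidents than that count, or they cost less, the critics’ case weakens; if more, it strengthens.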

What $103,000 Buys — and What $468,000 Costs

Two formulas worth pasting into a spreadsheet:

Base tax (single-query, standard pricing):

Monthly excess = devs × avg_output_tokens/month × 0.33 × $0.000025

Annual waste = monthly_excess × 12

For 500 developers at 2.1M output tokens each: 500 × 2,100,000 × 0.33 × $0.000025 × 12 = $103,950.

Full compound tax (agent teams crossing 200K context):

Monthly excess = devs × avg_output_tokens/month × 0.33 × agents × $0.0000375

Annual waste = monthly_excess × 12

For 500 developers, 3-agent workflows: 500 × 2,100,000 × 0.33 × 3 × $0.0000375 × 12 = $467,775.

A 100-developer startup running leaner — 1M tokens per developer per month, no agent teams — still bleeds $9,900 annually. Not a rounding error on a Series A budget. Add a two-agent workflow at the surcharge rate and that startup’s waste jumps to $29,700.
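
The two spreadsheet formulas collapse into one function. A sketch assuming the same inputs as the text: the 33% premium, $25 or $37.50 per million output tokens, and an agent count of 1 for single-query work. The function name and parameters are illustrative.

```python
def annual_excess_cost(
    devs: int,
    tokens_per_dev_month: float,
    agents: int = 1,
    surcharge: bool = False,
    premium: float = 0.33,
) -> float:
    """Annual USD spent on excess output tokens, following the two formulas above."""
    rate_per_token = (37.50 if surcharge else 25.00) / 1_000_000  # USD per output token
    monthly_excess = devs * tokens_per_dev_month * premium * agents * rate_per_token
    return monthly_excess * 12


# The startup scenarios: 100 developers at 1M output tokens each per month.
lean = annual_excess_cost(100, 1_000_000)
two_agents = annual_excess_cost(100, 1_000_000, agents=2, surcharge=True)
print(f"lean: ${lean:,.0f}  two-agent with surcharge: ${two_agents:,.0f}")
```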

Three decisions separate the teams that absorb this tax from the ones that eliminate it:

- Run the formula. Pull actual output token volume from billing dashboards — not vendor estimates, actuals. Count how many queries trigger agent teams and how many cross 200K context. Plug in. The gap between the base tax and the compound tax is where the real money hides.

- Route by workload. Long-context investigation and multi-agent coordination → Opus 4.6. Everything else → GPT-5.4 Pro or even Opus 4.5, which at rank five on the composite outscores its successor and generates fewer tokens per answer.

- Track agent team sprawl. Each deployment multiplies context allocations. Monitor the ratio of agent-team queries to standard queries monthly. If it climbs past 30%, rerun the formula — you’ve likely crossed from the base tax into compound territory.
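
The first and third checks can share one pass over billing data. A minimal sketch, assuming a team can export per-call records with agent count, prompt size, and output tokens; the `CallRecord` shape is hypothetical, since billing exports differ by vendor, and the 30% alert mirrors the threshold suggested in the list.

```python
from dataclasses import dataclass

SURCHARGE_THRESHOLD = 200_000  # prompt tokens; above this, the output surcharge applies
AGENT_SHARE_ALERT = 0.30       # rerun the cost formula past this agent-team share


@dataclass
class CallRecord:
    """One API call from a hypothetical billing export."""
    agents: int          # 1 for a standard query, >1 for an agent-team query
    prompt_tokens: int
    output_tokens: int


@dataclass
class Summary:
    agent_share: float
    long_context_share: float
    total_output_tokens: int
    rerun_formula: bool


def summarize(records: list[CallRecord]) -> Summary:
    """Tally agent-team usage and 200K crossings across a batch of calls."""
    if not records:
        raise ValueError("no records to summarize")
    total = len(records)
    agent_calls = sum(1 for r in records if r.agents > 1)
    long_calls = sum(1 for r in records if r.prompt_tokens > SURCHARGE_THRESHOLD)
    agent_share = agent_calls / total
    return Summary(
        agent_share=agent_share,
        long_context_share=long_calls / total,
        total_output_tokens=sum(r.output_tokens for r in records),
        rerun_formula=agent_share > AGENT_SHARE_ALERT,
    )


# Hypothetical sample: four calls, two of them agent-team runs past 200K.
records = [
    CallRecord(agents=1, prompt_tokens=12_000, output_tokens=900),
    CallRecord(agents=3, prompt_tokens=240_000, output_tokens=15_000),
    CallRecord(agents=1, prompt_tokens=30_000, output_tokens=2_000),
    CallRecord(agents=4, prompt_tokens=310_000, output_tokens=22_000),
]
summary = summarize(records)
print(summary)
```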

Agent team adoption will accelerate. Each new deployment adds a context allocation multiplier to the formula above: three to five agents per complex query, each with its own context window, each multiplying the tax. A 500-developer organization that routes its workload through three-agent teams above the 200K threshold faces approximately $468,000 in annual compound token costs, versus $104,000 under the base tax. That $364,000 differential would fund roughly 1.5 additional senior engineers. Organizations that instrument cost-per-task now will manage the bill. Organizations that don’t will discover it has been buying headcount for a competitor.
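
The $467,775 compound figure assumes every excess token runs through three-agent workflows above the 200K threshold. Where only part of the traffic does, a blended estimate sits between the two endpoints. A minimal sketch, assuming the agent-team share of queries equals the share of excess tokens and reusing the 500-developer inputs.

```python
BASE_EXCESS_TOKENS_MONTH = 500 * 2_100_000 * 0.33  # 346.5M excess tokens per month
STANDARD = 25.00 / 1_000_000    # USD per output token
SURCHARGE = 37.50 / 1_000_000   # USD per output token above 200K-token prompts


def blended_annual_tax(agent_share: float, agents: int = 3) -> float:
    """Mix base-rate single queries with surcharged agent-team queries."""
    if not 0.0 <= agent_share <= 1.0:
        raise ValueError("agent_share must be between 0 and 1")
    single = (1 - agent_share) * BASE_EXCESS_TOKENS_MONTH * STANDARD
    team = agent_share * BASE_EXCESS_TOKENS_MONTH * agents * SURCHARGE
    return (single + team) * 12


for share in (0.0, 0.3, 0.6, 1.0):
    print(f"{share:.0%} agent-team traffic: ${blended_annual_tax(share):,.0f}")
```

The loop shows how the bill climbs from the base tax toward the full compound figure as agent-team share grows.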

Cost-per-merge is the metric nobody budgets for — and the one that determines which API contract survives the next review.

Six months from now, the leaderboard will show different numbers. Opus 4.7 or GPT-5.5 will claim the crown, the press releases will be louder, and the token counts will be higher. None of that changes the equation worth solving: monthly token spend divided by tasks shipped — not tasks attempted, shipped.
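
The equation needs only two numbers most teams already have. A minimal sketch; the spend and task counts below are hypothetical placeholders, not measurements.

```python
def cost_per_shipped_task(monthly_token_spend: float, tasks_shipped: int) -> float:
    """Monthly token spend divided by tasks shipped (not attempted)."""
    if tasks_shipped <= 0:
        raise ValueError("no shipped tasks: cost per task is undefined")
    return monthly_token_spend / tasks_shipped


# Hypothetical month: two model rosters, same team.
roster_a = cost_per_shipped_task(monthly_token_spend=42_000, tasks_shipped=1_200)
roster_b = cost_per_shipped_task(monthly_token_spend=30_000, tasks_shipped=750)
print(f"roster A: ${roster_a:.2f} per shipped task")
print(f"roster B: ${roster_b:.2f} per shipped task")
```

In this made-up example the roster with the smaller bill costs more per shipped task, which is the comparison a leaderboard cannot make.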

The model that tops the coding benchmark a vendor chose to publish is marketing. The model that wins cost-per-merge on a team’s actual workload is engineering. One number lands in a press release. The other lands in a budget.

References

- Claude Opus 4.6 — Anthropic’s official announcement with pricing, benchmark claims, and partner testimonials.

- SWE-bench & LiveCodeBench Leaderboard (March 2026) — Independent composite ranking of coding models across nine evaluations, as of March 2026.

- NVIDIA Nemotron 3 Super: The New Leader in Open, Efficient Intelligence — Artificial Analysis’s standardized evaluation, measuring output volume per model under identical prompts as part of broader Intelligence Index benchmarks.

- AI Reasoning Costs Hit 5x as Hidden Tokens Surge — Analysis of how extended-thinking architectures inflate output volume and cost.

- Context Rot Drops Claude to 78% Accuracy at 1M Tokens — Evidence of accuracy degradation at the outer range of large context windows.