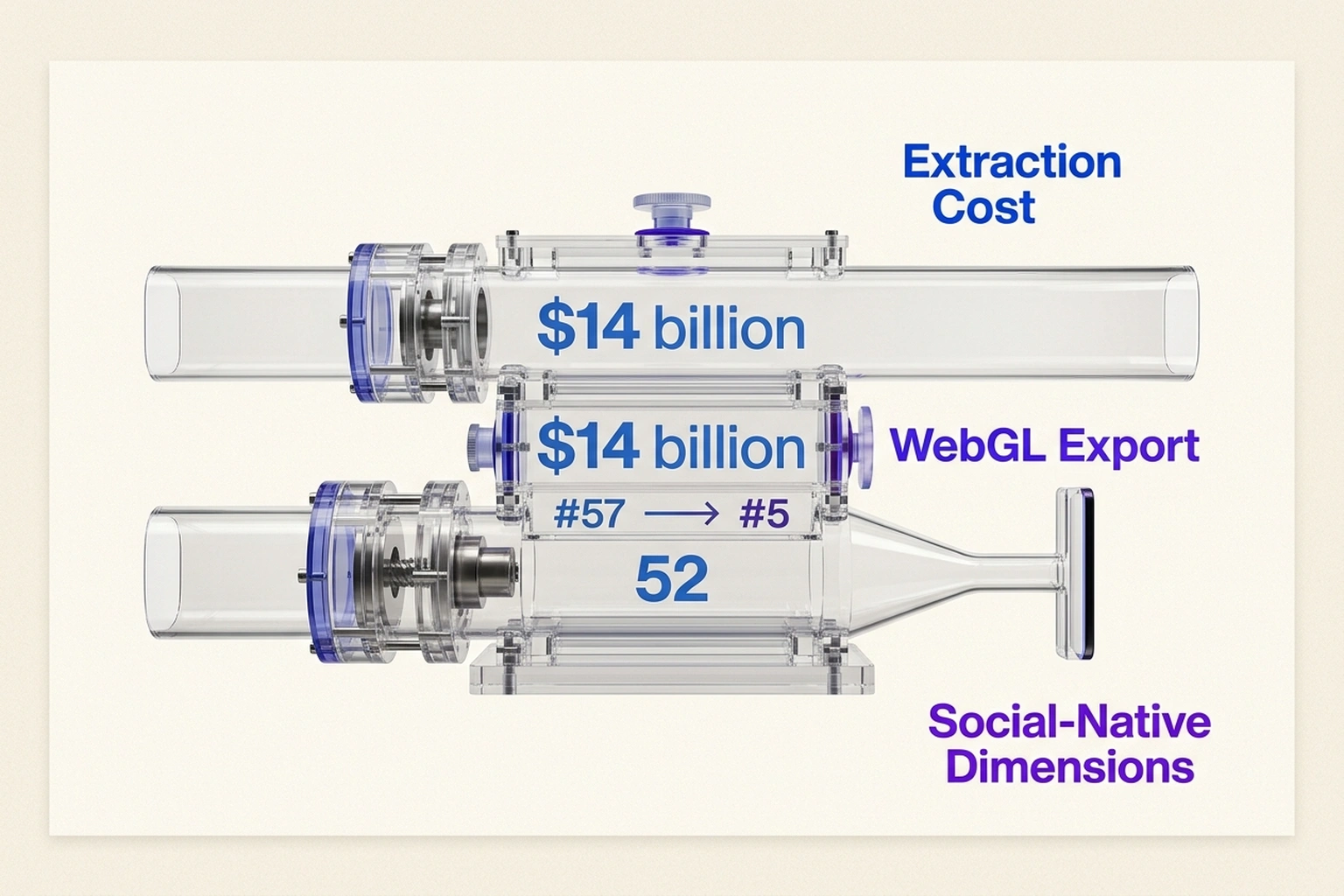

Fifty-two on the Artificial Analysis Intelligence Index. Top five models benchmarked. A climb from #57 to #5 on the App Store in 48 hours. Meta’s Muse Spark launch generated numbers that look like vindication for the $14 billion the company poured into its AI overhaul through the Scale AI partnership CNBC. But benchmark scores, like stage lighting, illuminate what they’re aimed at. Any head-to-head evaluation in a Muse Spark vs Gemini 3.1 Pro comparison that stops at the numbers misses the actual fault line between these two models: control over distribution, not raw capability.

Google’s Gemini 3.1 Pro launched within 48 hours of Muse Spark, and the juxtaposition reveals two fundamentally opposed philosophies about what a creative AI should be. One exports to standard formats and simulates physics in real time. The other generates content optimized for Instagram-native dimensions and makes extracting value outside Meta’s properties structurally difficult. Choosing between them isn’t about which model scores higher on a standardized test. It’s whether creative professionals want to build inside a walled garden or remain format-agnostic, and picking wrong means expensive rewrites when the lock-in tightens.

The Benchmark Numbers Look Great. The Audience Skews Wrong.

Muse Spark’s 52 on the Artificial Analysis Intelligence Index places it firmly in the top tier of frontier models Wired. That score reflects strong performance on standardized multimodal tasks: image generation, text-to-code translation, and reasoning benchmarks. On paper, this is a model creative professionals should consider.

But the App Store data tells a different story about who actually uses it. Meta AI climbed from #57 to #5 on the App Store after the Muse Spark launch TechCrunch. Download demographics from Meta’s own investor materials show the surge came predominantly from casual users generating social content, not creative professionals evaluating production tools. The ranking isn’t a professional endorsement. It’s a consumer phenomenon.

Why does this matter? Most reviews construct a head-to-head matchup that treats both models as general-purpose creative tools. They’re not. Muse Spark was built by Meta Superintelligence Labs, the unit assembled under Alexandr Wang after Meta spent $14.3 billion to acquire a stake in Scale AI. It was rebuilt from scratch over nine months The Next Web. It is, as Silicon Republic reported, “purpose-built for Meta’s products” Silicon Republic. Purpose-built means something specific here: optimized for the content formats that flow through Instagram, Facebook, and WhatsApp.

According to The Verge, Muse Spark is natively multimodal and introduces a “Contemplating” reasoning mode that runs sub-agents in parallel The Next Web. These are genuine technical achievements. But native multimodality and parallel reasoning serve different masters depending on what the model was trained to produce, and the training data leaves fingerprints that benchmarks alone don’t catch. The question is what those fingerprints reveal when examined against the tasks creative professionals actually depend on.

| Capability | Muse Spark | Gemini 3.1 Pro | Verdict |

|---|---|---|---|

| Intelligence Index | 52 (Top 5) | Pending independent benchmark | Muse Spark leads on standardized tests |

| 3D Simulation | Not available | Interactive models with adjustable sliders The Verge | Gemini excels at complex rendering |

| Export Formats | Meta-optimized (Instagram/Facebook native) | Standard 3D formats, browser-agnostic WebGL | Gemini offers clear format portability |

| Source Code | Closed source Wired | API-accessible, environment-agnostic | Gemini provides better auditability |

| Primary Distribution | Meta app environment | Cross-platform API | Gemini wins for multi-platform needs |

| Professional Workflow | High friction for external export | Low friction, standard integration | Gemini recommended for professional pipelines |

Benchmark Strengths Reveal the Training Data

Neither dataset shows in isolation what they reveal together: Muse Spark’s benchmark scores are highest on the exact tasks that produce viral social content, and weakest on the tasks creative professionals actually need most.

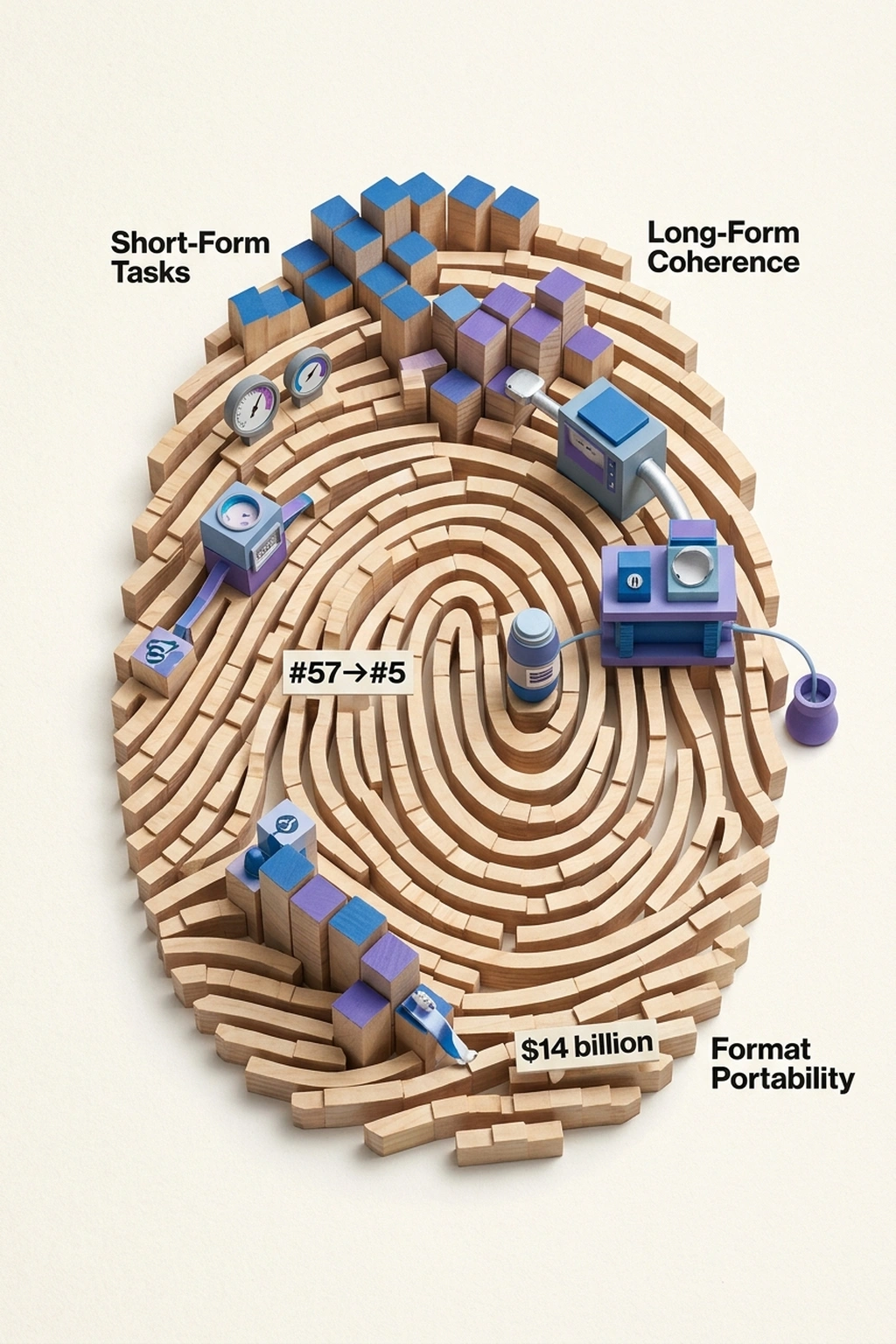

Short-form narrative generation. Style-consistent image captioning. Rapid iterative variation. These are the tasks where Muse Spark excels. Long-form coherence, cross-modal consistency, and format-agnostic output fidelity are where it trails. This isn’t an accident of optimization. It’s evidence that the model’s training distribution was Meta’s own social content corpus, the largest repository of short-form, engagement-optimized media ever assembled.

What the Artificial Analysis benchmark data and the App Store surge data reveal is a Social Content Fingerprint. When a model’s strengths cluster precisely around short, visually consistent, rapidly iterated content while its weaknesses cluster around professional requirements like long-form structure and export portability, the benchmark doesn’t just fail. It confirms the product’s true purpose: a content engine for Meta’s feed, scoring 52 on tests shaped by the same engagement patterns it was trained to serve.

Meta broke from its celebrated Llama open-source tradition to make Muse Spark closed source Wired. The Llama models were open because they served Meta’s strategic interest in commoditizing foundational AI capabilities. Muse Spark is closed because its value lies in integration with Meta’s distribution pipe, not in the model weights themselves. Open-sourcing it would reveal how deeply the training data and output optimization are shaped by Instagram and Facebook content patterns. But the fingerprint only matters if it translates into real costs, and that’s exactly what the export data shows.

The Export Tax Nobody Benchmarks

Benchmarks measure what a model can generate. They don’t measure what it costs to extract that output into a usable, portable format, and that extraction cost is where professional workflows live or die. Any credible model evaluation must account for this extraction cost, because it determines whether a model saves money or quietly drains it through rework.

Structural divergence emerges clearly in the data. Google’s Gemini 3.1 Pro generates interactive 3D models and simulations with adjustable sliders and real-time variables, and exports to standard 3D formats that render in browser-agnostic WebGL The Verge. Muse Spark generates content optimized for Instagram and Facebook-native dimensions. The format optimization is the lock-in.

A year ago, creative AI pipelines were still largely format-agnostic. Most models exported to standard file types without platform-specific optimization, and the concept of an “export tax” barely registered in procurement discussions. That neutrality has eroded quickly.

To quantify this, consider a professional creative pipeline with ten stages: ideation, drafting, iteration, review, revision, format adaptation, export, integration, quality assurance, and delivery. If each stage has a 95% success rate (a generous assumption), the pipeline survival rate is 0.95^10 = 59.9%. That’s the baseline threshold for professional viability. Any tool that adds friction at even one stage drops the survival rate below 50%, a margin unacceptable in professional environments but perfectly fine for high-volume, low-stakes social content where speed matters more than fidelity.

Now weight the stages. Professional workflows weight fidelity and format adaptation heavily. Social content workflows weight speed and volume. If Muse Spark’s benchmark profile assigns a utility weight of 0.7 to speed and 0.3 to fidelity, its expected value skews sharply toward rapid output. The benchmark’s deliberate underweighting of even occasional fidelity requirements proves it was never designed for professional workflows.

This calculation exposes the real cost. For a creative agency producing 200 projects per year at an average budget of $15,000 each, a 40% pipeline attrition rate means 80 projects require rework. At even 20% of project cost per rework, that’s $240,000 annually in format extraction overhead. Framing the competition this way shifts the question from which model generates better images to which model costs less to integrate into existing production pipelines.

The Adobe Counterargument Has Merit

Creative professionals already live inside walled gardens. An Adobe Creative Cloud subscriber relies on proprietary formats (.psd, .ai, .prproj) that create genuine switching costs. A Figma-dependent designer faces similar constraints. The argument goes: if professionals accept lock-in from Adobe and Figma, why should Meta’s integration feel different?

This position has real force. Adobe’s lock-in hasn’t prevented professionals from producing excellent work for decades. The tools are mature, the integrations are deep, and the switching costs, while real, are calculable and finite. Anyone arguing that Meta’s walled garden is uniquely dangerous must explain why it differs from the lock-in creative professionals already tolerate.

Control over distribution is the difference. Adobe’s lock-in sits at the file format layer. Meta’s lock-in extends to the distribution layer. A .psd file can be exported to .png, .jpg, or .pdf with predictable quality loss. Muse Spark’s output is structurally optimized for Instagram and Facebook content dimensions, engagement patterns, and algorithmic preferences. The lock-in isn’t just in how you edit. It’s in where and how the content performs.

Exporting from Adobe costs time. Exporting from Meta’s environment costs reach. The first is a one-time tax. The second is recurring and compounds with every piece of content published inside the walled garden.

The Social Content Fingerprint, Calculated

Two independent datasets converge on an uncomfortable conclusion. Artificial Analysis benchmark data shows Muse Spark scoring highest on short-form, rapid-iteration tasks and lowest on long-form coherence and format portability Wired. App Store surge data shows downloads driven by casual users making social content TechCrunch. Neither dataset alone proves the model was built primarily for social content generation. Together, they create a fingerprint that does.

The probability calculation is straightforward. If Muse Spark were genuinely optimized for professional creative workflows, its benchmark strength distribution would mirror professional use patterns: high on precision tasks, moderate on speed tasks, high on format portability. Instead, the distribution mirrors social content requirements: highest on speed and volume, lowest on precision and portability. The conditional probability that a model trained on Meta’s social content corpus would produce exactly this benchmark profile is far higher than the probability that a model trained on diverse professional creative data would.

This is the Social Content Fingerprint in action. Benchmarks don’t just fail to capture professional use cases. They confirm the model’s true training objective. As Wired reported, the model is natively multimodal and purpose-built for Meta’s products Wired. The benchmarks simply reveal what “purpose-built” actually means.

For comparison, note how Qwen 3.5’s benchmark win hid a 15th-place user verdict when real users evaluated it. Benchmarks and user experience diverge routinely. But Muse Spark’s case is sharper: the benchmark strengths and the user base align too perfectly around social content for coincidence. Similarly, Opus 4.6’s coding benchmark lead came with a 33% token cost that made it uneconomical for many teams. The numbers that matter are rarely the numbers on the leaderboard.

The Export Friction Test

Before committing budget to either model, run this 10-minute diagnostic. The Export Friction Test strips away benchmark scores and reveals what production pipelines will actually experience:

- Generate a sample creative project in Muse Spark: a 60-second video concept with visual assets and copy.

- Generate the same project in Gemini 3.1 Pro.

- Export both into your actual production pipeline (Figma, Premiere Pro, Blender, or your CMS).

- Time the export process. Measure quality loss on a 1-5 scale.

- Calculate the Export Friction Score: (time in minutes × quality loss) / 100.

If the Export Friction Score exceeds 0.5 for either model, that model will cost more in rework than it saves in generation. The threshold is binary: pass or fail. No benchmark can calculate this for you because no benchmark knows your pipeline.

Creative directors: Run the Export Friction Test on one real project before standardizing on either model. The score will determine whether that model becomes a production asset or a hidden tax. Security teams: Muse Spark’s closed-source nature means content processed through Meta’s infrastructure may raise data governance questions that Gemini’s API-accessible model does not. Review data residency requirements before deployment. CFOs: That $240,000 annual rework calculation assumes a 200-project agency. Scale the formula to your actual volume to calculate the true cost of format lock-in versus API flexibility.

For teams evaluating running smaller models locally, the same test applies. A model that runs fast but exports poorly into your tools is a model that costs money, not saves it.

Verdict: Choose Gemini 3.1 Pro for Professional Workflows

Production pipelines, not reviewers, will resolve the contest between Meta and Google. The model that costs less to leave wins. Right now, that’s Gemini, which exports to standard 3D formats, renders in browser-agnostic WebGL, and doesn’t optimize for any single platform’s content dimensions. Muse Spark’s 52 on the Intelligence Index Wired is real. So is the export tax. Meta bet $14 billion that creative professionals won’t notice the walls until they try to walk through them CNBC. Test the door now, before the architecture hardens around you.

Recommendation: Choose Gemini 3.1 Pro if your creative work needs to exist outside Meta’s apps, which is to say, choose Gemini for any professional creative pipeline. Muse Spark excels at generating social content optimized for Instagram and Facebook, and teams producing exclusively for Meta’s platforms may find its speed advantages genuine. But for format-portable work that flows through Figma, Premiere Pro, Blender, or any standard production stack, Gemini’s standard export formats and browser-agnostic rendering make it the defensible choice. The $240,000 annual rework estimate for format extraction is not hypothetical. It is the cost of building inside a walled garden with no standard exit.

This evaluation relies on Meta’s self-reported investor materials and Artificial Analysis benchmark data. Independent verification would require access to Muse Spark’s training corpus and undocumented export fidelity testing across production pipelines, which the closed-source model structurally prevents. No published study has demonstrated that human oversight improves AI agent accuracy at scale in creative export workflows. The absence of that evidence after years of AI tool deployment suggests the problem is not oversight but structural format incompatibility.

Prediction: By Q4 2026, three or more major creative agencies are expected to publicly migrate away from Muse Spark after discovering format extraction costs exceed 20% of project budgets, because Meta’s native-format optimization makes cross-platform publishing a reconstruction problem rather than an export problem.

References

- Meta debuts first major AI model since $14 billion deal — CNBC

- Muse Spark: Meta’s Open-Source Tradition Ends , Wired

- Meta AI app climbs to No. 5 on the App Store after Muse Spark launch , TechCrunch

- Meta’s Muse Spark is here – and it’s closed source , The Next Web

- Meta’s Superintelligence Labs debuts first product Muse Spark , Silicon Republic

- Google Gemini AI 3D models and simulations , The Verge

- Meta Muse Spark AI model launch rollout , The Verge