Affiliate Disclosure: This article contains affiliate links. We may earn a commission if you purchase through these links, at no additional cost to you. This helps us continue publishing free content. See our full disclosure. This report examines MCP server authentication and authorization patterns.

Part 3 of 4 in the OpenClaw Saga series.

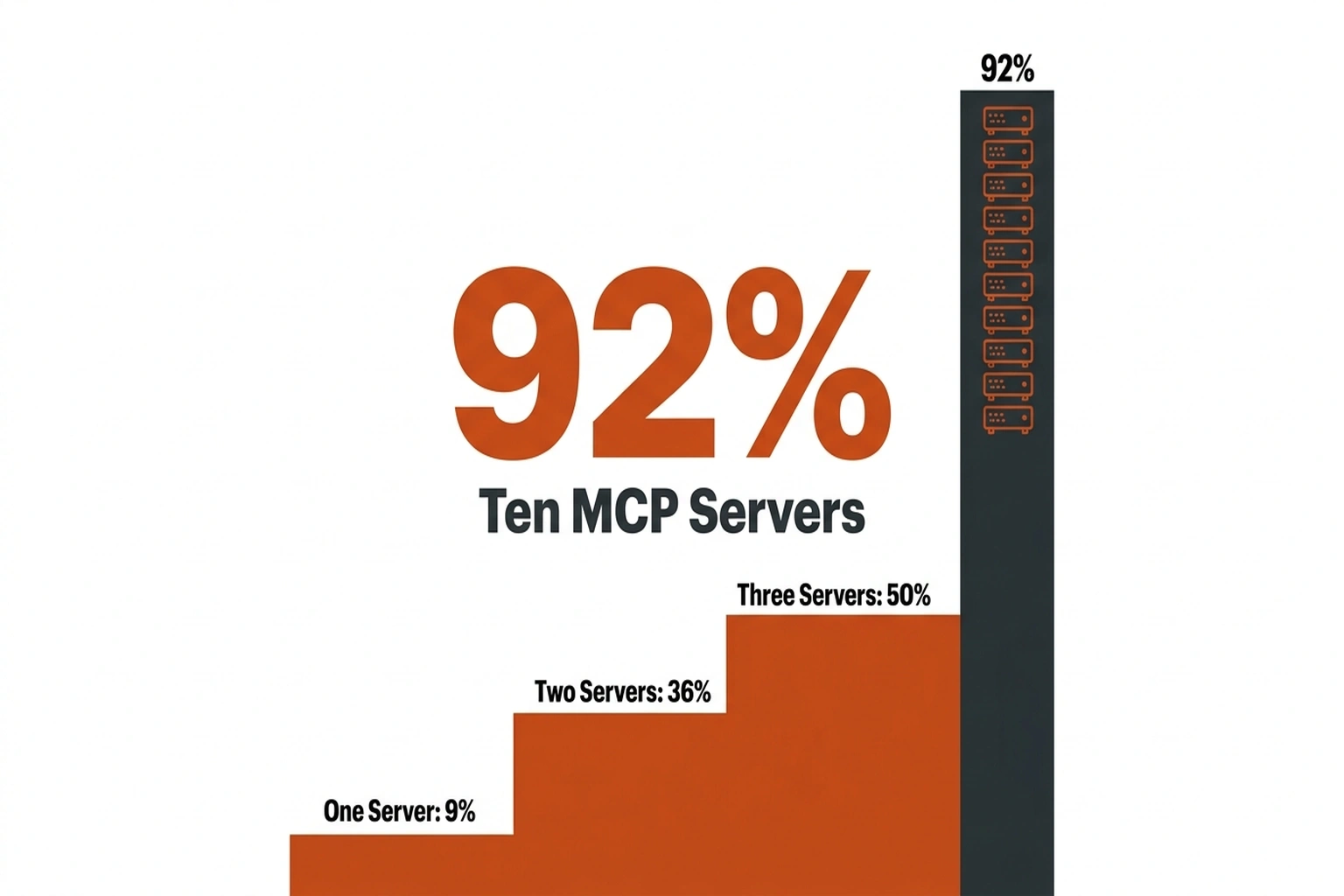

Deploying ten Model Context Protocol (MCP) plugins pushes the probability of exploitation to 92%. That figure comes from security firm Pynt, which analyzed 281 MCP configurations sourced from public documentation and open-source agent frameworks. The default assumption, that MCP server authentication and authorization work like standard REST API security, creates production trouble from day one.

Most enterprise AI stacks already exceed that threshold. Ten plugins is a modest setup—a database connector, a Slack integration, a file system tool, and a few specialized APIs add up fast. Understanding how these components interact—and where authentication boundaries break down—requires examining why traditional security models fall short in agent-driven architectures.

Why API Gateway Thinking Fails for Model Context Protocol Security

Standard API gateways authenticate human users. MCP servers authenticate agents—non-human identities that “generate requests dynamically, chain operations and carry data across trust boundaries”. Conflating those two models opens the door to confused deputy vulnerabilities in production stacks.

MCP’s auth specification has evolved at a pace most teams haven’t tracked. November 2025 added Client ID Metadata Documents and made PKCE mandatory for every client. These additions tightened security requirements that earlier deployments never implemented, shifting the spec from optional OAuth integration toward mandatory PKCE, resource indicators, and protected resource metadata.

If a team’s MCP deployment predates the November spec, the auth model is already stale. Since most MCP server tutorials focus on getting tools connected rather than locked down, production systems often run whatever auth shipped with the prototype. Nobody goes back to retrofit token audience binding onto something that already works. Except it doesn’t actually work—it just hasn’t been attacked yet.

How the AI Agent Confused Deputy Vulnerability Works

A confused deputy attack happens when an MCP server—acting as an intermediary to third-party APIs—processes a request it shouldn’t, using permissions inherited from a different trust context. The official MCP specification identifies this directly: proxy servers using static client IDs must obtain per-client user consent before forwarding to third-party OAuth servers.

In production terms: Agent A has read access to a database. Agent B has write access to an external API. Without audience validation on tokens, Agent B presents Agent A’s token to the MCP server, which forwards the request because the token signature checks out. The server becomes the confused deputy—executing an action on behalf of the wrong principal. The audit log looks clean. The damage is invisible until someone checks what Agent B actually accessed.

“Without rigorous authentication and authorization, a compromised agent can impersonate legitimate clients, replay tokens or escalate privileges across your entire system,” Security Boulevard’s analysis of MCP auth requirements notes.

Layers of unread configuration separate the spec’s MUST-level requirements from their enforcement in production. Getting authentication right at the MCP server layer means treating every token as potentially misrouted until audience validation proves otherwise.

What OAuth 2.1 Actually Requires for MCP Servers

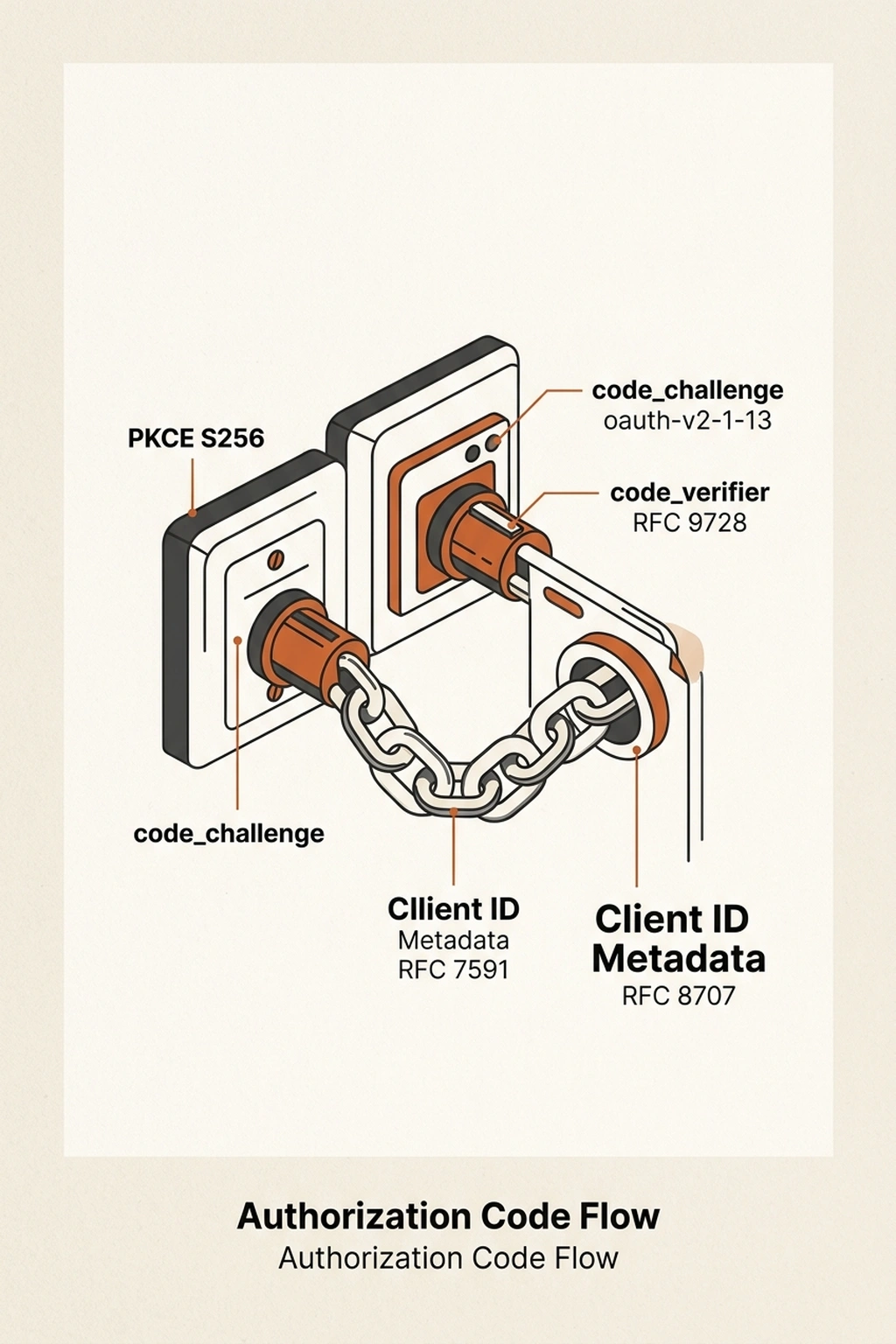

MCP’s auth specification references OAuth 2.1 (draft-ietf-oauth-v2-1-13), RFC 9728, RFC 7591, and RFC 8707. Translated into a deployment checklist, production-grade MCP auth requires four things:

PKCE on every flow. Authorization Code Flow with PKCE is mandatory, not optional and not best-effort. PKCE binds the authorization request to the token request through cryptographic verification, preventing code interception attacks. The spec requires the S256 challenge method whenever the client is technically capable of it. Client registration is a separate concern; a minimal Client ID Metadata Document for an MCP client looks like this:

```json
{
  "client_id": "https://myapp.example.com/oauth/metadata.json",
  "client_name": "MCP Agent Client",
  "redirect_uris": ["http://127.0.0.1:3000/callback"],
  "grant_types": ["authorization_code"],
  "response_types": ["code"],
  "token_endpoint_auth_method": "none"
}
```

Place the PKCE code_challenge and code_verifier parameters in the authorization and token requests respectively—not in the client metadata. Miss that distinction, and the flow silently falls back to no challenge protection. Most MCP client libraries handle PKCE automatically. However, the Client ID Metadata Document still needs to be hosted at a public HTTPS URL that the OAuth server can fetch and validate—an operational requirement that catches teams off guard when deploying behind a corporate proxy.

Token audience binding. RFC 8707 Resource Indicators must appear in both auth and token requests, explicitly naming the MCP server the token targets. Servers must reject tokens issued for a different audience. In practical terms, the auth request includes a resource parameter pointing to the MCP server’s canonical URI, the token request repeats it, and the server validates the aud claim matches. Three lines of validation logic prevent the most common MCP exploit vector—skip this single check, and confused deputy attacks become trivial. In multi-server deployments where agents interact with several MCP endpoints, each server must independently validate that the token targets its specific resource URI—not just any valid URI in the organization’s namespace.
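On the client side, the resource indicator is just one more parameter repeated across two requests. The sketch below builds both, assuming a public client; the helper names and every URL in the usage note are hypothetical, and a real deployment would discover its endpoints from the authorization server's metadata instead of hardcoding them.

```python
from typing import Dict
from urllib.parse import urlencode


def build_authorization_url(
    authorization_endpoint: str,
    client_id: str,
    redirect_uri: str,
    code_challenge: str,
    state: str,
    resource: str,
) -> str:
    """Build the authorization request URL, naming the target MCP server."""
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "code_challenge": code_challenge,
        "code_challenge_method": "S256",
        "state": state,
        # RFC 8707 resource indicator: the MCP server this token is for.
        "resource": resource,
    }
    return f"{authorization_endpoint}?{urlencode(params)}"


def build_token_request(
    client_id: str, code: str, code_verifier: str, resource: str
) -> Dict[str, str]:
    """Build the form body for the token request, repeating the resource."""
    # Some OAuth 2.0 authorization servers also expect redirect_uri here.
    return {
        "grant_type": "authorization_code",
        "code": code,
        "client_id": client_id,
        "code_verifier": code_verifier,
        "resource": resource,
    }
```

Passing the same `resource` value to both helpers, for example `https://mcp.example.com/mcp`, is what lets the authorization server mint a token whose audience the MCP server can later check.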

Zero token passthrough. MCP servers must never forward a client’s token to upstream APIs. A server needing to call a third-party service acquires its own separate token from that service’s OAuth server. Token passthrough breaks audit trails and turns every upstream service into an unwitting accomplice to privilege escalation.
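One way to make passthrough structurally impossible is to build upstream headers from an allowlist that never includes the inbound Authorization header. The following is a rough sketch under that assumption; `build_upstream_headers` and the `get_upstream_token` callable are hypothetical names, and how the server obtains its own upstream token is left to the caller.

```python
from typing import Callable, Dict, Mapping


def build_upstream_headers(
    inbound_headers: Mapping[str, str],
    get_upstream_token: Callable[[], str],
) -> Dict[str, str]:
    """Build headers for an upstream API call without reusing the client's token."""
    # Copy only an explicit allowlist of inbound headers. Authorization is
    # deliberately absent, so the client's token can never be forwarded.
    forwardable = {"accept", "content-type"}
    headers = {
        name: value
        for name, value in inbound_headers.items()
        if name.lower() in forwardable
    }
    # The server authenticates upstream with a token issued to the server itself.
    headers["Authorization"] = f"Bearer {get_upstream_token()}"
    return headers
```

Because the upstream token comes from a separate callable, the upstream service's logs attribute the call to the MCP server's own identity, which keeps the audit trail intact.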

Short-lived tokens, no sessions. Under OAuth 2.1’s bearer token model, MCP servers authenticate every request by its token and do not rely on sessions for authentication. Short-lived tokens with refresh token rotation for public clients are the expected pattern. Long-lived credentials for AI agents invite replay attacks, especially when the agent operates across multiple MCP servers, each with different trust levels.
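Refresh token rotation is easiest to reason about as a small state machine. The sketch below is an in-memory illustration only: the `RefreshTokenStore` class is hypothetical, and the reuse-detection behavior (revoking the whole token family when a retired token is replayed) is a commonly recommended practice assumed here, not something the article's sources spell out.

```python
import secrets
from typing import Dict, Set


class RefreshTokenStore:
    """In-memory refresh token rotation with reuse detection (illustrative only)."""

    def __init__(self) -> None:
        self._active: Dict[str, str] = {}   # live token -> family id
        self._retired: Dict[str, str] = {}  # already-used token -> family id
        self._revoked: Set[str] = set()     # revoked family ids

    def issue(self) -> str:
        """Start a new token family, e.g. after a successful authorization."""
        token = secrets.token_urlsafe(32)
        self._active[token] = secrets.token_hex(8)
        return token

    def rotate(self, presented: str) -> str:
        """Exchange a refresh token for a new one; the old one stops working."""
        if presented in self._retired:
            # A retired token was replayed: revoke every token in its family.
            family = self._retired[presented]
            self._revoked.add(family)
            for tok in [t for t, f in self._active.items() if f == family]:
                del self._active[tok]
            raise PermissionError("refresh token reuse detected")
        family = self._active.pop(presented, None)
        if family is None or family in self._revoked:
            raise PermissionError("unknown or revoked refresh token")
        self._retired[presented] = family
        replacement = secrets.token_urlsafe(32)
        self._active[replacement] = family
        return replacement
```

A production authorization server would persist this state and expire entries. The point of the sketch is that each refresh token works exactly once, so a stolen copy either fails outright or triggers revocation.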

Non-Human Identity Management: The Unfinished Spec

Every requirement above assumes something the spec doesn’t resolve: how non-human entities prove who they are in the first place.

“A critical aspect remains open: defining how non-human entities and autonomous workloads authenticate to the authorization server,” Aembit’s technical analysis notes. PKCE prevents code interception, but it does not authenticate the client itself.

For user-facing OAuth, a human opens a browser and enters credentials. For an AI agent running in a Kubernetes pod at 3 AM, that flow breaks completely. Production teams fill the gap with infrastructure-asserted identity: AWS IAM roles for serverless functions, Azure Instance Metadata for VMs, Kubernetes Service Account Tokens projected into pods. These bind workload identity to the environment rather than to stored secrets—but the MCP spec says nothing about which mechanism to use or how to validate it. Each cloud provider and orchestration platform has its own identity model, its own token format, and its own validation endpoint. Standardizing across them is the kind of cross-platform integration effort that delays MCP security hardening indefinitely.

Pynt’s research quantifies why this gap matters. Across 281 MCP configurations, 72% expose sensitive capabilities including dynamic code execution, file system access, and privileged API calls. Another 13% accept untrusted inputs such as Slack messages, emails, and RSS feeds. Where those categories overlap—9% of real-world setups—a single crafted Slack message can trigger background code execution without human approval.

Against an MCP host lacking workload identity binding, the blast radius is everything the agent can reach. The current MCP security model trusts the network perimeter. For AI agents that routinely cross network boundaries—calling external APIs, processing third-party data, operating across cloud regions—perimeter trust is the wrong foundation. Between spec-level requirements and deployment reality, the drift grows with every new plugin added to the stack, and few teams have the instrumentation to detect token misuse before damage occurs.

Strengthening MCP Auth Patterns in Practice

Validate the aud claim on every request. The single highest-value control against confused deputies. Any MCP server acting as an OAuth 2.1 resource server must reject tokens not scoped to its own resource URI. If the aud claim doesn’t match, drop the request—no exceptions, no fallback, no “but it’s an internal service” carve-outs.
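The check itself is small. The sketch below assumes the token's signature, issuer, and expiry have already been verified elsewhere and that `claims` holds the decoded JWT payload; `audience_matches` is a hypothetical helper name, and the resource URI passed in stands for whatever canonical URI the server advertises.

```python
from typing import Any, Mapping


def audience_matches(claims: Mapping[str, Any], resource_uri: str) -> bool:
    """Return True only if the token's aud claim names this server's resource URI."""
    aud = claims.get("aud")
    # In a JWT, aud may be a single string or an array of strings.
    if isinstance(aud, str):
        audiences = [aud]
    elif isinstance(aud, (list, tuple)):
        audiences = list(aud)
    else:
        return False  # missing or malformed aud: reject
    # Exact match only: no prefix matching and no same-domain shortcuts.
    return resource_uri in audiences
```

A request handler would call this before dispatching any tool and respond with an authorization error when it returns False. Exact matching matters because a token for `https://mcp.example.com` should not unlock `https://mcp.example.com/mcp`.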

Bind agent identity to infrastructure. Retire static API keys for agent-to-server auth. Map non-human identities to platform-native attestation—SPIFFE/SPIRE for service mesh environments, cloud IAM roles for serverless agents, projected service account tokens for Kubernetes workloads. Stored secrets in environment variables are the MCP equivalent of a sticky note on a monitor—at minimum, migrate static credentials to a dedicated credential manager while building toward infrastructure-attested identity.
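As one illustration of infrastructure-attested identity, a server in a SPIFFE environment might map a workload's SPIFFE ID to a known agent. The sketch below assumes the ID was taken from an SVID that the platform has already verified cryptographically; the function name, trust domain, and path layout are all hypothetical.

```python
from typing import Mapping, Optional
from urllib.parse import urlsplit


def agent_for_spiffe_id(
    spiffe_id: str,
    trust_domain: str,
    agents_by_path: Mapping[str, str],
) -> Optional[str]:
    """Map an already-verified SPIFFE ID to a known agent name, or None."""
    parts = urlsplit(spiffe_id)
    # A SPIFFE ID has the form spiffe://<trust domain>/<workload path>.
    if parts.scheme != "spiffe" or parts.netloc != trust_domain:
        return None
    if parts.query or parts.fragment:
        return None
    # Only workloads on the explicit allowlist resolve to an agent.
    return agents_by_path.get(parts.path)
```

With an allowlist such as `{"/ns/agents/sa/db-reader": "db-reader"}`, a workload from another trust domain resolves to no agent at all, and no stored secret is involved at any point.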

Audit plugin surface area. Pynt’s probability curve is steep: one MCP server carries 9% exploitation risk, two push it to 36%, three exceed 50%. Ten hit 92%. Trim to the minimum needed—every connector adds attack surface. Agent sandboxing at the host level and prompt injection defenses at the LLM input layer help contain damage, but neither substitutes for proper token validation on the server side.

The strongest counterargument is that Pynt’s 92% figure conflates theoretical vulnerability exposure with actual exploitability. A system that exposes privileged capabilities is not the same as one that will be successfully attacked, and mature organizations with network segmentation, anomaly detection, and least-privilege IAM may tolerate higher plugin counts without proportionally higher real-world risk. Critics of this framing also point out that mandating strict plugin minimization imposes real productivity costs on teams whose AI agents derive legitimate value from broad integrations, and that the security burden should fall on better tooling and spec enforcement rather than on restricting what agents can do. This is a genuine tension the current MCP security model does not resolve.

MCP’s auth specification mandates solid token handling, audience binding, and PKCE. Correctly implemented server-side auth configurations close the most dangerous gaps, namely confused deputy exploits and token misrouting, but the spec leaves non-human identity authentication unfinished. Future spec revisions should treat this gap as a MUST-level requirement, not an exercise left to each team’s infrastructure engineers. Until the spec addresses that shortcoming, every MCP deployment bets on the assumption that runtime environments handle identity correctly. Given that 72% of the MCP configurations Pynt analyzed expose sensitive capabilities, that is a risky bet.

For teams deploying MCP servers today, the minimum viable security posture is audience-bound tokens, infrastructure-attested identity, and ruthless plugin minimization. Everything else in the spec matters, but those three determine whether a breach stays contained or cascades across the entire agent fleet.

References

- MCP Authentication and Authorization Patterns: Security Boulevard analysis of non-human identity requirements, confused deputy mitigations, and transport security mandates for MCP servers.

- MCP Stacks Have a 92% Exploit Probability: VentureBeat coverage of Pynt’s research across 281 MCP configurations, documenting compounding vulnerability risk from multiple plugins.

- MCP, OAuth 2.1, PKCE, and the Future of AI Authorization: Aembit analysis of MCP specification evolution, non-human identity gaps, and infrastructure-asserted authentication patterns.

- Authorization, Model Context Protocol Specification: Official MCP specification defining OAuth 2.1 roles, PKCE requirements, and confused deputy mitigations.

- Security Best Practices, Model Context Protocol: Official MCP guidance on token passthrough prohibition, audience validation, and scope minimization.

- The State of MCP Security: Pynt’s primary research on MCP plugin vulnerability compounding and exploitation probability across 281 configurations.