Affiliate Disclosure: This article contains affiliate links. We may earn a commission if you purchase through these links, at no additional cost to you. This helps us continue publishing free content. See our full disclosure.

Fifty-eight percent of SMB owners already use generative AI, up from 40% a year ago (U.S. Chamber of Commerce, 2025). But generic chatbot answers rarely fit specialized operations. LLM fine-tuning vs in-context learning small business strategies differ in cost and complexity. New research from Google DeepMind and Stanford introduces a third option, augmented fine-tuning, and shows it outperforms both established methods (Lampinen et al., 2025).

Prerequisites

Any SMB owner who has used ChatGPT, Claude, or Gemini through a web interface has the necessary background. No machine learning expertise is necessary, the concepts here apply whether implementation occurs in-house or through a contractor. A monthly AI budget of $20–$500 covers every option discussed below.

What You’ll Build

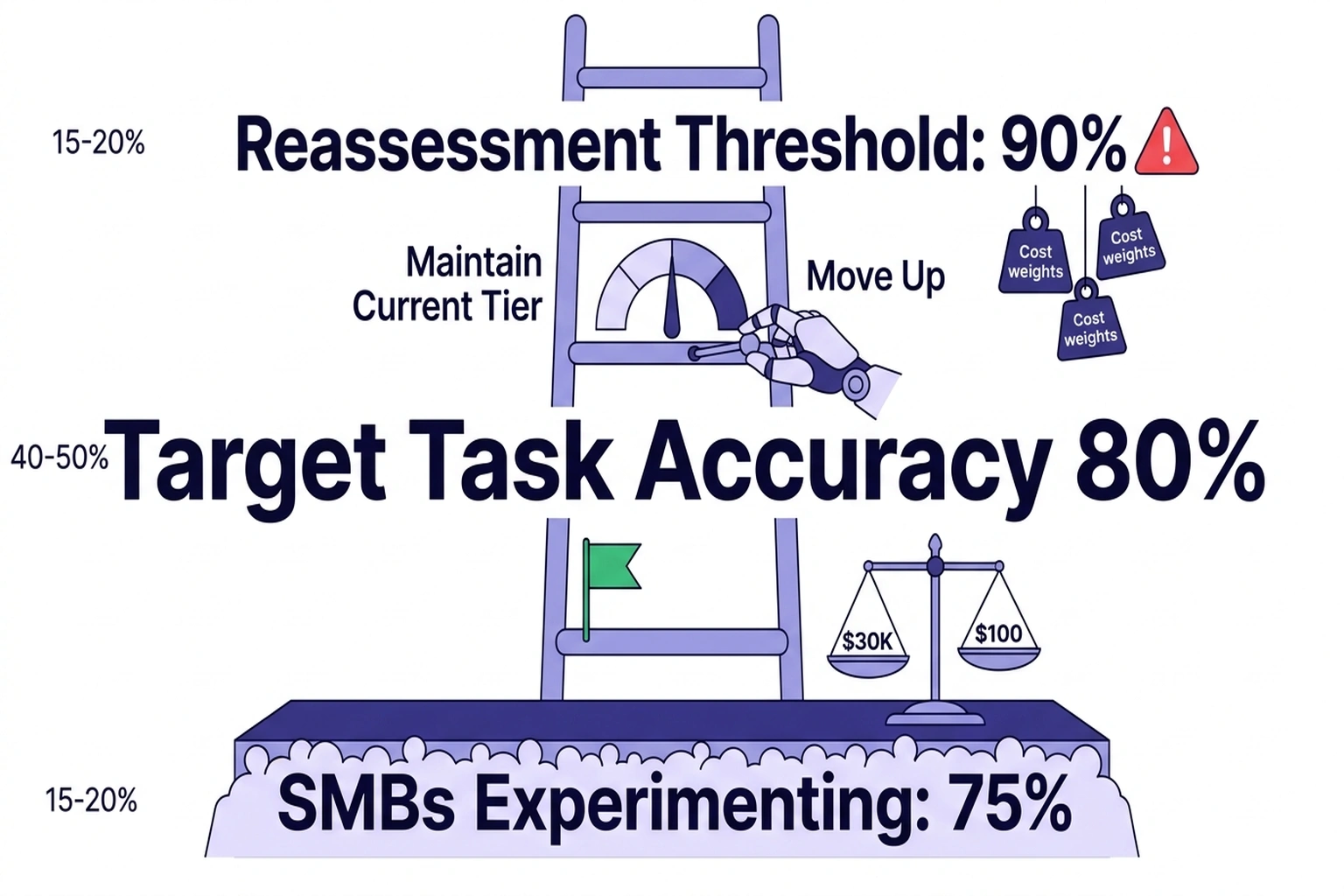

This guide provides a clear decision framework mapping any company AI use case to the right customization method. Each step includes budget estimates, a practical testing procedure, and measurable success criteria. A “Customization Ladder” frames the three options by commitment level: in-context learning (ICL) at the bottom, augmented fine-tuning in the middle, and standard fine-tuning at the top. For most SMBs, starting from the bottom and climbing only when quality gaps justify the cost offers the most capital-efficient path.

Step 1: How Fine-Tuning and ICL Actually Work

In-context learning (ICL) feeds examples directly into the prompt. A bakery wanting AI-generated product descriptions would paste five sample descriptions alongside the instruction, and the model mimics the style without any permanent changes. No model weights shift; performance depends entirely on prompt quality and the number of examples provided. ICL’s limitation is cost at scale, every query carries the full example payload, and token charges increase proportionally (Meta AI, 2024).

Fine-tuning permanently adjusts a model’s internal parameters using a specialized training dataset. Meta’s research demonstrates the range of improvement: fine-tuning Phi-2 on financial sentiment data increased accuracy from 34% to 85%, and just 100 Reddit examples improved sentiment classification by 25 percentage points (Meta AI, 2024). Once trained, changes persist across all future queries, eliminating repeated example prompts and reducing per-query inference costs.

Augmented fine-tuning combines both approaches. Researchers at Google DeepMind used ICL to generate expanded training data, prompting the model to rephrase facts and draw connections across datasets, before fine-tuning on the enriched result (Lampinen et al., 2025). Two strategies proved effective: a local approach that rephrases individual training facts into multiple variations, and a global approach that generates new examples by linking patterns across the full dataset (VentureBeat). To isolate genuine learning from memorized knowledge, the researchers built synthetic datasets replacing all nouns, adjectives, and verbs with nonsense terms, forcing models to learn structure rather than recall prior training (Lampinen et al., 2025).

Related work from Bornschein et al. tested this hybrid approach (labeled ICL+FT) on Gemma-2 models across 23 Big Bench Hard tasks with 150 training examples, producing consistent gains at every model size:

| Model Size | Fine-Tuning Only | ICL Only | Augmented (ICL+FT) | Gain over FT |

|---|---|---|---|---|

| 2B params | 50.7% | 37.6% | 67.5% | +16.8 pts |

| 9B params | 69.7% | 56.6% | 78.7% | +9.0 pts |

| 27B params | 72.6% | 64.2% | 81.8% | +9.2 pts |

| Verdict | Adequate for narrow tasks | Best for quick experiments | Recommended when budget allows |

One striking result shows that a 2B-parameter model using augmented fine-tuning outperformed ICL-only results from models over ten times its size on many tasks (Bornschein et al., 2025). This result reframes the calculus for SMBs comparing these customization methods: a cheaper, smaller model with the right training data can beat an expensive large model fed examples at runtime, directly challenging the assumption that bigger always means better.

Step 2: The Budget Guide for Comparing Customization Methods

Budget determines which rung of the customization ladder fits any evaluation of training versus prompt-based approaches. Below is a tiered breakdown based on current API and training costs.

Tier 1: Under $50/month , In-Context Learning

Paste 3–10 high-quality examples directly into prompts using any commercial API (OpenAI, Anthropic, Google). No training infrastructure needed. Cost scales with prompt length, since longer example sets increase per-query token charges. For many company tasks, email drafting, social media posts, product descriptions, basic customer FAQs. ICL delivers adequate quality with zero upfront investment (Meta AI, 2024).

Verify it works: Send the same business query with and without examples. If output quality improves consistently across 20 test cases, ICL is sufficient. No further investment needed.

Tier 2: $50–$300/month , LoRA Fine-Tuning Budget

Parameter-efficient methods like LoRA (Low-Rank Adaptation) adjust a small fraction of model weights while preserving most of the original model’s knowledge. LoRA achieves approximately 95% of full fine-tuning performance at a fraction of the cost (RunPod).

A sample workflow for a customer support chatbot:

# 1. Prepare training data in JSONL format

# Each line contains a conversation example:

# {"messages": [{"role": "user", "content": "..."}, {"role": "assistant", "content": "..."}]}

# 2. Install the Together AI CLI and start fine-tuning

pip install together==1.4.2

together fine-tuning create \

--training-file customer_support_500.jsonl \

--model meta-llama/Meta-Llama-3.1-8B-Instruct \

--n-epochs 3

# Expected output:

# Fine-tune job created: ft-abc123

# Status: TRAINING

# Estimated completion: ~45 minutes for 500 examples

Verify it works: Compare fine-tuned model responses against the base model on 50 held-out customer queries. Track accuracy, tone match, and factual correctness. If 40 or more of the 50 responses improve, the training investment pays off.

Tier 3: $300–$500/month , Augmented Fine-Tuning

Combine Tier 1 and Tier 2. First, use ICL to generate expanded training data from existing examples, applying the local and global strategies from the DeepMind research. Then fine-tune on the enriched dataset. Data preparation typically accounts for 20–40% of total project cost, so budget accordingly (RunPod).

# Generate augmented training data using ICL

# Local strategy: prompt the model to rephrase each training example 3 ways

# Global strategy: prompt the model to generate new scenarios

# combining patterns from multiple existing examples

# Fine-tune on the combined original + augmented dataset

together fine-tuning create \

--training-file augmented_support_1500.jsonl \

--model meta-llama/Meta-Llama-3.1-8B-Instruct \

--n-epochs 3

Verify it works: Run the same 50-query test from Tier 2. Augmented fine-tuning should show improved handling of edge cases, queries that do not closely match any single training example. If edge case accuracy jumps by 15% or more, the augmentation step is worth repeating for future training rounds.

Step 3: Run a Pilot Before Committing

Before spending on fine-tuning infrastructure, run a structured two-week pilot.

Week 1 , ICL baseline. Collect 50–100 representative business queries from actual customer interactions. Craft 5–10 prompt examples from existing business communications (emails, support tickets, product descriptions). Score each AI output on a 1–5 scale across three dimensions: accuracy, tone, and relevance.

Week 2 , Fine-tuning test. If ICL scores average below 3.5, prepare a fine-tuning dataset from the same business communications. Even 100 high-quality examples produce measurable improvement. Meta’s research showed 25 percentage points of accuracy gain from that dataset size alone (Meta AI, 2024).

Decision gate: ICL delivers a 4.0+ average? Stop there, budget savings from avoiding fine-tuning likely outweigh marginal quality gains. Score between 3.0 and 3.9? Augmented fine-tuning from Tier 3 represents the evidence-based recommendation. Below 3.0? More fundamental data collection must happen before any AI customization approach performs adequately.

Step 4: Measure Results and Iterate

After deploying the chosen method, track three metrics monthly:

- Task accuracy , percentage of outputs requiring no human editing. Target 80% or higher.

- Cost per query , total monthly AI spend divided by query count. ICL costs rise with prompt length; fine-tuned models use shorter prompts but carry training cost amortization. Fifty-eight percent of AI-using SMBs report saving over 20 hours per month; for those companies, time savings typically justify Tier 2 investment (Thryv, 2025).

- Edge case performance , track queries where the model fails or requires heavy editing. A growing failure rate signals the need to move up the customization ladder.

Reassess quarterly. If accuracy drops below 80% on growing query volume, move to the next tier. If accuracy holds above 90%, evaluate whether the current tier costs more than necessary, with 75% of SMBs at least experimenting with AI, many find that basic prompt-based approaches handle their workload without further investment (Salesforce, 2025).

Common Pitfalls

-

Over-investing in fine-tuning before testing ICL. Many company use cases work well with 5–10 prompt examples and zero training cost. Salesforce’s 2025 SMB trends report found that 75% of small businesses are at least experimenting with AI, and for many, basic prompt-based approaches prove sufficient (Salesforce, 2025).

-

Training on too little data. Budget for data preparation time: 20–40% of total project cost goes to cleaning and formatting training examples (RunPod).

-

Ignoring shadow AI risk. When employees adopt AI tools individually without a coordinated customization strategy, unsanctioned deployments create inconsistent outputs and security gaps. A single, centrally customized model reduces fragmentation and makes quality measurement possible.

-

Assuming bigger models are always better. Research demonstrates that a 2B-parameter model with augmented fine-tuning can outperform a 27B-parameter model using ICL alone (Bornschein et al., 2025). Smaller fine-tuned models also cost less to serve, a pattern already driving adoption of small language models in banking for fraud detection.

-

Skipping the decision framework. Meta recommends answering four questions before fine-tuning: Does the use case require external or dynamic knowledge? Is custom tone or vocabulary needed? How important is reducing hallucination? How much labeled data is available? (Meta AI, 2024). Jumping to fine-tuning without clear answers to these questions wastes both time and budget.

Critics of this framing point out that the benchmark gains reported for augmented fine-tuning come primarily from controlled academic tasks, synthetic datasets and standardized reasoning benchmarks, that may not reflect the messiness of real SMB data, where training examples are inconsistent, mislabeled, or too sparse to generate meaningful augmentations. The strongest version of this objection holds that for businesses without dedicated data pipelines, the overhead of curating and augmenting training data could easily consume the cost savings the method promises, making a well-maintained retrieval-augmented generation (RAG) system a more practical alternative for knowledge-intensive tasks. SMB owners should weigh these benchmarks against their own data quality before treating augmented fine-tuning as a default recommendation.

What’s Next

The choice between fine-tuning and ICL for SMBs does not have to be permanent, companies that start with structured ICL pilots accumulate the data needed to adopt augmented fine-tuning as costs continue falling. For business owners ready to go deeper, connecting a customized model to internal tools through approaches like the Model Context Protocol extends AI customization beyond language quality into workflow automation.

An immediate action item: take the five most common customer questions the company receives, write ideal answers, and test them as ICL examples with any commercial API. If the results meet quality thresholds, the cheapest customization method turns out to be the right one. If not, those five question-answer pairs become the seed of a fine-tuning dataset, nothing wasted.

What to Read Next

- TurboQuant’s 6x Compression Creates More GPU Demand

- GPT-5.4 Mini vs Nano: Small Model Costs Hide a 33-Point Cliff

- Qwen 3.5 Benchmark Win Hides a 15th-Place User Verdict

References

-

Fine-tuning vs. in-context learning: New research guides better LLM customization for real-world tasks , VentureBeat coverage of Google DeepMind/Stanford augmented fine-tuning research.

-

On the generalization of language models from in-context learning and finetuning: a controlled study , Lampinen et al., original research paper on augmented fine-tuning using controlled synthetic datasets.

-

Fine-Tuned In-Context Learners for Efficient Adaptation , Bornschein et al., benchmark results for ICL+FT across Gemma-2 and Qwen-3 model families on Big Bench Hard and NLP tasks.

-

To fine-tune or not to fine-tune , Meta’s decision framework for choosing between fine-tuning, ICL, and RAG adaptation methods.

-

Empowering Small Business: The Impact of Technology on U.S. Small Business , U.S. Chamber of Commerce, 2025 survey of 3,870 small businesses on AI adoption trends.

-

AI and Small Business Adoption Report 2025 , Thryv’s 2025 survey of 540 small business decision-makers on AI usage and time savings.

-

New Research Reveals SMBs with AI Adoption See Stronger Revenue Growth , Salesforce’s 2025 SMB trends report across 3,350 respondents on AI experimentation and revenue impact.

-

LLM Fine-Tuning on a Budget: Top FAQs on Adapters, LoRA, and Other Parameter-Efficient Methods , RunPod’s guide to parameter-efficient fine-tuning costs, LoRA implementation, and data preparation budgeting.