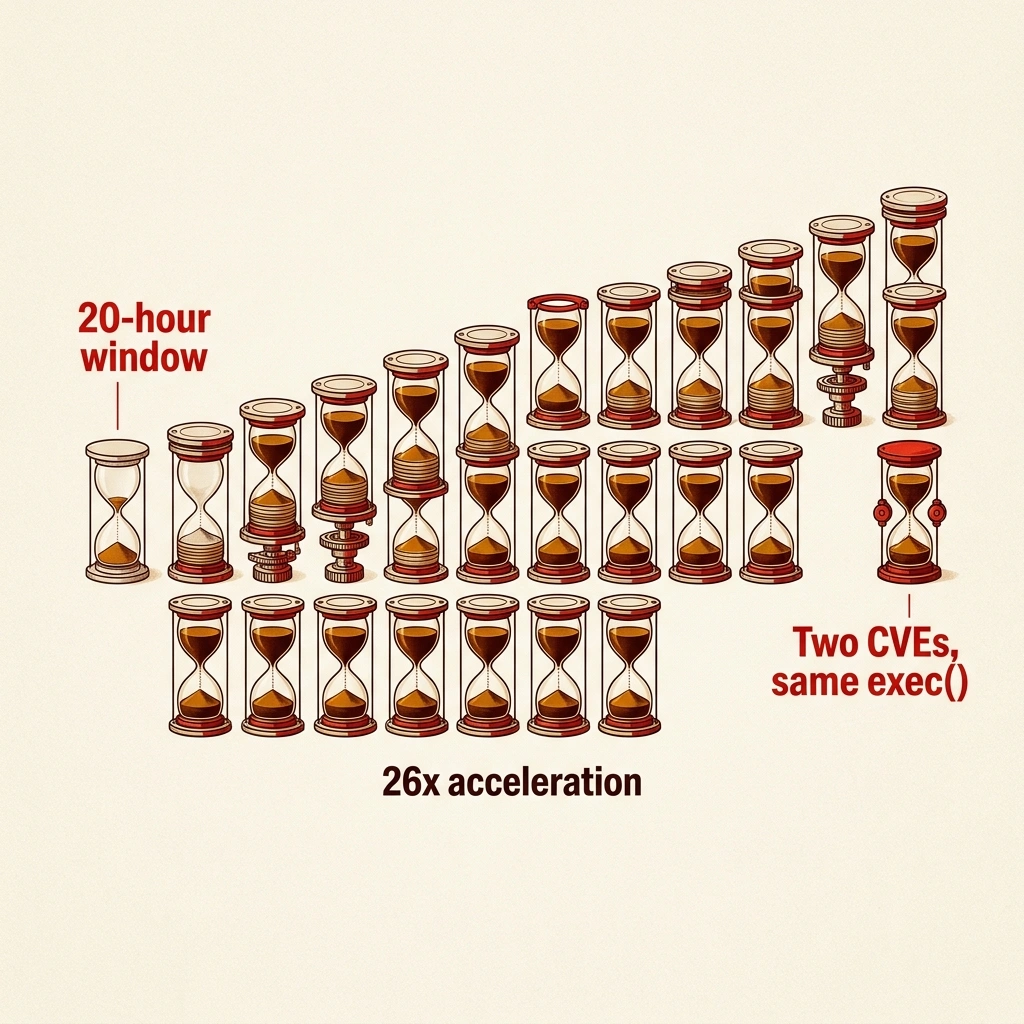

Twenty hours. The Langflow project disclosed a CVSS 9.3 remote code execution flaw and released a patch on March 17 (CSA). That disclosure was also a blueprint.

By the next afternoon, the first exploitation attempt hit Sysdig’s honeypot network (CSOnline). No proof-of-concept code existed on GitHub. No exploit kit circulated on underground forums. Attackers read the advisory, studied the patch diff, and built a working exploit from the documentation alone.

A year ago, Langflow had zero CISA entries and no known exploitation history. Then CVE-2025-3248 hit the same codebase through the same unsandboxed exec() call — just a different endpoint. CISA added it to the Known Exploited Vulnerabilities catalog in May 2025 (CSA). Langflow patched the endpoint. Langflow did not patch the architecture. CVE-2026-33017 routes around that fix through another unauthenticated path, and CISA has again mandated remediation — this time by April 8, 2026 (CyberPress). Patching will close this door. It will not lock the building.

Strip away the CVE numbers, and what remains is architectural. Langflow is a drag-and-drop AI pipeline builder with 145,000 GitHub stars (CSA), enough people to fill two NFL stadiums, and its core feature is executing arbitrary Python code. CVE-2026-33017 did not find a flaw in that design. It found the front door unlocked, again.

The 50% Patch

When CVE-2025-3248 surfaced in May 2025, Langflow patched the exploited endpoint and added authentication to specific code-execution routes. But the underlying prepare_global_scope() function — which passes user input directly to Python’s exec() with no sandboxing, no bytecode restriction, no input validation — remained reachable through at least one other unauthenticated path (CyberPress).
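To make the exec() problem concrete, here is a minimal sketch of what "user input passed directly to exec()" means in practice. It is not Langflow's code: `run_node_code` is a hypothetical stand-in for any unsandboxed node runner, and `DEMO_API_KEY` is a made-up variable holding a fake value.

```python
import os


def run_node_code(source: str) -> dict:
    """Hypothetical unsandboxed runner: executes caller-supplied Python as-is."""
    scope: dict = {}
    # No sandbox, no bytecode restriction, no input validation:
    # the source runs with the full rights of the server process.
    exec(source, scope)
    return scope


# Fake secret standing in for a real API key in the server's environment.
os.environ["DEMO_API_KEY"] = "sk-demo-not-a-real-key"

# A "node" body supplied by the caller. Nothing stops it from importing
# os and reading the environment of the process that executes it.
payload = "import os\nleaked = os.environ.get('DEMO_API_KEY')"

result = run_node_code(payload)
print(result["leaked"])  # sk-demo-not-a-real-key
```

Any route that lets an unauthenticated caller choose `source` hands that caller everything the process can reach, which is why the endpoint matters less than the function behind it.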

No advisory included this math. If N code paths reach the same unsandboxed exec() function, patching one eliminates 1/N of the actual attack surface. With N ≥ 2, the first patch delivered at most 50% coverage while the changelog claimed 100%.

That gap of at least fifty percentage points between perceived and actual coverage is where CVE-2026-33017 waited for ten months.
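The 1/N argument can be written out as a short calculation. This is only a sketch of the article's own arithmetic; `patch_coverage` is an illustrative name, and the real number of paths reaching exec() in any given release is not known here.

```python
def patch_coverage(total_paths: int, patched_paths: int = 1) -> float:
    """Fraction of exec()-reaching code paths closed by a patch."""
    if total_paths < 1 or not 0 <= patched_paths <= total_paths:
        raise ValueError("need total_paths >= 1 and 0 <= patched_paths <= total_paths")
    return patched_paths / total_paths


# Coverage delivered by patching a single path, for a few assumed values of N.
for n in (1, 2, 3, 5):
    print(f"N={n}: one patched path closes {patch_coverage(n):.0%} of routes to exec()")
```

With N = 2 the result is 50%, and every additional undiscovered path pushes the figure lower.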

CISA rates the new flaw at CVSS 9.3, patched in version 1.9.0 (CSA), and classifies it under three CWEs simultaneously: CWE-94 (code injection), CWE-95 (eval injection), and CWE-306 — missing authentication for critical function (CyberPress). Three classification numbers. One root cause. An unsandboxed exec() call the first patch relocated but did not eliminate. Two CVEs reaching the same function through different endpoints in twelve months is not a pattern of bugs. This analysis argues it is a pattern of architecture.

From Advisory to RCE in Three Phases

For most vulnerabilities, weaponization requires reverse engineering. Attackers analyze binary patches, wait for a published proof-of-concept, or purchase exploit kits. Mandiant’s M-Trends reporting pegs the median time from disclosure to first observed exploitation at 5 days — and the broader industry average for critical CVEs hovers around 22 days. CVE-2026-33017 required none of that runway. The advisory described, in plain technical language, that the POST /api/v1/build_public_tmp/{flow_id}/flow endpoint passes user input to exec() without authentication (The Hacker News). For anyone who can construct an HTTP POST request, the advisory was the exploit.

That is a 26x acceleration over the 22-day industry average, and 6x over the 5-day median. The cause is not faster attackers; exec()-class vulnerabilities eliminate the engineering step entirely.
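The acceleration figures follow directly from the numbers quoted above (20 hours observed, a 5-day median, a 22-day average). A quick check, with `speedup` as an illustrative helper name:

```python
from datetime import timedelta

observed = timedelta(hours=20)        # disclosure to first exploitation attempt
median_baseline = timedelta(days=5)   # median figure quoted above
average_baseline = timedelta(days=22) # average figure quoted above


def speedup(baseline: timedelta, actual: timedelta) -> float:
    """How many times faster `actual` is than `baseline`."""
    return baseline / actual


print(f"vs 5-day median:   {speedup(median_baseline, observed):.1f}x")   # 6.0x
print(f"vs 22-day average: {speedup(average_baseline, observed):.1f}x")  # 26.4x
```

The 26x figure therefore corresponds to the 22-day average; against the 5-day median the acceleration is 6x.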

Sysdig’s threat research team logged the first hit at 16:04 UTC on March 18 and observed the attack unfold in three distinct phases. Phase one: automated Nuclei scanner payloads hit exposed instances, with Cookie: client_id=nuclei-scanner headers and interactsh callback infrastructure confirming reachability. Phase two: custom Python-requests scripts replaced the scanners — directory enumeration, system fingerprinting, stage-two dropper delivery via curl. Phase three: environment variable dumps, .env file extraction, and targeted credential harvesting — OpenAI API keys, Anthropic API keys, AWS access keys, database connection strings (Dark Reading).

From automated scanning to targeted credential theft in under ten hours.
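The phase-one indicators described above lend themselves to a simple log triage pass. The sketch below uses only the endpoint path and scanner cookie named in the reporting; the access-log layout (method, path, and Cookie header on one line) is an assumption, and `triage` is a hypothetical helper rather than any vendor's detection rule.

```python
import re
from typing import Optional

# Endpoint path and scanner cookie come from the reporting cited above.
# Assumption: each log line carries the method, path, and Cookie header.
PUBLIC_BUILD = re.compile(r"POST\s+/api/v1/build_public_tmp/[^/\s]+/flow\b")
SCANNER_COOKIE = "client_id=nuclei-scanner"


def triage(log_line: str) -> Optional[str]:
    """Return a triage label for one access-log line, or None if unrelated."""
    if not PUBLIC_BUILD.search(log_line):
        return None
    if SCANNER_COOKIE in log_line:
        return "phase-1 scanner"
    return "needs review"


sample = [
    'POST /api/v1/build_public_tmp/abc123/flow 200 "Cookie: client_id=nuclei-scanner"',
    'POST /api/v1/build_public_tmp/abc123/flow 200 "User-Agent: python-requests/2.31"',
    'GET /health 200 "-"',
]
for line in sample:
    print(triage(line))
```

A request to the public build endpoint without the scanner cookie is not proof of compromise, but on an unpatched instance it is worth treating as a possible phase-two or phase-three hit.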

“This kind of rapid exploitation is becoming the norm rather than the exception for AI infrastructure tools,” said Michael Clark, Senior Director of Threat Research at Sysdig (Dark Reading). Clark’s team recorded more than 1,000 exploitation attempts across several regions within the first week — including info stealers, reverse shells, and cryptominers. That figure captures only attacks clumsy enough to hit monitored infrastructure. The actual exploitation count is a dark number — living in the unmonitored Langflow instances deployed by data scientists and platform engineers who never configured logging in the first place.

But Clark’s “norm” framing — fast exploitation of new CVEs — misses what makes exec()-class vulnerabilities structurally different from the buffer overflows and deserialization bugs that dominate KEV entries. For those, attackers must still reverse-engineer the patch diff to locate the memory corruption and craft a reliable exploit. For exec()-class vulnerabilities — where the advisory functionally describes how to pass code to an interpreter — the translation cost from advisory text to working HTTP request approaches zero. The 20-hour figure is not the floor for this vulnerability. It is the ceiling. The next Langflow advisory — or the next advisory for any AI tool with an unsandboxed interpreter — will be weaponized faster, because the playbook is now established and the scanner templates already written.

This pattern illustrates what this analysis terms the Feature-as-Exploit Gap: the distance between a tool’s marketed capability and its security posture when that capability is arbitrary code execution. Every unauthenticated path to exec() generates a new vulnerability under a new CVE number. Patching individual endpoints is whack-a-mole when the function itself is the attack surface. But what happens when the attack surface is also the product?

Architecture as Attack Surface

Here is where the standard incident-response narrative fails.

A normal CVE story ends with “patch your systems.” This one cannot, because Langflow’s architecture contains a bind that no patch resolves. Follow the logic:

Langflow’s value proposition is letting non-developers build AI pipelines by dragging, dropping, and connecting Python nodes in a visual interface. Running those nodes requires exec(). Sharing pipelines publicly requires unauthenticated access to flow endpoints (The Hacker News). These are not misconfigurations. They are the product. Now apply the constraint:

- Sandbox the execution layer → pipeline nodes can no longer run arbitrary Python → the core feature breaks.

- Gate public flow endpoints behind authentication → pipelines can no longer be shared openly → the collaboration workflow that powered adoption to 145,000 stars breaks.

- Do neither → every unauthenticated path to exec() is a CVSS 9+ RCE. Not might be. Is.

This is not a security tradeoff. It is a trilemma with no safe vertex. Every option degrades either the product, the growth model, or the security posture. That architectural bind is why two CVEs hit the same function in twelve months — and why there is no version of Langflow’s current architecture where a third does not follow.

Security researcher Aviral Srivastava, who reported CVE-2026-33017 on February 26, 19 days before the March 17 public disclosure (CSOnline), distilled the flaw to one sentence: “One HTTP POST request with malicious Python code in the JSON payload is enough to achieve immediate remote code execution” (The Hacker News). The CSA AI Safety Initiative’s subsequent analysis identified the same architectural bind: sandboxing the execution layer eliminates the core use case. When unsandboxed code execution and a required-unauthenticated path coexist in the same architecture, remote code execution is not a risk to be mitigated.

It is a mathematical certainty waiting for a route.

No production code-execution platform has ever maintained security through endpoint-level patching of an unsandboxed interpreter — every survivor, from Jupyter to Google Colab, changed the architecture. That absence of a counter-example after decades of similar platforms is itself the evidence.

Those 145,000 GitHub stars do not represent security auditors. They represent a globally distributed base of unpatched instances — many deployed by platform engineers and data scientists without IT review, replicating the shadow deployment pattern already driving untracked AI tool costs across enterprises. Each unpatched instance is not merely a target for this flaw. It is a conduit to every credential the pipeline touches — and Sysdig’s three-phase attack data shows exactly what happens next: automated discovery, exploitation, credential harvesting of the specific API keys connecting the pipeline to everything else. How many keys is “everything else”? Most organizations have never counted.

Scanning Your Credential Blast Radius

That uncounted number is the actual risk metric. The Credential Blast Radius — a framing introduced in this analysis — quantifies total exposure from a single compromised pipeline: every API key in the environment variables, every connection string in the config, every cloud token used to read and write data. Unlike static identity controls, this metric captures the lateral movement potential an attacker gains the moment exec() runs their payload — and unlike a CVSS score, it scales with your architecture, not the vulnerability.

CVSS 9.3 tells you the flaw is severe. The Credential Blast Radius tells you what severity actually costs.

Sysdig’s phase-three data provides the empirical basis. Attackers harvesting from a compromised Langflow instance targeted four specific credential classes: LLM API keys (OpenAI, Anthropic), cloud provider access keys (AWS), database connection strings, and .env files containing a mix of all three (Dark Reading). A typical production Langflow pipeline connects to at least one LLM provider, one data store, and one cloud service — a minimum of three credential sets. Enterprise deployments chaining multiple models, vector databases, and storage backends commonly expose five to eight. Each key is not just a secret — it is an authorization grant, often with permissions far exceeding what the pipeline requires.

Run the math against Langflow’s footprint. With 145,000 GitHub stars and a star-to-deployment ratio of 1 to 2%, the range this analysis treats as typical for developer tools, a conservative estimate yields 1,450 to 2,900 active instances. At three to eight credentials per instance, the aggregate Credential Blast Radius across the Langflow install base falls between 4,350 and 23,200 exposed credentials: API keys, database passwords, and cloud tokens reachable via a single unauthenticated HTTP POST.

That is not a vulnerability count. It is a supply-chain exposure estimate. And it excludes the instances deployed inside corporate networks that never appear in Shodan scans or honeypot logs — the shadow deployments where credentials tend to carry the broadest permissions precisely because no security team scoped them.
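The aggregate estimate is plain range arithmetic, reproduced here so the inputs can be swapped for different assumptions. The star count, the 1 to 2% deployment ratio, and the three-to-eight credentials per instance are the article's figures; the function names are illustrative.

```python
STARS = 145_000
DEPLOY_PERCENT = (1, 2)      # assumed star-to-deployment ratio, in percent
CREDS_PER_INSTANCE = (3, 8)  # assumed credentials per instance


def instance_range(stars: int, percent_range: tuple) -> tuple:
    """Low and high estimates of active instances."""
    low, high = percent_range
    return stars * low // 100, stars * high // 100


def credential_range(instances: tuple, creds_range: tuple) -> tuple:
    """Low and high estimates of exposed credentials."""
    (low_i, high_i), (low_c, high_c) = instances, creds_range
    return low_i * low_c, high_i * high_c


instances = instance_range(STARS, DEPLOY_PERCENT)
credentials = credential_range(instances, CREDS_PER_INSTANCE)
print(instances)    # (1450, 2900)
print(credentials)  # (4350, 23200)
```

Both ends of the range move linearly with the deployment ratio, so the estimate is only as good as that assumption.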

Any organization running Langflow can calculate its own number in minutes: enumerate the environment variables and config files on each instance, count the distinct credentials, and multiply by the number of instances. The result is the actual blast radius of the next vulnerability — because the architectural analysis above demonstrates there will be one.
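A rough version of that per-organization count can be scripted. The sketch below inspects variable names only and never prints values; the name pattern is an assumed heuristic (it will miss unconventionally named secrets and may flag harmless names containing "KEY"), and `credential_names` and `blast_radius` are hypothetical helpers.

```python
import re
from typing import List, Mapping

# Assumed heuristic for credential-like variable names; tune to local conventions.
CREDENTIAL_NAME = re.compile(
    r"KEY|TOKEN|SECRET|PASSWORD|DATABASE_URL|CONNECTION_STRING", re.IGNORECASE
)


def credential_names(env: Mapping[str, str]) -> List[str]:
    """Names of non-empty variables that look like credentials."""
    return sorted(n for n, v in env.items() if v and CREDENTIAL_NAME.search(n))


def blast_radius(env: Mapping[str, str], instance_count: int = 1) -> int:
    """Credential-like variables on one instance times the number of instances."""
    return len(credential_names(env)) * instance_count


# Illustrative environment with placeholder values, not real data.
sample_env = {
    "OPENAI_API_KEY": "placeholder",
    "AWS_SECRET_ACCESS_KEY": "placeholder",
    "DATABASE_URL": "placeholder",
    "HOME": "/root",
    "UNUSED_TOKEN": "",  # empty values are not counted
}
print(credential_names(sample_env))
print(blast_radius(sample_env, instance_count=4))  # 12
```

On a real host, passing `dict(os.environ)` (after `import os`) in place of `sample_env` gives a first-pass count; .env and config files would need to be parsed separately.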

Not Just Fast — Structurally Certain

The operational question is not whether CVE-2026-33017 will be patched. Version 1.9.0 already exists, and CISA’s April 8 deadline will force federal agencies to apply it (CyberPress). The question is what happens between the next advisory and the next patch. The 20-hour window just demonstrated that for exec()-class vulnerabilities, the answer is: everything.

The evidence supports three conclusions.

First, the exploitation clock for AI pipeline tools now starts at disclosure, not at proof-of-concept publication. Twenty hours with no public PoC, a 26x acceleration over the 22-day industry average, means security teams cannot treat the patch-to-exploit window as a buffer. For tools built on unsandboxed interpreters, the advisory is the exploit documentation. Incident response must assume exploitation begins the moment the advisory goes public.

Second, patching endpoints on an unsandboxed exec() architecture is provably insufficient. Two CVEs through the same function via different routes in twelve months. Three CWE classifications on a single root cause. The 1/N coverage math strongly suggests every unpatched code path to exec() is the next CVE. Organizations still running Langflow after applying 1.9.0 should audit for additional unauthenticated paths to prepare_global_scope() rather than waiting for the next advisory to find them.

Third, the real exposure metric for AI pipeline compromises is the Credential Blast Radius, not the CVSS score. A CVSS 9.3 tells you to patch urgently. It does not tell you that your Langflow instance holds the AWS key to your production S3 bucket, the Anthropic key billing to your enterprise account, and the Postgres connection string to your customer database — and that all three were exfiltrated in phase three of an attack that completed before your team read the advisory. Calculate your Credential Blast Radius now, before the next disclosure starts the next 20-hour clock.
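For the audit suggested in the second conclusion, one possible starting point is a static scan for direct exec() and eval() call sites using Python's standard `ast` module. This is a sketch under stated assumptions: `find_dynamic_exec` is a hypothetical helper, it only catches direct calls by name, and it says nothing about whether a given call site is reachable without authentication.

```python
import ast
from typing import List, Tuple


def find_dynamic_exec(source: str, filename: str = "<string>") -> List[Tuple[int, str]]:
    """Return (line number, function name) for direct exec()/eval() calls."""
    tree = ast.parse(source, filename=filename)
    hits = []
    for node in ast.walk(tree):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in {"exec", "eval"}
        ):
            hits.append((node.lineno, node.func.id))
    return sorted(hits)


# Toy module standing in for real source files.
sample_source = (
    "def prepare(code):\n"
    "    scope = {}\n"
    "    exec(code, scope)\n"
    "    return scope\n"
    "\n"
    "value = eval('1 + 1')\n"
)
print(find_dynamic_exec(sample_source))  # [(3, 'exec'), (6, 'eval')]
```

Run over each `.py` file in a checkout (for example via `pathlib.Path.rglob("*.py")`), the hits form a worklist: each call site then has to be traced back by hand to the HTTP routes that can reach it.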

Critics of this framing point out that describing exec()-based execution as an irreparable architectural flaw may overstate the case: robust network segmentation, strict firewall rules permitting only authenticated internal traffic, and secrets management systems that rotate credentials automatically can meaningfully contain the Credential Blast Radius even on an unpatched instance. The strongest counterargument is that Langflow’s trilemma is not unique — Jupyter Notebook operated for years under nearly identical constraints before broad adoption of JupyterHub’s authentication layer, suggesting that operational controls can hold long enough for architectural remediation to catch up. What this view does not resolve is that those compensating controls require mature security programs precisely where Langflow’s star-to-deployment pattern indicates they are least likely to exist.

The first Langflow RCE was a warning. The second is a pattern. The architecture guarantees a third. The only variable is whether the credentials it exposes will be yours.

References

- Critical Langflow Flaw CVE-2026-33017 Exploited Within Hours — The Hacker News coverage of the 20-hour exploitation timeline and CISA KEV addition.

- Attackers Exploit Critical Langflow RCE Within 20 Hours of Disclosure — CSOnline analysis of exploitation speed vs industry median.

- Critical Flaw in Langflow AI Platform Under Attack — Dark Reading on Sysdig’s three-phase attack chain and credential harvesting.

- Langflow Code Injection Flaw — CyberPress on CISA deadline and federal remediation requirements.

- CSA Research Note: CVE-2026-33017 — Cloud Security Alliance technical analysis of the exec() root cause.