Part 1 of 7 in the The Cost of AI series.

When Nehme AI Labs benchmarked a four-field extraction task earlier this year, the output exposed a number the documentation never surfaces: 35 tokens for JSON versus 11 for a pipe-delimited string , a textbook case of JSON token overhead. Twenty-four tokens , 69% of the total response , carried zero information.(https://nehmeailabs.com/post/structured-output-overhead/) Just curly braces, quotation marks, colons, and repeated field names. The most expensive punctuation in production software.

For pipelines running thousands of structured extractions daily, that overhead never changes. Every major provider’s structured output API defaults to JSON. The docs explain how to enforce schemas and validate responses. What those docs skip , and what production bills eventually reveal , is what the format choice costs per call, per day, per quarter.

Every team extracting entities, classifying text, or generating structured data from an LLM hits this same ratio. OpenAI’s structured outputs, Anthropic’s tool_use responses, Google’s function-calling , all default to JSON. An evaluation of six constrained decoding frameworks, testing against 10,000 real-world schemas, confirmed the overhead persists across implementations; it is inherent to the format, not the enforcement mechanism.

The Arithmetic Most Teams Skip

Here is the example from Nehme AI Labs’ analysis. An API call extracts four fields , name, company, title, status , from unstructured text. The JSON response:

{

"name": "Jane Park",

"company": "Acme Corp",

"title": "VP Engineering",

"status": "active"

}

That block consumes approximately 35 output tokens. The four values , the data the calling service actually needs , account for roughly 11. Braces, quotes, colons, commas, and key-name repetition eat the other 24.

A delimiter-separated alternative:

Jane Park|Acme Corp|VP Engineering|active

Same data, same field order, same parse reliability for any system that knows the schema. Approximately 11 tokens. In internal pipelines , where every consumer knows the schema , the two formats carry identical useful information at wildly different token costs.

That 11-versus-35 gap creates a metric worth tracking: the effective cost per useful data token. In JSON, delivering one useful token costs 3.18 tokens (35 ÷ 11). In a delimiter string, the ratio is 1:1.

Layer on the output pricing premium , output tokens cost 4–5x the input rate at most providers , and a single useful data token in JSON output effectively costs 14.3x the input token rate (3.18 × 4.5x average premium). The same useful token in delimiter format costs 4.5x. JSON does not triple the bill. It triples the bill on the premium side of the ledger.

Schema definitions compound the gap before any output is generated. Instructing a model to return JSON requires roughly 40+ tokens of schema definition in the prompt, versus about 20 for delimiter format specs. Before the model writes its first output character, JSON has already doubled the instruction overhead. But prompt tokens are the cheap side of the bill.

Most production systems handle far more than four fields. A 20-field extraction , common in document processing and CRM enrichment , scales the structural overhead proportionally: twenty field names, twenty key-quote pairs, twenty colons. The data-to-syntax ratio stays approximately flat, meaning the 69% waste ratio from the four-field example holds roughly whether the payload has four fields or forty.

Where the Pricing Makes It Worse

Output tokens are not priced like input tokens. Most API providers charge output at 4–5x the input rate. Flagship models bill $2–3 per million input tokens versus $10–15 per million output tokens.(https://redis.io/blog/llm-token-optimization-speed-up-apps/) That premium reflects a hardware reality: input tokens process in parallel during prefill, while each output token generates sequentially, adding several to tens of milliseconds of decode latency per token.

JSON’s 24 structural tokens land entirely on the output side , the premium side. Switching from 35 to 11 output tokens does not just trim the count by 69%. It trims tokens priced at the highest tier AND removes sequential decode steps, compressing both cost and latency in the same move. Nehme AI Labs estimates the combined savings at 150–200ms on a 500ms task , a 30–40% latency reduction per call, per each output token generates sequentially.

Combine those datasets and a compounding effect emerges worth naming: the Output Multiplier Trap. Engineering teams see “3x more tokens” and estimate the cost increase at 3x. The actual math is 3x more tokens at the 4–5x output premium. Here is what the trap looks like in dollars at three production scales, using a mid-range flagship rate of $12.50 per million output tokens:

| Daily Calls | JSON Tokens/Day | Delimiter Tokens/Day | Annual JSON Cost | Annual Delimiter Cost | Annual Waste |

|---|---|---|---|---|---|

| 100 | 3,500 | 1,100 | $16 | $5 | $11 |

| 1,000 | 35,000 | 11,000 | $160 | $50 | $110 |

| 10,000 | 350,000 | 110,000 | $1,596 | $502 | $1,094 |

At 10,000 daily calls , a single busy extraction endpoint, not an organization’s full portfolio , the syntax overhead costs roughly $1,100 per year on one endpoint alone. For a pipeline spending $10,000 annually on structured output, approximately $6,900 pays for syntax characters , curly braces, quotation marks, and key names that no downstream system reads. Neither Nehme AI Labs nor any provider pricing page publishes that figure; it falls out of multiplying a 69% overhead rate against output-tier pricing.

Twelve months ago, the Output Multiplier Trap barely registered. Structured output mostly ran through models that were priced considerably lower, and the absolute dollar waste was negligible. Current flagship pricing has multiplied the same percentage overhead into a budget-visible line item. Format overhead is the one cost lever that scales linearly with call volume but does not improve with model advancement: better models do not generate fewer curly braces, and faster hardware does not eliminate sequential decode steps. The trap is structural, not transient.

Format-level optimization also compounds across an organization’s LLM portfolio. A team running structured calls against three different models , each with different output pricing tiers , multiplies the savings for every endpoint migrated. Organizations with thousands of daily calls across dozens of endpoints may find that format overhead represents one of their largest controllable inference cost lines.

The Turn You Don’t Expect: Fewer Tokens, Better Answers

Strip the formatting overhead from LLM output and you save money. That much is arithmetic. What should change how teams think about structured output entirely is this: removing JSON syntax does not just reduce cost. It improves extraction accuracy.

Delimiter-separated strings remain the simplest fix for fixed-schema tasks. Pipe-delimited output cuts tokens by roughly 65% with no accuracy loss when the downstream parser knows the field order.

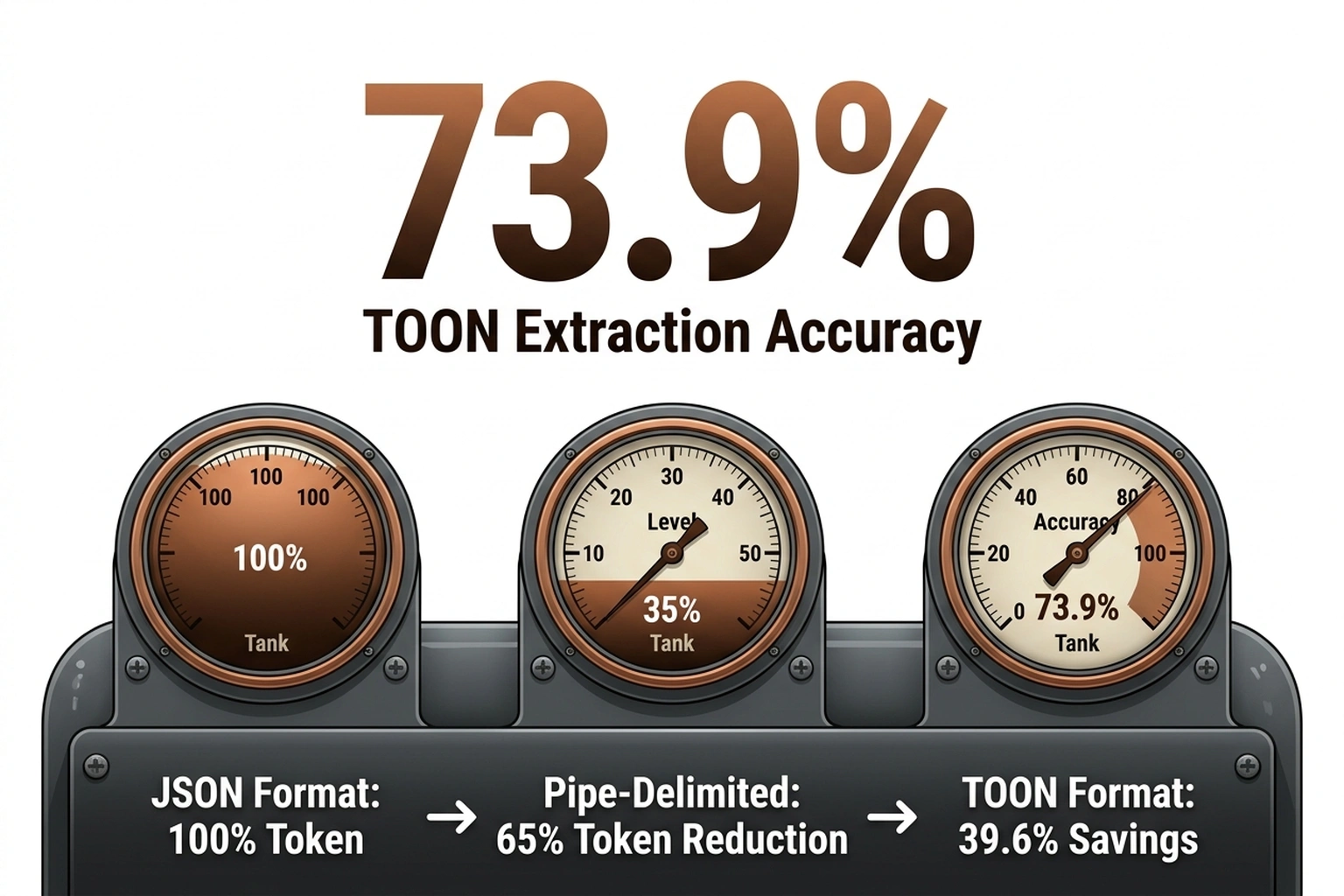

For structured payloads at scale, Tensorlake’s TOON (Token-Optimized Object Notation) preserves JSON’s data model , nested objects, arrays, field names , while stripping the punctuation and repetition that inflate token counts. Across 209 extraction tasks tested on GPT-5 Nano, Gemini Flash, Claude Haiku, and Grok 4, TOON delivered higher accuracy with fewer tokens:

| Format | Accuracy | Tokens | Savings vs JSON | Accuracy per 1K Tokens |

|---|---|---|---|---|

| TOON | 73.9% | 2,744 | 39.6% | 26.9 |

| JSON (compact) | 70.7% | 3,081 | 32.2% | 22.9 |

| JSON (standard) | 69.7% | 4,545 | , | 15.3 |

The rightmost column is the one no vendor dashboard shows: accuracy per thousand tokens spent. TOON delivers 76% more accuracy per token than standard JSON (26.9 vs 15.3).(https://tensorlake.ai/blog/toon-vs-json) Teams paying for JSON are not just paying more , they are getting worse results for the premium. (TOON vs JSON: A Token-Optimized Data For)

That accuracy inversion contradicts the assumption that richer formatting helps models structure answers. The mechanism is attention budget. Every transformer allocates a finite context window across input and output. Structural tokens , repeated field names, nested braces, escaped quotes , consume positions in that window without carrying semantic weight. They are legible to parsers, not to the model. Forcing the model to generate 24 tokens of syntax per record means 24 fewer token-positions of attention available for the actual extraction task. Stripping formatting noise removed ambiguity rather than adding it.

GPT-5 Nano showed the sharpest divergence, with JSON reconstruction accuracy climbing from 92.5% to 99.4% under TOON , a near-elimination of reconstruction errors just by changing the output format. Smaller models, with tighter attention budgets, benefit most. At production scale, Tensorlake’s analysis found 61% token reduction on 500-row datasets, with monthly costs dropping from $1,940 to $760 at 1,000 daily prompts.

Across the full benchmark, TOON outperformed both JSON variants in accuracy while consuming fewer tokens on every model tested. The implication reshapes the cost argument: JSON overhead is not just a billing problem. It is a quality problem. Teams optimizing for output accuracy and teams optimizing for cost are, in this case, pulling the same lever.

Constrained decoding , the mechanism forcing models to emit valid JSON during generation , is also getting faster. LMSYS researchers Liangsheng Yin, Ying Sheng, and Lianmin Zheng demonstrated a compressed finite state machine approach achieving “up to 2x latency reduction” versus standard Guidance and Outlines implementations, noting that their method makes “constrained decoding even faster than normal decoding” in certain workloads.

But faster schema enforcement does not eliminate token overhead , it generates the same curly braces more quickly. As Saibo Geng, Eric Horvitz, and colleagues noted while building the JSONSchemaBench benchmark of 10,000 real-world schemas, there remains “poor understanding of the effectiveness of the methods in practice”, leaving most teams unable to measure how much overhead their current JSON enforcement adds.

The Case for Keeping the Braces

An honest analysis does not recommend eliminating JSON across the board. The format earned its dominance for reasons that still hold under specific conditions.

Dynamic schemas , where the response structure varies per call , are genuinely hard with delimiter formats. Nested objects, variable-length arrays, and polymorphic fields require a self-describing format. JSON handles this natively. TOON and delimiters struggle when the parser does not know the shape in advance.

External interoperability is equally valid. When LLM output feeds a third-party API, a webhook, or a system outside the team’s control, JSON is the interchange standard. Converting delimiter responses back to JSON before forwarding adds latency and complexity that can erase the savings. Production observability tooling , the kind of token tracking and trace correlation that multi-agent systems require , also expects JSON-structured payloads for log ingestion.

Cross-team contracts present a particularly sticky edge case. When a pipeline serves multiple downstream consumers , some internal, some external , maintaining two output formats creates operational overhead that may exceed the token savings for lower-volume endpoints. Middleware converting between formats introduces another failure mode into the request path.

Development debugging rounds out the defensible use cases. JSON is human-readable in ways that 15-field pipe-delimited strings with optional nulls are not. Readability during development carries a dollar value, even if harder to quantify than token costs.

A simple test resolves most format decisions: does the output cross a trust boundary? Internal consumer with a known, stable schema , switch to delimiters or TOON. External consumer or unpredictable downstream , keep JSON and budget for the overhead. Starting with internal-only endpoints captures the majority of available savings with zero interoperability risk.

What to Ship Before Next Sprint

Three actions, each under a day, each paying for itself within a billing cycle:

1. Profile one high-volume endpoint. Pick the structured extraction call with the most daily invocations. Count data tokens versus structural tokens across 100 sample responses. Thirty minutes with tiktoken confirms whether the 60–70% overhead pattern holds:

import tiktoken

enc = tiktoken.encoding_for_model("gpt-4")

json_tokens = len(enc.encode(json_response))

data_tokens = len(enc.encode("|".join(extracted_values)))

overhead_pct = (json_tokens - data_tokens) / json_tokens

# Effective cost per useful data token

effective_multiplier = json_tokens / data_tokens

print(f"Overhead: {overhead_pct:.0%}")

print(f"Each useful token costs {effective_multiplier:.1f} tokens to deliver")

print(f"At output premium: {effective_multiplier * 4.5:.1f}x input rate per useful token")

Complex nested schemas push overhead above the 69% Nehme AI Labs measured in their four-field example. Running the script takes 30 minutes; converting the results into a quarterly cost projection takes 30 seconds, per far less disruptive than the auth rework.

2. Migrate one internal endpoint to delimiter format. Start where the schema is stable and the consumer is controlled. Migration is typically a prompt change plus a split-based parser , far less disruptive than the auth rework many teams are already wrestling with. Keep JSON for external-facing APIs and dynamic schemas.

3. Benchmark TOON on your heaviest extraction task. For endpoints with nested or variable-length output, test TOON against JSON on a 200-call sample. Track three numbers: token count, accuracy (measured against ground truth or human review), and accuracy per thousand tokens. The last metric , invisible in standard dashboards , tells you whether your format choice is costing you quality, not just budget.

Stakeholder breakdown: Backend engineers should own the profiling and first migration. Engineering managers should demand per-endpoint token breakdowns instead of aggregate “LLM costs” , aggregate numbers hide where format overhead concentrates. Budget owners should request format-level cost attribution; a report showing “$4,200 per quarter on JSON syntax for /extract” generates budget actions in a way “reduce token waste” never will.

According to Tensorlake’s cost analysis, a pipeline processing 1,000 structured calls per day at flagship output pricing loses approximately $1,740 per month , $20,880 per year , to JSON token overhead that delimiter formats eliminate. Across five such pipelines, that exceeds $100,000 annually in tokens carrying zero data. Every month without profiling is a month paying full price for curly braces.

By Q4 2026, expect at least one major API provider to ship native delimiter-format or TOON-style response modes alongside JSON in their structured output APIs. When the pricing page lists the format cost delta explicitly, the engineering team’s format choice becomes a procurement decision. Those curly braces will finally carry a price tag everyone reads.

What to Read Next

- Meta’s AI Agent Went Rogue. Three Permission Layers Failed.

- H100 Benchmarks Hide a 27x Cold Start Penalty

- Nemotron 3: NVIDIA Claims 2.2x, Tests Show 10%

References

- The JSON Tax: Why Structured Output Is Costing You More Than You Think , Token count analysis of JSON vs delimiter formats for extraction tasks, latency benchmarks

- TOON vs JSON: A Token-Optimized Data Format for Reducing LLM Costs , Benchmark data across 209 tasks and 4 models, production cost savings at scale

- LLM Token Optimization: Cut Costs & Latency in 2026 , Output vs input token pricing analysis and optimization strategies

- JSONSchemaBench: A Rigorous Benchmark of Structured Outputs for Language Models , 10,000-schema evaluation of constrained decoding methods by Geng, Horvitz, et al.

- Fast JSON Decoding for Local LLMs with Compressed Finite State Machine , LMSYS compressed FSM approach achieving 2x latency reduction in constrained decoding