Affiliate Disclosure: This article contains affiliate links. We may earn a commission if you purchase through these links, at no additional cost to you. This helps us continue publishing free content. See our full disclosure.

Part 2 of 6 in the Benchmark Reality Checks series.

A 14-billion-parameter video model has no business running on a $400 graphics card. Yet Helios—released March 4 under Apache 2.0 by Peking University, ByteDance, and Canva—does exactly that, achieving real-time AI video generation through an autoregressive streaming architecture that keeps memory demands flat regardless of clip length. The model that datacenter GPUs were supposed to gatekeep now runs on a desktop workstation, and the economics of cloud video generation crack open with it.

The numbers get stranger. Helios is larger than every competing open video model, yet its distilled variant generates video faster than architectures a seventh its size. On quality benchmarks, it scores 6.94 for long videos, topping the leaderboard. The same architectural choice that explains both anomalies—streaming generation with constant memory cost—is the one that cracks open cloud video pricing. A $400 GPU pays for itself after roughly 200–300 uses.

How a 14B Architecture Outruns Models a Seventh Its Size

Conventional wisdom says bigger models need bigger hardware. Helios inverts this through hierarchical memory compression: historical video context gets reduced by 8x in token count, with multi-stage sampling adding a further 2.29x compression. Multiply those together and the total compression reaches 18.32x—meaning a 14-billion-parameter model’s per-frame compute drops to the equivalent of roughly 764 million parameters, based on the calculations in this analysis. The paper conservatively describes this as comparable to a 1.3B architecture; the raw compression math suggests the effective cost may be even lower. Either way, it explains the seemingly impossible speed numbers.

H100 GPUs run $25,000 apiece—the price of 62 RTX 4060 Ti cards. That cost barrier has kept large-scale video generation locked behind corporate budgets, with cloud inference adding per-generation fees of $2–8. For teams that need H100-class throughput without the capital outlay, cloud GPU platforms like RunPod rent individual H100s by the hour — collapsing the $25,000 barrier to a pay-per-session cost. Yet Helios hits 19.5 FPS on a single H100 with minimal VRAM through group offloading. On a consumer RTX 4060 Ti, Bonega.ai notes closer to 2–3 FPS rather than real-time speed—but a 14B model running at all on a sub-$500 card is the headline, not the frame rate.

On closer inspection, the fleet math sharpens the point. That same $25,000 buys 62 consumer GPUs. At 2.5 FPS each—the midpoint of Bonega.ai’s range—a distributed consumer fleet produces roughly 155 FPS aggregate, against 19.5 FPS for a single H100. Eight times the throughput for the same capital outlay. Datacenter GPUs still win on per-card density, power efficiency, and orchestration simplicity, but the evidence suggests the raw price-performance ratio has inverted for parallelizable workloads.

For comparison: SANA Video Long, at 2B parameters, manages 13.24 FPS. Helios-Distilled—larger even than CogVideoX-13B—clocks 19.53. A model seven times the size, running nearly 50% faster.

More strikingly, the team achieved this “without standard acceleration techniques such as KV-cache, sparse/linear attention, or quantization,” as lead researcher Li Yuan and colleagues at Peking University write in the paper. Speed comes from fundamental architecture, not engineering shortcuts that sacrifice flexibility. For a field accustomed to trading capability for performance, that distinction matters—it means the efficiency isn’t a ceiling but a floor.

Diffusion-based video models denoise entire temporal sequences simultaneously, holding every frame in memory during each pass. VRAM scales with clip duration. Helios generates frame by frame, so peak memory becomes a function of per-frame state, not video length: a five-second clip and a sixty-second clip demand the same ~6GB with group offloading.

That constant memory footprint is what makes real-time AI video on consumer hardware architecturally possible—and everything else depends on it.

On quality, Helios scored 6.94 on VBench long-video evaluation, topping architectures that required orders of magnitude more compute, while a 200-participant user study validated the metrics. Helios-Distilled trades some of that fidelity for speed—reaching 19.53 FPS—which raises the real question: how much are you paying for quality your workflow doesn’t need?

The Turn: When Constant Memory Meets Per-Generation Pricing

Here is where the architecture stops being a benchmark story and becomes an economic one.

Cloud APIs charge $2–8 per generation. For diffusion architectures, those fees tracked genuine resource consumption—more frames meant more VRAM, more compute, more cost. The price reflected the physics. Autoregressive streaming shatters that alignment. If peak memory stays constant regardless of clip length—if a sixty-second video costs the same compute as a five-second one—then per-generation pricing no longer maps to the resources consumed.

Run the margin math. Local generation costs $0.01 in electricity per clip. Cloud services charge $2–8. That suggests 99.5% to 99.8% of the cloud fee is not compute—it’s infrastructure amortization, margin, and overhead.

When diffusion demanded expensive VRAM scaling, that overhead was invisible, folded into genuinely high resource costs. Autoregressive streaming makes the gap legible. The price didn’t change. The cost did.

This analysis terms this the Duration Tax—the artificial ceiling cloud APIs impose on video length because per-generation pricing makes longer clips economically unfavorable for providers. Providers cap duration and impose rate limits because longer output erodes margins, creating what Bonega.ai’s analysis describes as “hesitation to experiment”—a behavioral tax layered on top of the financial one. The Helios paper’s streaming architecture and Bonega.ai’s cost breakdown together make the tax visible. Local autoregressive models eliminate it entirely.

From a practical standpoint, what this analysis terms the Duration Tax scales with ambition. Here is what it costs at three usage tiers:

| Usage Tier | Monthly Volume | Annual Cloud Cost ($4 avg) | Annual Local Cost ($0.01/clip) | Duration Tax Paid |

|---|---|---|---|---|

| Freelancer | 50 clips/mo | $2,400 | $6 | $2,394 |

| Mid-size studio | 200 clips/mo | $9,600 | $24 | $9,576 |

| Production house | 1,000 clips/mo | $48,000 | $120 | $47,880 |

At every tier, local hardware running Helios captures over 99% of the cloud spend as savings. The freelancer recoups a $400 GPU in two months. The production house pays a Duration Tax equivalent to two full-time junior salaries—for compute that costs $10 per month in electricity.

Cloud providers aren’t being stubborn—they’re being rational. Billions invested in datacenter hardware optimized for batch diffusion. Per-generation pricing that amortizes that capital expenditure. Switching to streaming-friendly pricing doesn’t just change the product—it writes down the infrastructure.

Not a market failure—an infrastructure lock-in.

Duration Tax operates on two levels. The line item you can see: cloud services charge more for longer clips, making sixty-second iterations Helios handles for pennies prohibitively expensive. The one you can’t: rate limits and latency throttle creative iteration itself, imposing a cost measured not in dollars but in ideas never tested. Locally deployed, Helios offers zero per-generation cost, full privacy, and no rate limits.

Where the Breakeven Understates the Case

Bonega.ai’s headline math is straightforward: $400 / ~$2 per cloud generation ≈ 200–300 generations to break even. For a studio running daily iterations, roughly one month. But that calculation assumes static pricing and fixed video length—two assumptions what this analysis terms the Duration Tax has already undermined. Cloud costs scale with ambition. Longer clips, higher resolution, more iterations all push the per-generation fee upward. Local hardware costs stay flat.

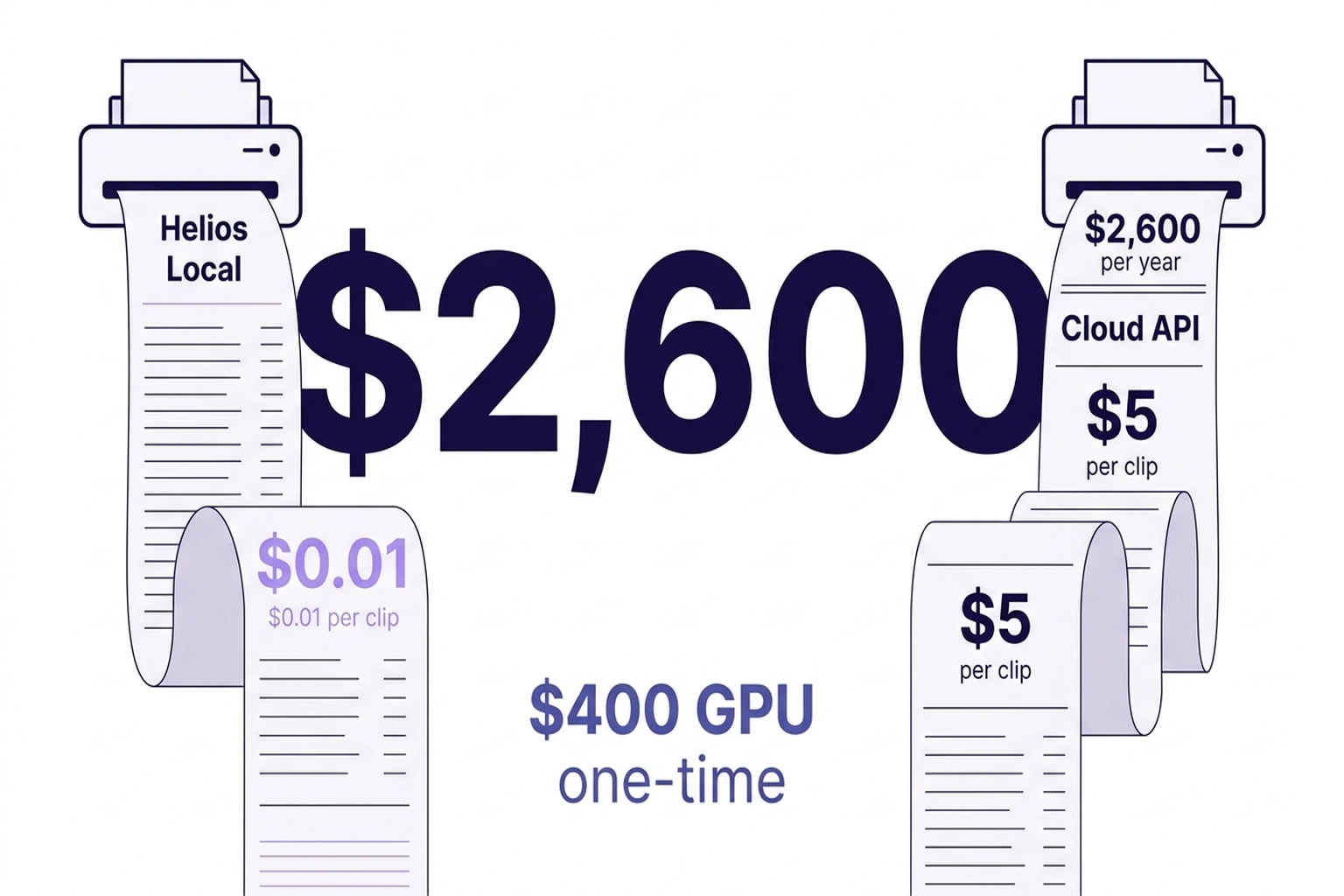

A studio generating 10 one-minute clips per week at $5 average cloud cost spends $2,600 annually. Same workflow on an RTX 4060 Ti: 10 × $0.01 = $5.20 per year. Net annual savings: $2,594.80—a figure neither source calculated, and one that makes the 200-generation breakeven look quaint.

| Metric | Cloud API ($5/gen) | Helios Local ($0.01/gen) | Delta |

|---|---|---|---|

| 10 clips/week × 52 weeks | $2,600/year | $5.20/year | $2,595 saved |

| Iteration limit | Rate-limited (10-50/day) | Unlimited | Unlimited |

| Privacy | Data sent to third party | Fully local | Local wins |

| Hardware cost | $0 (API) | $400 one-time | Pays back in 1 month |

| 60-second clips | $15-25 each | $0.01 each | 1,500-2,500x cheaper |

| Resolution ceiling | Up to 1080p | 384×640 (current) | Cloud wins (for now) |

The deeper disruption isn’t the savings — it’s the constraints that vanish. A unified architecture handles text-to-video, image-to-video, and video-to-video, switching based on input context with no per-mode charge. On cloud APIs, each mode typically incurs separate fees. Locally, mode-switching costs nothing. A similar pattern of hidden architectural overhead surfaces whenever pricing models lag behind the architectures they were designed around.

Second-order effect: when per-generation cost drops to functionally zero, iteration volume increases. Teams generating 10x more variants outcompete those rationing cloud credits—not because their talent is superior, but because they iterate faster.

Bonega.ai frames this primarily as a savings story—“zero per-generation cost, full privacy, and no rate limits”. But this overlooks what The Decoder’s coverage documents: at 384×640 resolution with visible flicker artifacts, production workflows requiring broadcast quality will need paid upscaling or post-processing—partially reinstating the cloud overhead Bonega.ai’s math assumes away. The savings are real, but the headline breakeven figure assumes output quality the architecture does not yet deliver for all use cases.

That said, the framing understates what the data reveals in the opposite direction. Cost analysis assumes the cloud pricing model survives contact with the architecture that made it unnecessary. A more precise reading: what this analysis terms the Duration Tax isn’t a line item to optimize. It’s a business model whose architectural foundation just disappeared.

But business model obsolescence only matters if the replacement produces output people actually want.

What the Quality Benchmarks Actually Show

Resolution is the honest weakness. Helios generates at 384×640 pixels with flicker artifacts at segment transitions. For pixel-level post-production, this is disqualifying. Any VFX supervisor requiring broadcast-ready output will correctly note that full-sequence bidirectional attention—the diffusion approach Helios abandons—enables higher per-frame fidelity by processing every frame in context with every other.

That objection is valid and too narrow.

Resolution and flicker matter for final delivery. For pre-visualization, storyboarding, client pitches, and social media—workflows consuming the majority of generation volume—the quality bar sits lower. A 200-participant user study rated Helios competitive with higher-fidelity models in exactly these scenarios. ByteDance appears to recognize the production gap: the project is designated strictly for research, with no planned commercial integration. But Apache 2.0 licensing means the community—not ByteDance’s product roadmap—will determine where quality goes from here.

One limitation deserves explicit flagging: Helios’s quality benchmarks come from the research team’s own VBench evaluation. Independent production-scale testing has not been published—a familiar gap for anyone tracking how benchmark claims diverge from real-world performance. Until third-party validation arrives, the quality figures carry the caveat of self-reported data.

Looking at the data, a practical split is emerging: diffusion models for final-frame fidelity where budgets support it, autoregressive streaming for the volume-driven iteration work that precedes it. Helios doesn’t need to replace cloud diffusion to disrupt it. It needs to capture the 80% of generation volume where broadcast quality was never the requirement—the drafts, the variants, the what-ifs that were never generated because each one cost $5 and took minutes.

That volume is where what this analysis terms the Duration Tax extracted its real toll. Not from the clips studios paid to generate, but from the ones they decided weren’t worth the price.

Three Levers Worth Modeling

For any team evaluating whether what this analysis terms the Duration Tax applies, the arithmetic reduces to one formula:

Duration Tax Rate = (Avg. cloud cost per generation × monthly generations) / hardware cost

A result above 1.0 means the $400 investment pays for itself within one month. Below 0.15, cloud APIs remain more practical—setup overhead outweighs savings for occasional users.

| Stakeholder | Duration Tax Impact | Verdict |

|---|---|---|

| Indie creators (50+ gens/month) | ~$100–400/month in cloud fees eliminated | Deploy now; breakeven under 4 months |

| Small studios (200+ gens/month) | ~$400–1,600/month in savings | Dedicate an RTX 4060 Ti workstation |

| Enterprise VFX (final delivery) | Quality gap at 384×640 prohibitive | Wait for resolution scaling updates |

For CTOs: the strategic question isn’t whether to adopt Helios today—it’s whether to build internal GPU infrastructure now or risk vendor lock-in as cloud providers adjust pricing to protect margins the architecture no longer justifies. For security teams: local deployment eliminates data exposure to third-party APIs but introduces model supply-chain risk—verify checksums against the official repository before deployment and audit Apache 2.0 dependencies.

Every additional second of real-time AI video widens the gap along precisely the dimension cloud pricing structurally cannot match. The arithmetic is settled. The question remaining is whether deployment complexity erodes the savings—or whether three terminal commands are really all it takes.

How to Deploy Helios on a $400 GPU

Getting started requires three commands and a GPU with 6GB of free VRAM:

git clone --depth=1 https://github.com/PKU-YuanGroup/Helios.git && cd Helios

conda create -n helios python=3.11.2 && conda activate helios

python infer_helios.py --base_model_path "BestWishYsh/Helios-Distilled" \

--sample_type "t2v" --prompt "YOUR_PROMPT" --num_frames 240 \

--enable_low_vram_mode --group_offloading_type "leaf_level"

Step 1: Verify your hardware. Any NVIDIA GPU with 6GB+ VRAM works—RTX 4060 Ti, RTX 3060 12GB, or higher. Run nvidia-smi to confirm available memory. Consumer cards generate at 2–3 FPS rather than the H100’s 19.5 FPS, but per-frame cost remains ~$0.01 regardless of speed.

Step 2: Choose your model variant. Helios-Distilled prioritizes speed (19.53 FPS on H100). The base Helios model offers higher fidelity at lower throughput. For iteration-heavy workflows—storyboarding, client previews—start with Distilled. Switch --base_model_path to "BestWishYsh/Helios" for the full model.

Step 3: Pick your generation mode. The unified architecture handles text-to-video, image-to-video, and video-to-video by changing the --sample_type flag (t2v, i2v, v2v). On cloud APIs, each mode typically incurs separate fees. Locally, mode-switching costs nothing.

Step 4: Scale duration freely. Adjust --num_frames without worrying about cost. A five-second clip and a sixty-second clip demand the same ~6GB VRAM. This is what this analysis terms the Duration Tax in reverse: the constraint cloud pricing imposes on video length simply does not exist on local hardware.

Twelve months ago, the relevant question was “which cloud API produces the best output?” Bonega.ai’s cost analysis hasn’t changed the arithmetic: 200–300 generations, and the $400 GPU has already paid for itself. With the Helios repository already listing experimental interactive control among its supported modes and the codebase open under Apache 2.0 for community extension, the question shifts. Not which cloud provider offers the best output—but why any provider still charges per generation for real-time AI that costs $0.01 in electricity. Every model released after Helios will have to answer that.

What to Read Next

- TurboQuant’s 6x Compression Creates More GPU Demand

- GPT-5.4 Mini vs Nano: Small Model Costs Hide a 33-Point Cliff

- Qwen 3.5 Benchmark Win Hides a 15th-Place User Verdict

References

- Helios: Real Real-Time Long Video Generation Model — Primary research paper from Peking University, ByteDance, and Canva detailing 14B autoregressive streaming architecture and benchmark results.

- Helios: 14B real-time AI on Consumer Hardware — Cost analysis comparing cloud API pricing against local generation economics on consumer GPUs.

- ByteDance’s Helios Brings Minute-Long AI Video Close to Real-Time — Independent reporting on VBench scores, FPS benchmarks, and future development roadmap.

- PKU-YuanGroup/Helios GitHub Repository — Installation instructions, hardware requirements, VRAM specifications, and inference scripts.

- Helios Technical Analysis — Community commentary on architectural innovations, computational cost comparisons, and market implications.