
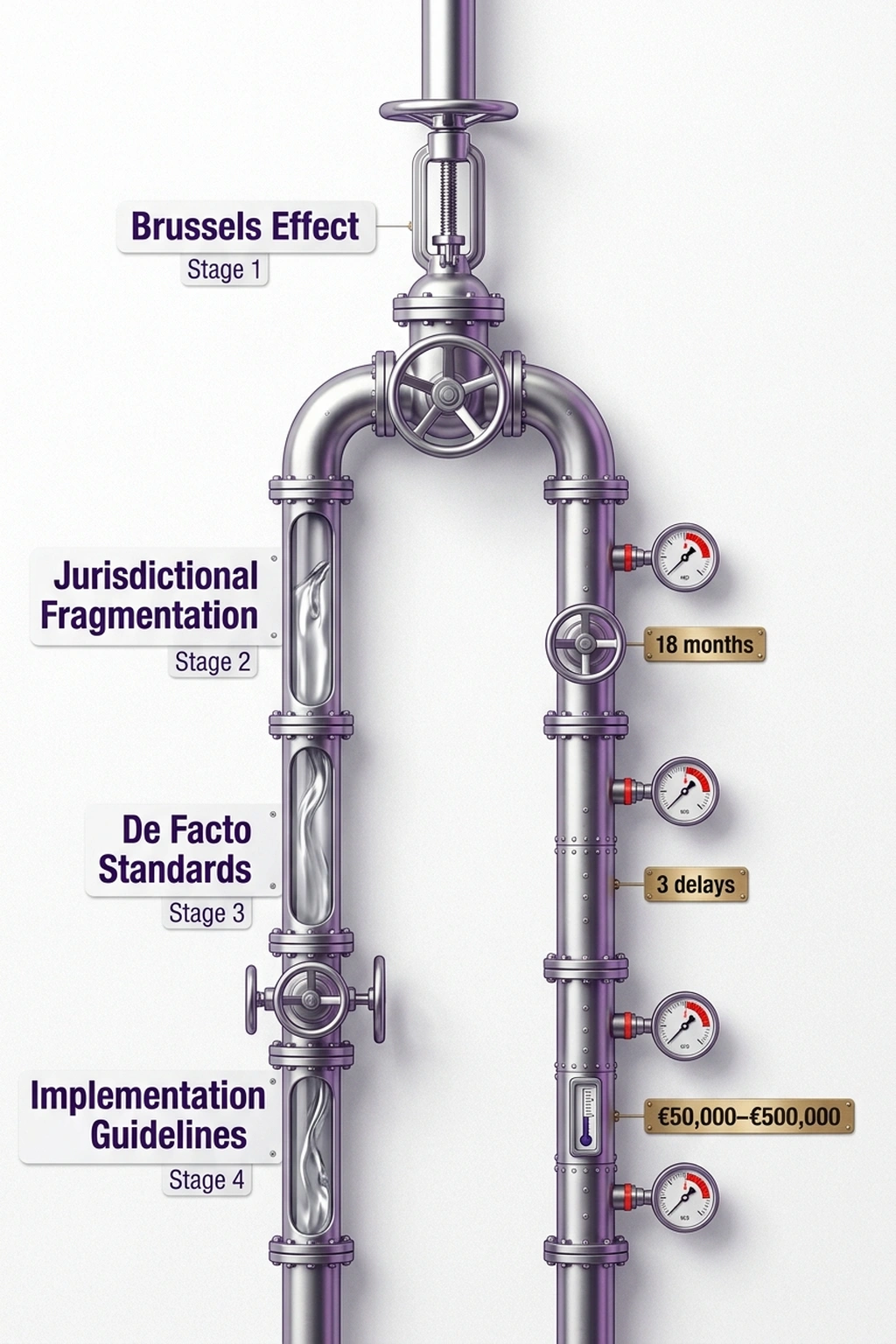

March 27, 2026. The European Parliament’s committee vote on the AI Act implementation timeline — already postponed once — gets delayed again, pushing key provisions to Q3 2026 (Computerworld). This marks the third significant delay in 18 months for a regulation originally billed as the world’s gold standard for AI governance. Meanwhile, companies across Europe have spent between €50,000 and €500,000 building compliance frameworks around timelines that no longer exist.

The EU AI Act’s 2026 compliance deadlines were supposed to bring certainty. Instead, they’ve become a moving target that punishes early movers, the very organizations that took regulation seriously. Compliance teams built risk classifications for AI systems as they existed in 2024. Agentic AI, autonomous tool use, and multi-step decision chains have since rendered those classifications incomplete at best, irrelevant at worst.

“California essentially wants to set a benchmark for de facto AI standards when it comes to procurement, safety, and ethics,” noted Neil Shah, signaling that the regulatory center of gravity may already be shifting away from Brussels (Computerworld). California’s governor signed new AI guardrails via executive order on March 31, 2026, moving while the EU deliberates (CBS News). Europe didn’t just lose time. It lost the initiative.

Technology-Neutral by Design, Obsolete by Publication

The EU AI Act’s 2026 compliance deadlines belong to a governance mechanism that was designed to be technology-neutral and future-proof. Yet three delays in 18 months suggest its framework cannot classify AI systems that didn’t exist when the text was drafted, specifically agentic AI that chains decisions across time in ways no risk category captures.

Consider what happened between the Act’s initial drafting and today. The risk taxonomy (unacceptable, high, limited, minimal) assumes a model receives input and produces output. That describes GPT-4. It does not describe an agent that calls a database, decides to escalate, drafts an email, and executes a financial transaction in a five-step chain where the vulnerability lives between steps three and four. The Act has no category for that. Regulators acknowledge the definitional gaps, but closing a gap, unlike acknowledging it, requires the same committee process that has produced three delays.

An April 1, 2026 analysis from the European Parliament’s own think tank examined regulatory sandboxes and implementation challenges (EP Think Tank). It was published five days after the latest delay, and the timing speaks volumes. The body charged with implementation guidance is still researching how to implement while companies wait for answers.

What Delays and Executive Action Reveal: The Moving Finish Line

The European Parliament’s delays and California’s simultaneous executive action reveal what this analysis terms the Moving Finish Line: a structural failure in which regulation-by-committee produces rules for a technology’s past state while the technology reinvents itself every six months, making compliance spending a recurring sunk cost rather than an investment.

California provides the contrast that exposes the structural problem. Governor Newsom’s executive order, signed March 31, 2026, imposes new AI regulations on businesses operating in the state (CBS News). The state separately moved to bar AI vendors that cannot prove bias safeguards, requiring vendors to submit audited documentation of bias testing, validation methodologies, and demographic performance metrics across protected categories — effectively establishing a procurement standard that functions as de facto regulation for any company selling to California government agencies (Computerworld). From proposal to executive action: weeks. From EU AI Act passage to enforcement: years, and counting.

Original calculation: A company that spent €250,000 (a round figure just below the €275,000 midpoint of the €50K–€500K range) on compliance frameworks tied to the original EU timeline has now incurred that cost with zero regulatory benefit. If enforcement begins in Q3 2026 and requires reclassification for agentic AI systems, that company faces an additional revision cycle estimated at 40–60% of the original spend, roughly €100,000–€150,000, to update risk assessments for technology the original framework never anticipated. The total: €350,000–€400,000 spent complying with provisions that were not yet in force, for systems that no longer match the taxonomy.
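The arithmetic above can be reproduced with a short sketch. The 40–60% revision rate is the estimate stated in this analysis. The function name `revision_exposure` and its integer-percentage parameters are illustrative assumptions, not an established costing method.

```python
def revision_exposure(original_spend_eur, low_pct=40, high_pct=60):
    """Estimate revision cost and total spend in whole euros.

    Assumes the revision cycle costs between low_pct and high_pct
    of the original compliance spend (the estimate used in the text).
    Returns (revision_low, revision_high, total_low, total_high).
    """
    revision_low = original_spend_eur * low_pct // 100
    revision_high = original_spend_eur * high_pct // 100
    return (
        revision_low,
        revision_high,
        original_spend_eur + revision_low,
        original_spend_eur + revision_high,
    )


if __name__ == "__main__":
    # Low end, the worked figure, and high end of the reported spend range.
    for spend in (50_000, 250_000, 500_000):
        rev_lo, rev_hi, tot_lo, tot_hi = revision_exposure(spend)
        print(f"spend €{spend:,}: revision €{rev_lo:,}-€{rev_hi:,}, "
              f"total €{tot_lo:,}-€{tot_hi:,}")
```

For the €250,000 case this yields a revision cost of €100,000–€150,000 and a total of €350,000–€400,000, matching the figures in the text. Running it across the full range shows how the exposure scales with the original spend.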

Compounding matters, as covered in “19 Countries, €35M in Fines, Zero AI Act Regulators,” enforcement infrastructure remains absent even where the rules technically apply. Companies aren’t just waiting for the timeline. They’re waiting for the regulators who will interpret it.

Why “Delays Produce Better Rules” Gets It Wrong

Critics of this view will argue the delays reflect responsible governance — taking time to get the implementation right rather than rushing flawed provisions into force. Proponents argue that a well-crafted regulation arriving late beats a sloppy one arriving on time. The EU’s influence operates through the “Brussels Effect”: once Europe sets a standard, global companies adopt it rather than maintain separate compliance regimes. Under this view, the delays are a feature, not a bug.

However, this argument fails on two counts. First, the Brussels Effect requires the rules to actually exist and be enforceable. As an analysis of the federal push to override state AI regulation shows (JD Supra), jurisdictional fragmentation in the United States is already producing its own de facto standards, and those standards are being adopted faster than the EU can finalize its implementation guidelines. Second, the “better late than flawed” framing ignores that the Act is already flawed in ways the delays don’t fix. The risk taxonomy was written for a generation of AI that is no longer current. More deliberation time doesn’t solve a structural definitional problem. It just gives committees more time to argue about definitions that were outdated before the arguments started.

California’s speed isn’t accidental. Executive orders bypass the legislative consensus process that bogs down supranational bodies. That’s a trade-off: less democratic input, faster execution. But for companies operating in both jurisdictions, the practical reality is clear: California’s rules will shape behavior years before the EU’s do, as explored in “Trump’s 4-Page AI Framework Kills 131 State Protections.” The Brussels Effect assumes Europe moves first. It no longer does.

Build for Patterns, Not Dates: A Governance Blueprint

For compliance leaders navigating the EU AI Act’s 2026 compliance deadlines, the strategic implication is straightforward. Stop building compliance around specific timelines and instead build around the pattern. Any regulation targeting AI capabilities will arrive at least one capability generation late. Design governance that adapts faster than the committees writing the rules.

This means: classify AI systems by behavior — what the system actually does with data and decisions — not by the Act’s risk categories, which describe what systems did in 2023. Map decision trajectories for any agentic system your organization deploys. Track which tool calls chain together and where authorization escalation occurs. That mapping is jurisdiction-agnostic; it serves compliance whether the final rules come from Brussels, Sacramento, or somewhere that hasn’t acted yet.
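One way to record such a mapping is sketched below: a decision trajectory as an ordered list of steps, each tagged with the authorization it needs, plus a helper that flags every point where the required authorization rises. The `Step` dataclass, the tool names, and the integer authorization scale are all hypothetical and chosen for illustration. They do not come from the Act or from any agent framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    tool: str        # hypothetical tool name
    action: str      # what the agent does with the tool
    auth_level: int  # assumed scale: 0 = read-only, higher = more privileged


def escalation_points(trajectory):
    """Return (index, previous_step, current_step) for each step whose
    required authorization exceeds that of the step immediately before it."""
    points = []
    for i in range(1, len(trajectory)):
        prev, cur = trajectory[i - 1], trajectory[i]
        if cur.auth_level > prev.auth_level:
            points.append((i, prev, cur))
    return points


if __name__ == "__main__":
    # Illustrative chain loosely following the agent example earlier in the text.
    chain = [
        Step("database", "query customer record", 0),
        Step("planner", "decide to escalate", 0),
        Step("email", "draft notification", 1),
        Step("payments", "execute transaction", 2),
    ]
    for index, prev, cur in escalation_points(chain):
        print(f"step {index}: {prev.tool} (level {prev.auth_level}) -> "
              f"{cur.tool} (level {cur.auth_level})")
```

Because the record describes behavior rather than a legal category, the same trajectory data could later be mapped onto whichever risk classification a given jurisdiction settles on.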

For organizations subject to DORA and financial-sector AI requirements, the parallel is instructive. DORA’s framework applies to digital operational resilience regardless of the specific technology, which makes it more durable than capability-specific rules. Build AI governance the same way: outcome-oriented, not taxonomy-dependent.

Here is the uncomfortable math. At three delays and counting, the EU has spent approximately 18 months not enforcing a regulation that was supposed to position Europe as the global leader in responsible AI. In that same window, California moved from proposal to executive order in weeks. The lesson isn’t that regulation is futile — it’s that the committee process itself has become the vulnerability. If a compliance strategy requires waiting for Brussels to finish deliberating, that strategy manages no risk. It outsources timelines to a process that has missed every deadline it set for itself. Build for the pattern, not the date.

Prediction: By Q4 2026, at least two EU member states will likely adopt national AI governance frameworks that conflict with the delayed AI Act provisions, forcing the Commission into reactive harmonization rather than proactive standard-setting. By the time the Act is enforced, national regulators will have already filled the vacuum.

References

- European Parliament votes to delay EU AI Act implementation — Computerworld

- California imposes new AI regulations on businesses — CBS News

- California to bar AI vendors that can’t prove bias safeguards — Computerworld

- AI regulatory sandboxes: State of play and implementation challenges — EP Think Tank

- Examining the Market and Limitations of the Federal Push to Override State AI Regulation — JD Supra

- How Trump’s AI plan to override state laws could undercut key safeguards — Fast Company

- Regulatory framework for artificial intelligence — European Commission