Photographer Robert Kneschke discovered his stock photos in the LAION-5B dataset. He sued LAION in Hamburg and lost. Elsewhere, Disney’s lawyers sat in a conference room with an AI company’s general counsel, negotiating a licensing deal worth millions for the same category of use that deposited Kneschke’s work into a training corpus without consent. The contrast is not accidental.

The RIAA, the NMPA, and six major music industry organizations filed an amicus brief calling Anthropic’s unlicensed copying of lyrics “inexcusable” (Music Business Worldwide). A separate lawsuit covers thousands of songs and seeks billions in statutory damages. Yet the docket of litigation over AI training practices tells only half the story. In private boardrooms, big rightsholders get checks while individual creators get legal briefs arguing their work needs no compensation at all.

According to TechCrunch, Patreon CEO Jack Conte called AI companies’ fair use argument “bogus” at SXSW and said creators should be paid. The question hangs in the air like a subpoena: if it’s legal to just use it, why pay?

The Claim: Fair Use Is a Timing Strategy, Not a Legal Defense

AI companies are not arguing fair use because they believe they will win. They are arguing fair use because the three to five years a case takes to reach trial is three to five years of free training data, inflated valuations, and exhausted opponents. Licensing deals with Disney, Condé Nast, and Warner Music prove the companies know the doctrine will not hold. They are buying off the rightsholders who could force injunctions while leaving individual creators too broke to fight.

Call it the settlement arbitrage: settle with the powerful, litigate against the rest, and never let a judge write the ruling that settles the question for everyone.

The Evidence: Follow the Money, Then Follow the Silence

The RIAA’s amicus brief specifies statutory damages of $150,000 per work infringed (Music Business Worldwide), the statutory maximum for willful infringement. Multiplied across the 20,000+ songs at issue in the Anthropic litigation, the exposure reaches $3 billion. That number is legible, concrete, and designed for a judge’s arithmetic.

Now consider the text liability. Meta downloaded approximately 80TB of pirated books via torrent for AI training, according to court filings (Ars Technica). Eighty terabytes of books is roughly 60 million individual works, assuming an average file size of about 1.3 megabytes. If courts apply the same $150,000-per-work statutory framework to text that the RIAA demands for music, the theoretical liability for books reaches $9 trillion. That figure exceeds the combined market capitalization of every major AI company.

Here is what that $9 trillion figure actually means in context: Meta’s current market capitalization sits near $1.4 trillion. OpenAI’s last private valuation reached $300 billion. Google, Microsoft, and Amazon combined add roughly $7 trillion in market cap. The entire AI-adjacent Big Tech complex, valued generously, approaches $9 trillion, which is precisely the ceiling of theoretical statutory exposure from Meta’s book downloads alone, before a single image, song, or article gets counted. The combined valuation of the industry is approximately equal to one defendant’s text liability. That is not a negotiating position. It is an extinction-level number, which is exactly why no AI company will allow a judge to confirm it.
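The exposure arithmetic in the preceding paragraphs can be checked in a few lines. This is a minimal sketch: the work counts (20,000 songs, 60 million books) and the valuations are the figures quoted above, not independent data, and the per-work bands come from 17 U.S.C. § 504(c), which sets statutory damages at $750 to $30,000 per work, rising to as much as $150,000 for willful infringement. The constant and function names are illustrative.

```python
# Statutory damages bands per work under 17 U.S.C. § 504(c), in US dollars.
STATUTORY_MIN = 750
ORDINARY_MAX = 30_000
WILLFUL_MAX = 150_000

# Valuations quoted in the text, in billions of US dollars (time-sensitive, illustrative).
VALUATIONS_BN = {
    "Meta": 1_400,
    "OpenAI": 300,
    "Google + Microsoft + Amazon": 7_000,
}


def exposure(works: int, per_work: int) -> int:
    """Theoretical statutory exposure if every work receives the same per-work award."""
    return works * per_work


def to_billions(dollars: int) -> float:
    """Convert a dollar amount to billions of dollars."""
    return dollars / 1_000_000_000


if __name__ == "__main__":
    music = exposure(20_000, WILLFUL_MAX)               # songs at issue in the Anthropic case
    books_willful = exposure(60_000_000, WILLFUL_MAX)   # the article's estimate for 80TB of books
    books_floor = exposure(60_000_000, STATUTORY_MIN)   # same works at the statutory minimum
    combined = sum(VALUATIONS_BN.values())

    print(f"Music, willful maximum: ${to_billions(music):,.0f}B")
    print(f"Books, willful maximum: ${to_billions(books_willful):,.0f}B")
    print(f"Books, statutory floor: ${to_billions(books_floor):,.0f}B")
    print(f"Combined valuations:    ${combined:,}B")
    print(f"Willful exposure / valuations: {to_billions(books_willful) / combined:.2f}x")
```

At the willful maximum the book figure edges past the combined valuations; at the $750 statutory minimum it drops to $45 billion, so the per-work rate a court chooses matters as much as the count of works.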

Music cases are not the main event. They are the cheapest category to settle before a judge does the math on everything else.

Meanwhile, Deezer receives 60,000 fully AI-generated tracks per day, and 39% of all music delivered to the platform is synthetic (Music Business Worldwide). The scale of ingestion makes individual negotiation impossible by design. When a system processes millions of works, paying each creator becomes structurally infeasible. That infeasibility is not a bug. It is the legal strategy.

The Economic Proof: Why a Believer Would Never Settle

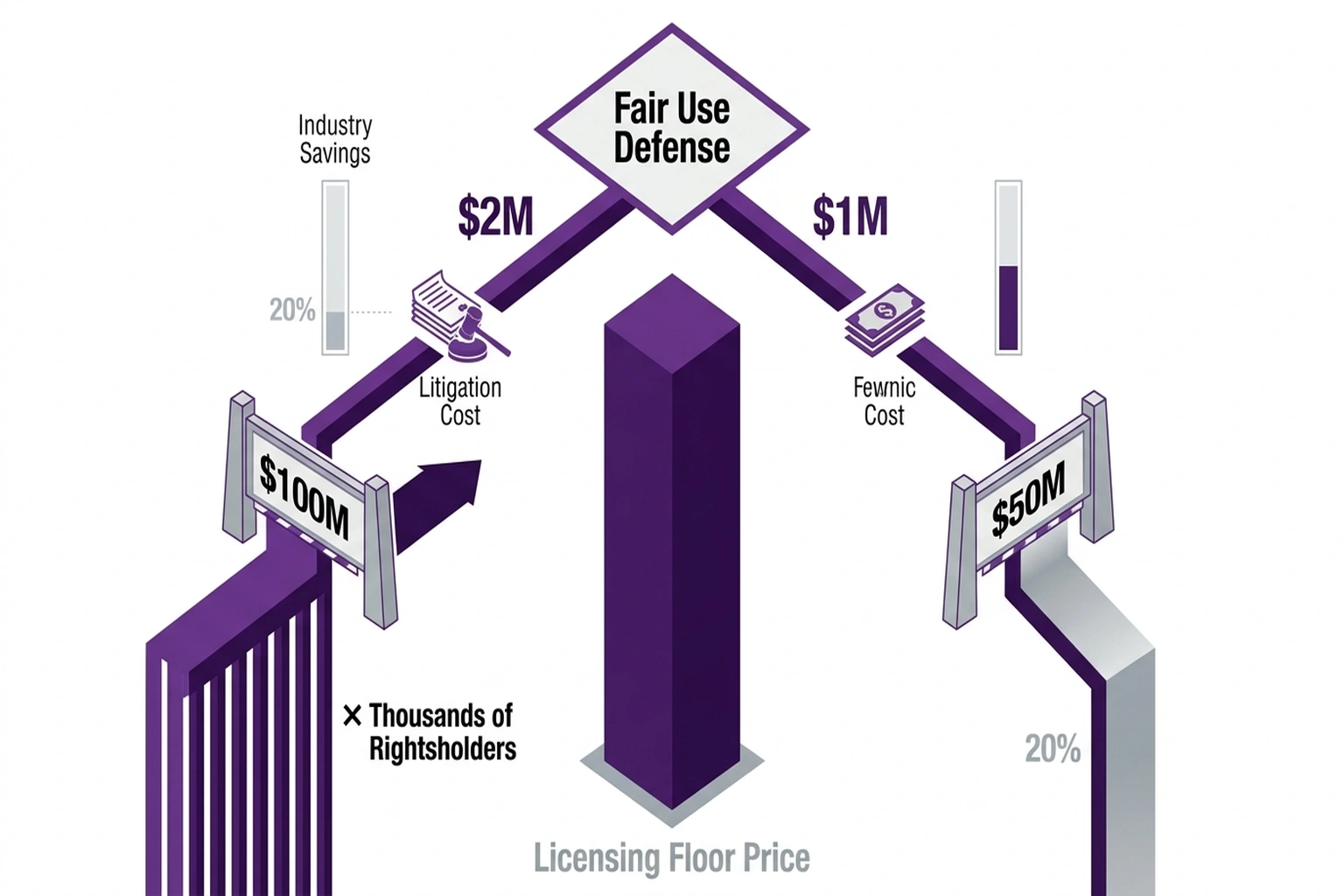

Run the expected value calculation. Defending a fair use lawsuit through trial costs roughly $2 million in legal fees. A favorable ruling would establish perpetual industry precedent worth billions in avoided licensing fees across every rightsholder. Even at a conservative 20% chance of winning, and setting aside what a loss would cost, the expected value of fighting is 20% of those billions minus $2 million: at least $198 million if the avoided fees total just $1 billion. The math demands litigation.
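The expected-value argument can be written as EV = p × S − C, where p is the probability of winning, S the licensing fees a favorable precedent would avoid, and C the cost of litigating. A minimal sketch follows; S = $5 billion is a hypothetical stand-in for "billions," and the optional `loss_if_lose` term, which the paragraph above sets aside, is included only to make that assumption explicit.

```python
def litigation_ev(p_win: float, savings: float, legal_cost: float,
                  loss_if_lose: float = 0.0) -> float:
    """Expected value of litigating to judgment instead of licensing.

    p_win        -- probability of a favorable fair use ruling
    savings      -- licensing fees avoided industry-wide if the ruling is favorable
    legal_cost   -- cost of litigating through trial
    loss_if_lose -- cost triggered by an adverse ruling (0 by default,
                    matching the believer scenario in the text)
    """
    return p_win * savings - (1 - p_win) * loss_if_lose - legal_cost


def break_even_probability(savings: float, legal_cost: float) -> float:
    """Smallest p_win that makes litigating worthwhile when loss_if_lose is 0."""
    return legal_cost / savings


SAVINGS = 5e9      # hypothetical stand-in for "billions in avoided licensing fees"
LEGAL_COST = 2e6   # the article's trial-cost estimate

if __name__ == "__main__":
    print(f"EV at p=0.20: ${litigation_ev(0.20, SAVINGS, LEGAL_COST):,.0f}")
    print(f"Break-even p: {break_even_probability(SAVINGS, LEGAL_COST):.4%}")
```

With the loss term at zero, even a 0.04% chance of winning justifies litigating under these assumptions; only a large enough `loss_if_lose` flips the sign, which is what makes settling rational in this model.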

Paying a licensing fee to avoid that ruling is mathematically irrational for a company that genuinely believes its legal position. Unless the company does not believe its legal position.

Consider a second calculation. When an AI leader pays a single major studio $100 million for training rights, it sets a floor price for every other rightsholder in existence. Multiply that $100 million across the distinct copyright holders whose data was scraped, and the total outruns the capital of the AI industry: just 90,000 rightsholders at that price add up to $9 trillion. Settling with one entity guarantees mathematical insolvency against the collective. Only by settling selectively, keeping deals private, and preventing a court from establishing that floor price as a legal principle can a company avoid this cascade.
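The cascade argument is a multiplication: a reference price per rightsholder times the number of rightsholders who could claim it. The sketch below uses the $100 million deal size from the text and, as an assumed benchmark, the roughly $9 trillion combined valuation quoted earlier; the helper names are hypothetical.

```python
DEAL_PRICE = 100_000_000            # the single-studio deal size from the text
INDUSTRY_VALUE = 9_000_000_000_000  # assumed benchmark: ~$9 trillion combined valuation


def total_liability(rightsholders: int, price: int = DEAL_PRICE) -> int:
    """Total if every rightsholder could claim the same per-holder price."""
    return rightsholders * price


def holders_to_reach(value: int = INDUSTRY_VALUE, price: int = DEAL_PRICE) -> int:
    """Smallest number of rightsholders whose combined claims reach `value`.

    Uses integer ceiling division to avoid floating-point rounding.
    """
    return -(-value // price)


if __name__ == "__main__":
    for holders in (1_000, 10_000, 90_000):
        print(f"{holders:>6,} holders -> ${total_liability(holders) / 1e9:,.0f}B")
    print(f"Holders needed to reach $9T: {holders_to_reach():,}")
```

A thousand rightsholders at that price come to $100 billion, and about 90,000 reach $9 trillion, so the argument turns on how many holders could plausibly claim a price anywhere near the single-studio deal.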

A third number that nobody in the industry publishes but everyone has calculated: Deezer’s 60,000 daily AI tracks, run forward one year, equal roughly 22 million synthetic works uploaded to a single platform. If even a fraction of those works came from models trained on unlicensed source material, and if rightsholders eventually establish a per-work damages theory that travels downstream to AI-generated outputs, not just training inputs, then the liability clock is not running on the past. It is running right now, adding 60,000 potential claims per day on one streaming platform alone. Legal exposure is not a historical debt. It is accumulating in real time.
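The run-rate arithmetic is a fixed daily count times elapsed days. The sketch below reproduces the roughly 22 million figure from the 60,000-per-day rate; `share_at_issue` is a hypothetical parameter standing in for the unspecified "fraction" in the paragraph above, and the 1% value in the example is purely illustrative.

```python
DAILY_AI_TRACKS = 60_000  # Deezer's reported daily intake of fully AI-generated tracks


def cumulative_tracks(days: int, per_day: int = DAILY_AI_TRACKS) -> int:
    """Synthetic tracks accumulated after `days` at a constant upload rate."""
    return days * per_day


def potential_claims(days: int, share_at_issue: float) -> int:
    """Hypothetical count of implicated tracks if `share_at_issue` of them
    came from models trained on unlicensed sources."""
    return round(cumulative_tracks(days) * share_at_issue)


if __name__ == "__main__":
    print(f"One year: {cumulative_tracks(365):,} tracks")
    print(f"At a hypothetical 1% share: {potential_claims(365, 0.01):,} tracks")
```

At a constant rate the total grows linearly, by the same 60,000 each day; any growth in the daily rate itself would push it up faster.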

The Turn: Why This Is Not About Fairness

Until this point, the argument has been framed as an equity problem: big companies get paid, small creators do not. That framing is accurate but incomplete, and it leads readers toward the wrong conclusion.

This is not primarily a fairness crisis. It is a solvency crisis hidden inside a fairness argument.

The two-track strategy only works if it never resolves. Every licensing deal with a major rightsholder is structurally self-defeating: it confirms a market rate exists, which means unlicensed use has a calculable price, which means the unpaid bill keeps growing. AI companies are not buying time to find a legal solution. They are buying time to become so embedded in the global economy that the cost of dismantling them exceeds the cost of rewriting the law to accommodate them. Fair use winning in court is not the bet. By the time courts are ready to rule definitively, AI infrastructure will likely be indispensable enough that governments will legislate a compulsory license rather than face systemic collapse.

Individual creators are not incidental casualties of this strategy. They are its load-bearing wall. Every creator who cannot afford to sue preserves the ambiguity that keeps the strategy alive. Every unregistered work is a claim that will never appear on the ledger. That $9 trillion exposure exists on paper. The actual number that will ever reach a courtroom is a fraction of that, precisely because the system was designed to make individual enforcement economically impossible.

At this point the reader’s understanding of the story has to shift. This is not David versus Goliath. It is a controlled demolition of the enforcement mechanism itself, disguised as a legal debate.

The Strongest Objection: Rational Risk Allocation

A reasonable counterargument exists. Stanford legal scholars and venture-backed commentators would call this rational risk allocation: companies settle with high-risk counterparties while preserving legal arguments for others. Fox News settled with Dominion but never admitted liability for defamation. Strategy is not proof of bad faith.

Structurally, the rebuttal is clear. Selective enforcement creates two legal systems. Corporate rightsholders receive compensation through private negotiation. Individual creators receive legal briefs insisting their work deserves none. Fair use doctrine was designed as a universal defense, but its practical application is becoming means-tested. If a defense only works against opponents who cannot afford to challenge it, it is not a legal principle. It is a toll booth.

The Fox-Dominion analogy also breaks down on the facts. Fox settled one case and continued broadcasting. An AI company that loses a single well-litigated fair use case does not face one judgment. It faces every plaintiff in every jurisdiction whose claim was waiting for that precedent. The asymmetry is not between one plaintiff and one defendant. It is between one ruling and an industry-wide liability cascade. No equivalent settlement has happened in AI because the first one that establishes a damages theory is not the end of the story. It is the starting gun.

According to WebProNews, Edelson PC founder Jay Edelson is building AI cases because “the pattern is identical to what we saw in asbestos and tobacco — years of delay followed by a rapid collapse once the internal documents come out.” Discovery phase in these cases has not yet begun.

The Disproof Test

What would prove this thesis wrong? Evidence that AI companies are fighting fair use cases to final judgment against any well-funded opponent, or that their licensing deals include clauses explicitly preserving fair use as a public legal position rather than a private litigation shield. No such evidence exists. Every settlement is structured to prevent precedent, which only makes sense if precedent would be fatal.

A secondary disproof would be an AI company voluntarily publishing the internal valuation it assigned to fair use risk before signing its first licensing deal. If that number was zero, meaning the company genuinely believed training data required no payment, the license would be inexplicable. Those documents exist. They are sitting in servers waiting for a discovery order.

The Reader Action: 60 Seconds That Matter

Check your copyright registration status. If you are a creator with unregistered work, open a new tab right now and go to copyright.gov. Without registration, you generally cannot sue over a U.S. work, recover statutory damages, or join class-action suits, and statutory damages are generally unavailable for infringement that began before registration unless a published work was registered within three months of first publication. Online filing fees run about $45 to $65. If your work appeared in LAION-5B or any other known training dataset, you have a narrow window to preserve your legal standing before the case law gets written by the parties with the most expensive lawyers.

The gap between a $45 registration fee and up to $150,000 in statutory damages is the only number in this entire story that favors an individual creator. Act on it before a settlement makes it irrelevant.
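The timing rule behind that advice comes from 17 U.S.C. § 412, and it is easiest to see as a small predicate. This is a simplified sketch under stated assumptions: it treats registration as a single effective date and deliberately ignores pre-registration, non-U.S. works, and how courts treat continuing infringement. The function names `add_months` and `statutory_damages_available` are hypothetical.

```python
import calendar
from datetime import date
from typing import Optional


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of the target month."""
    index = d.month - 1 + months
    year, month = d.year + index // 12, index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def statutory_damages_available(
    infringement_start: date,
    registration: Optional[date],
    publication: Optional[date] = None,
) -> bool:
    """Simplified reading of 17 U.S.C. § 412 for a U.S. work."""
    if registration is None:
        return False  # never registered: no statutory damages
    if infringement_start >= registration:
        return True   # infringement began on or after the registration date
    if publication is None or infringement_start < publication:
        return False  # work was unpublished when the infringement began
    # Published work infringed before registration: allowed only if the
    # work was registered within three months of first publication.
    return registration <= add_months(publication, 3)


if __name__ == "__main__":
    # Hypothetical example: photo published 2020, scraped 2022, registered 2025.
    print(statutory_damages_available(date(2022, 6, 1), date(2025, 3, 1), date(2020, 1, 15)))  # prints False
```

The example shows why registering today does not reach back to training runs that already happened; registration still preserves the right to sue and covers infringement that begins afterward.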

But what happens when AI copyright cases actually reach a courtroom? For a guide, see “The $15,000-Per-Article AI Copyright Suit.” For the music-specific dimension, “GEMA v. Suno: AI Music Copyright Training Data Lawsuit” traces how European rightsholders are pursuing a different legal path.

Fair use will not be settled by philosophy or PR statements. It will be settled by whoever runs out of money first. Court filings reveal that Mark Zuckerberg’s company hoovered up roughly 80TB of copyrighted books through the same kind of peer-to-peer piracy that record labels spent years fighting (Ars Technica). If courts apply the same $150,000-per-work statutory damages framework to text that the RIAA demands for music, the theoretical liability exceeds the combined market cap of every major AI company. Music cases are not the threat. They are the cheapest category to make go away before a judge does the math on everything else.

What ties together these disputes over AI training practices is simple: companies publicly insist that training on copyrighted material requires no permission or payment, yet privately negotiate licenses with anyone powerful enough to force their hand. The two-track strategy depends on individual creators lacking the resources to mount a legal challenge while corporate rightsholders get quietly bought off. Every settlement that avoids a ruling protects the broader fair use defense from the judicial scrutiny it cannot survive.

The strategy here is not designed to win. It is designed to outlast. And it is working, for now, because the math that would end it is sitting in sealed court filings, waiting for a discovery order that has not yet been signed.

Prediction: By Q4 2026, at least one AI company will offer a blanket individual-creator licensing program priced below the cost of a copyright registration filing fee, designed to extinguish the class-action threat before a court can set fair use precedent. When that offer arrives, read the fine print: accepting it will almost certainly require signing away the right to join any future collective action. The settlement will be priced to feel like a win for creators. It will be structured to guarantee one for the company.

References

- “RIAA, NMPA and More File Amicus Brief Backing Music Publishers Against Anthropic, Arguing AI Company’s Unlicensed Copying Is ‘Inexcusable,’” Music Business Worldwide

- “Patreon CEO Calls AI Companies’ Fair Use Argument ‘Bogus,’ Says Creators Should Be Paid,” TechCrunch

- “Meta Hopes SCOTUS Piracy Ruling Will Help It Beat Lawsuit Over Torrenting AI Data,” Ars Technica

- “The Trial Lawyer Who Terrifies Silicon Valley Is Loading His Next Weapon: AI Lawsuits,” WebProNews