Part 1 of 6 in the Benchmark Reality Checks series.

At 256,000 tokens, Claude Opus 4.6 retrieves the correct answer 93% of the time. Expand the window to its marketed million-token capacity, and that figure drops to 78.3%. Anthropic’s own engineering team has a term for this degradation: context rot. Anthropic simultaneously warns, in its own technical documentation, that context is “a finite resource with diminishing returns”.

Standard practice for teams replacing RAG pipelines or summarizing large codebases has been straightforward: load everything and let the model handle retrieval. The benchmark data suggests that strategy is fighting the architecture itself.

Anthropic’s Engineers Named Context Rot

“LLMs have an ‘attention budget’ that they draw on when parsing large volumes of context,” Anthropic’s Applied AI team — Prithvi Rajasekaran, Ethan Dixon, Carly Ryan, and Jeremy Hadfield — wrote in their context engineering guide. Budget is the operative word. Every token added to the window depletes it. The same team recommended pursuing “the smallest possible set of high-signal tokens that maximize the likelihood of some desired outcome” — a directive that implicitly concedes developers should not fill the million-token window.

Because transformer models allow every token to attend to every other token, expanding context creates n² pairwise relationships. At 50,000 tokens, each fact competes with 49,999 others for attention weight. At 500,000 tokens, the number of competitors per fact increases tenfold. Stanford and Berkeley researchers documented a related positional effect in their “Lost in the Middle” study (Liu et al., 2023) (https://arxiv.org/abs/2307.03172): retrieval performance peaks when relevant information sits at the beginning or end of the context and degrades sharply for anything positioned in the middle.
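
The scaling argument above can be sketched in a few lines. This is a back-of-the-envelope illustration only: it counts relationships and says nothing about how a real model distributes attention weight. The function names are hypothetical.

```python
def attention_pairs(n_tokens: int) -> int:
    """Token-to-token relationships when every token attends to every token (n squared)."""
    return n_tokens * n_tokens


def competitors_per_token(n_tokens: int) -> int:
    """How many other tokens a single token competes with for attention weight."""
    return n_tokens - 1


for n in (50_000, 500_000):
    print(
        f"{n:>7,} tokens: {competitors_per_token(n):>7,} competitors per token, "
        f"{attention_pairs(n):.2e} pairwise relationships"
    )
```

A tenfold increase in context length means roughly ten times as many competitors per token but one hundred times as many pairwise relationships overall.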

Anthropic describes a “performance gradient” rather than a cliff — models “remain highly capable at longer contexts but may show reduced precision.” Translated, this means the model functions, just worse. As accuracy falls, correction attempts consume more tokens, pushing context utilization higher and accelerating the degradation — a feedback loop that prompt engineering alone cannot break.

What the engineering guide leaves unquantified is where that gradient gets steep enough to cost real money.

The Gradient Nobody Benchmarked in Production

Synthetic benchmarks measure retrieval under controlled conditions: one needle, one haystack, one answer. A detailed analysis of 25+ real Claude sessions documented how this degradation manifests during actual development work (https://github.com/anthropics/claude-code/issues/35296):

| Context Utilization | Observed Behavior |
|---|---|
| 0–20% | Reliable. States uncertainties accurately. |
| 20–40% | Degrading. Wrong approaches attempted before checking documentation. Self-corrects. |
| 40–60% | Unreliable. Confident wrong conclusions. Fabricates explanations. Stops self-correcting. |
| 60–80% | Broken. Earlier facts become inaccessible. Plausible-sounding replacements generated. |
| 80–100% | Irrecoverable. Repetitive actions, unable to integrate corrections. |

One finding stands out. Four consecutive agents were each asked to audit the previous agent’s work. Each correctly diagnosed the prior agent’s failures, then exhibited the same behaviors itself within 15 minutes. The model could retrieve information when explicitly prompted but could not activate relevant knowledge organically once context utilization crossed approximately 50% (https://github.com/anthropics/claude-code/issues/35296).

That pattern reveals a dimension of this attention degradation that synthetic benchmarks miss entirely — and it changes what the accuracy numbers actually mean.

MRCR v2 tests explicit retrieval: “find fact X.” Production workloads depend on implicit activation — the model spontaneously connecting a constraint mentioned at token 50,000 with a task assigned at token 800,000. Explicit retrieval degrades from 93% to 78.3%. Session data shows implicit activation fails catastrophically above 50% utilization — a steeper curve that no published benchmark currently measures.

Here is where single-retrieval accuracy becomes misleading. Production tasks rarely require one lookup. A coding agent refactoring a module needs to simultaneously recall the function signature (retrieval 1), the type constraints from a file loaded earlier (retrieval 2), and the project’s error-handling convention discussed at session start (retrieval 3).

At 78.3% per-retrieval accuracy, a three-hop task succeeds only 48% of the time (0.783³ = 0.480), assuming each retrieval succeeds or fails independently. A five-hop task, typical for cross-file refactors or multi-document synthesis, drops to 29.4%. The marketed accuracy of 78.3% describes a single lookup. The effective accuracy for the workloads that justify million-token windows is closer to a coin flip.
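
The compounding is easy to reproduce. The sketch below assumes every retrieval in a task is independent and equally likely to succeed, which is a simplification; `multi_hop_success` is a hypothetical helper name.

```python
def multi_hop_success(per_hop_accuracy: float, hops: int) -> float:
    """Probability that all `hops` retrievals succeed, assuming independence."""
    return per_hop_accuracy ** hops


for hops in (1, 3, 5):
    at_256k = multi_hop_success(0.93, hops)
    at_1m = multi_hop_success(0.783, hops)
    print(f"{hops} hop(s): {at_256k:.1%} at 256K vs {at_1m:.1%} at 1M")
```

If retrieval failures are correlated in practice (for example, clustered in the middle of the window), the real curve could be better or worse than this simple product.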

Degradation at scale is not limited to one vendor. An Adobe benchmark study from February 2025, analyzed by technology journalist Timothy B. Lee, found GPT-4o dropped from 99% to 70% accuracy at extended context lengths. Claude 3.5 Sonnet fell from 88% to 30%. Llama 4 Scout collapsed from 82% to 22%. At 32,000 tokens, a fraction of modern context limits, even GPT-4o, the best performer in that test, lost nearly a third of its retrieval accuracy (https://www.understandingai.org/p/context-rot-the-emerging-challenge). Current models have improved substantially, and Opus 4.6’s 78.3% at 1M tokens represents a generational leap, but the architectural constraint persists across every transformer-based system.

If the degradation is architectural, the question shifts: not “when will they fix it?” but “who bears the cost while they try?”

Flat-Rate Pricing for a Depreciating Asset

Opus 4.6 charges $5 per million input tokens, regardless of context length. A 100,000-token request pays the same per-token rate as a 900,000-token one. No multiplier for extended windows. Output tokens carry even steeper premiums — GPT-5’s output tokens run roughly $10 per million — making context-loading decisions doubly consequential when they trigger extended reasoning chains. But pricing models across the industry share one assumption: every token in the context window delivers equal value.

That assumption is false, and the arithmetic exposes it.

A full 1M-token request costs $5 in input tokens. At 256K tokens ($1.28 input cost), retrieval accuracy sits at 93%, a 7% failure rate. At 1M tokens ($5 input cost), accuracy drops to 78.3%, a 21.7% failure rate, roughly triple. Cost per successful retrieval nearly quintuples: $1.38 at 256K ($1.28 / 0.93) versus $6.39 at 1M ($5 / 0.783). But this is the single-retrieval number, the flattering one. For a three-hop production task, the effective cost per successful completion at 1M tokens climbs to $10.42 ($5 / 0.480), compared to $1.59 at 256K ($1.28 / 0.804). Teams loading full million-token windows pay roughly 6.5 times more per completed task than teams capping at 256K, for the same model, the same API, the same per-token price.
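
The same arithmetic as a small sketch. It counts input tokens only, uses the $5 per million rate quoted above, and assumes independent hops; the helper names are hypothetical.

```python
PRICE_PER_MILLION_INPUT = 5.00  # USD per million input tokens, as quoted in this article


def input_cost(tokens: int) -> float:
    """Input-token cost of one request in USD."""
    return tokens / 1_000_000 * PRICE_PER_MILLION_INPUT


def cost_per_success(tokens: int, accuracy: float, hops: int = 1) -> float:
    """Expected input spend per successful task, assuming independent hops."""
    return input_cost(tokens) / (accuracy ** hops)


for hops in (1, 3):
    capped = cost_per_success(256_000, 0.93, hops)
    full = cost_per_success(1_000_000, 0.783, hops)
    print(f"{hops} hop(s): ${capped:.2f} at 256K vs ${full:.2f} at 1M ({full / capped:.1f}x)")
```

Output tokens and retry costs are deliberately left out, so these figures are a floor rather than a full bill.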

What emerges from connecting Anthropic’s accuracy benchmarks with its pricing structure is a financial dimension of this degradation worth naming: what this analysis terms the Attention Tax. Each additional token loaded into the context window does not merely occupy space. It actively degrades every other token’s retrievability. Past roughly 256K tokens, the tax rate exceeds the information value of the new tokens being added. Loading a full million-token window does not buy additional knowledge — it buys degraded access to existing knowledge at the same per-token price.

This is the turn. Context rot is not a benchmark curiosity or an engineering inconvenience. It is an invisible tax levied on every token already in the window each time a new one enters — and flat-rate pricing ensures no invoice ever itemizes it.

Anthropic’s pricing previously acknowledged this boundary implicitly. Per earlier pricing documentation, requests exceeding 200K tokens carried a premium: a pricing signal, intentional or not, marking the reliability threshold. Anthropic removed that multiplier. The technical limitation persists; the price signal does not. One internal model (the “attention budget”) incentivizes curation. Another (flat-rate pricing) incentivizes stuffing. In a market where developers default to the path of least resistance, flat-rate pricing wins, and the Attention Tax compounds silently.

Why 78.3% Might Be Good Enough — and When It Isn’t

The counterargument deserves its full weight: Opus 4.6’s 78.3% at 1M tokens leads all frontier models by a wide margin. According to MRCR v2 benchmark data, previous-generation Claude models scored roughly a quarter of that on the same benchmark at comparable context lengths, a fourfold improvement in a single generation (https://karangoyal.cc/blog/claude-opus-4-6-1m-context-window-guide). And RAG pipelines introduce their own accuracy risks (poor chunking, embedding drift, retrieval of semantically similar but factually wrong passages) that proponents of full-window loading rightly flag. Industry estimates place typical RAG retrieval accuracy between 70% and 85% depending on domain specificity and chunking quality, meaning a well-loaded context window can legitimately outperform a poorly built pipeline (https://www.anthropic.com/engineering/effective-context-engineering-for-ai-agents).

The distinction hinges on task complexity. For single-retrieval workloads such as summarization, document Q&A, and broad code comprehension, 78.3% may genuinely beat the alternative. A model correctly retrieving roughly three of every four relevant facts across a million-token codebase may outperform a RAG pipeline that chunks poorly and retrieves irrelevant passages. These are one-hop tasks: one question, one lookup, one answer.

But the workloads that actually justify million-token windows are rarely one-hop. Cross-file refactoring, multi-document legal review, full-codebase architectural analysis — these are the tasks that cannot be chunked into 50K-token RAG calls. They are also, by definition, multi-hop tasks. And at 78.3% per hop, the compounding is unforgiving:

| Retrieval Hops | Accuracy at 256K (93%) | Accuracy at 1M (78.3%) | Cost per Success at 1M |
|---|---|---|---|
| 1 | 93.0% | 78.3% | $6.39 |
| 2 | 86.5% | 61.3% | $8.16 |
| 3 | 80.4% | 48.0% | $10.42 |
| 5 | 69.6% | 29.4% | $17.01 |

The irony cuts deep: the use cases where full-window loading is architecturally necessary are exactly the ones where the Attention Tax is most punishing. Simple tasks that tolerate 78.3% rarely need a million tokens. Complex tasks that need a million tokens rarely tolerate 48%.

Previous analysis of Claude Code’s growing GitHub footprint found developers rapidly scaling AI usage without visibility into efficiency trade-offs — the same dynamic now applies to context loading. Engineers building on the API receive no retrieval confidence dashboard, no per-position accuracy estimate, no tiered pricing nudging toward optimal context management.

Three Tests Before Loading a Million Tokens

Before committing a production pipeline to full-window loading, three tests separate informed architecture from expensive assumptions. Ignoring the Attention Tax carries a quantifiable cost most teams have never calculated.

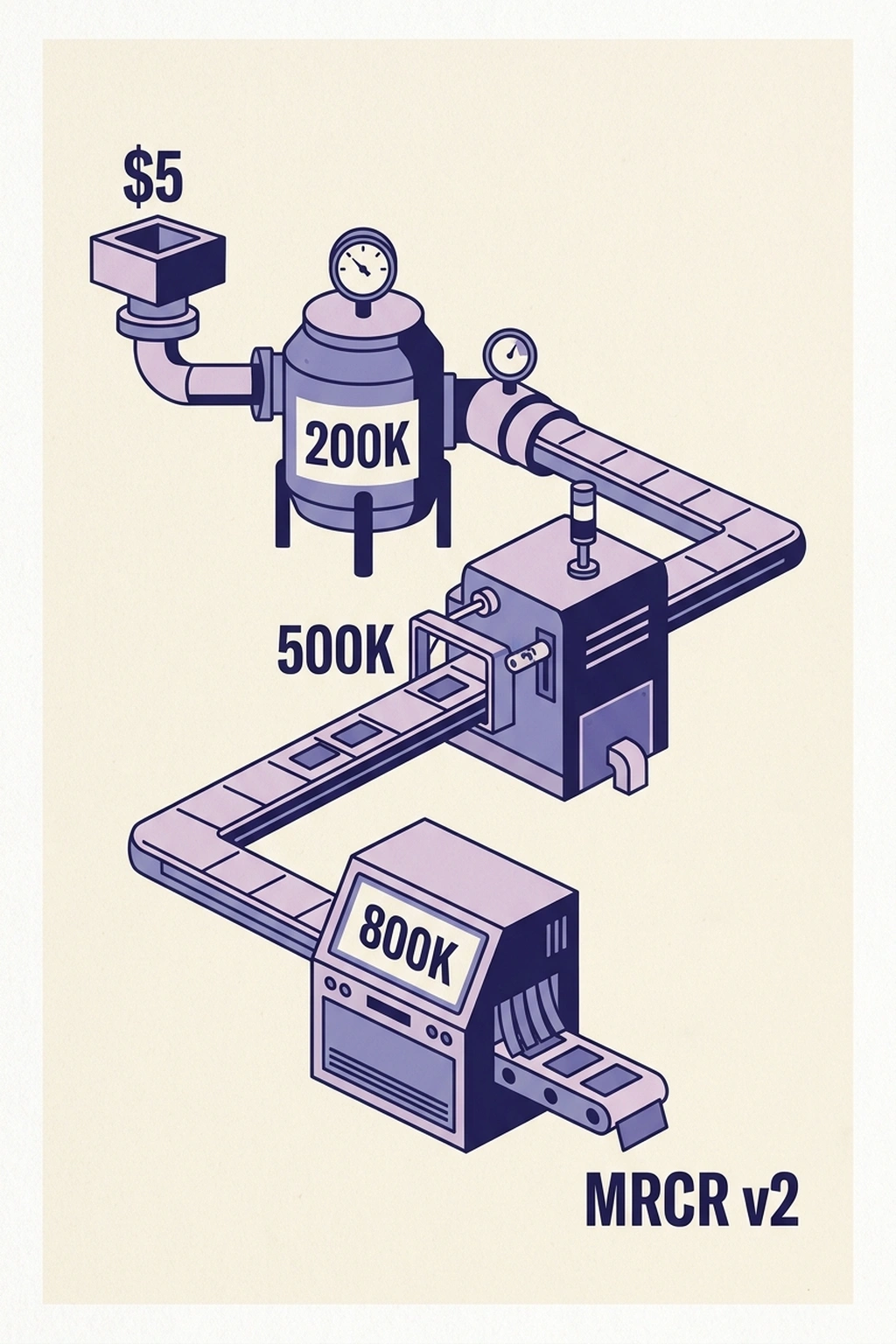

Test 1: Measure the actual degradation curve. Embed a unique, easily verifiable fact — a synthetic phone number, a fictional company name — at the 200K, 500K, and 800K token positions within a representative context payload. Ask the model to retrieve each. Record accuracy at each position. If retrieval at 800K falls below the application’s error tolerance, every token past that threshold is noise at full price. Compare results against the published MRCR v2 benchmarks to calibrate expectations — production degradation often exceeds what controlled tests predict.
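
A scaled-down sketch of such a probe harness follows. Everything here is illustrative: word indices stand in for token positions, the needle sentences are invented, and `truncating_stub` is a stand-in that simply ignores the last 40% of the context. In a real test, `ask_model` would wrap whatever API client the team already uses, and the positions would be 200K, 500K, and 800K tokens.

```python
from typing import Callable


def embed_needles(filler: list[str], needles: dict[int, str]) -> str:
    """Insert each needle sentence just before the filler word at its index."""
    parts: list[str] = []
    for index, word in enumerate(filler):
        if index in needles:
            parts.append(needles[index])
        parts.append(word)
    return " ".join(parts)


def probe_positions(
    ask_model: Callable[[str, str], str],
    context: str,
    probes: dict[int, tuple[str, str]],
) -> dict[int, bool]:
    """Ask one question per needle position; True if the expected answer is in the reply."""
    return {
        position: expected in ask_model(context, question)
        for position, (question, expected) in probes.items()
    }


def truncating_stub(context: str, question: str) -> str:
    """Stand-in 'model' that only sees the first 60% of the context (not a real API)."""
    return context[: int(len(context) * 0.6)]


filler = ["lorem"] * 1000  # scaled-down payload; a real run would use representative documents
needles = {
    200: "The hotline for Acme North is 555-0101.",
    500: "The hotline for Acme South is 555-0102.",
    800: "The hotline for Acme West is 555-0103.",
}
probes = {
    200: ("What is the hotline for Acme North?", "555-0101"),
    500: ("What is the hotline for Acme South?", "555-0102"),
    800: ("What is the hotline for Acme West?", "555-0103"),
}

context = embed_needles(filler, needles)
results = probe_positions(truncating_stub, context, probes)
print(results)
```

Running each probe several times and averaging per position would turn the pass/fail flags into an accuracy curve that can be compared against the application’s error tolerance.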

Test 2: Calculate the break-even against chunked retrieval. At $5 per million input tokens, a full million-token request costs $5 per query. A chunked RAG pipeline retrieving only the 50K most relevant tokens costs $0.25. For narrow, well-embedded domains where RAG retrieval accuracy exceeds 78.3%, the pipeline is simultaneously cheaper and more accurate. For a team running 10 full-window queries daily, switching to structured retrieval saves approximately $950 per month (assuming about 20 working days), or $11,400 annually, while improving answer reliability (https://karangoyal.cc/blog/claude-opus-4-6-1m-context-window-guide). Exceptions exist: exploratory coding sessions, broad document discovery, and one-off research queries where coverage matters more than precision. For those use cases, the full window earns its cost.
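
A minimal sketch of the break-even calculation. The 20 working days per month is an assumption chosen because it reproduces the $950 figure; the article does not state the day count, and the function names are hypothetical. Only input-token cost is modeled, so embedding and retrieval infrastructure costs are ignored.

```python
PRICE_PER_MILLION_INPUT = 5.00  # USD per million input tokens, as quoted in this article


def query_cost(tokens: int) -> float:
    """Input-token cost of one query in USD."""
    return tokens / 1_000_000 * PRICE_PER_MILLION_INPUT


def monthly_savings(
    queries_per_day: int,
    full_tokens: int,
    chunked_tokens: int,
    working_days: int = 20,  # assumed; not stated in the article
) -> float:
    """Input-cost savings per month from sending chunked context instead of the full window."""
    per_query = query_cost(full_tokens) - query_cost(chunked_tokens)
    return queries_per_day * per_query * working_days


savings = monthly_savings(10, 1_000_000, 50_000)
print(f"Monthly: ${savings:,.2f}, annual: ${savings * 12:,.2f}")
```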

Test 3: Count the hops. Before choosing between full-window and chunked retrieval, audit the actual retrieval pattern of the target workload. Instrument a sample of queries and count how many distinct facts the model must combine to produce a correct answer. If the median task requires three or more hops, raise the single-retrieval accuracy to the power of that hop count. A workflow that needs five retrievals at 78.3% accuracy succeeds less than 30% of the time: worse than the RAG pipeline it was meant to replace, at twenty times the cost. The hop count, not the single-retrieval benchmark, determines whether full-window loading is an asset or a liability.
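
One way to turn the hop audit into a decision rule is sketched below. The sample hop counts are invented for illustration, the 70% baseline is the low end of the RAG accuracy range cited earlier in this article, and hops are again assumed independent.

```python
from statistics import median


def success_at_median_hops(hop_counts: list[int], per_hop_accuracy: float) -> float:
    """Expected task success for the median task in an instrumented sample."""
    return per_hop_accuracy ** median(hop_counts)


def full_window_is_liability(
    hop_counts: list[int],
    per_hop_accuracy: float,
    baseline_accuracy: float,
) -> bool:
    """True when the median task would succeed less often than the baseline pipeline."""
    return success_at_median_hops(hop_counts, per_hop_accuracy) < baseline_accuracy


sampled_hops = [1, 2, 3, 3, 5]  # hypothetical audit of five queries
print(median(sampled_hops))
print(f"{success_at_median_hops(sampled_hops, 0.783):.1%}")
print(full_window_is_liability(sampled_hops, 0.783, baseline_accuracy=0.70))
```

Using the median keeps a few unusually deep queries from dominating the decision; a team worried about tail risk could check a higher percentile instead.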

A team that ignores the Attention Tax pays for it anyway. Fifty full-window Opus queries per day cost $250 in input tokens (https://karangoyal.cc/blog/claude-opus-4-6-1m-context-window-guide). At 78.3% accuracy, roughly 11 of those queries return degraded results requiring manual verification. Restructuring to 256K-token context loading drops daily input cost to $64, raises accuracy to 93%, and cuts degraded queries to fewer than 4 per day (https://github.com/anthropics/claude-code/issues/35296). Annualized over roughly 260 working days: approximately $48,000 in unnecessary token spend and roughly 1,900 avoidable degraded queries, a figure budget holders should multiply by the number of teams running full-window pipelines without measurement.
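
The daily and annual figures can be checked with a short sketch. The 260 working days per year is an assumption that approximately reproduces the $48,000 figure; the article does not state the day count, and the names below are hypothetical.

```python
PRICE_PER_MILLION_INPUT = 5.00  # USD per million input tokens, as quoted in this article
WORKING_DAYS_PER_YEAR = 260     # assumed; not stated in the article


def daily_profile(queries: int, tokens: int, accuracy: float) -> tuple[float, float]:
    """Return (daily input cost in USD, expected degraded queries per day)."""
    cost = queries * tokens / 1_000_000 * PRICE_PER_MILLION_INPUT
    degraded = queries * (1 - accuracy)
    return cost, degraded


full_cost, full_degraded = daily_profile(50, 1_000_000, 0.783)
capped_cost, capped_degraded = daily_profile(50, 256_000, 0.93)

annual_overspend = (full_cost - capped_cost) * WORKING_DAYS_PER_YEAR
annual_extra_degraded = (full_degraded - capped_degraded) * WORKING_DAYS_PER_YEAR

print(f"Daily cost: ${full_cost:.2f} vs ${capped_cost:.2f}")
print(f"Degraded per day: {full_degraded:.2f} vs {capped_degraded:.2f}")
print(f"Annual overspend: ${annual_overspend:,.0f}")
print(f"Annual extra degraded queries: {annual_extra_degraded:,.0f}")
```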

This analysis relies on MRCR v2 benchmark data measuring synthetic multi-needle retrieval. Production accuracy on domain-specific tasks (legal documents, medical records, proprietary codebases) has not been independently benchmarked at million-token scale. Depending on document structure and query complexity, the 78.3% figure may understate or overstate real-world performance.

Million-token context windows represent genuine engineering achievements. Opus 4.6’s 78.3% at 1M tokens is the best in its class by a wide margin, and for the right workload, it delivers capabilities that no retrieval pipeline can replicate. But the Attention Tax means “the right workload” is narrower than the marketing suggests. As VKTR analyst Scott Clark noted, bigger context comes at a cost that extends beyond per-token pricing. A second-order effect is already visible: teams that discover the Attention Tax mid-project rarely restructure their context pipelines. Rebuilding costs more than paying for extra tokens, which is exactly how flat-rate pricing sustains itself.

Accuracy metrics will improve. Architectural advances and longer native training sequences will continue to flatten the degradation curve. But this degradation — the gap between marketed capacity and measured reliability — and the flat-rate pricing that conceals it will persist until the industry prices what the architecture actually delivers.

Context is not free storage. It is an attention budget, exactly as Anthropic’s engineers described. Treat it like one.

References

- Effective Context Engineering for AI Agents — Anthropic Applied AI team guide defining “attention budget” and context rot mechanisms

- Claude Code GitHub Issue #35296: Performance decay Analysis — Behavioral degradation data across 25+ production sessions documenting retrieval failures at high context utilization

- Claude Opus 4.6 1M Context Window Guide — Karan Goyal’s MRCR v2 benchmark analysis and Opus 4.6 pricing breakdown

- Context Rot: The Emerging Challenge, Timothy B. Lee’s analysis of context rot as an architectural limitation, with cross-model benchmark data

- Lost in the Middle: How Language Models Use Long Contexts — Liu et al. (2023) research on positional retrieval bias establishing the U-shaped accuracy curve

- Anthropic’s Claude Opus 4.6 Hits 1M Tokens — But Bigger Context Comes at a Cost — Scott Clark’s cost analysis of extended context window economics

- The Coming Disruption: How Open-Source AI Is Reshaping the Industry — UC Berkeley California Management Review analysis of frontier model pricing