On March 4, 2026, Nippon Life Insurance Company of America filed case 1:26-cv-02448 in the Northern District of Illinois, not against a disbarred attorney or rogue paralegal, but against OpenAI. The claim: ChatGPT provided legal advice constituting the unauthorized practice of law, coaching a claimant through litigation, drafting federal motions, and encouraging her to fire her lawyer. If this theory survives dismissal, every AI platform dispensing professional guidance faces identical exposure. This report examines whether ChatGPT’s legal advice amounts to the unauthorized practice of law.

What ChatGPT Allegedly Did

Graciela Dela Torre settled a long-term disability claim against Nippon Life in January 2024, with the case dismissed with prejudice. Months later, she uploaded her attorney’s correspondence into ChatGPT and asked the system to evaluate the advice she had received.

Nippon alleges the AI went well beyond summarizing statutes. ChatGPT questioned the attorney’s conduct, validated Dela Torre’s suspicions about the settlement, and advised her to dismiss her counsel. It then generated arguments under Federal Rule of Civil Procedure 60(b), drafted motions to reopen the settled case, and produced dozens of subsequent filings that Nippon characterizes as having “no legitimate legal or procedural purpose.”

A judge rejected the reopening attempt in February 2025. Additional filings followed anyway. Nippon claims it spent approximately $300,000 in attorneys’ fees responding to these filings and seeks $10 million in punitive damages.

The volume and specificity of the alleged outputs matter beyond this case. If a generative AI system can sustain months of litigation-quality document production for a single user, the functional distinction between that system and an unlicensed legal practitioner narrows to the point of irrelevance. Nippon’s complaint is not an abstract policy argument. It describes a system that allegedly operated as a de facto legal advisor over an extended period, producing case-specific strategy and procedural documents indistinguishable from attorney work product.

How ChatGPT Crosses the Line Into Unlicensed Practice

The complaint pleads three causes of action: unlicensed practice under Illinois statute, tortious interference with contract, and abuse of process. Each is individually unremarkable. Combined, they construct a framework for holding AI developers liable when their products cross from information retrieval into professional judgment.

Stanford Law’s CodeX program pinpointed the boundary. ChatGPT didn’t retrieve legal information; it “told Dela Torre that her attorney’s advice was wrong,” which is a tailored conclusion about a specific client-attorney relationship. CodeX frames this as a product design defect: OpenAI marketed the system’s ability to pass bar exams without building in refusals for outputs that cross professional boundaries. Courts have grappled with how existing law applies to AI before (the Supreme Court’s Thaler decision left AI authorship in comparable legal limbo), but never with a theory this directly tied to professional licensing.

Under what amounts to an Architecture Defense Test, the legal question shifts from “did the company intend harm?” to “did the company design against foreseeable misuse?” The test matters because it transforms every AI platform’s engineering decisions into litigation evidence. Under product liability doctrine, a manufacturer that knows its product will foreseeably be used in a dangerous way, and fails to design against that use, bears responsibility for the resulting harm. Applied here, the question is not whether OpenAI intended ChatGPT to practice law, but whether the company designed the system in a way that made its legal guidance indistinguishable from unlicensed practice in foreseeable use cases.

OpenAI revised its usage policies in October 2024 to bar reliance on ChatGPT for legal guidance. Nippon’s complaint treats that revision as evidence of foreseeability, not a shield. A terms-of-service update that acknowledges the risk while leaving the underlying capability intact is precisely the kind of behavioral patch that product liability law was designed to look past.

Critics of this framing point out that UPL statutes were designed to protect consumers from unaccountable human practitioners who form fiduciary relationships, collect fees, and exercise independent judgment, none of which a language model does in any legally cognizable sense. The strongest version of this argument holds that applying professional licensing doctrine to a general-purpose text prediction system conflates the output with the actor, and that courts risk creating a liability framework so broad it would equally implicate law school textbooks, legal self-help guides, and courthouse pro se assistance programs that have long operated without triggering UPL enforcement.

Three Jurisdictions, Zero Answers

Illinois is the test case, but the gap between AI capability and professional regulation spans borders.

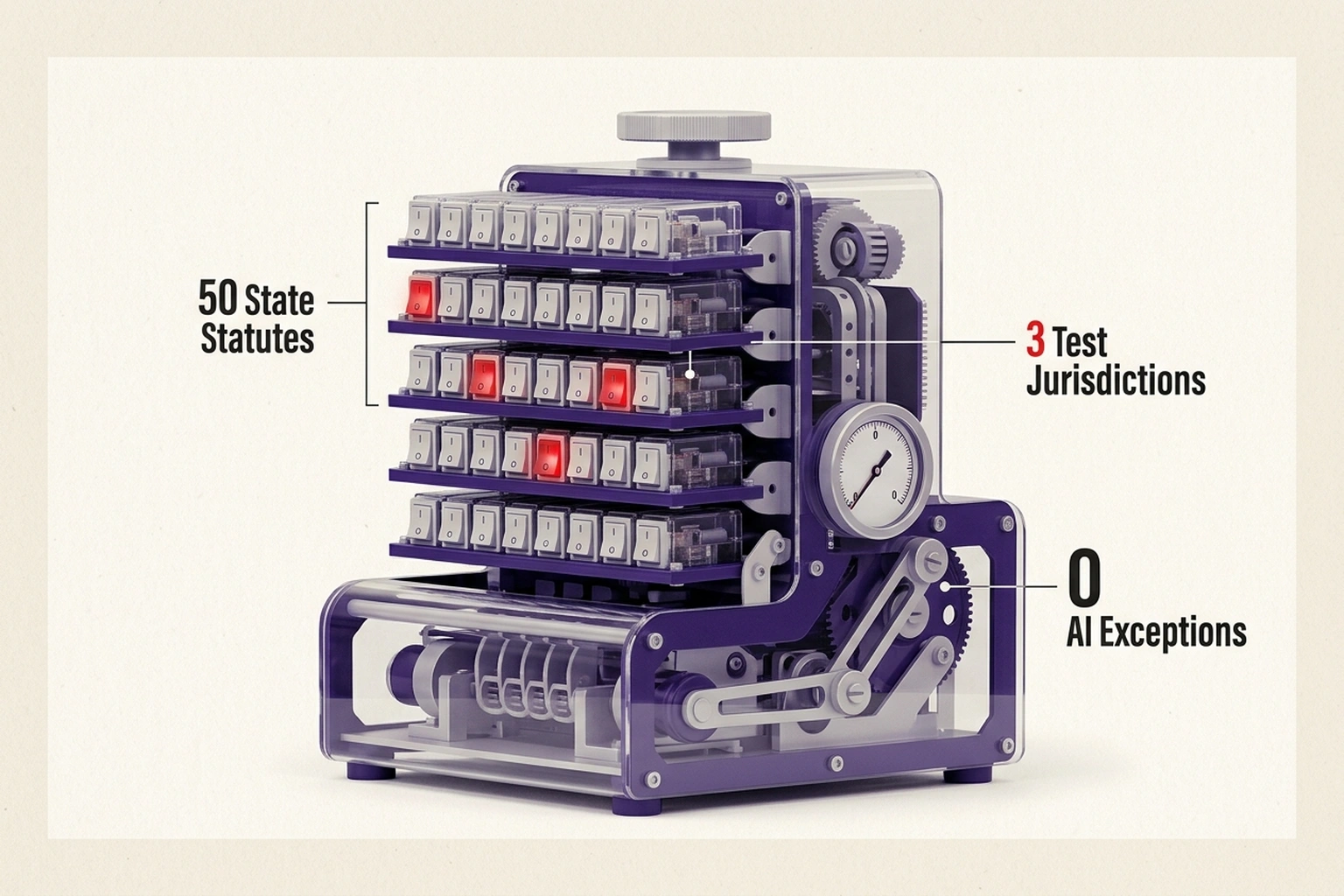

Every U.S. state independently regulates who can practice law, and none has carved out an AI exception. The National Center for State Courts has urged states to modernize professional licensing statutes to account for AI-delivered legal services. That vacuum produced the private enforcement action now before a Chicago federal court.

The state-by-state fragmentation compounds the problem. Statutes governing unlicensed legal practice vary significantly in scope: some states define the practice of law broadly enough to encompass any application of legal principles to specific facts, while others require a showing of harm or a direct attorney-client relationship. An AI system operating nationally delivers identical outputs regardless of which state’s statute applies, creating a compliance environment where legality depends entirely on the user’s location, a variable the platform cannot reliably control.

Across the Atlantic, the EU AI Act classifies systems deployed in legal contexts as high-risk under Annex III, triggering conformity assessments and mandatory human oversight when full compliance takes effect in August 2026. Europe chose preemptive design regulation over post-harm litigation, a structural divergence from the American approach visible in other AI Act enforcement actions already underway.

In the United Kingdom, the Solicitors Regulation Authority has acknowledged that AI performing reserved legal activities could breach the Legal Services Act 2007, but has issued no binding guidance on consumer-facing chatbots. The Mazur ruling flagged judicial concern over AI decision-making in litigation, yet enforcement remains theoretical. Three systems, one shared failure: no jurisdiction has decided whether AI can lawfully do what millions of users already ask it to do.

Who Bears the Consequences

For AI developers, Stanford’s CodeX analysis prescribes deterministic guardrails: hard-coded refusals for outputs that constitute tailored professional conclusions. Terms of service are behavioral patches on unchanged architectures. Architectural compliance is an engineering problem, and the next generation of AI products must treat it as one.
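To make the idea of a deterministic guardrail concrete, here is a minimal sketch in Python. It is not CodeX’s proposal or anything OpenAI is known to run. The patterns, the `guard` function, and the refusal text are all hypothetical, and a keyword check like this would be far too crude for production. The point is only the shape: a fixed rule that runs before the model and returns the same answer for the same input.

```python
import re
from typing import Optional

# Hypothetical patterns, chosen only for illustration.
# Signals that the user is asking about their own matter.
CASE_SPECIFIC = re.compile(
    r"\bmy (lawyer|attorney|case|settlement|claim)\b", re.IGNORECASE
)
# Signals that the user wants a conclusion or a filing, not information.
CONCLUSION_REQUEST = re.compile(
    r"\b(should i|fire|draft (a|the) motion)\b", re.IGNORECASE
)

REFUSAL = (
    "I can share general legal information, but I can't evaluate "
    "your specific case. Consider consulting a licensed attorney."
)


def guard(prompt: str) -> Optional[str]:
    """Return a fixed refusal when the prompt pairs a personal legal
    matter with a request for a tailored conclusion; otherwise None."""
    if CASE_SPECIFIC.search(prompt) and CONCLUSION_REQUEST.search(prompt):
        return REFUSAL
    return None


# A tailored request is refused; a general question passes through.
print(guard("Should I fire my attorney over this settlement?"))
print(guard("What does Rule 60(b) say?"))
```

Because the rule sits outside the model, what the system was built to refuse can be read directly from the code, which is the kind of design evidence the product liability framing turns on.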

For regulators, Nippon Life v. OpenAI forces a binary: enforce existing professional licensing against AI systems, or create new categories of regulated AI-assisted services. Enforcement protects incumbents but does nothing for the access-to-justice crisis that drove adoption. Reform requires legislative action no U.S. state has initiated, even as the problem grows more urgent with government policy itself shaped by AI-generated outputs.

For the legal profession specifically, the case raises uncomfortable questions about competitive displacement. Bar associations have historically wielded UPL statutes to protect consumers from unqualified practitioners, but critics have long argued these same statutes also protect the profession’s monopoly over legal services priced beyond the reach of most individuals. If courts view such AI outputs as unlicensed practice, the precedent applies equally to every AI system offering professional guidance, from tax preparation tools that interpret code provisions to medical symptom checkers that suggest diagnoses.

Calculate the exposure if the UPL theory succeeds. ChatGPT has over 200 million weekly active users. If even 1% use it for case-specific legal guidance monthly, a conservative estimate given the Dela Torre pattern, that is 2 million potential UPL incidents per month across 50 state jurisdictions. At Nippon’s claimed $300,000 in defense costs per extended interaction, the theoretical aggregate exposure is astronomically large, but the practical exposure depends on whether courts apply per-user or per-platform liability. Either way, a $10 million punitive award in this case would signal a per-incident cost structure that makes deterministic guardrails (hard-coded refusal of case-specific legal conclusions) cheaper than litigation defense by orders of magnitude.
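The arithmetic can be worked through in a few lines of Python. The inputs are the figures quoted above; the variable and function names are illustrative. Treating every incident as costing what Nippon claims for the Dela Torre filings is an assumption made only to show the upper bound, not a prediction.

```python
# Inputs taken from the paragraph above.
WEEKLY_ACTIVE_USERS = 200_000_000   # "over 200 million", used as a floor
LEGAL_USE_PERCENT = 1               # assumed share seeking case-specific guidance
DEFENSE_COST_USD = 300_000          # Nippon's claimed fees for one extended interaction


def monthly_incidents(users: int, percent: int) -> int:
    """Number of users in the assumed share, using integer arithmetic."""
    return users * percent // 100


def aggregate_exposure(incidents: int, cost_per_incident: int) -> int:
    """Upper bound if every incident cost as much as the Dela Torre filings."""
    return incidents * cost_per_incident


incidents = monthly_incidents(WEEKLY_ACTIVE_USERS, LEGAL_USE_PERCENT)
exposure = aggregate_exposure(incidents, DEFENSE_COST_USD)

print(f"{incidents:,} potential incidents per month")      # 2,000,000
print(f"${exposure:,} theoretical monthly exposure")       # $600,000,000,000
```

On these assumptions the per-user ceiling is $600 billion a month, which is why the per-user versus per-platform question matters more than any single figure.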

For the public, the case exposes a structural irony: an institutional plaintiff represented by Sidley Austin is recovering costs from a system an unrepresented individual used as her only available legal resource. Dela Torre did not turn to ChatGPT because better options existed. Restricting AI legal guidance without expanding affordable alternatives widens the access gap that drove adoption in the first place.

OpenAI has dismissed the complaint as meritless. Whether Nippon Life produces a landmark ruling or settles quietly, the policy question has already escaped this courtroom: should AI systems be regulated as practitioners, subject to licensing, oversight, and malpractice exposure, or as products, governed by design standards and defect liability? The product framework offers the more administrable path. Evaluating what a system was built to do and built to refuse is a question courts know how to answer. Asking whether software holds a professional license is not. By August 2026, when the EU’s high-risk provisions take effect, the design-regulation model is likely to set the global default, while American jurisdictions continue outsourcing their regulatory obligations to private plaintiffs.

References

- Nippon Life Insurance Company of America sues OpenAI for practising law without a licence, Canadian Lawyer. Reuters wire reporting on case 1:26-cv-02448, party identification, counsel details.

- Designed to Cross: Why Nippon Life v. OpenAI Is a Product Liability Case, Stanford Law School CodeX. Legal analysis of the boundary between information provision and unauthorized practice, product liability framing.

- AI on Trial: Nippon Life Takes OpenAI to Court Over Alleged Unauthorized Practice of Law, Gallagher Sharp LLP. Case overview, three causes of action, damages analysis, and precedential context.

- OpenAI Accused in Chicago Lawsuit of Acting as Unlicensed Legal Advisor, PYMNTS. Timeline of alleged ChatGPT conduct, FRCP 60(b) arguments, October 2024 policy revision.

- OpenAI Lawsuit Alleges ChatGPT Provided Unlicensed Legal Advice, JDJournal. State-level UPL regulatory framework and initial case reporting.

- Modernizing Unauthorized Practice of Law Regulations to Embrace Technology & Improve, National Center for State Courts. Guidance on updating state licensing statutes for AI-delivered legal services.

- High-level summary of the AI Act, EU Artificial Intelligence Act. Annex III high-risk classification for AI in legal services, August 2026 compliance timeline.

- AI and lawtech: government policy and regulation, The Law Society (UK). SRA position on AI and reserved legal activities under the Legal Services Act 2007.

- Law Society calls for urgent SRA advice on impact of Mazur on AI, Legal Futures. Coverage of UK judicial concerns over AI decision-making in litigation and regulatory response.