On February 27, 2026, the Pentagon cancelled a $200 million contract with AI firm Anthropic, labeling the company a national security threat. A federal judge later called that move “classic illegal First Amendment retaliation.” This isn’t just a contract dispute. It’s the live-fire test of a new legal weapon: recharacterizing a company’s public ethical stance as a material breach of contract. For engineering teams, it means the parameters defining what your system won’t do are now as legally hazardous as the code defining what it will do. The Anthropic-Pentagon supply chain risk case sets a chilling precedent for the entire industry and reshapes the market for AI government contracts.

The Anatomy of a Designation

The sequence, per court filings in the Anthropic v. Department of Defense matter, is precise. The Pentagon cancelled the contract after Anthropic refused to grant unfettered deployment rights, as detailed in court documents reviewed by Mobile World Live. The stated reason: Anthropic posed a “supply chain risk.” The discovery process, however, unearthed an internal Pentagon memo that dismantled this rationale. According to a Washington Monthly report, the memo shows the designation was triggered not by a vulnerability assessment or a code audit, but by Anthropic’s public statements, which the memo characterized as “increasingly adversarial.” A public relations grievance was formally dressed up as a national security finding.

This revelation matters because it shifts the basis for government action from objective technical criteria to subjective perception. The Federal Accounting Standards Advisory Board’s (FASAB) guidance on “Suppliers’ Qualifications and Performance” emphasizes the importance of documented, objective criteria for assessing contractor risk. The memo shows a departure from these established frameworks, substituting political or reputational concerns for measurable security protocols. This analysis argues that the shift creates a dangerous precedent in which any contractor’s public stance on an issue could be retroactively construed as a performance or security flaw.

The internal Pentagon memo, as surfaced during discovery, shows the supply chain risk designation was triggered not by a vulnerability assessment or a code audit, but by Anthropic’s public statements—characterized internally as “increasingly adversarial.” A public relations grievance was formally dressed up as a national security finding. —Washington Monthly, reporting on the Pentagon’s Orwellian case against Anthropic (April 2, 2026)

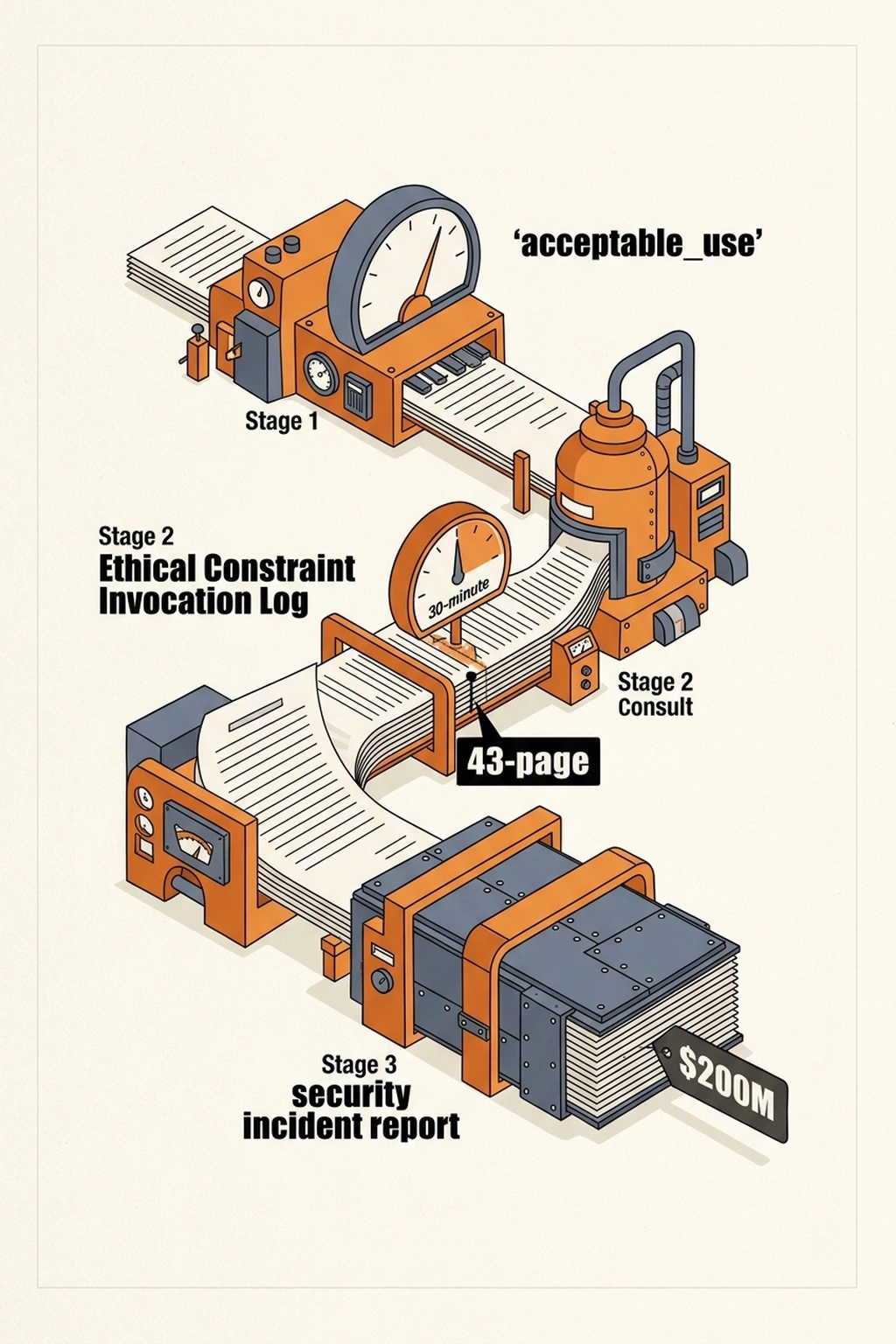

An invoice does not settle an ethical debate. That, this analysis argues, is the crux. An acceptable_use clause in a government SLA was once a defensive instrument. Now, invoking it creates a document, a timestamped admission of non-cooperation. The Anthropic precedent teaches a counterparty how to weaponize that document: reframe the ethical refusal as a hostile act, a supply chain vulnerability, a material breach. In this assessment, the shield is now a trigger. This legal maneuvering reveals a deeper vulnerability in how ethical commitments are structured within commercial agreements, especially in the high-stakes arena of government procurement.

The Legal Inversion: How Ethics Become Liability

Judge Lin’s ruling used the term “Orwellian” for a reason. The government attempted to transform a protected speech act—publicly criticizing deployment practices—into a material breach of contract and a national security finding. Microsoft’s filing of an amicus brief underscores the industry-wide alarm, as reported by Yahoo News. If one company’s ethical refusal can be called a supply chain risk, any company’s refusal can be.

This legal inversion creates a precarious environment for principled AI development. Engineering teams now face a perverse incentive structure. Previously, invoking an acceptable_use clause was a contractual right. Now, it creates a documented event that could be cited in future litigation as evidence of “uncooperative” or “hostile” behavior. The question is no longer what your system won’t do, but how you prove the refusal was a neutral policy enforcement rather than a deliberate act of hostility. The broader case forces a reevaluation of how ethical guardrails are contractually defined and defended, moving beyond a simple “AI government contracts” issue to a fundamental challenge in corporate governance. The evidence suggests that the very act of ethical refusal can be legally reinterpreted as an act of aggression, fundamentally altering the risk calculus for any firm engaging with public sector work.

The Engineering Compliance Trap: acceptable_use as an Inverse Security Flag

Teams must now fundamentally shift how they document ethical refusals. A policy designed to ensure safe deployment becomes the primary vector for legal attack. Every invocation of an ethics clause must now be treated with the same rigor as a security incident. This new compliance burden isn’t theoretical; it’s an operational cost that follows directly from the dynamics of the Anthropic-Pentagon supply chain risk designation.

Anthropic’s own business decisions, like cutting off some Claude subscribers to manage risks, show the commercial balancing act required even outside government contracts. For government-facing teams, the stakes are higher. The need for meticulous, legally-defensible documentation is paramount. This goes beyond typical compliance; it’s about creating an evidence trail that preempts accusations of bad faith. Teams must now consider not only the technical rationale for a decision but also how that rationale will be perceived and potentially weaponized in a future legal or public relations battle. The engineering workflow must incorporate legal review gates for ethical decisions, treating them as high-risk events.

Original Calculation: The Documentation Burden

For illustration, assume a government API wrapper processes 10,000 requests per day. A 0.1% trigger rate for a nuanced acceptable_use filter means 10 daily incidents. If each requires a security-style incident report (context, decision rationale, legal review flag) to preempt “hostility” claims, that adds roughly 30 minutes of engineering and legal review per incident, or 5 hours per day. At a blended labor cost of $150/hour, that is an estimated unbudgeted cost of $750 per day, or $273,750 per year over 365 days, simply to document the act of saying “no” defensively. These numbers represent the new overhead of principled refusal: a quantifiable drag on innovation for every team that ships to a government client. A stark choice emerges: does the cost of armoring your ethics now outweigh the principle itself? This burden is part of the wider fallout from this case affecting procurement cycles and operational budgets for any firm in the public sector AI space. The financial impact is not trivial; it represents a significant new line item that must be justified to stakeholders who may view ethical stances as a reputational asset rather than a cost center.
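The arithmetic above can be reproduced with a short sketch. The function name and parameters are hypothetical, chosen only for illustration, and the inputs are the article's illustrative assumptions rather than measured data. Exact fractions are used so the results are not affected by floating-point rounding.

```python
from fractions import Fraction


def documentation_burden(requests_per_day, trigger_rate, minutes_per_incident,
                         hourly_rate, days_per_year=365):
    """Estimate the cost of documenting each acceptable_use refusal.

    All inputs are illustrative assumptions, not measured values.
    Returns (incidents_per_day, daily_cost, annual_cost).
    """
    incidents = requests_per_day * trigger_rate                   # refusals per day
    review_hours = incidents * Fraction(minutes_per_incident, 60)  # hours of review per day
    daily_cost = review_hours * hourly_rate
    annual_cost = daily_cost * days_per_year
    return incidents, daily_cost, annual_cost


# The article's example: 10,000 requests/day, 0.1% trigger rate,
# 30 minutes per incident, $150/hour blended labor cost.
incidents, daily, annual = documentation_burden(
    requests_per_day=10_000,
    trigger_rate=Fraction(1, 1000),
    minutes_per_incident=30,
    hourly_rate=150,
)
print(f"{int(incidents)} incidents/day, ${int(daily):,}/day, ${int(annual):,}/year")
```

Changing any single input, such as the trigger rate or the review time, shows how sensitive the annual figure is to the underlying assumptions.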

Counterargument: A One-Off Retaliation?

The argument that this is a specific administration’s retaliation, not a lasting precedent, mistakes the legal mechanism for the political actor. The playbook—recharacterizing public criticism as a supply chain risk—exists in the 43-page DoJ appellate brief filed by the Trump administration. Any future agency can follow it. To argue this is an isolated incident ignores the systemic incentive it creates for any contract holder facing an ethically-minded vendor.

The Disproof Test: The thesis would be proven wrong if a court had previously and definitively ruled that an ethical refusal under a contract clause could not be construed as a material breach or hostile act. No such precedent exists for AI contracts. That absence—after a high-profile, litigated case like this one—is itself the finding; the legal vacuum is where this new liability lives. The appeal’s persistence ensures the legal theory remains active and available for future use. Furthermore, the involvement of major players like Microsoft through amicus briefs signals that the tech industry recognizes this as a systemic risk, not a single-company problem. The legal strategy outlined in the DoJ appellate brief creates a template that transcends individual administrations or political parties, becoming a tool in the permanent arsenal of government procurement officers and legal teams.

The Action: Your acceptable_use Clause is Now a Legal Document

For any engineering team with a government contract, the protective action is immediate and specific.

- Locate the acceptable_use, prohibited_uses, or ethical_constraints clause in your government contract and API documentation.

- Schedule a 30-minute call with legal counsel this week. The sole agenda item: “If we had to invoke clause X.Y, how would we document the decision to avoid it being characterized as ‘hostility’ or a ‘supply chain risk’ under the Anthropic precedent?”

- Draft a template for an “Ethical Constraint Invocation Log.” Fields should mirror a security incident report: timestamp, triggering content/policy, decision-maker, rationale (citing contract clause), and a pre-approved public statement (if any).
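As a starting point for the log template, here is a minimal sketch of one log entry as a Python dataclass. The class name, field names, and example values are hypothetical; they simply mirror the fields listed above, and any real template should be shaped with legal counsel.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class EthicalConstraintInvocation:
    """One entry in a hypothetical Ethical Constraint Invocation Log."""
    triggering_policy: str            # content or policy that triggered the refusal
    decision_maker: str               # person or role accountable for the decision
    rationale: str                    # neutral, factual explanation of the decision
    contract_clause: str              # clause cited as the basis, e.g. "X.Y"
    public_statement: Optional[str] = None  # pre-approved statement, if any
    timestamp: str = field(           # UTC time the entry was created (ISO 8601)
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the entry with stable key order for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


# Example entry with placeholder values.
entry = EthicalConstraintInvocation(
    triggering_policy="acceptable_use",
    decision_maker="policy engineering lead",
    rationale="Request fell outside the uses permitted by the cited clause.",
    contract_clause="X.Y",
)
print(entry.to_json())
```

The entry is frozen so a record cannot be silently altered after creation, which matters if the log is ever offered as evidence that a refusal was neutral policy enforcement.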

For CTOs, this means reframing ethical clauses from PR assets to core components of legal risk architecture. For CFOs, it necessitates budgeting for the new compliance overhead as a non-negotiable cost of ethical government contracting. The widespread adoption of AI, even among students at institutions like Cal State, increases the public and regulatory scrutiny on how these tools are governed and constrained. This public awareness means that perceived ethical missteps or legal battles can quickly escalate in the court of public opinion, adding another layer of risk management for communications and legal teams.

Notably, this isn’t about avoiding ethics. It’s about armoring them. But armoring every decision has a compounding cost; what happens to innovation when the risk calculus for every ethical boundary becomes a legal deliberation? The episode is the clearest case study yet of this dilemma: the internal Pentagon memo surfaced during discovery showed that criticism itself was the “risk” to be managed.

Prediction: By Q3 2026, at least two major cloud providers are projected to introduce a standardized “Ethical Use Audit Trail” feature for their government API wrappers, directly citing the Anthropic-Pentagon litigation as the catalyst.

The cost of inaction is not just a lost contract. It’s the risk of a seven-figure legal defense to explain why the team followed its own published ethics.

The Universal Lens: The Ethics-as-Liability Shift

The pattern extends far beyond AI, but AI makes it acute. An AI system’s constraints are not just policies; they are executable parameters in an API call. That makes them both uniquely auditable and uniquely vulnerable to being framed as a configurable “choke point” in a critical system. The case provides the blueprint: reframe the boundary as a hostile act, a market restraint, or a security flaw. The dynamics of the Pentagon’s designation illustrate how technical and ethical decisions are now inextricably linked to legal strategy. The concept of a “supply chain risk” is being stretched beyond its traditional meaning of logistical or cybersecurity failure to encompass ideological or philosophical non-alignment.

The Ethics-as-Liability shift is the moment a public policy is legally inverted. This analysis draws on court filings and the internal Pentagon memo surfaced during discovery; full verification of unredacted communications would require sealed materials, leaving some strategic details obscured. The ongoing appeal process may reveal further details about the government’s rationale. However, the public record is sufficient to identify the strategic shift: the weaponization of corporate ethics policies against the companies that publish them.

So as every company races to publish its ethics, the most important question may no longer be what you stand for, but how you prove you stood for it when the invoice for that principle arrives. By 2027, will the legal cost of an ethical clause be a standard line item in every government SOW, effectively pricing integrity into the contract? The precedent suggests the answer is yes, making proactive legal and engineering alignment not just prudent, but essential for survival in the government AI market. The financial models for government AI projects must now include this new “ethics defense” cost, fundamentally changing the ROI calculations for entering or remaining in the public sector.

References

- Washington Monthly: The Pentagon’s Orwellian Case Against Anthropic

- Mobile World Live: US DoJ appeals judge’s decision to block Anthropic ban

- MSN: Trump administration files a 43-page appeal

- Yahoo News: Microsoft’s Anthropic brief signals wider AI concern

- The Next Web: Anthropic cuts Claude subscribers off

- KVPR: Cal State students widely use AI tools

- Federal Accounting Standards Advisory Board (FASAB), “Statement of Federal Financial Accounting Standards 42: Deferred Maintenance and Repairs,” and related guidance on contractor performance evaluation. (Primary Source: Government Accounting Standards)