Affiliate Disclosure: This article contains affiliate links. We may earn a commission if you purchase through these links, at no additional cost to you. This helps us continue publishing free content. See our full disclosure. This report examines AI phishing attack statistics for 2025.

In 2024, security filters caught one phishing email every 42 seconds. By 2025, that interval had collapsed to one every 19 seconds, more than double the volume in a single year. What’s most alarming isn’t just the speed. It’s that the driver isn’t better attack infrastructure or more sophisticated criminal organizations. It’s artificial intelligence, now operating as what threat researchers call a “core capability” for generating, testing, and deploying campaigns at scale.

The data comes from Cofense’s 2026 annual report, “The New Era of Phishing: Threats Built in the Age of AI,” which analyzed threat intelligence across enterprise environments throughout 2025. Headlines miss something: this isn’t just faster phishing—it’s structurally different. AI hasn’t accelerated existing attack patterns. It has created entirely new ones.

The 19-Second Benchmark

The shift from a 42-second to a 19-second detection interval represents a 121% increase in attack frequency (42 ÷ 19 ≈ 2.21 times the 2024 rate). But raw volume tells only part of the story. The composition of those attacks has changed dramatically.

Traditional phishing relied on templates—mass-produced emails with identical links, attachments, and payloads. Security tools learned to recognize these patterns. A single URL reported to a blocklist could protect thousands of potential victims.

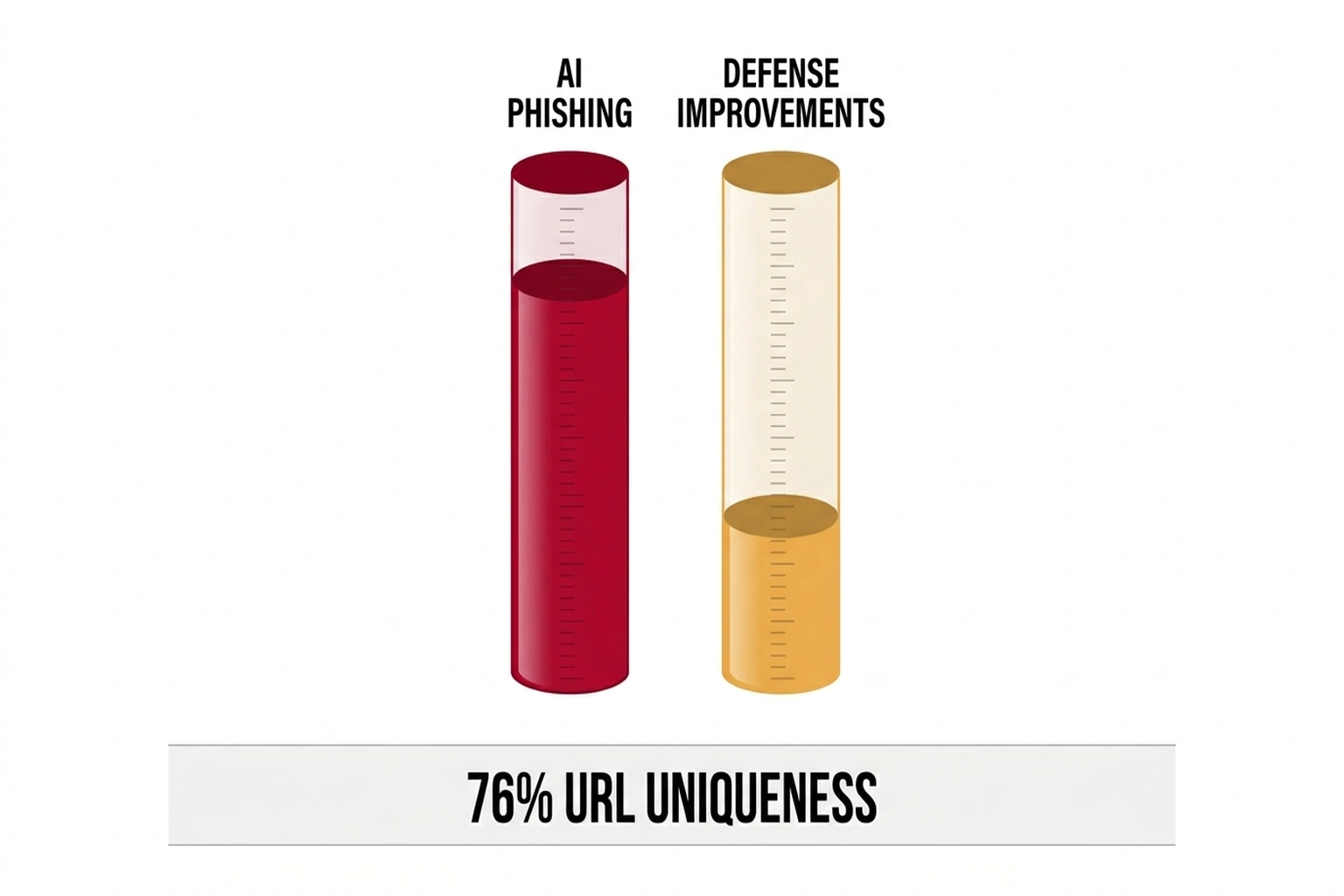

AI has broken that model. According to Cofense’s analysis, 76% of initial infection URLs observed in 2025 were unique. Rather than sending identical links to thousands of targets, threat actors now deploy AI systems that generate distinct URLs for each recipient. When one unique URL gets flagged, the others remain untouched.

This volume of unique addresses exposes a critical gap: traditional blocklists and threat feeds are updated only after a malicious URL is reported and verified, and protective measures often take hours or even days to propagate. During that lag, hundreds or thousands of new unique URLs slip through unchecked. As a result, AI now generates novel URLs faster than legacy defenses can list them.
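
A small simulation can make the blocklist gap concrete. The sketch below assumes a hypothetical campaign of 1,000 recipients and an exact-match blocklist that learns only the URLs users report. The `campaign_urls` and `blocked_share` helpers, the `example.test` domain, and the report count of 10 are illustrative assumptions, not details from the Cofense report.

```python
import random


def campaign_urls(recipients: int, unique_fraction: float, rng: random.Random) -> list[str]:
    """Build one URL per recipient; a share of them carry a per-recipient token."""
    urls = []
    for i in range(recipients):
        if rng.random() < unique_fraction:
            urls.append(f"https://login.example.test/a/{i:06d}")  # unique per recipient
        else:
            urls.append("https://login.example.test/a/shared")  # classic template link
    return urls


def blocked_share(urls: list[str], reported: int, rng: random.Random) -> float:
    """Fraction of URLs an exact-match blocklist stops after `reported` user reports."""
    blocklist = set(rng.sample(urls, reported))
    return sum(u in blocklist for u in urls) / len(urls)


rng = random.Random(0)
template = campaign_urls(1000, 0.0, rng)     # every recipient gets the same link
polymorphic = campaign_urls(1000, 1.0, rng)  # every recipient gets a distinct link

print(blocked_share(template, 10, rng))     # 1.0
print(blocked_share(polymorphic, 10, rng))  # 0.01
```

With a shared template, a single report protects everyone. With per-recipient URLs, each report protects exactly one inbox, which is why exact-match listing stops scaling.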

It’s polymorphism by default, not as an advanced technique, but as standard operating procedure.

Run the math on what polymorphism costs defenders. At one attack every 19 seconds, an enterprise with 10,000 employees faces approximately 4,547 phishing attempts per day (86,400 seconds ÷ 19). With 76% of URLs unique, that is 3,456 distinct malicious URLs daily, each requiring individual analysis, categorization, and blocklist submission. At an average analyst response time of 8 minutes per unique URL (Cofense), full coverage would require 461 analyst-hours per day, or roughly 58 full-time security analysts dedicated exclusively to URL triage. Most mid-market security teams have three to five analysts total. The coverage gap: 98.5% of unique phishing URLs go unexamined.

The report describes the transformation in stark terms: “Threat actors no longer experiment with AI in isolated ways. Instead, they use it as a core capability to generate, test, and deploy phishing campaigns at scale.” The result is “phishing that is faster, more adaptive, and more convincing than ever before, giving rise to polymorphic, multi-channel campaigns that continuously change their appearance while preserving the same malicious intent.”
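
The triage arithmetic in this section can be reproduced with a few lines of Python. This is a sketch under the article's own inputs (19-second interval, 76% unique URLs, 8 minutes per URL); the eight-hour shift and the `triage_load` and `coverage_gap` names are assumptions added for illustration. The coverage gap is sensitive to how much of an analyst's day actually goes to URL triage. With three to five analysts doing nothing else for a full shift, this arithmetic gives a gap of roughly 91% to 95%. A figure near 98.5% corresponds to about seven analyst-hours of triage per day across the whole team.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def triage_load(interval_s: float, unique_share: float, minutes_per_url: float,
                shift_hours: float = 8.0) -> dict[str, float]:
    """Daily phishing volume and the analyst effort needed to triage every unique URL."""
    attempts = SECONDS_PER_DAY / interval_s
    unique_urls = attempts * unique_share
    analyst_hours = unique_urls * minutes_per_url / 60
    return {
        "attempts_per_day": attempts,
        "unique_urls_per_day": unique_urls,
        "analyst_hours_per_day": analyst_hours,
        "analysts_needed": analyst_hours / shift_hours,  # assumes 8-hour shifts
    }


def coverage_gap(unique_urls: float, analysts: int, triage_hours_each: float,
                 minutes_per_url: float) -> float:
    """Share of unique URLs left unexamined given the team's daily triage capacity."""
    capacity = analysts * triage_hours_each * 60 / minutes_per_url
    return max(0.0, 1 - capacity / unique_urls)


load = triage_load(interval_s=19, unique_share=0.76, minutes_per_url=8)
for name, value in load.items():
    print(f"{name}: {value:,.0f}")
print(f"frequency increase vs. 42 s: {42 / 19 - 1:.0%}")

for team in (3, 5):
    gap = coverage_gap(load["unique_urls_per_day"], team, 8.0, 8)
    print(f"{team} analysts, full shift on triage: {gap:.1%} unexamined")
```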

The Rise of Conversational Phishing

Perhaps the most concerning shift involves what researchers term “conversational” phishing: emails that contain no malicious attachments, QR codes, or links. These messages account for 18% of all phishing attacks detected in 2025.

Conversational phishing exploits generative AI’s core strength: producing contextually appropriate, grammatically clean text in any language. Attacks typically initiate business email compromise (BEC) schemes, in which an apparent executive or vendor requests urgent payment or the disclosure of credentials via back-and-forth email threads.

Traditional defenses face their biggest blind spot here. Conventional email security filters work by scanning for suspicious elements, such as unusual attachments, blacklisted domains, or anomalous link structures. Conversational phishing contains none of these signals. A message from “ceo@company.com” asking finance to “process the attached invoice” triggers no alarms when there’s no attachment, no link, and the email address appears legitimate.
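
To illustrate why payload-oriented scanning comes up empty, here is a minimal sketch of such a filter built on Python's standard `email` package. The `payload_indicators` function, the blocklist entry, and the sample message are hypothetical; production filters are far more elaborate, but they look for the same categories of signal.

```python
import re
from email import message_from_string
from email.message import Message

URL_RE = re.compile(r"https?://\S+", re.IGNORECASE)
BLOCKED_DOMAINS = {"malicious.example.test"}  # hypothetical blocklist entry


def payload_indicators(msg: Message) -> list[str]:
    """Collect the signals a payload-based filter looks for: attachments, URLs, bad domains."""
    findings = []
    for part in msg.walk():
        if part.get_filename():
            findings.append(f"attachment:{part.get_filename()}")
        if part.get_content_type() == "text/plain":
            body = part.get_payload(decode=True).decode(errors="replace")
            findings += [f"url:{u}" for u in URL_RE.findall(body)]
    sender_domain = msg.get("From", "").rsplit("@", 1)[-1].strip("> ")
    if sender_domain in BLOCKED_DOMAINS:
        findings.append(f"blocked-domain:{sender_domain}")
    return findings


raw = """From: ceo@company.com
To: finance@company.com
Subject: Urgent payment

Are you at your desk? I need a vendor payment processed before noon.
"""
print(payload_indicators(message_from_string(raw)))  # []
```

The empty list is the point: the message carries intent, not payload, so a scanner of this kind has nothing to score.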

In one reported incident, a member of the finance team received an urgent request from an address that appeared to be the CEO’s, requesting approval for a wire transfer. With no obvious warning signs in the email and an air of authority in the language, the staff member proceeded, only discovering the deception after the funds had already been sent. Near-invisible attacks highlight just how easily sophisticated threats slip through conventional filters.

The 18% figure likely underrepresents the true scale. Security filters designed to detect malicious payloads are fundamentally unable to determine whether a conversational email constitutes social engineering. As AI-generated text becomes indistinguishable from human writing, the detection gap widens.

AI-Driven Personalization at Scale

Cofense documents a shift from spray-and-pray mass campaigns to highly personalized attacks—executed at scale through automation. One observed campaign deployed phishing websites that delivered different payloads depending on the device used to access them. Windows systems received one malware variant; macOS systems received another. Mobile users encountered credential-harvesting interfaces optimized for touchscreen use.

The underlying infrastructure used AI to detect browser signatures, device types, and geographic location, and then served customized attacks in real time. Another pattern involved brand impersonation that adapted to the victim’s software environment. A user accessing a phishing page in Chrome might see a spoofed Microsoft 365 login, while the same page accessed in Safari might display an Apple iCloud prompt. The goal is to maximize credibility by matching the expected visual environment.
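
From the defender's side, one way to surface this kind of adaptive delivery is to request the same URL under several client profiles in a sandbox and compare what comes back. The sketch below assumes the response bodies have already been captured; the profile names, the canned HTML, and the `divergent_profiles` helper are illustrative. Legitimate sites also vary content by device, so divergence is a prompt for closer analysis rather than a verdict.

```python
import hashlib


def fingerprints(responses: dict[str, bytes]) -> dict[str, str]:
    """Map each client profile to a SHA-256 digest of the body it was served."""
    return {profile: hashlib.sha256(body).hexdigest() for profile, body in responses.items()}


def divergent_profiles(responses: dict[str, bytes]) -> list[list[str]]:
    """Group client profiles by identical body; more than one group means divergence."""
    groups: dict[str, list[str]] = {}
    for profile, digest in fingerprints(responses).items():
        groups.setdefault(digest, []).append(profile)
    return sorted(groups.values())


# Canned bodies standing in for what a sandbox might capture from one URL.
captured = {
    "windows-chrome": b"<title>Sign in to Microsoft 365</title>",
    "macos-safari": b"<title>Sign in to iCloud</title>",
    "linux-curl": b"<title>Sign in to Microsoft 365</title>",
}
print(divergent_profiles(captured))
# [['macos-safari'], ['windows-chrome', 'linux-curl']]
```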

AI also enables what the report describes as scraping “publicly available data from the web in order to personalize attacks.” LinkedIn profiles, social media posts, and public company directories feed into systems that craft individually targeted messages—not through manual research, but through automated ingestion and synthesis.

The RAT Surge

Remote access tools (RATs), both legitimate and malicious, saw a 105% increase in detections during 2025. The category includes software such as ConnectWise ScreenConnect and LogMeIn’s GoTo Remote Desktop, which can be exploited through social engineering.

What sets this surge apart is the dramatic reduction in the required skill level of the attacker. Threat creation has become more democratized: recent cases have documented entry-level criminals using off-the-shelf remote support software to compromise victims by following step-by-step guides found online. In one high-profile incident, a group with no formal technical background launched a successful campaign against small businesses by tricking employees into installing legitimate RAT software, gaining system access without writing a single line of code.

This trend highlights how AI-powered automation and the exploitation of trusted workplace tools have enabled less-experienced attackers to launch sophisticated, high-impact operations. A typical attack involves convincing a target to download and run remote support software to “fix” a fabricated technical issue. Once installed, the attacker gains persistent access to the victim’s system, often with the same privileges as the logged-in user.

Researchers note that “to continue to execute campaigns involving a large number of systems infected by legitimate RATs, threat actors increasingly rely on automation and AI in their workflows.” A critical concern is that the technical sophistication required to compromise systems has declined dramatically. A threat actor no longer needs to write malware, establish command-and-control infrastructure, or develop evasion techniques. They need only convince a target to click “allow” on a legitimate remote support prompt.

The 105% increase reflects detected instances only. Undetected compromises, where users granted access without realizing the implications, likely exceed the reported numbers substantially.

Malware Delivery Via Phishing Increases 204%

Phishing’s role as a malware delivery mechanism intensified sharply. The Cofense report documents a 204% year-over-year increase in phishing emails delivering malware payloads in 2025. The combination of polymorphic URLs and AI-generated lures has made traditional blocklist defenses increasingly ineffective. When each URL is unique, when each email reads differently, and when payloads adapt to target environments, static detection struggles to keep pace.

Cofense recommends that “phishing must be analyzed after delivery, where behavioral context and human validation expose threats that evade static, perimeter-based controls.” In other words, assume some phishing will reach inboxes. Focus resources on identifying malicious behavior after delivery rather than on blocking every message at the perimeter.

The .es Domain Explosion

A striking tactical shift emerged around top-level domain selection. Use of Spain’s .es TLD for credential phishing increased 19-fold between Q4 2024 and Q1 2025, making it the third-most commonly abused domain extension. The surge likely reflects a combination of factors: relatively lax registration requirements compared to .com or .org, less scrutiny from security vendors focused on higher-profile TLDs, and the visual credibility of a country-code domain for targeting Spanish-speaking populations or organizations with Spanish business connections.

Domain registration patterns reveal AI’s operational footprint. A 19-fold increase over a single quarter suggests coordinated, automated registration campaigns rather than gradual organic growth. AI systems can identify underutilized TLDs, register domains at scale, and rotate through them faster than security vendors can build detection rules.

A Framework for Understanding the Shift: The Phishing Velocity Taxonomy

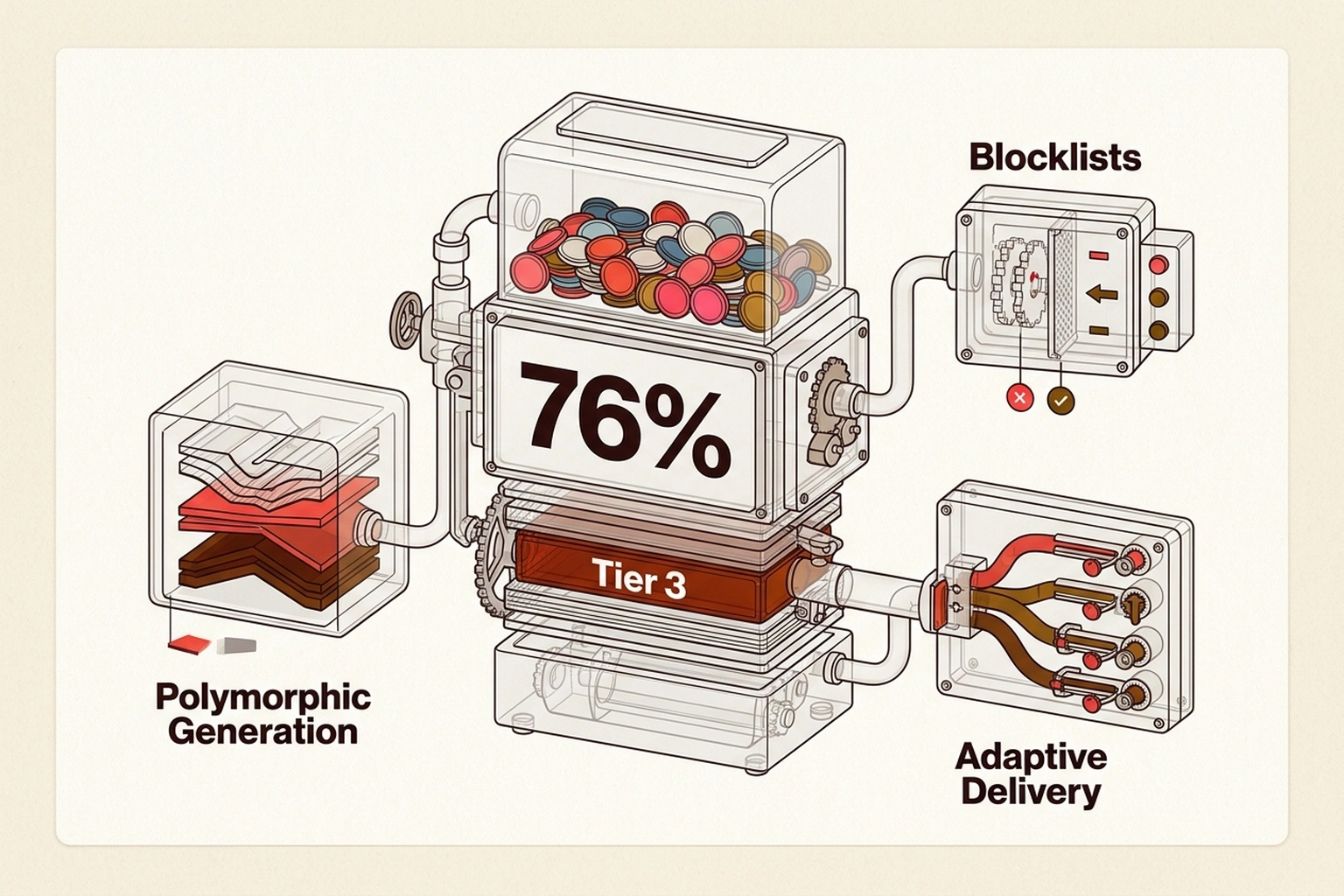

Comparing 2024 to 2025 suggests a useful framework for understanding how AI has changed threat dynamics. This analysis introduces the Phishing Velocity Taxonomy, an original framework synthesizing research from Cofense, Microsoft, and industry reports into four tiers of AI integration in phishing operations:

| Tier | Capability | What AI Does | Defense Implication | 2025 Status |
|---|---|---|---|---|
| 1 | AI-Assisted Writing | Composes convincing emails in target languages | Traditional filters still effective | Ubiquitous |
| 2 | Polymorphic Generation | Creates unique URL/text/image per target | Blocklists become ineffective (76% unique URLs) | Common |
| 3 | Adaptive Delivery | Detects device/browser/location, serves custom payload | Point-in-time scanning fails | Emerging to common |
| 4 | Autonomous Campaign | Full lifecycle: target ID → message → timing → adaptation | Human-speed defense cannot match | Emerging in sophisticated campaigns |

Most organizations’ security posture assumes Tier 1 or early Tier 2 threats. Available 2025 data indicates that Tier 3 is now common, with Tier 4 emerging in sophisticated campaigns. This mismatch between the assumed threat level and the actual threat capability accounts for a significant portion of the breach patterns observed in recent months.

What Traditional Defenses Miss

The 19-second detection rate reflects what security filters catch, not what slips through. Several structural blind spots become apparent:

Language-agnostic attacks. Conversational phishing with no links or attachments produces minimal signals for automated detection. An email is text. Text appears legitimate. There’s nothing to block.

Reputation lag. A newly registered domain has no reputation score. A unique URL has no history in the blocklist. By the time a URL is identified as malicious and added to threat feeds, AI has generated thousands of replacements.

Legitimate tool abuse. RATs and remote support software operate through sanctioned channels. Detecting malicious use requires behavioral analysis—distinguishing legitimate IT support activity from social engineering attacks. Perimeter defenses don’t see the difference.

Multi-stage attacks. An initial email might contain only a benign-seeming conversation. Actual compromise happens through follow-up messages, phone calls, or credential entry on external sites. Point-in-time scanning misses the attack chain.

The Cofense report’s conclusion that “phishing must be analyzed after delivery” acknowledges these limitations. However, post-delivery analysis requires different tools, expertise, and staffing than traditional perimeter defense.

“AI Will Also Fix This” — Why the Defense Argument Falls Short

Microsoft’s 2025 Digital Defense Report argues that the same AI driving attacks will power stronger defenses — citing a 54% click rate for AI-generated phishing versus 12% for traditional campaigns, but projecting that AI-powered behavioral detection will close the gap (Microsoft). The argument has structural merit: defensive AI has access to an organization’s full communications baseline, giving it a context advantage that attackers lack. Security vendors including Abnormal Security and Proofpoint report detection improvements of 40-60% after deploying behavioral AI.

The counter-evidence sits in the math above. Defensive AI improves detection rates within a fixed scanning window. Offensive AI expands the attack surface faster than detection scales. A 50% improvement in detection against 4,547 daily attacks still leaves 2,274 threats unexamined — and at 76% URL uniqueness, each one is structurally distinct from the others. The asymmetry is not capability but arithmetic: defense must catch every variant, while offense needs only one to succeed, per Microsoft.

Critics of this framing point out that the asymmetry argument applies equally to pre-AI phishing — defenders have always faced a higher burden than attackers — yet enterprise security tooling has historically kept breach rates manageable despite that structural disadvantage. The strongest version of this counterargument holds that behavioral AI, trained on an organization’s full communication history, introduces a qualitative shift rather than just an incremental improvement: it detects intent and anomaly rather than signatures, which may prove more durable against polymorphic generation than the URL-triage math suggests. If behavioral baselines tighten faster than attack personalization improves, the coverage gap could narrow even as raw attack volume continues to climb.

Defensive Implications

Organizations facing AI-powered phishing need to reconsider assumptions about email security. Several practical shifts emerge from the 2025 data:

Behavioral analysis over signature matching. Tools that evaluate how emails function—rather than what they contain—better address conversational and multi-stage attacks. Solutions like Abnormal Security and Proofpoint focus on behavioral signals rather than static indicators. These platforms analyze communication patterns to detect anomalies that traditional filters miss, making them particularly effective against conversational phishing, where no malicious payload is present.
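
As a rough illustration of what "behavioral signals" can mean, the sketch below compares an incoming message against a per-sender baseline instead of scanning for payloads. It is a toy under stated assumptions: the `SenderBaseline` fields, the keyword lists, and the signal names are hypothetical, and nothing here describes how Abnormal Security or Proofpoint actually work. Commercial systems use learned models over much richer context.

```python
from dataclasses import dataclass, field

PAYMENT_TERMS = ("wire transfer", "invoice", "payment", "bank details", "gift card")
URGENCY_TERMS = ("urgent", "immediately", "today", "before noon", "asap")


@dataclass
class SenderBaseline:
    """What the organization has previously observed from one claimed sender."""
    known_reply_to: set[str] = field(default_factory=set)
    past_payment_requests: int = 0
    messages_seen: int = 0


def anomaly_signals(baseline: SenderBaseline, reply_to: str, body: str) -> list[str]:
    """Return the ways this message departs from the sender's usual behavior."""
    text = body.lower()
    signals = []
    if baseline.messages_seen == 0:
        signals.append("first-time sender")
    if reply_to and reply_to not in baseline.known_reply_to:
        signals.append("unfamiliar reply-to address")
    asks_for_money = any(term in text for term in PAYMENT_TERMS)
    if asks_for_money and baseline.past_payment_requests == 0:
        signals.append("payment request from a sender who has never made one")
    if asks_for_money and any(term in text for term in URGENCY_TERMS):
        signals.append("payment request combined with urgency")
    return signals


ceo = SenderBaseline(known_reply_to={"ceo@company.com"}, messages_seen=240)
for signal in anomaly_signals(ceo, "ceo@company-mail.example.test",
                              "Please process a wire transfer today."):
    print(signal)
```

A link-free, attachment-free message still produces three signals here, because the check is about who is asking for what, not about what the message contains.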

Identity verification for high-value requests. Conversational BEC attacks exploit trust in email as an identity signal. Implementing out-of-band verification for financial transactions, credential changes, and sensitive data requests creates friction that interrupts attack chains. For example, mandate voice confirmation by phone for any wire transfer request exceeding $10,000, so that critical actions cannot be completed solely via email.
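
The wire transfer rule above is simple enough to encode as a policy check in a payment workflow. This is a minimal sketch: the `WireRequest` type and `may_release` function are hypothetical names, and the $10,000 threshold is the example figure from the text, not a recommended value.

```python
from dataclasses import dataclass
from decimal import Decimal

WIRE_THRESHOLD = Decimal("10000")  # example threshold from the text


@dataclass(frozen=True)
class WireRequest:
    amount: Decimal
    phone_confirmed: bool  # callback placed to a number already on file, not one from the email


def may_release(request: WireRequest) -> bool:
    """Allow release only if large transfers carry an out-of-band confirmation."""
    if request.amount > WIRE_THRESHOLD:
        return request.phone_confirmed
    return True


print(may_release(WireRequest(Decimal("25000"), phone_confirmed=False)))  # False
print(may_release(WireRequest(Decimal("25000"), phone_confirmed=True)))   # True
print(may_release(WireRequest(Decimal("800"), phone_confirmed=False)))    # True
```

The design choice that matters is the callback number: it has to come from records that predate the request, since an attacker controls everything inside the email thread.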

Post-delivery remediation capabilities. Assume some malicious emails will reach inboxes. Automated tools that can identify and remove threats after delivery—and alert potentially affected users—provide a necessary backstop.
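
A post-delivery sweep can be sketched as follows: when a host is confirmed malicious, search already-delivered mail for any URL on that host, quarantine the matches, and return the recipients to alert. The in-memory list stands in for whatever search and quarantine API a mail platform provides; `DeliveredMessage`, `retro_quarantine`, and the sample addresses are hypothetical. Matching on host rather than full URL is a deliberate choice here, since per-recipient URLs defeat exact matching.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass
class DeliveredMessage:
    message_id: str
    recipient: str
    urls: tuple[str, ...]
    quarantined: bool = False


def retro_quarantine(mailbox_index: list[DeliveredMessage], bad_host: str) -> list[str]:
    """Quarantine delivered messages linking to bad_host; return recipients to alert."""
    alerted = []
    for msg in mailbox_index:
        hosts = {urlsplit(url).hostname for url in msg.urls}
        if not msg.quarantined and bad_host in hosts:
            msg.quarantined = True
            alerted.append(msg.recipient)
    return alerted


delivered = [
    DeliveredMessage("m1", "ana@company.com", ("https://portal.example.test/a/000123",)),
    DeliveredMessage("m2", "raj@company.com", ("https://portal.example.test/a/000456",)),
    DeliveredMessage("m3", "li@company.com", ("https://intranet.company.com/wiki",)),
]
print(retro_quarantine(delivered, "portal.example.test"))
# ['ana@company.com', 'raj@company.com']
```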

Cost of inaction. IBM’s 2025 Cost of a Data Breach Report places the average phishing-originated breach at $4.88 million. At 4,547 daily attempts against a 10,000-person enterprise and even a conservative 0.1% success rate, that is about 4.5 successful compromises per day, or roughly 1,643 per year. If just 1% escalate to reportable breaches (about 16 per year), the annual exposure is approximately $80 million per enterprise. The 98.5% coverage gap calculated above is not an abstraction: it is the surface area through which those breaches arrive.
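
The exposure estimate is a chain of multiplications, so it is worth seeing how sensitive it is to the assumed success rate. The sketch below uses the article's inputs; the `annual_exposure` function and the alternative success rates are illustrative assumptions. Carrying full precision gives about 1,660 compromises and roughly $81 million at a 0.1% success rate; the article's 1,643 and $80 million come from rounding the daily figure to 4.5 first.

```python
def annual_exposure(attempts_per_day: float, success_rate: float,
                    breach_share: float, cost_per_breach: float) -> tuple[float, float, float]:
    """Return (compromises per year, reportable breaches per year, dollar exposure)."""
    compromises = attempts_per_day * success_rate * 365
    breaches = compromises * breach_share
    return compromises, breaches, breaches * cost_per_breach


attempts = 86_400 / 19  # one attempt every 19 seconds

for success_rate in (0.0005, 0.001, 0.002):
    compromises, breaches, exposure = annual_exposure(attempts, success_rate, 0.01, 4.88e6)
    print(f"{success_rate:.2%} success: {compromises:,.0f} compromises, "
          f"{breaches:.1f} breaches, ${exposure / 1e6:.1f}M")
```

Because every factor is multiplicative, halving or doubling the success rate halves or doubles the exposure, so the estimate is best read as an order of magnitude rather than a forecast.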

Training that reflects current threats. Users trained to spot “suspicious links” and “unusual attachments” may be unprepared for grammatically correct, personalized messages containing no obvious red flags. Education should emphasize verification behaviors rather than threat identification.

Endpoint-level URL filtering. With 76% of phishing URLs now unique, blocklist-dependent defenses lag behind generation speed. Browser and network-level tools like NordVPN’s Threat Protection block known malicious domains and phishing sites before they load, adding an automated layer that catches threats signature-based filters miss.

The Trajectory Ahead

If 2025’s 121% increase in phishing frequency represents AI’s initial integration into attack operations, the coming years will likely show acceleration. The infrastructure investment required to move from Tier 2 to Tier 3 operations is modest: improved device detection, enhanced payload libraries, and more sophisticated response handling. The barrier to Tier 4 is primarily algorithmic rather than resource-based.

The 19-second benchmark won’t hold: based on current trajectory, attack frequency is on pace to reach one every 10–12 seconds by the end of 2026 as polymorphic generation becomes universal and Tier 3 operations become the baseline rather than the exception. Organizations that have not yet deployed behavioral AI-based email security and out-of-band verification for financial requests should treat those two controls as immediate priorities — not multi-year roadmap items. The data from 2025 is unambiguous: the defenses that worked against signature-based phishing are structurally mismatched to AI-generated, polymorphic campaigns, and the cost of that mismatch compounds with every quarter that closes without remediation.

References

- Cofense Report Reveals AI-Powered Phishing Accelerated to One Attack Every 19 Seconds — Cofense Annual Report 2026 with 19-second attack rate, 76% unique URLs, 18% conversational phishing, and 204% malware increase statistics.

- AI-Generated Code Used in Phishing Campaign Blocked by Microsoft — Infosecurity Magazine coverage of AI-generated phishing code patterns and SVG-based credential phishing campaigns.

- 2025 Microsoft Digital Defense Report (MDDR) — Microsoft report documenting AI-automated phishing trends and 54% click rates vs 12% for traditional phishing.

- Spain TLD’s Recent Rise to Dominance — Cofense research on 19-fold increase in .es domain abuse for credential phishing between Q4 2024 and Q1 2025.

- Cofense Uncovers Dramatic Rise in Phishing Attacks Using Spain’s .es Domains — SiliconANGLE coverage of the 19x surge in malicious .es domain campaigns.