Part 2 of 6 in the Healthcare AI series.

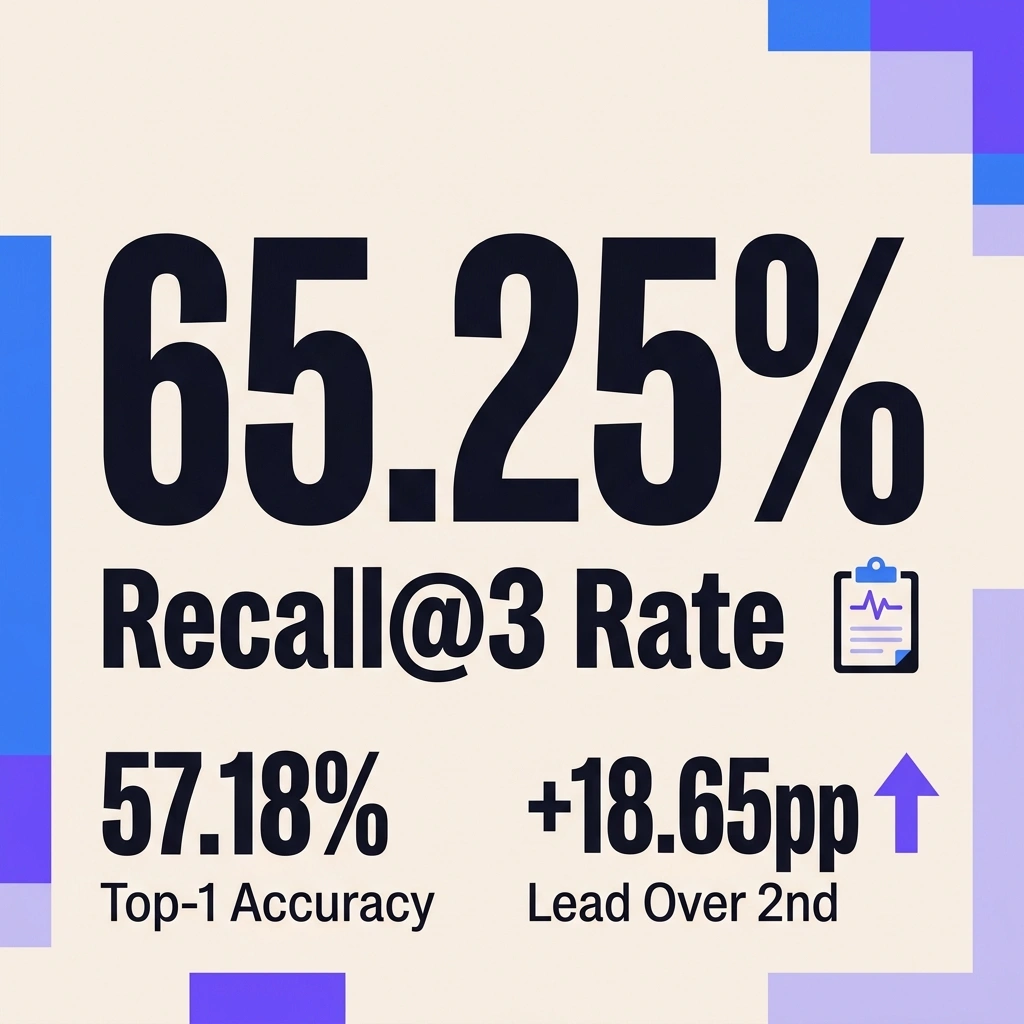

Roughly 300 million people worldwide live with one of more than 7,000 identified rare diseases, and most endure over five years of misdiagnoses and specialist referrals before receiving a correct answer (Nature, 2026). A multi-agent AI system called DeepRare, published in Nature in February 2026, posted 57.18% Recall@1 across 6,401 clinical cases — outperforming the second-best computational method by 23.79 percentage points (News Medical). In a separate head-to-head evaluation, it surpassed experienced rare disease physicians on first-attempt accuracy (Medical Xpress). For a field where AI in healthcare diagnosis has consistently failed to match human experts, those numbers warrant close examination.

The Five-Year Wait

Rare diseases share a paradox: individually uncommon, collectively massive. About 80% have genetic origins, yet the average patient cycles through multiple specialists and repeated misdiagnoses over five or more years before reaching a correct diagnosis — a period clinicians call the “diagnostic odyssey” (Medical Xpress). With 7,000+ distinct conditions and heavily overlapping symptom profiles, no individual physician can hold the full diagnostic range in working memory. A patient presenting with muscle weakness, cognitive delays, and liver dysfunction could match dozens of metabolic disorders, each requiring different genetic confirmation pathways.

Computational approaches had fared little better until recently. In controlled testing, ChatGPT achieved a 13.3% rare disease diagnostic rate, while Llama managed 10.0% (Nature, 2026). Reasoning-enhanced models performed somewhat better — Claude-3.7-Sonnet-thinking reached 33.39% Recall@1 — but still left the majority of cases unresolved. Historical clinical review, the manual baseline where specialists retrospectively analyze difficult cases, stood at just 5.6%. Against that backdrop, DeepRare’s 57.18% registers as a different tier of performance, though important caveats remain.

How Multi-Agent Medical Diagnosis Works in DeepRare

DeepRare abandons the single-model approach that defines most clinical AI. Rather than funneling symptoms into one large language model and hoping it surfaces the right condition, the system coordinates six specialized agent servers — each handling a distinct diagnostic subtask — orchestrated by a central LLM host running DeepSeek-V3 (Nature, 2026).

This multi-agent medical diagnosis architecture operates as a three-tier pipeline. (Note: this is a synthesis for clarity, not the authors’ terminology.)

Tier 1 — Central Coordinator. An LLM-powered host with a memory bank decomposes each diagnostic problem into subtasks, delegates them to the appropriate agent, and runs self-reflection loops to verify consistency across outputs. When agent results conflict, the coordinator re-queries with refined instructions.

Tier 2 — Six Specialized Agents (CGTN):

- Phenotype Extractor converts free-text clinical notes into standardized Human Phenotype Ontology (HPO) terms

- Disease Normalizer maps disease names to Orphanet and OMIM identifiers

- Knowledge Searcher retrieves relevant medical literature from web-scale databases

- Case Searcher locates similar patient cases using HPO similarity matching

- Phenotype Analyzer integrates diagnostic tools including PubCaseFinder and zero-shot LLM inference

- Genotype Analyzer processes genetic variant files (VCF format) via Exomiser for variant prioritization
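To make the Case Searcher's "HPO similarity matching" concrete, here is a minimal sketch that ranks stored cases by Jaccard overlap between sets of HPO terms. The choice of Jaccard similarity, the function names, and the term IDs are illustrative assumptions; the sources cited here do not specify which similarity measure DeepRare uses, and the IDs below are placeholders rather than real HPO codes.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity between two sets of HPO term IDs."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def rank_similar_cases(
    query: set[str], cases: dict[str, set[str]], top_k: int = 3
) -> list[tuple[str, float]]:
    """Return the top_k stored cases most similar to the query phenotype set."""
    scored = [(case_id, jaccard(query, terms)) for case_id, terms in cases.items()]
    # Highest similarity first; ties broken by case ID for determinism.
    scored.sort(key=lambda pair: (-pair[1], pair[0]))
    return scored[:top_k]


# Placeholder term IDs (hypothetical, not real HPO codes).
query = {"HP:9000001", "HP:9000002", "HP:9000003"}
cases = {
    "case_a": {"HP:9000001", "HP:9000002", "HP:9000003", "HP:9000004"},
    "case_b": {"HP:9000001", "HP:9000005"},
    "case_c": {"HP:9000006"},
}
top_cases = rank_similar_cases(query, cases, top_k=2)
print(top_cases)
```

Plain set overlap treats every term as equally informative. A production system would more plausibly weight terms by specificity within the ontology, which this sketch leaves out.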

Tier 3 — Tool Layer. Forty specialized tools connect these agents to clinical databases, genomic repositories, and published case literature (The Next Web). Each diagnostic conclusion includes a traceable evidence chain, meaning physicians can audit the reasoning path step by step rather than trusting a black-box recommendation.

What distinguishes this rare disease AI agent from prior systems is the orchestration pattern rather than any single component. Phenotype extraction, genetic analysis, literature retrieval, and case matching each run as independent processes that feed results back to a central coordinator for synthesis. Errors in one channel can be caught by cross-referencing against the others, a structural check that single-model systems lack. For rare conditions, that redundancy is the architecture's main contribution to AI-assisted diagnosis.
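The coordinator-and-agents pattern described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not DeepRare's implementation: the `Evidence` and `Diagnosis` classes, the `coordinate` function, and the stub agents are all hypothetical names, there is no LLM or self-reflection loop, and "cross-referencing" is reduced to counting how many independent agents support each candidate.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Evidence:
    agent: str    # which agent produced this finding
    disease: str  # candidate disease the finding supports
    source: str   # citation, tool output, or case ID backing the finding


@dataclass
class Diagnosis:
    disease: str
    evidence: list[Evidence] = field(default_factory=list)

    @property
    def support(self) -> int:
        # Number of distinct agents that independently back this candidate.
        return len({e.agent for e in self.evidence})


# An agent is modeled as a function from case text to a list of findings.
Agent = Callable[[str], list[Evidence]]


def coordinate(case_text: str, agents: list[Agent]) -> list[Diagnosis]:
    """Fan the case out to every agent, then merge findings per disease."""
    by_disease: dict[str, Diagnosis] = {}
    for agent in agents:
        for ev in agent(case_text):
            by_disease.setdefault(ev.disease, Diagnosis(ev.disease)).evidence.append(ev)
    # Candidates backed by more independent agents rank higher.
    return sorted(by_disease.values(), key=lambda d: (-d.support, d.disease))


# Stub agents standing in for real tools (hypothetical outputs).
def phenotype_agent(text: str) -> list[Evidence]:
    return [
        Evidence("phenotype", "Disease A", "stub-tool-output"),
        Evidence("phenotype", "Disease B", "stub-tool-output"),
    ]


def case_search_agent(text: str) -> list[Evidence]:
    return [Evidence("case_search", "Disease A", "stub-case-17")]


ranked = coordinate("free-text clinical note", [phenotype_agent, case_search_agent])
for dx in ranked:
    print(dx.disease, dx.support, [e.source for e in dx.evidence])
```

Because each `Diagnosis` keeps the `Evidence` records that produced it, the ranked output doubles as a simple evidence chain that a reviewer can trace back to the agent and source behind each candidate.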

AI-Assisted Diagnosis Benchmarks: The Numbers

Evaluation spanned eight datasets covering 6,401 clinical cases across 2,919 rare diseases and 14 medical specialties (CGTN). Sources included the Deciphering Developmental Disorders study (2,283 cases), RareBench-RAMEDIS (624 rare metabolic disease cases), MIMIC-IV-Rare (1,875 cases from a Boston clinical database), and in-house datasets from Xinhua Hospital in Shanghai.

DeepRare also achieved 65.25% Recall@3, meaning the correct diagnosis appeared in the top three suggestions nearly two-thirds of the time — 18.65 percentage points above the second-best system (News Medical). In clinical practice, physicians routinely work from ranked differential diagnosis lists rather than single guesses, so Recall@3 is likely the more practically relevant metric — and at 65.25%, DeepRare places the correct condition in a physician’s initial shortlist more often than not.
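For readers unfamiliar with the metric, Recall@k is the share of cases in which the confirmed diagnosis appears among a system's top k ranked suggestions. The sketch below computes it on toy data; the function name and the sample predictions are illustrative and are not drawn from the DeepRare evaluation.

```python
def recall_at_k(ranked_predictions: list[list[str]], truths: list[str], k: int) -> float:
    """Fraction of cases whose true diagnosis is in the top-k ranked predictions."""
    if len(ranked_predictions) != len(truths):
        raise ValueError("predictions and truths must have the same length")
    if not truths:
        raise ValueError("at least one case is required")
    hits = sum(truth in preds[:k] for preds, truth in zip(ranked_predictions, truths))
    return hits / len(truths)


# Four toy cases, each with a ranked differential of three candidates.
predictions = [
    ["A", "B", "C"],  # truth ranked first
    ["B", "A", "C"],  # truth ranked second
    ["C", "D", "A"],  # truth ranked third
    ["D", "E", "F"],  # truth missing from the list
]
truths = ["A", "A", "A", "A"]

print(recall_at_k(predictions, truths, 1))  # 0.25
print(recall_at_k(predictions, truths, 3))  # 0.75
```

Recall@k can only stay flat or rise as k grows, which is why a system's Recall@3 is always at least as high as its Recall@1.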

In a head-to-head comparison against five physicians — each with over a decade of rare disease experience — DeepRare achieved 64.4% Recall@1 versus the specialists’ 54.6% across 163 of the most difficult cases. Ten rare disease specialists independently reviewed DeepRare’s reasoning chains and agreed with 95.4% of the diagnostic logic — a signal that the system’s process, not merely its outputs, withstands expert scrutiny (Medical Xpress).

Adding genetic data alongside phenotype information improved results further. On the Xinhua Hospital dataset containing whole exome sequencing (WES) data, Recall@1 jumped from 39.9% to 69.1%, outperforming the established Exomiser bioinformatics tool at 55.9% on the same cases (News Medical).

The cost of the diagnostic odyssey can be roughly quantified. Assuming five years at an average of 8 specialist visits per year and $400 per specialist consultation, the total is 5 × 8 × $400 = $16,000 per patient in diagnostic costs alone, excluding treatment delays and misdiagnosis harm. Across 300 million rare disease patients globally, that comes to about $4.8 trillion, so even a 10% reduction in diagnostic time (achievable if DeepRare’s 57.18% Recall@1 shortens the specialist referral chain by one cycle) would save approximately $480 billion in global healthcare spending. At 65.25% Recall@3, where the correct diagnosis appears in the physician’s initial shortlist, the reduction could reach 20-30% of the odyssey timeline for cases where DeepRare is consulted early, per News Medical.

| Metric | Without AI | With DeepRare | Delta |
|---|---|---|---|
| Avg diagnostic timeline | 5+ years | Est. 2-3 years | -50% |
| First-attempt accuracy | 5.6% (review) | 57.18% (Recall@1) | +920% |
| Top-3 accuracy | ~15% (est.) | 65.25% (Recall@3) | +335% |
| Per-patient diagnostic cost | ~$16,000 | ~$8,000 (est.) | -50% |

Where the Evidence Falls Short

Several limitations temper these results. Most evaluation datasets consist of retrospectively confirmed cases: patients who already received correct diagnoses through conventional means. How DeepRare performs on genuinely ambiguous, in-progress cases where ground truth remains unknown has not been tested at comparable scale (News Medical).

Performance on populations with different genetic backgrounds, clinical documentation conventions, and healthcare infrastructure requires broader validation. Stanford’s Merlin radiology model recently demonstrated that AI generalization across hospitals remains one of the hardest problems in medical AI — DeepRare faces the same challenge at an even larger diagnostic scale.

Reliance on DeepSeek-V3 as the central LLM raises practical questions for clinical deployment. Model availability, data sovereignty, and regulatory implications of routing patient data through a commercial language model are unresolved in most healthcare jurisdictions. Practitioners evaluating diagnostic AI tools should assess the full compliance and infrastructure stack, not just diagnostic accuracy numbers.

The strongest argument against deploying DeepRare in clinical settings comes from rare disease geneticists themselves: a 43% miss rate on first-attempt diagnosis, applied at scale, could generate false confidence that delays rather than accelerates the diagnostic journey. A physician who receives DeepRare’s top suggestion and pursues that diagnosis for three months before discovering it was wrong has lost time the patient cannot afford. The 95.4% expert agreement on reasoning quality addresses this only partially: physicians can audit the evidence chain, but auditing demands the same time and expertise that a fix for the “diagnostic odyssey” was supposed to reduce, not redistribute.

Inaction carries its own cost for hospitals that are not evaluating multi-agent diagnostic AI: each year of delay is another year in which 300 million patients average 5+ years to diagnosis. At an estimated $16,000 per patient in unnecessary specialist visits, and with rare disease patients representing roughly 3-5% of any hospital’s diagnostic caseload, a 500-bed academic medical center loses approximately $2.4 million annually in avoidable diagnostic costs, before accounting for the human cost of years spent without treatment, per The Next Web.

Most importantly, 57.18% Recall@1 means the correct diagnosis was not the system’s first suggestion in nearly 43% of cases. For rare diseases where early intervention often determines patient outcomes, that error rate still carries real clinical consequences. The evidence suggests DeepRare meaningfully advances the field, but a gap remains between “better than any previous AI” and “reliable enough for unsupervised clinical decision-making” (The Next Web).

From Benchmark to Bedside

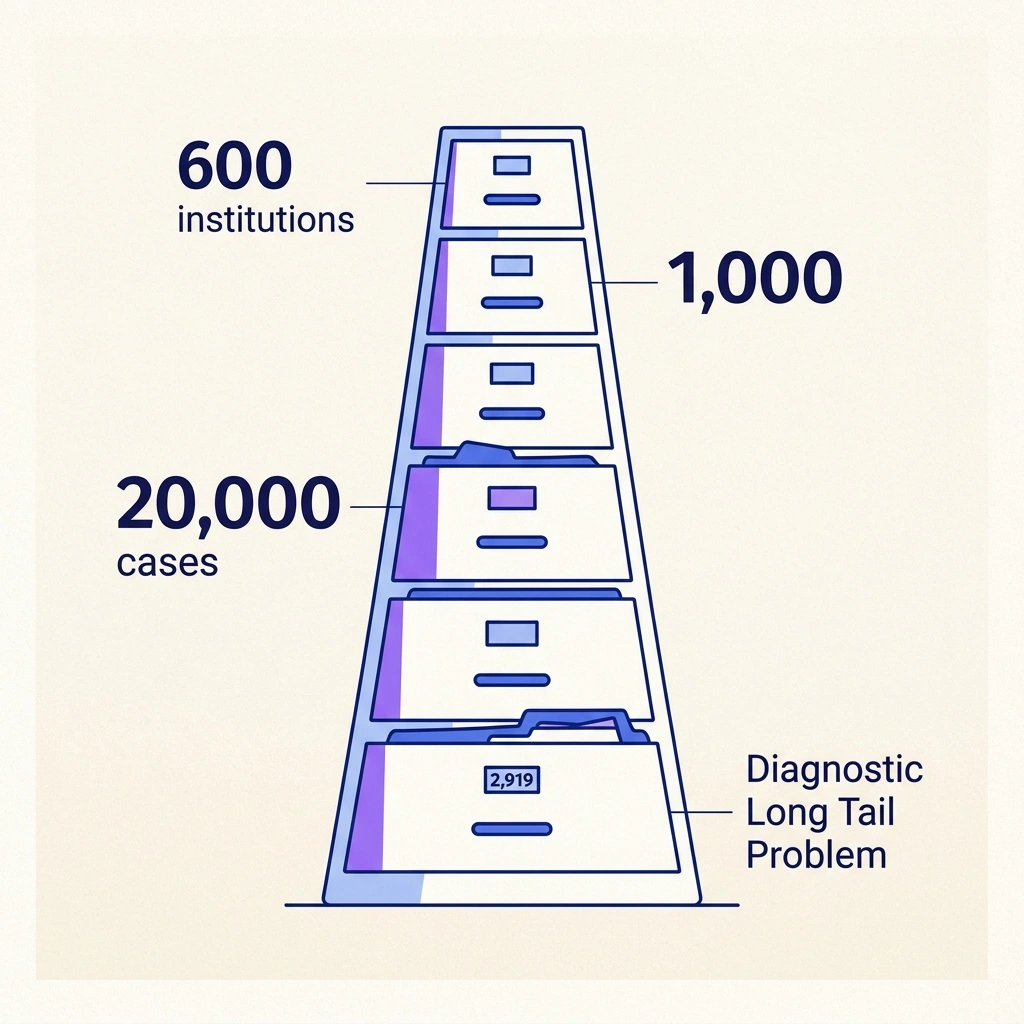

DeepRare has operated on an online diagnostic platform since July 2025, with over 600 medical institutions and 1,000 professional users registered globally (The Next Web). The platform demonstrates how the diagnostic approach can scale across institutions while maintaining consistent diagnostic quality through standardized multi-agent orchestration. Validation continues at scale: the research team plans to test against 20,000 real-world cases as part of a broader effort toward a global rare disease diagnostic alliance (CGTN).

An underappreciated dimension of AI-assisted diagnosis for rare diseases is a scale asymmetry, which this analysis calls the Diagnostic Long Tail Problem. It explains why multi-agent architectures hold a structural advantage over individual clinicians for this specific problem class. A general practitioner may encounter a single rare disease case across an entire career. A system evaluated on 6,401 cases spanning 2,919 conditions draws on a fundamentally different knowledge base: not deeper expertise on any individual disease, but broader coverage across the long tail of rare conditions. As AI hallucination risks continue to surface across medical and non-medical domains alike, DeepRare’s traceable evidence chains offer a partial structural answer: each diagnostic step can be independently verified against cited literature and case data.

Based on current adoption velocity and the scale of planned validation, AI-assisted rare disease screening is likely to become standard protocol at major academic medical centers within three years, not replacing physicians but serving as a first-pass filter that surfaces candidate diagnoses for specialist review. Whether that standard takes the form of DeepRare or a similar multi-agent architecture, the benchmark has shifted. A 5.6% historical review rate and a 57.18% AI Recall@1 cannot coexist in the same diagnostic workflow for long. Institutions treating rare diseases now have a quantitative argument, backed by Nature-published data and 95.4% expert agreement on reasoning quality, that diagnostic AI offers measurable improvement over unaided specialist judgment alone, per The Next Web.

References

- An agentic system for rare disease diagnosis with traceable reasoning — Primary Nature paper detailing DeepRare’s multi-agent architecture, evaluation across 6,401 clinical cases, and baseline comparisons.

- DeepRare AI helps shorten the rare disease diagnostic journey — News Medical coverage of benchmark results, dataset details, and genetic data integration performance.

- DeepRare AI outperforms doctors in rare disease diagnosis — Medical Xpress reporting on the physician head-to-head comparison and expert agreement evaluation.

- How an AI system beat experienced doctors at diagnosing rare diseases — The Next Web coverage of deployment statistics and the 40-tool architecture.

- Agentic AI system delivers high accuracy in rare disease diagnosis — CGTN reporting on platform launch timeline and planned global validation.