Part 7 of 7 in The Cost of AI series.

$19 per month. That’s the price point where two major AI coding tools converge — Amazon Q Developer Pro charges exactly that for a single seat (Superblocks). GitHub Copilot’s Individual tier is cheaper at $10 per month, while its Business tier matches Amazon Q at $19 per user (GitHub; Superblocks).

Cursor and Windsurf roughly double that to $40 (Exceeds.ai). Free tiers exist from both GitHub and Amazon. Every buyer’s guide published this quarter compares these subscription numbers. None calculates what the code costs after it ships.

When every tool lands between $10 and $40 per month, buyers stop comparing price and start comparing features — autocomplete quality, model selection, IDE integration. That is exactly when the costs not on the invoice start to compound.

Security researchers at Veracode published an answer absent from these comparisons in their latest GenAI security report. AI-generated code passes security checks just 55% of the time, a figure largely unchanged from two years ago (Veracode). Separately, researchers at DryRun Security found that the vast majority of AI coding agent pull requests contained at least one vulnerability (Help Net Security). DryRun’s headline finding deserves scrutiny, however. Their team tested fully autonomous coding agents generating entire pull requests without human oversight — not the autocomplete-style suggestions most small teams actually use. Veracode’s pass rate, measured across the broader spectrum of AI-assisted development, is the more representative baseline for typical buyers. It is also the more uncomfortable finding: the security problem persists even in the least aggressive AI workflows.

This comparison uses a different metric: True Seat Cost, meaning the subscription plus the developer hours consumed by security rework. At the industry’s current 55% security pass rate, and for any tool priced at $19 or less, the subscription represents less than 15% of what a small team actually pays per developer per month (Veracode).

The Rework Nobody Invoices

A five-developer team using any of these tools at default output levels generates roughly 50 AI-assisted pull requests per month. At Veracode’s stubbornly unchanged pass rate — still hovering where it sat two years ago — 45% of those PRs carry at least one vulnerability requiring human remediation (Veracode). For a team without dedicated AppSec staff — the reality for most small businesses — each failed PR requires a developer to identify the flaw, patch it, and re-submit.

Each input in the formula below is a deliberately conservative estimate, and readers should adjust them to match their own teams. Ten AI-assisted PRs per developer per month assumes modest adoption, not heavy usage. Thirty minutes per fix reflects a minimum for identifying a vulnerability, patching it, and verifying the remediation; experienced developers on familiar codebases may be faster, but junior developers or complex dependency chains push well beyond this. A $50-per-hour median rate aligns with small-business and agency billing norms.

10 AI PRs/dev × 0.45 failure rate × 0.5 hours × $50/hr = $112.50 per developer per month in security rework (Veracode).

For a five-person team: $6,750 per year — enough to buy every developer on the team a new laptop, spent instead on fixing code the AI got wrong.
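The arithmetic above can be reproduced in a few lines. This is a minimal sketch, assuming the article's default inputs; the function names `monthly_rework` and `annual_team_rework` are hypothetical and exist only for illustration.

```python
def monthly_rework(prs_per_dev=10, failure_rate=0.45, fix_hours=0.5, hourly_rate=50.0):
    """Estimated security-rework cost per developer per month, in dollars.

    Defaults mirror the article's stated assumptions; swap in your own.
    """
    return prs_per_dev * failure_rate * fix_hours * hourly_rate


def annual_team_rework(team_size, **inputs):
    """Scale the per-developer monthly figure to a whole team over 12 months."""
    return monthly_rework(**inputs) * team_size * 12


print(f"Per developer, per month: ${monthly_rework():,.2f}")
print(f"Five developers, per year: ${annual_team_rework(5):,.2f}")
```

Passing keyword overrides, for example `annual_team_rework(5, fix_hours=1.0)`, shows how quickly the total moves when a single input changes.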

That number appears on zero pricing pages, in zero buyer’s guides, and in zero vendor demos. Call it the Sticker Price Trap — the practice of ranking AI coding tools by subscription cost while the operating cost that dwarfs it stays invisible. GitHub Copilot Individual at $10 per month actually runs $122.50 per developer in True Seat Cost — subscription plus rework (GitHub). Subscription accounts for 8.2% of that monthly expense.

Every comparison published this quarter evaluates the 8.2%. So what happens when you rebuild the ranking around the other 91.8%?

Five Coding Tools, One Formula

True Seat Cost = monthly subscription + estimated security rework per developer.

Starting with the baseline — applying the industry-wide 45% failure rate uniformly across all tools:

| Tool | Monthly Seat | Built-in Security | Baseline True Seat Cost | Sub Share |
|---|---|---|---|---|
| GitHub Copilot Free | $0 | None | $112.50 | 0% |
| GitHub Copilot Individual | $10 | None | $122.50 | 8.2% |
| Amazon Q Developer Pro | $19 | IAM integration | $131.50 | 14.4% |
| GitHub Copilot Business | $19 | Content exclusion, policy controls | $131.50 | 14.4% |
| Cursor Pro / Windsurf | $40 | None | $152.50 | 26.2% |

Sources: GitHub, Superblocks, Exceeds.ai. Rework derived from Veracode’s 45% failure rate.
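The baseline table can be regenerated from the formula. The sketch below assumes the seat prices quoted in this article and the uniform $112.50 rework figure; `true_seat_cost` and `subscription_share` are illustrative names, not part of any vendor tooling.

```python
# Baseline monthly rework per developer: 10 PRs x 45% failure x 0.5 h x $50/h.
REWORK_PER_DEV = 10 * 0.45 * 0.5 * 50.0

# Monthly seat prices as quoted in the article (assumed current).
MONTHLY_SEAT = {
    "GitHub Copilot Free": 0.0,
    "GitHub Copilot Individual": 10.0,
    "Amazon Q Developer Pro": 19.0,
    "GitHub Copilot Business": 19.0,
    "Cursor Pro / Windsurf": 40.0,
}


def true_seat_cost(subscription, rework=REWORK_PER_DEV):
    """Subscription plus estimated security rework, per developer per month."""
    return subscription + rework


def subscription_share(subscription, rework=REWORK_PER_DEV):
    """Fraction of True Seat Cost that actually appears on the invoice."""
    total = true_seat_cost(subscription, rework)
    return subscription / total if total else 0.0


for tool, seat in MONTHLY_SEAT.items():
    print(f"{tool:<28} ${true_seat_cost(seat):>7.2f}  {subscription_share(seat):6.1%}")
```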

Read the Baseline True Seat Cost column. It spans $112.50 to $152.50, a $40 spread identical to the subscription range itself. Rework is a constant floor. Subscription choice shifts the total by at most about 36% ($40 on top of the $112.50 floor).

Going into this comparison, the expectation was that free tiers dominate on value. What emerges is the opposite. GitHub Copilot Free conceals 100% of its True Seat Cost: every dollar of operating expense is invisible to the buyer (GitHub). At $40, Cursor Pro makes 26.2% of total cost visible on the invoice. Paradoxically, the most expensive subscription is also the most transparent about what the tool costs to operate.

But that baseline table has a structural flaw: it treats every tool identically on security rework — the dimension this analysis argues matters most. GitHub Copilot Business includes content exclusion and organizational policy controls that can restrict code suggestions matching known-vulnerable patterns. Amazon Q Developer Pro integrates with AWS IAM for access governance, adding a layer of policy enforcement absent from free and individual tiers. No vendor publishes security-adjusted pass rates for these specific controls. Ignoring their existence, however, produces the same blind spot this article criticizes in subscription-only comparisons.

A conservative scenario illustrates how even modest security features reshape the ranking. Suppose Copilot Business’s policy controls cut the effective failure rate by a relative 15% (from 45% to 38.25%), and Amazon Q’s IAM integration (Superblocks) achieves a relative 10% reduction (to 40.5%):

| Tool | Monthly Seat | Adjusted Failure Rate | Adjusted Rework | Security-Adjusted True Seat Cost |
|---|---|---|---|---|
| GitHub Copilot Free | $0 | 45% | $112.50 | $112.50 |
| GitHub Copilot Business | $19 | 38.25% | $95.63 | $114.63 |
| Amazon Q Developer Pro | $19 | 40.5% | $101.25 | $120.25 |
| GitHub Copilot Individual | $10 | 45% | $112.50 | $122.50 |
| Cursor Pro / Windsurf | $40 | 45% | $112.50 | $152.50 |

Under this scenario, Copilot Business, despite costing $9 more per month in subscription than Copilot Individual (GitHub), becomes $7.87 cheaper in True Seat Cost. A tool that looks more expensive on the invoice becomes less expensive in operation. That inversion is invisible to every buyer’s guide comparing subscription prices alone.
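The security-adjusted scenario is easy to rerun with different reduction assumptions. The sketch below uses Python's `decimal` module so that cents round half-up, as in the table. The 15% and 10% relative reductions are this article's hypothetical scenario rather than vendor-published figures, and `security_adjusted_cost` is an illustrative name.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def security_adjusted_cost(subscription, relative_reduction="0",
                           base_failure="0.45", prs_per_dev="10",
                           fix_hours="0.5", hourly_rate="50"):
    """Return (failure_rate, rework, true_seat_cost) as Decimals.

    relative_reduction is a hypothetical fractional cut applied to the
    base failure rate, e.g. "0.15" turns 45% into 38.25%.
    """
    failure = Decimal(base_failure) * (1 - Decimal(relative_reduction))
    rework = Decimal(prs_per_dev) * failure * Decimal(fix_hours) * Decimal(hourly_rate)
    rework = rework.quantize(CENT, rounding=ROUND_HALF_UP)
    return failure, rework, Decimal(subscription) + rework


# (monthly seat, assumed relative reduction) per tool; reductions are illustrative.
SCENARIO = {
    "GitHub Copilot Free": ("0", "0"),
    "GitHub Copilot Business": ("19", "0.15"),
    "Amazon Q Developer Pro": ("19", "0.10"),
    "GitHub Copilot Individual": ("10", "0"),
    "Cursor Pro / Windsurf": ("40", "0"),
}

for tool, (seat, reduction) in SCENARIO.items():
    failure, rework, total = security_adjusted_cost(seat, reduction)
    print(f"{tool:<28} {failure:.2%}  rework ${rework}  total ${total}")
```

Setting both reductions to `"0"` recovers the baseline table, which makes it simple to test how large a reduction a paid tier needs before it beats a cheaper one.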

Even moderate skepticism about these adjustment estimates leaves the core finding intact: tools with security-aware features deserve a different rework assumption than tools without them, and any comparison that ignores this difference is comparing marketing materials, not costs.

Now run the formula in the other direction. At what security pass rate would subscription price start to matter, that is, become at least half of True Seat Cost? Solve for the failure rate where rework equals a $10 subscription: $10 = 10 PRs × failure_rate × 0.5 hrs × $50, so failure_rate = $10 ÷ $250 = 4%. That translates to a 96% security pass rate, roughly 1.75 times the current industry figure of 55% (Veracode). Until AI-generated code clears twenty-four out of twenty-five security checks, comparing subscription prices is comparing rounding errors. No tool on the market is close.
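The same break-even can be computed for any seat price. This is a small sketch under the article's default inputs; `break_even_failure_rate` is a hypothetical helper name.

```python
def break_even_failure_rate(subscription, prs_per_dev=10, fix_hours=0.5, hourly_rate=50.0):
    """Failure rate at which monthly rework equals the monthly subscription.

    Rearranges: subscription = prs_per_dev * failure_rate * fix_hours * hourly_rate.
    """
    return subscription / (prs_per_dev * fix_hours * hourly_rate)


for seat in (10, 19, 40):
    rate = break_even_failure_rate(seat)
    print(f"${seat}/month: failure rate {rate:.1%}, required pass rate {1 - rate:.1%}")
```

Higher-priced seats reach parity with rework at lower pass rates, but under these inputs every threshold still sits well above the 55% pass rate cited here.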

Augment Code sits outside both tables — custom enterprise pricing with SOC 2 Type II compliance (Exceeds.ai) for regulated small businesses where a single breach costs more than a decade of subscriptions. For those buyers, the metric is not True Seat Cost but liability exposure per unsecured tool.

Forty-Five Percent of the Gains Disappear

Nearly half of what AI coding tools give back in productivity, they take away in security cleanup. Here is the math behind that claim.

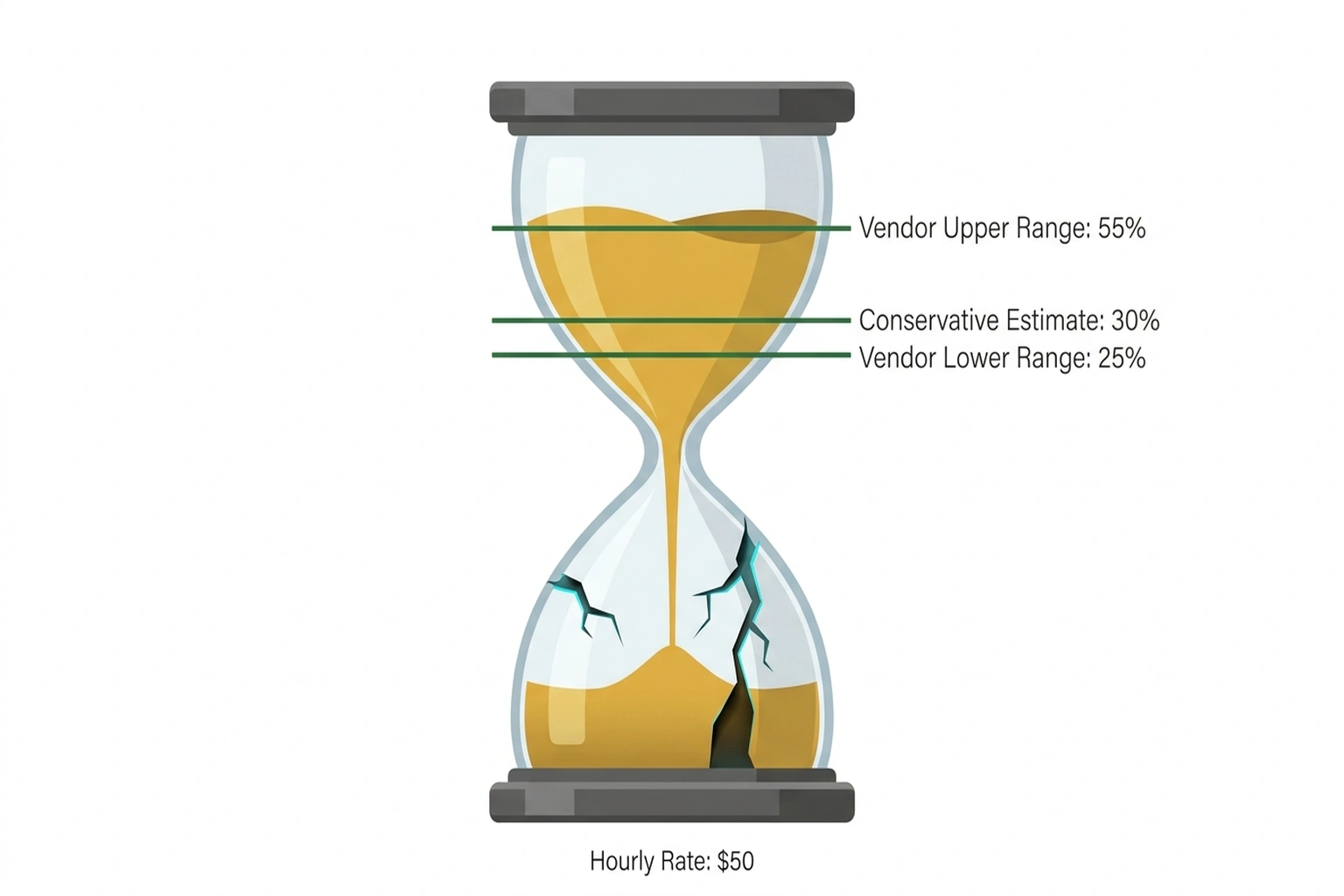

Vendor-reported productivity gains cluster between 25% and 55%, depending on the task and measurement method. Using 30%, near the lower bound of credible estimates, keeps this analysis conservative. A developer spending roughly 17 hours per month on code review and integration saves about five hours at that rate (17 × 0.30 ≈ 5). At $50 per hour, that is $250 per month in reclaimed capacity.

Same developer, same tools, same month: $112.50 in rework from Veracode’s 45% failure rate (Veracode).

$112.50 ÷ $250.00 = 45% of productivity gains consumed by security rework.

Neither Veracode nor any vendor performs this convergence calculation. Productivity data measures speed. Security data measures quality. Each appears in separate reports, for separate audiences. Combined, they reveal something the individual numbers conceal: nearly half the capacity the tools reclaim gets spent on fixing the tools’ own output.

For a five-developer team claiming 30% productivity improvement, net gain after security rework drops to approximately 16.5%. Not 30%. Both the tool and the gain are real. But 45 cents of every dollar of reclaimed time goes back into remediation.

Before this calculation, the reasonable assumption was that these coding assistants deliver net positive value at any price point, and the debate was merely how much. After it, the question shifts: at what failure rate does rework erase the gains entirely?

Back-calculate: under these inputs, rework fully offsets productivity savings only at a 100% failure rate, where 10 PRs × 0.5 hours × $50 equals the $250 in reclaimed capacity. DryRun Security’s researchers measured 87% vulnerability rates for fully autonomous AI coding agent pull requests (Help Net Security). For teams running agentic workflows rather than autocomplete-style suggestions, the value proposition approaches break-even. And as AI-generated code volume scales up, the 45% failure rate scales with it: more output means proportionally more rework, not proportionally more delivery.
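The productivity-versus-rework comparison in this section can be checked with a short sketch. It assumes the article's rounded figure of five hours saved per month; the function names are hypothetical.

```python
def rework_share_of_gains(rework=112.50, hours_saved=5.0, hourly_rate=50.0):
    """Fraction of reclaimed capacity (in dollars) consumed by security rework."""
    return rework / (hours_saved * hourly_rate)


def net_productivity_gain(claimed_gain=0.30, share=None):
    """Claimed gain scaled down by the share lost to rework."""
    if share is None:
        share = rework_share_of_gains()
    return claimed_gain * (1 - share)


def failure_rate_erasing_gains(hours_saved=5.0, hourly_rate=50.0,
                               prs_per_dev=10, fix_hours=0.5):
    """Failure rate at which rework equals the dollar value of time saved."""
    gains = hours_saved * hourly_rate
    return gains / (prs_per_dev * fix_hours * hourly_rate)


print(f"Share of gains consumed: {rework_share_of_gains():.0%}")
print(f"Net productivity gain:   {net_productivity_gain():.1%}")
print(f"Gains erased at failure rate: {failure_rate_erasing_gains():.0%}")

# Agentic scenario: the 87% vulnerability rate reported for autonomous agents.
agentic_rework = 10 * 0.87 * 0.5 * 50.0
print(f"Agentic share consumed:  {rework_share_of_gains(rework=agentic_rework):.0%}")
```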

The Counterargument That Sharpens the Finding

Human-written code has always failed security checks at comparable rates, making rework a baseline cost rather than an AI-specific one. This is the sharpest objection — and it is half right.

If AI-generated code fails at the same rate as human-written code, then these tools have not made the problem worse. But they have dramatically increased the volume of code subject to that failure rate. A tool that helps a developer produce 30% more code at a 45% security failure rate generates 30% more vulnerabilities requiring remediation. Productivity gains and security debt scale together, locked in proportion. Academic research on code hallucination — where AI generates syntactically valid but functionally incorrect or insecure code patterns — suggests this coupling is structural rather than a tuning problem the next model update resolves (arXiv:2511.00776).

Every vendor productivity claim measures gains without subtracting the rework those gains generate. After a full quarter of buyer’s guides and comparison articles, the persistence of that omission is itself the finding.

Your Number

Adjust the inputs, keep the structure. Sensitivity matters — the formula is only as reliable as the numbers fed into it:

True Seat Cost = Subscription + (AI PRs per dev × Failure Rate × Fix Hours × Hourly Rate)

With this analysis’s conservative defaults — 10 AI PRs per developer per month, 45% failure rate, 0.5 hours per fix, $50/hr — here is what each scenario costs annually:

| Team Size | Annual Subscriptions ($10/seat) | Annual Rework | Total True Cost | Rework Share |
|---|---|---|---|---|
| Solo dev | $120 | $1,350 | $1,470 | 91.8% |
| 3 devs | $360 | $4,050 | $4,410 | 91.8% |
| 5 devs | $600 | $6,750 | $7,350 | 91.8% |
| 10 devs | $1,200 | $13,500 | $14,700 | 91.8% |

Rework share stays constant at every team size because both subscription and rework scale linearly per seat. No volume discount on security debt. A ten-person team pays ten times the rework of a solo developer — $13,500 per year, the cost of a junior contractor for three months, spent invisibly on undoing what the AI introduced. (Help Net Security)

How sensitive is this result? Halve the fix time to 15 minutes, and rework drops to $56.25 per developer, still 5.6× the cheapest paid subscription, the $10 Copilot Individual tier (GitHub). Double the PR count to 20, and rework hits $225 per developer, absorbing 90% of productivity gains at a 30% improvement rate. Rework dominates subscription cost across every reasonable input scenario.
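The sensitivity check can be extended to any combination of inputs. This sketch reruns the two variations discussed above alongside the defaults; the scenario labels and constant names are illustrative assumptions.

```python
def monthly_rework(prs_per_dev=10, failure_rate=0.45, fix_hours=0.5, hourly_rate=50.0):
    """Estimated security-rework cost per developer per month, in dollars."""
    return prs_per_dev * failure_rate * fix_hours * hourly_rate


CHEAPEST_PAID_SEAT = 10.0   # Copilot Individual, per the article
PRODUCTIVITY_GAIN = 250.0   # 5 hours saved x $50/h, per the article

SCENARIOS = {
    "defaults": {},
    "fix time halved (15 min)": {"fix_hours": 0.25},
    "PR count doubled (20)": {"prs_per_dev": 20},
}

for label, overrides in SCENARIOS.items():
    rework = monthly_rework(**overrides)
    print(
        f"{label:<26} rework ${rework:>7.2f}  "
        f"{rework / CHEAPEST_PAID_SEAT:5.1f}x cheapest paid seat  "
        f"{rework / PRODUCTIVITY_GAIN:4.0%} of gains"
    )
```

Adding entries to `SCENARIOS`, such as a different `hourly_rate` or `failure_rate`, is the quickest way to find the point at which rework stops dominating for a given team.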

What to do with this number depends on where you sit:

Solo developers and two-person teams: True Seat Cost is dominated by rework regardless of tier. Start with a free plan, but only if you already run a security scanner in your CI pipeline: Snyk, Semgrep, or GitHub’s own code scanning (free for public repositories) can catch vulnerabilities before they reach production. Without automated scanning, the $19 Copilot Business tier costs $9 more per month than Individual but adds content exclusion and policy controls that may reduce rework. As the security-adjusted table shows, that $9 premium potentially saves $16.87 in rework under the scenario’s assumed 15% reduction, a net $7.87 per developer per month. The Individual tier lacks those controls entirely.

Three to five developers: At this band, shadow AI adoption compounds the problem — if even one developer uses an unmanaged free tier alongside the team’s paid tool, rework costs apply to both code streams with no visibility into which tool generated the vulnerability. Standardize on a single managed tier. Configure your CI pipeline to run automated SAST scans on every pull request — the goal is catching AI-generated vulnerabilities before human reviewers spend 30 minutes on manual remediation. Present the $6,750 annual rework bill to whoever approves software purchases, not the $600 subscription.

Five to ten developers: At this scale, the gap between the cheapest and most expensive subscription ($0 vs. $40 per seat) is $4,800 per year across ten seats. Investing in pipeline-level security scanning — automated SAST tools that flag vulnerabilities at PR time — addresses up to $13,500 in potential rework. That security investment outweighs the subscription decision by nearly 3:1. Choose the tool whose policy controls fit your workflow; subscription price is the third or fourth criterion, not the first.

$19 Is Not the Price

$19 opened this comparison as a price point — the sticker on the box, the number two major vendors make easy to find. It reappeared as a visible fraction — 14.4% of what a developer actually costs to equip. By now it should register as neither.

$19 is the distraction.

Security pass rates for AI-generated code have not moved in two years. Veracode’s researchers measured roughly 55% when the first wave of AI coding assistants hit the market, and they measure the same now (Veracode). Until that number breaks 96% — the threshold where subscription price begins to rival rework cost — the coding assistant comparison that matters is not which subscription to buy but which workflow produces the least remediation.

One prediction to close on: by Q4 2026, at least one major vendor will bundle real-time security scanning directly into the AI coding subscription — collapsing the rework line into the subscription line and, for the first time, making the sticker price approximate the true cost. That vendor will not be the cheapest. It will be the one that understood the math this article just ran.

Until then, run your own number. The formula is above. The inputs are yours. The $6,750 is already being spent — the only question is whether it shows up in a budget line or disappears into developer hours nobody tracks.


References

- GitHub Pricing: https://github.com/pricing

- Superblocks: Amazon Q Developer Pricing: https://www.superblocks.com/blog/amazon-qdeveloper-pricing

- Exceeds.ai: Best Enterprise AI Coding Assistants: https://blog.exceeds.ai/best-enterprise-ai-coding-assistants/

- Veracode: Spring 2026 GenAI Code Security: https://www.veracode.com/blog/spring-2026-genai-code-security/

- Help Net Security: AI Coding Agent Security: https://www.helpnetsecurity.com/2026/03/13/claude-code-openai-codex-google-gemini-ai-coding-agent-security/

- Decoded AI Tech: AI Coding Assistant Security: Codex vs Claude Code: https://decodedaitech.com/ai-coding-assistant-security-codex-vs-claude-code/

- Decoded AI Tech: Shadow AI Costs: https://decodedaitech.com/shadow-ai-costs-21k-per-app-the-31-ratio-nobody-tracks/

- Decoded AI Tech: Vibe Coding Shadow IT: https://decodedaitech.com/vibe-coding-is-now-the-biggest-shadow-it-problem/

- arXiv:2511.00776: https://arxiv.org/abs/2511.00776