Roger Gose directs technology for a school district in Garfield County, Colorado. SB 205 was the nation’s first comprehensive AI accountability law. When it passed, Gose faced a binary choice: fund annual bias audits his district couldn’t afford, or stop using AI in student decisions entirely. More than fifty civil society organizations endorsed keeping those requirements. In March 2026, the governor’s AI Policy Working Group voted unanimously to eliminate them.

Gone: impact assessments, risk management policies, algorithmic discrimination reporting to the Attorney General (Mayer Brown). Replaced by: transparency notices and a 30-day adverse-outcome letter, effective January 1, 2027 (Bloomberg Law). Every requirement that generated evidence before a decision was made — removed. Every requirement that generates paperwork after — kept. Gose, who represented the Colorado Association of School Executives on the working group, called the result “great benefits for the citizens of Colorado without bringing in unfunded mandates” (Colorado Politics).

Rep. Brianna Titone, who championed the original bill, read it differently: “While the voting members did agree, there were many caveats to their ‘yes’ votes” (KUNC). When school districts and tech lobbyists and disability advocates all celebrate the same framework, the question isn’t what they agreed on — it’s what the math behind “unanimous” actually reveals.

Six Domains, Zero Budget Lines

SB 24-205 did not regulate chatbots or art generators. It regulated any AI system that drove a “consequential decision” across six domains: employment, education, housing, credit, insurance, and healthcare (Colorado General Assembly). For each, deployers owed six simultaneous obligations: risk management policies, impact assessments, annual discrimination reviews, 90-day AG disclosures, human-review appeals, and public governance statements (Colorado General Assembly). Each obligation required legal, technical, and procedural infrastructure, and the compliance burden fell entirely on the deployer, not the AI vendor.

More than 50 organizations endorsed those requirements — the Center for Democracy and Technology, the ACLU of Colorado, the Colorado Cross-Disability Coalition among them. “Consumers want this,” Rep. Titone told the legislature. “Poll after poll after poll” (GovTech). But polling measures demand. It does not measure willingness to pay.

CDT and allied organizations argued that impact assessments and annual reviews were essential minimum safeguards for consequential AI decisions (GovTech). But this framing overlooks DigitalApplied’s cost evidence: when an estimated 75% of deployers cannot fund the “minimum,” the safeguard protects only the consumers whose decisions are made by well-resourced incumbents and leaves the rest with no regime at all. Consumer demand for algorithmic accountability did not come with a budget line.

Who the $50,000 Audit Actually Protected

A bias audit for a single resume screener costs $5,000 to $12,000. A multi-model hiring platform: $25,000 to $50,000. Annual re-audits run 40–60% of the original fee, and the compliance obligation does not transfer to the software vendor: the deployer bears full legal responsibility whether the AI was built in-house or purchased off the shelf (DigitalApplied).

$5,000 per simple tool × 3 covered tools (hiring, student assessment, attendance flagging) = $15,000 in first-year AI bias audit costs for a district like Gose’s, assuming the cheapest possible tools. Annual re-audits at 50% add $7,500 per year indefinitely. At the complex end: $50,000 × 3 = $150,000 in Year 1. That range, $15,000 to $150,000, does not appear in any of the working group’s public materials.

Over five years, the bill comes due in full. At the low end: $15,000 Year 1 + ($7,500 × 4 re-audit years) = $45,000. At the complex end: $150,000 + ($75,000 × 4) = $450,000. Under the replacement framework — a transparency notice template with an estimated ~$500 legal review (based on standard small-business compliance consultation rates), refreshed annually — the same five-year window costs roughly $2,500. The ratio: 18:1 to 180:1, old regime versus new, for the same district making the same decisions about the same students.
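The five-year figures can be reproduced with a short sketch. It assumes, as the paragraph above does, a re-audit rate of 50% of the first-year fee and a ~$500 notice review repeated every year; the function name `five_year_cost` is illustrative and not drawn from any cited source.

```python
def five_year_cost(first_year: float, recurring_rate: float, years: int = 5) -> float:
    """First-year cost plus (years - 1) recurring payments.

    recurring_rate is the recurring payment as a fraction of the first-year
    cost (0.5 for a 50% re-audit, 1.0 for a review repeated in full).
    """
    return first_year + first_year * recurring_rate * (years - 1)


# Audit regime, low end: three simple tools at $5,000 each (article's assumption).
audit_low = five_year_cost(3 * 5_000, recurring_rate=0.5)

# Audit regime, complex end: three platforms at $50,000 each.
audit_high = five_year_cost(3 * 50_000, recurring_rate=0.5)

# Replacement framework: ~$500 legal review, refreshed annually (an estimate).
notice = five_year_cost(500, recurring_rate=1.0)

ratio_low = audit_low / notice
ratio_high = audit_high / notice

print(f"Audit, low end:  ${audit_low:,.0f}")
print(f"Audit, high end: ${audit_high:,.0f}")
print(f"Notice regime:   ${notice:,.0f}")
print(f"Cost ratio:      {ratio_low:.0f}:1 to {ratio_high:.0f}:1")
```

Changing the re-audit rate to 0.4 or 0.6, the ends of DigitalApplied’s reported range, shifts the totals but leaves the order-of-magnitude gap intact.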

What DigitalApplied’s cost data and the working group’s unanimous vote reveal is what this analysis terms the Audit Cliff: the compliance-cost threshold above which an accountability law stops reaching the entities most likely to deploy AI without oversight and starts certifying the ones that already have compliance departments.

But the Cliff doesn’t just exclude small deployers. It actively advantages large ones. A hospital system or enterprise HR vendor that can absorb a $50,000 annual audit earns a compliance certification its smaller competitors cannot match, not because its AI is less biased, but because its budget is larger. The audit mandate functioned as an incumbency subsidy: a moat built from paperwork rather than product quality. The organizations it certified were the ones that least needed external policing. The ones it priced out (school districts, community lenders, small landlords) were the deployers whose AI decisions affected populations with the fewest alternative options.

Deployers below the Cliff didn’t enter a lighter regime. They entered no regime at all. And the number of people affected by that gap is not abstract.

If roughly 3,750 of an estimated 5,000 deployers in a mid-size state were priced out of compliance, and each makes consequential AI decisions affecting even 200 individuals per year, that’s 750,000 people annually whose AI-driven denials, screenings, and risk scores operated under zero accountability framework. Not a weaker framework. None. The framework that replaced SB 205 was supposed to bring them back in, but the enforcement mechanism it chose may have created a different gap entirely.

Ninety Days to Fix What Nobody Tests

Here is where the consensus stops looking like a compromise and starts looking like a structural concession — because the replacement framework didn’t just lower the accountability floor. It removed the only mechanism that generated evidence before a decision harmed someone, and replaced it with a process that activates only after the harm is done.

Under the replacement framework, no private citizen can sue an AI deployer for algorithmic discrimination: not the applicant filtered by a biased resume screener, not the tenant rejected by a model that proxies for race. Enforcement belongs exclusively to the Attorney General, who must provide written notice of an alleged violation and then grant 90 calendar days for a cure before pursuing civil penalties (Mayer Brown).

“The devil’s in the details… I want the law to have teeth,” said Sen. Robert Rodriguez, Senate Majority Leader and the original law’s lead sponsor. The Colorado Technology Association, which represents the deployers Rodriguez wants regulated, praised the identical provisions. Its president, Brittany Morris Saunders: “We look forward to seeing this progress reflected in the forthcoming legislation and to continuing the dialogue on how to protect consumers while enabling innovation to thrive” (KUNC).

Rodriguez wants teeth. The enforcement chain has none.

Combine Mayer Brown’s enforcement analysis with the absence of any audit requirement, and a conclusion surfaces that neither source draws. The Attorney General must detect discrimination that no independent test has measured, then notify a deployer whose sole obligation is a transparency notice, then wait three months while the deployer “cures” a problem never independently verified. When the trade association for the regulated industry celebrates a regulation, the reasonable question is not whether the framework is balanced. It’s how much protection transferred to a notice that consumers read after the decision is already made.

This is the arithmetic that the unanimous vote concealed. SB 205 offered deep accountability that reached 25% of deployers, leaving 750,000 people in a shadow zone with no protection at all. The replacement offers shallow accountability that reaches 90% but tests nothing. The old regime audited the compliant and ignored everyone else. The new regime documents everyone and verifies no one. Neither side in Denver computed which failure does more harm.

Three Frameworks, One Structural Pattern

The Audit Cliff is not a Colorado problem. It is a structural feature of any jurisdiction where compliance costs scale faster than the budgets of the deployers the law targets, and the evidence is already accumulating across three governance levels.

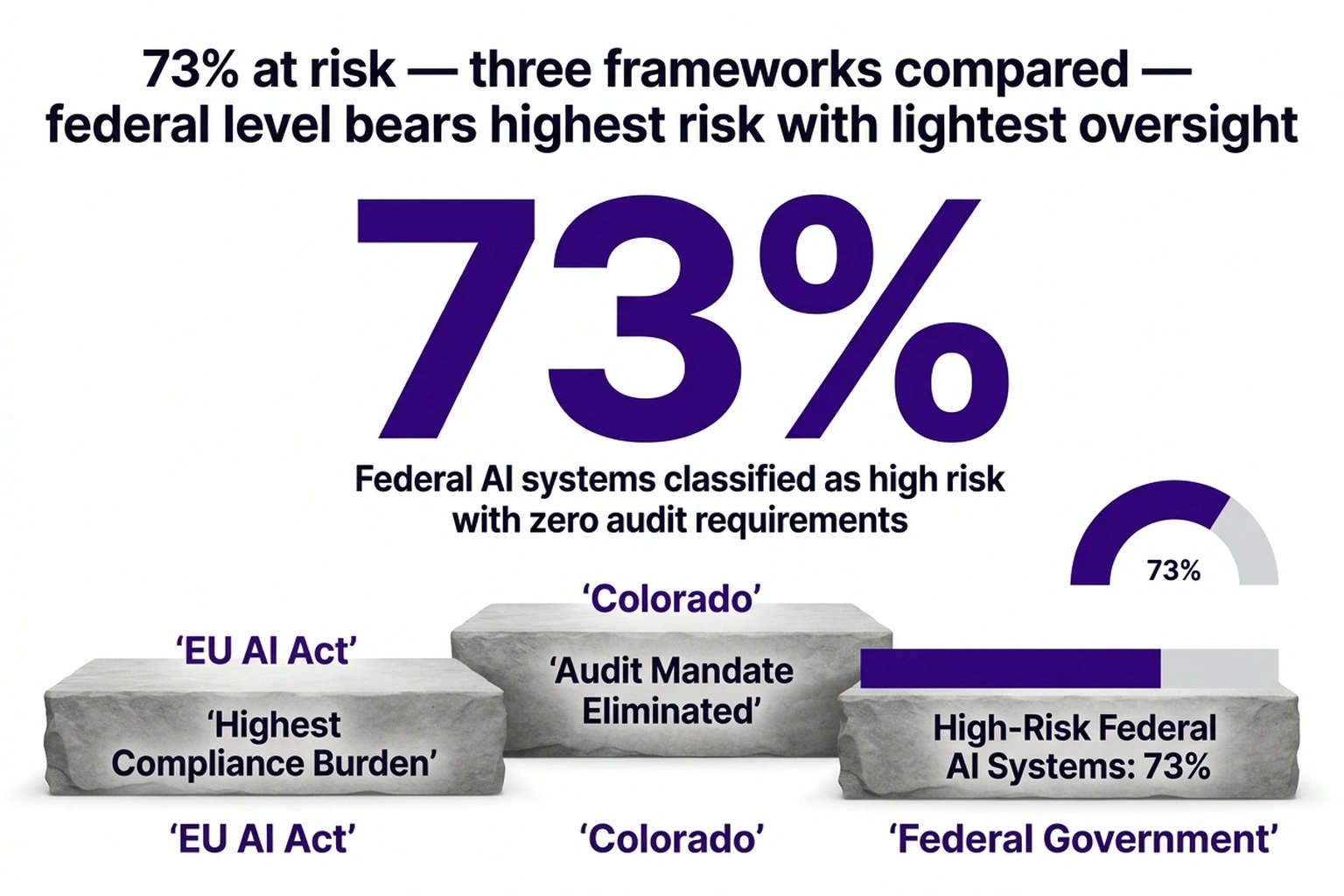

Across the Atlantic, the EU AI Act mandated conformity assessments (technical audits) as a condition of deploying high-risk AI (EU AI Act). Industry estimates place conformity assessment costs at EUR 10,000–EUR 300,000 depending on system complexity, comparable to or higher than Colorado’s audit range, applied to a market where small and medium enterprises account for the majority of AI deployers. The EU chose testing over reach. Colorado chose reach over testing. Neither has published the number that would settle the debate: the Compliance Reach Ratio (CRR) of its own framework, meaning the share of deployers who can afford to comply.

At the federal level, 73% of AI systems deployed by government agencies posed high risk, and a four-page executive framework imposed no audit requirement on any of them, producing a federal CRR of 1.00 with zero accountability depth: every deployer “reached,” nothing tested, nothing enforced.

At the state level, the pattern is already replicating. Illinois moved first with its AI Video Interview Act — narrow in scope, applying only to video-based hiring assessments (JD Supra). At least 17 states introduced AI governance legislation in the 2025–2026 cycle (Brightmine). None of the transparency-only proposals include independent verification that the AI system treats protected groups equitably. Colorado employers using AI in hiring must now notify applicants and provide rejected candidates with the factors the algorithm considered (Bloomberg Law). Saunders praised the framework that imposed these and no greater obligations (KUNC).

Three governance levels, three frameworks, zero consistent accountability floor, and a predictable second-order effect. Without audit demand, the market for independent AI auditors contracts and the technical expertise to conduct bias assessments consolidates among a handful of firms. When a future legislature tries to reinstate testing requirements, the auditor shortage risks becoming the industry’s argument against them, entrenching transparency-only as the permanent ceiling for AI accountability.

Recognizing the pattern requires a metric neither side in Denver computed, the Compliance Reach Ratio (CRR):

CRR = Deployers who can afford compliance ÷ Total deployers making consequential AI decisions

Under SB 205’s AI bias audit mandate, at a midrange annual cost of ~$30,000 (derived from DigitalApplied’s $5,000–$50,000 range): CRR ≈ 0.25. Under the replacement framework, where compliance requires a transparency notice template (~$500 legal review) plus a form-letter process covering the 30-day consumer challenge window: CRR ≈ 0.90. Under the EU AI Act’s conformity assessments: CRR is unknown but structurally likely to mirror Colorado’s original audit ratio (high cost, deep accountability, narrow reach). Under the federal executive framework: CRR = 1.00, with zero accountability depth.

Neither number is sufficient on its own.

Audit model: 25% of deployers reached × full accountability depth. Transparency model: 90% of deployers reached × minimal accountability depth. But the 75% of deployers in the audit model’s gap (school districts, small landlords, community credit unions) operate under zero accountability. A transparency mandate covering 90% may prevent more aggregate harm than an audit mandate certifying 25%.

Cost of inaction for a state copying SB 205’s original audit mandate: $30,000 average annual cost × an estimated 3,750 deployers priced out of compliance (75% of an estimated 5,000 AI deployers in a mid-size state, roughly the number of public K-12 schools in Colorado) = $112.5 million per year in mandates the target population cannot fund. Multiply by the 17 states considering AI governance legislation, and the aggregate unfunded mandate exposure approaches an estimated $1.9 billion annually, a compliance cliff that no state budget office has published and no working group has calculated. The law exists on paper. The accountability does not.
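The exposure estimate is simple multiplication, sketched below so each input can be swapped out. Every value is one of this analysis’s own estimates (the deployer count, the 75% priced-out share, the ~$30,000 midrange cost, the 17-state count), not measured data, and the variable names are illustrative.

```python
# All inputs are estimates used in this analysis, not measured figures.
ANNUAL_AUDIT_COST = 30_000    # midrange annual compliance cost, USD
DEPLOYERS_PER_STATE = 5_000   # estimated deployers in a mid-size state
PRICED_OUT_SHARE = 0.75       # 1 - CRR under the audit mandate
STATES_CONSIDERING = 17       # states with AI governance bills (Brightmine)

priced_out_deployers = round(DEPLOYERS_PER_STATE * PRICED_OUT_SHARE)
per_state_exposure = ANNUAL_AUDIT_COST * priced_out_deployers
aggregate_exposure = per_state_exposure * STATES_CONSIDERING

print(f"Deployers priced out per state: {priced_out_deployers:,}")
print(f"Unfunded mandate per state:     ${per_state_exposure:,}")
print(f"Across {STATES_CONSIDERING} states:               ${aggregate_exposure:,}")
```

The aggregate treats all 17 states as mid-size and as adopting the original audit mandate unchanged, so it is best read as an upper-bound illustration rather than a forecast.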

For policy staff evaluating any AI accountability framework:

1. Estimate deployer count per covered domain

2. Calculate: annual_compliance_cost ÷ median_deployer_revenue

3. If ratio exceeds 0.05 → mandate won't reach the median deployer

4. Calculate CRR for the proposed framework

5. If CRR < 0.30 → law protects incumbents, not consumers

6. Estimate affected individuals: (1 − CRR) × deployer_count × avg_decisions_per_deployer

→ This is the population with zero accountability coverage
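The checklist above can be sketched as a small function. The 0.05 and 0.30 cutoffs come straight from the checklist and have not been empirically validated; the class and field names are illustrative, and the $400,000 median deployer revenue in the example is a hypothetical placeholder, not a sourced figure.

```python
from dataclasses import dataclass


@dataclass
class FrameworkEstimate:
    deployer_count: int               # step 1: deployers in covered domains
    deployers_able_to_comply: int     # deployers who can afford compliance
    annual_compliance_cost: float     # USD per deployer per year
    median_deployer_revenue: float    # USD per year
    avg_decisions_per_deployer: float


def evaluate(est: FrameworkEstimate) -> dict:
    # Steps 2-3: cost burden relative to the median deployer's revenue.
    cost_ratio = est.annual_compliance_cost / est.median_deployer_revenue
    # Steps 4-5: Compliance Reach Ratio.
    crr = est.deployers_able_to_comply / est.deployer_count
    # Step 6: individuals whose decisions fall outside any accountability regime.
    uncovered = (1 - crr) * est.deployer_count * est.avg_decisions_per_deployer
    return {
        "cost_ratio": cost_ratio,
        "reaches_median_deployer": cost_ratio <= 0.05,
        "crr": crr,
        "protects_incumbents": crr < 0.30,
        "uncovered_individuals": round(uncovered),
    }


# Audit-mandate scenario using this analysis's estimates; the median
# revenue value is a hypothetical placeholder chosen for illustration.
audit_regime = FrameworkEstimate(
    deployer_count=5_000,
    deployers_able_to_comply=1_250,
    annual_compliance_cost=30_000,
    median_deployer_revenue=400_000,
    avg_decisions_per_deployer=200,
)
result = evaluate(audit_regime)
print(result)
```

Running the same function with the replacement framework’s inputs (roughly $500 per year and 4,500 of 5,000 deployers able to comply) gives a direct side-by-side of reach, though not of accountability depth, which this sketch does not attempt to quantify.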

For state legislators: run the CRR calculation before drafting. For deployers below the Cliff: build the transparency template now, not after January 2027. For advocacy organizations: the question is not whether audits are better than notices. It’s whether 25% coverage at audit depth outperforms 90% coverage at notice depth, measured not in framework rigor but in people reached.

Documented Discrimination vs. Unrecorded Harm

The strongest case for keeping audit mandates: transparency notices test nothing. A landlord who posts “AI assisted this decision” has complied with the new framework. Whether the algorithm rejected disabled applicants at twice the baseline rate remains unmeasured. A bias audit would have produced that evidence. The EU AI Act took exactly this position, mandating conformity assessments for high-risk systems rather than relying on deployer disclosures (EU AI Act). The more than 50 organizations that endorsed SB 205’s original requirements (GovTech) backed audits for the same reason: notices document, audits test.

Titone warned the working group’s unanimous vote came with caveats. Rodriguez pushed for mechanisms that generate evidence, not paper. A reader who stopped here would reasonably conclude that eliminating audits was a corporate concession dressed in bipartisan consensus.

But the law’s actual reach, not its theoretical rigor, determines how many decisions it covers. An audit mandate with a CRR of 0.25 generates evidence about the decisions of one-quarter of deployers and generates nothing, not even a transparency notice, for the remaining three-quarters. A transparency mandate at 0.90 generates no testing evidence, but it covers the deployers an audit regime would have priced out entirely. The 750,000 people in the audit model’s shadow zone don’t get weaker protection under that model. They get none.

No published study has compared discriminatory outcome rates under audit-only versus transparency-only AI governance frameworks — the comparative data cannot exist because Colorado’s replacement takes effect January 2027 and no other jurisdiction has tried both approaches. The absence of that evidence after a 50-organization endorsement, a unanimous working group vote, and a governor’s signature means Colorado resolved the most consequential AI policy trade-off in American law by intuition.

If the Audit Cliff arithmetic holds, multiple state legislatures may adopt transparency-only AI frameworks modeled on Colorado’s replacement by Q2 2027, because audit mandates are likely to remain politically infeasible for any state with a significant small-business constituency. This analysis relies on DigitalApplied’s cost estimates, which reflect 2025–2026 market rates; actual audit prices vary by vendor and system complexity, and the CRR threshold of 0.30 has not been empirically validated.

Roger Gose will likely build his transparency notice template well before January 2027: a single legal review, not a $50,000 annual audit, should put his district in compliance. But compliance and accountability are not the same thing. The five-year cost dropped from $45,000 to $2,500. The number of students whose AI-driven decisions are independently tested for bias dropped from whatever an audit would have covered to zero. By Q4 2026, additional state legislatures are likely to face the same arithmetic Colorado just solved: an audit that reaches the deployers who least need policing, or a notice that reaches everyone but tests nothing. The number neither side in Denver calculated (how many discrimination cases a transparency notice catches versus how many an audit prevents) will determine whether Colorado wrote a consumer protection template or a permission slip. Titone’s “many caveats” may have numbers by then, but the deployers, legislators, and advocates watching Colorado don’t have to wait for them.

What to Do Before January 2027

If you deploy AI in consequential decisions:

- Inventory every AI system touching the six covered domains — employment, education, housing, credit, insurance, healthcare

- Draft your transparency notice template now; an estimated ~$500 legal review covers the requirement, but only if the notice accurately describes the AI’s role in the decision

- Build your 30-day adverse-outcome letter process — the clock starts when a consumer receives an AI-influenced denial, not when your legal team reviews it

- Document the decision factors your AI considers; under the replacement framework, rejected candidates must receive this information (Bloomberg Law)

If you draft AI governance legislation:

- Calculate the CRR for every proposed compliance requirement before markup

- If the ratio falls below 0.30, the law is likely to function as an incumbency subsidy; redesign the threshold or tier the requirements by deployer revenue

- Consider a hybrid model: transparency floor for all deployers, audit mandate above a revenue or deployment-scale threshold

- Estimate the affected population: (1 − CRR) × deployer count × average decisions per deployer, and publish that number in the fiscal impact statement
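The hybrid model in the list above can be illustrated with a minimal sketch: every deployer owes the transparency floor, and only those above a revenue threshold owe an audit. The $10 million threshold and the function name are hypothetical choices for illustration; no Colorado proposal cited here sets such a figure.

```python
# Hypothetical revenue threshold above which an audit applies (illustration only).
AUDIT_REVENUE_THRESHOLD = 10_000_000


def obligations(annual_revenue: float,
                audit_threshold: float = AUDIT_REVENUE_THRESHOLD) -> list:
    """Return the compliance duties a deployer owes under a tiered framework."""
    # Transparency floor: applies to every deployer regardless of size.
    duties = ["transparency notice", "30-day adverse-outcome letter"]
    # Audit tier: applies only at or above the revenue threshold.
    if annual_revenue >= audit_threshold:
        duties.append("independent bias audit")
    return duties


small_district = obligations(2_000_000)
large_hospital = obligations(250_000_000)
print(small_district)
print(large_hospital)
```

A tier keyed to deployment scale (decisions per year) rather than revenue would follow the same shape; which trigger tracks risk better is an open design question.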

If you advocate for algorithmic accountability:

- Track compliance rates under Colorado’s new framework starting January 2027; the gap between deployers who post transparency notices and deployers who use AI without disclosure is the empirical measure of reach

- Collect consumer complaints to build the enforcement record the Attorney General will need; without a private right of action, AG enforcement depends on external evidence

- Push for a mandatory CRR review provision — any framework should require periodic measurement of how many deployers it actually reaches

References

- Colorado AI Policy Working Group Agrees on Framework — Colorado Politics reporting on the March 17, 2026 unanimous working group vote.

- Mayer Brown: Updated Framework to Replace the Colorado AI Act — Legal analysis of enforcement mechanisms, 90-day cure period, and no private right of action.

- KUNC: AI Policy Group Makes Recommendations — Direct quotes from Rodriguez, Titone, and Saunders on framework provisions.

- Bloomberg Law: Colorado AI Law to Target Transparency — Employer requirements, scope expansion, and January 2027 effective date.

- DigitalApplied: AI Compliance Small Business Guide — Bias audit cost tiers ($5,000–$50,000) and vendor liability analysis.

- GovTech: Colorado Passes Bill Amending AI Legislation — Coalition of 50+ organizations supporting original SB 205 requirements.

- Colorado SB 24-205 — Original bill text: deployer requirements, definitions, enforcement authority.

- EU AI Act Article 6: Classification Rules for High-Risk AI Systems — EU approach to conformity assessments for high-risk AI deployments.

- JD Supra: Colorado Moves to Replace AI Law — Cross-jurisdictional context including Illinois AI Video Interview Act.

- Brightmine: States with AI Laws Tracker — Tracking of 17+ state AI governance proposals in the 2025–2026 legislative cycle.