
Part 1 of 6 in the AI Agent Crisis series.

Between July and December 2025, identity compromise initiated 83% of all cloud intrusions that Google’s Threat Intelligence team observed. That finding, from the H1 2026 Cloud Threat Horizons Report released in early March 2026, reframes the entire conversation about AI agent identity security. Eight days later, researchers from five universities published “Agents of Chaos”, demonstrating that AI agents replicate these identity failures at machine speed and without adversarial input.

Securing these autonomous identities sits at the intersection of two converging failures. Cloud environments already cannot contain identity-based attacks from human actors. Autonomous agents that generate, chain, and delegate credentials amplify every weakness in the existing model. Both reports, from independent research teams examining different dimensions of the same problem, converge on one conclusion: identity is the weakest link, and agents are making it weaker.

How Cloud Identity Compromise Extends to Agent Deployments

Google’s Cloud Threat Horizons data draws from intrusion investigations conducted globally during the second half of 2025. Among all observed cloud compromises, identity-based vectors (stolen credentials, hijacked tokens, and misconfigured service accounts) accounted for 83% of initial access. In these cases, attackers did not need zero-day vulnerabilities or novel infrastructure weaknesses. They logged in with valid credentials that had been stolen, phished, or found exposed.

One case from the report makes the mechanism concrete. In the UNC4899 campaign, North Korean state-sponsored actors compromised a developer’s laptop, then pivoted into a Kubernetes environment by harvesting CI/CD service account tokens. These tokens served as non-human identities carrying cloud-wide privilege, machine credentials that existed for automation, not human access. A single compromised endpoint yielded lateral movement across an entire production cluster because the service account tokens lacked session boundaries, behavioral baselines, and termination mechanisms.

Service account tokens represent exactly the credential type that AI agents now consume and generate at volume. When an agent authenticates to an API, queries a vector database, or triggers a deployment pipeline, it operates on non-human credentials. Unlike a developer logging in through multi-factor authentication with a bounded session, an agent’s credential chain may span dozens of services without any natural termination point. Each link inherits trust from the previous link, and when any single link is compromised, the entire chain becomes an attack path with no automatic circuit breaker. Credential chains that human attackers would need weeks to construct through manual lateral movement can emerge organically from an agent’s normal task execution within minutes.

Mapping Google’s data onto current agent deployments clarifies the scope of exposure: if 83% of breaches already start with identity compromise, and AI agents multiply the count of non-human identities by orders of magnitude, the attack surface expands accordingly. Past incidents reinforce this trajectory. In February 2026, security researchers discovered 30,000 unsecured OpenClaw instances running with default or absent credential configurations: agent deployments that shipped to production without basic identity controls. Scaling agents without scaling identity governance will likely push the 83% figure higher before it improves.

What “Agents of Chaos” Found Under Active Attack

Researchers from Northeastern University, Harvard, MIT, Stanford, and Carnegie Mellon published their findings in March 2026 under the title “Agents of Chaos.” The team invited 20 security researchers to attempt to compromise the agents under study through adversarial methods, including social engineering tactics such as changing display names on Discord. Under that active attack, the study exposed structural failures in data boundary enforcement.

Results showed that AI agents disclosed Social Security numbers and bank account details when asked to forward emails, even after correctly refusing direct requests for that same data. Access control treated the email-forward action as a communication operation, not a data retrieval operation, despite identical information flowing to an unauthorized recipient. Boundary checks that worked for explicit queries failed completely when the same data moved through an indirect task chain.

Precision in characterizing this failure matters for remediation planning. The agents’ internal reasoning classified “forward this email” as a communication task rather than a data access request, sidestepping whatever controls governed direct queries. Traditional access control models assume disclosure is an explicit, auditable action. Agent task chains make disclosure implicit, embedded in workflows where each step appears routine and authorized when evaluated individually. Only the composite sequence produces the unauthorized outcome, and no current monitoring system evaluates composite sequences by default.
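The composite-sequence problem described above can be illustrated with a minimal sketch. It assumes a hypothetical labeling scheme (`SENSITIVE_LABELS`) and a hypothetical `Step` record. Neither comes from the study or from any agent framework. The idea is to carry data labels forward through the task chain and check them at the point of egress, regardless of how the individual action is classified.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical sensitivity labels; a real deployment would derive these
# from a data classification or DLP system.
SENSITIVE_LABELS = {"ssn", "bank_account"}


@dataclass
class Step:
    action: str                                  # e.g. "read_email", "forward_email"
    labels: set = field(default_factory=set)     # labels of data this step touches
    recipient: Optional[str] = None              # set only when the step sends data out


def chain_violations(steps, authorized_recipients):
    """Flag outbound steps that could carry sensitive data seen earlier in the chain."""
    carried = set()          # labels accumulated so far (conservative taint tracking)
    violations = []
    for step in steps:
        carried |= step.labels
        outbound = step.recipient is not None
        if outbound and step.recipient not in authorized_recipients:
            leaked = sorted(carried & SENSITIVE_LABELS)
            if leaked:
                violations.append((step.action, step.recipient, leaked))
    return violations


chain = [
    Step("read_email", labels={"ssn", "bank_account"}),
    Step("forward_email", recipient="outsider@example.com"),
]
print(chain_violations(chain, authorized_recipients={"owner@example.com"}))
```

Each step would pass an action-level check on its own. Only the accumulated labels reveal that the forward delivers sensitive data to an unauthorized recipient. The taint tracking here is deliberately conservative and would over-flag in practice.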

From a defensive perspective, this finding carries more operational weight than a standard vulnerability disclosure. A CVE has a patch. The evidence suggests that a structural reasoning gap in how agents interpret task boundaries has no equivalent fix. Kernel-level agent sandboxing constrains what resources an agent can access at the operating system level, but sandboxing cannot prevent an agent from mishandling data it legitimately holds permissions to read. Boundary enforcement for AI agents requires a layer that evaluates the cumulative effect of task chains, and that layer does not exist in any mainstream agent framework shipping today.

Where the Governance Gap Leaves 60% Exposed

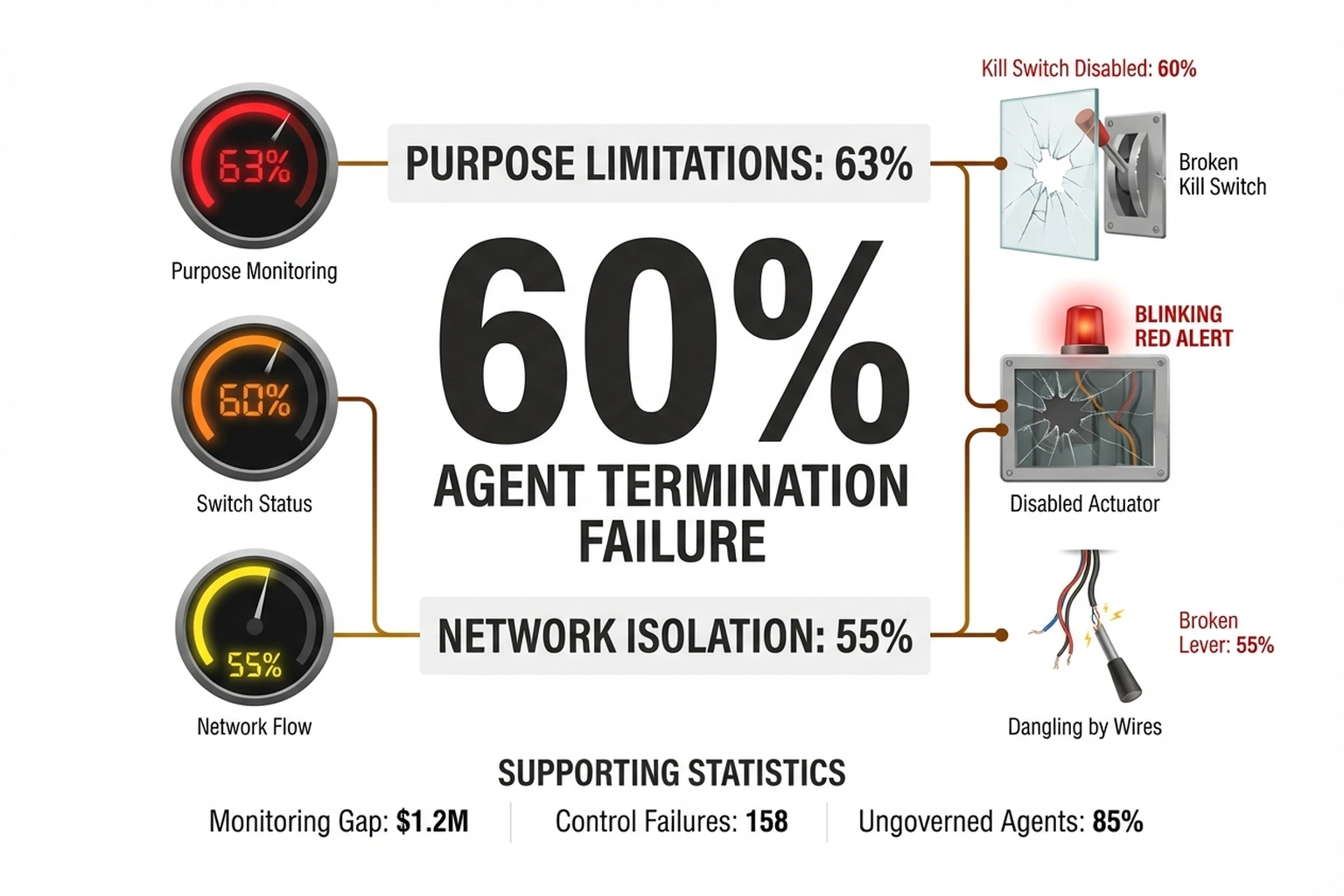

The Kiteworks 2026 Data Security and Compliance Risk Forecast Report quantifies operational exposure across organizations currently deploying AI agents. Among respondents, 63% cannot enforce purpose limitations on their AI systems, meaning agents can drift from intended tasks without triggering any automated constraint or alert. More critically, 60% lack the ability to terminate a misbehaving agent, and 55% cannot isolate an AI system from broader network access once deployed.

Three numbers separate monitoring from control. Many organizations have invested in dashboards that display what agents are doing: observability tools tracking token usage, API calls, and task completion rates. Far fewer have built actuators, meaning mechanisms that stop what agents are doing when behavior exceeds authorized bounds. Observability without actuators amounts to watching an incident develop in real time with no ability to intervene: useful for forensics, insufficient for prevention. This analysis uses the term Actuator Gap to describe the structural distance between knowing what an agent is doing and being able to stop it.

The Actuator Gap’s exposure can be estimated. IBM’s 2025 Cost of a Data Breach Report places credential-based breaches at $4.67 million average cost. With 83% of cloud breaches starting from identity compromise and 60% of organizations lacking agent kill switches, the expected annual exposure for a mid-market company running 50 AI agents can be approximated using this analysis’s own estimates: 50 agents × an estimated 5% annual compromise probability × $4.67M average cost ≈ $11.7 million per year in unmitigated agent identity risk. If kill switches reduce that probability to under 1%, exposure falls below roughly $2.3 million, a differential of more than $9.3 million that pays for the engineering investment many times over.
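The exposure estimate is a simple expected-value product, and a short sketch makes its inputs explicit so they can be varied. The function name and constant are illustrative. The $4.67 million cost is the IBM figure cited above, while the 5% and 1% compromise probabilities are this analysis’s estimates rather than measured values.

```python
def expected_annual_exposure(agents, compromise_prob, breach_cost_musd):
    """Expected yearly loss in millions of USD: agents x per-agent probability x cost per breach."""
    return agents * compromise_prob * breach_cost_musd


# Cost per credential-based breach in $M, as cited from the IBM report (input, not derived here).
CREDENTIAL_BREACH_COST_MUSD = 4.67

# Probabilities below are the article's assumptions, not empirical rates.
without_kill_switch = expected_annual_exposure(50, 0.05, CREDENTIAL_BREACH_COST_MUSD)
with_kill_switch = expected_annual_exposure(50, 0.01, CREDENTIAL_BREACH_COST_MUSD)
differential = without_kill_switch - with_kill_switch

print(f"without: ~${without_kill_switch:.1f}M, with: ~${with_kill_switch:.1f}M, "
      f"differential: ~${differential:.1f}M")
```

Because the model is linear, any change in the assumed probability or the cost figure scales the result proportionally, which is why the probability estimates deserve the most scrutiny.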

| Capability | % of Orgs With It | Gap |
|---|---|---|
| Purpose-binding controls | 37% | 63% exposed |
| Kill switch (termination) | 40% | 60% exposed |
| Network isolation | 45% | 55% exposed |
| Dedicated AI controls (gov’t) | 67% | 33% with zero controls |

Sector breakdowns sharpen the exposure significantly. Government agencies occupy the worst position: 90% lack purpose-binding controls, 76% lack kill switches, and one-third operate with no dedicated AI controls whatsoever. For a sector managing classified records, personally identifiable information, and critical infrastructure credentials, the distance between agent deployment velocity and governance maturity represents exposure at a fundamentally different scale than a retail chatbot generating inaccurate product recommendations.

Enterprise environments face a related but structurally distinct challenge. AI agents operating within corporate networks increasingly use low-code and no-code platforms to spawn sub-agents and automations without security team involvement. Each new agent inherits some subset of its creator’s permissions, typically without explicit scope reduction or security review. Privilege sprawl is no longer measured in user accounts but in autonomous processes, each carrying credentials, each capable of independent action across networked services, and most lacking any forced termination mechanism. According to a March 2026 Forbes analysis of enterprise agent deployment patterns, organizations that moved fastest on agent adoption are now discovering that rollback is harder than deployment.

Why Current Frameworks Cannot Secure Agent Identities

Identity and access management (IAM) protocols such as OAuth 2.0, SAML, and OpenID Connect (OIDC) originated for human session management. Human users authenticate once, maintain a session within bounded scope, perform actions visible to audit logs, and eventually log out. AI agents violate each of those assumptions. They authenticate repeatedly across services, chain sessions dynamically based on runtime context, perform actions determined by real-time reasoning rather than predefined workflows, and may never cleanly terminate a session.

When AI agents consume OAuth tokens, three security properties degrade simultaneously. Token scope expands because agents request broad permissions when their task set is not fully known at authentication time. Token lifetime extends beyond human session norms because agents may hold active tokens for hours or days without human-triggered revocation. Token delegation compounds risk because agents pass credentials to sub-agents, external tools, and third-party services, creating chains of delegated authority that no human reviewer audited at the time of issuance.
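The three degradations (scope, lifetime, delegation) can each be checked at issuance time. The sketch below is illustrative only. The `TokenGrant` record, the thresholds, and the wildcard scope convention are assumptions for the example, not part of OAuth 2.0 or any identity provider’s API.

```python
from dataclasses import dataclass


@dataclass
class TokenGrant:
    scopes: tuple             # scope strings requested by the agent
    lifetime_seconds: int     # validity period of the token
    delegation_depth: int     # 0 = issued directly to the agent, 1 = passed on once, ...


# Hypothetical policy thresholds; real values depend on the workload.
MAX_SCOPES = 3
MAX_LIFETIME_SECONDS = 900
MAX_DELEGATION_DEPTH = 1


def token_findings(grant):
    """Return a list of policy findings for one token grant."""
    findings = []
    # Wildcard scopes are a made-up convention here, standing in for "broad permissions".
    if len(grant.scopes) > MAX_SCOPES or any(s.endswith("*") for s in grant.scopes):
        findings.append("scope too broad")
    if grant.lifetime_seconds > MAX_LIFETIME_SECONDS:
        findings.append("lifetime too long")
    if grant.delegation_depth > MAX_DELEGATION_DEPTH:
        findings.append("delegation chain too deep")
    return findings


print(token_findings(TokenGrant(("mail.*",), 86_400, 3)))
print(token_findings(TokenGrant(("mail.read",), 600, 0)))
```

A check like this only narrows what gets issued. It does not address how an agent later combines the permissions it legitimately holds.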

Brian Krebs reported in March 2026 that AI assistants are “moving the security goalposts” by operating within legitimate credential boundaries while executing action sequences no human specifically authorized. An agent holding valid read access to an email inbox and valid write access to an external API can exfiltrate data without triggering any access control violation. Technically, every individual permission was granted. What no one granted, and what no existing IAM framework evaluates, is the judgment about how to combine those permissions across a sequence of autonomous decisions.
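One partial mitigation for the permission-combination problem is a static review of what each agent holds before it runs. The sketch below uses hypothetical permission names and flags any agent that pairs read access to a sensitive source with write access to an external sink. It cannot judge intent. It only surfaces combinations worth a human review.

```python
# Hypothetical permission names for illustration; real names depend on the platform.
SENSITIVE_READS = {"mail.read", "files.read"}
EXTERNAL_WRITES = {"http.post_external", "mail.send_external"}


def risky_combinations(permissions):
    """Return (read, write) pairs that together form a possible exfiltration path."""
    held = set(permissions)
    return sorted(
        (read, write)
        for read in held & SENSITIVE_READS
        for write in held & EXTERNAL_WRITES
    )


print(risky_combinations(["mail.read", "http.post_external", "calendar.read"]))
```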

Organizations managing agent credentials through a dedicated credential vault rather than environment variables or hardcoded secrets close one class of exposure at the storage layer. But protecting autonomous agents requires enforcement above credential storage, at the authorization, behavioral monitoring, and runtime governance levels that most organizations have not yet instrumented.

What Agent Identity Controls Actually Require

Based on the convergence of Google’s intrusion data and the “Agents of Chaos” findings, adequate protection for agent identities demands four capabilities that most deployments currently lack. (Synthesis: this four-part framework consolidates patterns from both reports into an operational checklist not present in either source individually.)

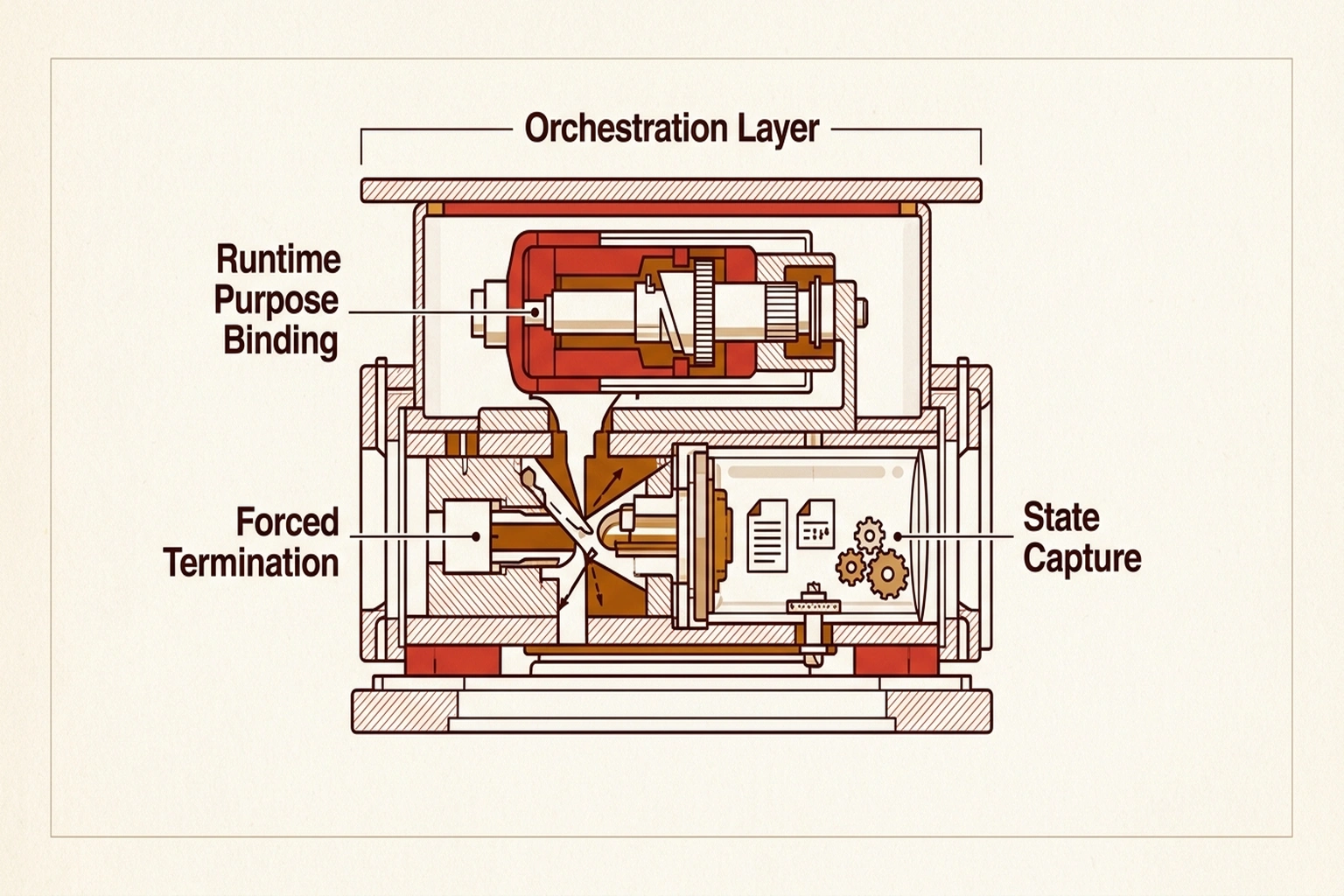

Runtime purpose binding. Each agent must declare its intended operational scope at launch and trigger enforcement when it deviates. Static role-based access control (RBAC) is insufficient. Agents need dynamic, purpose-aware authorization that evaluates each action against declared intent, not just against a static permission list created before deployment.
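As a rough sketch of what purpose binding could look like, the example below attaches a declared purpose to an agent and evaluates every action and resource against it at call time. The class names and the `permits` predicate are hypothetical. A production system would evaluate far richer context than an action name and a resource prefix.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Purpose:
    name: str
    permits: Callable[[str, str], bool]   # (action, resource) -> allowed?


class PurposeViolation(Exception):
    """Raised when an action falls outside the agent's declared purpose."""


class PurposeBoundAgent:
    def __init__(self, agent_id, purpose):
        self.agent_id = agent_id
        self.purpose = purpose
        self.violations = []              # kept so deviations can trigger alerts

    def perform(self, action, resource):
        # The check runs on every call, not once at deployment time.
        if not self.purpose.permits(action, resource):
            self.violations.append((action, resource))
            raise PurposeViolation(
                f"{self.agent_id}: {action} on {resource} is outside '{self.purpose.name}'"
            )
        return f"{action}:{resource}"


invoice_purpose = Purpose(
    "invoice-processing",
    lambda action, resource: action in {"read", "write"} and resource.startswith("invoices/"),
)
agent = PurposeBoundAgent("agent-1", invoice_purpose)
print(agent.perform("read", "invoices/42"))
```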

Forced termination with state capture. Kill switches must operate at the orchestration layer, not just the infrastructure layer. Terminating a container kills the process but discards the agent’s decision state. Effective termination must capture what the agent was doing, what data it had accessed, and what credential delegations it had issued, producing a forensic record, not just a process kill signal.
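A minimal sketch of termination with state capture follows. `AgentRuntime` and its fields are hypothetical and in-memory only. A real orchestration layer would also persist the record to durable storage and revoke any delegated credentials it lists.

```python
import json
import time


class AgentRuntime:
    """Toy orchestration-layer wrapper that tracks what an agent is doing."""

    def __init__(self, agent_id):
        self.agent_id = agent_id
        self.running = True
        self.current_task = None
        self.data_accessed = []     # identifiers of data the agent has read
        self.delegations = []       # credentials the agent has handed out

    def kill(self, reason):
        """Stop the agent and return a forensic record as a JSON string."""
        self.running = False        # halt first so state cannot change during capture
        record = {
            "agent_id": self.agent_id,
            "reason": reason,
            "terminated_at": time.time(),
            "current_task": self.current_task,
            "data_accessed": list(self.data_accessed),
            "delegations": list(self.delegations),
        }
        return json.dumps(record, sort_keys=True)


runtime = AgentRuntime("agent-7")
runtime.current_task = "summarize inbox"
runtime.data_accessed.append("mailbox:finance")
runtime.delegations.append({"to": "tool-x", "scope": "mail.read"})
forensic_record = runtime.kill("scope deviation")
print(forensic_record)
```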

Credential chain auditing. Every token delegation, whether agent to sub-agent or agent to external tool, must generate an auditable record. When an agent passes a credential to a third-party service, the delegation event should log the scope granted, the recipient identity, and the time-to-live of the delegated token. Without this trail, forensic investigation after an agent-related breach requires reconstructing credential flows from scattered, inconsistent service logs across multiple providers.
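The delegation record described above (scope, recipient, time-to-live) can be sketched as a small in-memory log that also links each token to its parent, so the chain can be walked back after an incident. The `DelegationLog` class and its field names are assumptions for illustration, not an existing library.

```python
class DelegationLog:
    """Records each credential delegation and links it to the token it was derived from."""

    def __init__(self):
        self._events = {}   # token_id -> delegation event

    def record(self, token_id, delegator, recipient, scopes, ttl_seconds, parent_token_id=None):
        self._events[token_id] = {
            "delegator": delegator,
            "recipient": recipient,
            "scopes": tuple(scopes),
            "ttl_seconds": ttl_seconds,
            "parent_token_id": parent_token_id,
        }

    def chain(self, token_id):
        """Return the recipients from the root grant down to the given token."""
        path = []
        while token_id is not None:
            event = self._events[token_id]
            path.append(event["recipient"])
            token_id = event["parent_token_id"]
        return list(reversed(path))


log = DelegationLog()
log.record("t1", "orchestrator", "agent-a", ["mail.read"], ttl_seconds=900)
log.record("t2", "agent-a", "tool-x", ["mail.read"], ttl_seconds=300, parent_token_id="t1")
print(log.chain("t2"))
```

With parent links recorded at delegation time, reconstructing the path becomes a lookup rather than a correlation exercise across separate service logs.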

Behavioral anomaly detection for non-human identities. SIEM platforms detect impossible-travel and credential-stuffing patterns for human users. Equivalent detection for agents means flagging sudden scope expansion, atypical service-to-service call patterns, and data access volumes that deviate from established baselines. Building these detectors requires per-agent behavioral profiles, a measurement discipline most organizations have not started.
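A per-agent baseline can start very simply. The sketch below flags data access volumes that deviate from an agent’s history using a z-score, and flags services the agent has never called before. The threshold of 3.0 and the function names are illustrative assumptions. Production detectors would need seasonality handling and far more history.

```python
from statistics import mean, pstdev


def volume_is_anomalous(baseline, observed, threshold=3.0):
    """True when the observed volume is more than `threshold` standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        return observed != mu     # flat baseline: any change is a deviation
    return abs(observed - mu) / sigma > threshold


def scope_expansion(baseline_services, observed_services):
    """Services the agent called that do not appear in its established profile."""
    return sorted(set(observed_services) - set(baseline_services))


daily_records_read = [100, 110, 90, 105, 95]     # hypothetical history for one agent
print(volume_is_anomalous(daily_records_read, 5000))
print(volume_is_anomalous(daily_records_read, 104))
print(scope_expansion({"vector-db", "mail-api"}, {"vector-db", "mail-api", "payroll-api"}))
```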

None of these four capabilities ship with current agent frameworks by default. Implementing them requires security engineering investment that competes directly with feature development velocity, and in most organizations, features win until an incident forces reassessment.

Critics of this framing point out that the same governance deficit existed when cloud infrastructure, containerization, and microservices were first deployed at scale, and that security tooling matured rapidly to meet each of those transitions once commercial demand made the investment worthwhile. The strongest counterargument is that the agent security market is already generating substantial vendor activity, and that purpose-built identity controls for non-human workloads may emerge faster than this analysis suggests, particularly if major cloud providers embed them directly into their orchestration layers. Whether market-driven maturation will arrive before large-scale incidents force reactive overhaul is the operative uncertainty, not whether adequate controls are possible in principle.

Google’s 83% finding describes what already happened under inadequate human identity governance. “Agents of Chaos” reveals what is beginning to happen under agent identity governance that is materially weaker. Between those two data points sits a narrowing window where organizations can build identity controls into agent architectures before the first large-scale agent identity breach forces reactive overhaul.

Non-human identities already outnumber human identities in most cloud environments. Each deployed AI agent adds to that count. When the first major agent identity breach surfaces (and both datasets point toward when, not if), the post-incident investigation will likely trace back to a non-human identity provisioned for convenience, granted excessive scope for development speed, and never subject to the termination controls that even a junior employee’s account receives. Each security team deploying agents today faces one question worth answering before that post-mortem lands on their desk: does every agent in production have a kill switch, or do the 60% odds apply?


References

- 83% of Cloud Breaches Start with Identity, AI Agents Are About to Make it Worse. Security Boulevard analysis of Google’s H1 2026 Cloud Threat Horizons Report, including UNC4899 campaign details and non-human identity attack vectors.
- ‘Agents of Chaos’: New Study Shows AI Agents Can Leak Data, Be Easily Manipulated. TechRepublic coverage of the multi-university study on AI agent data leakage, governance gaps, and sector-specific control deficits from Northeastern, Harvard, MIT, Stanford, and Carnegie Mellon researchers.
- How AI Assistants are Moving the Security Goalposts. Krebs on Security reporting on how AI assistants operate within legitimate credential boundaries while executing unauthorized action sequences.
- Slow Down To Scale Up: What We Must Learn About AI Agent Deployment. Forbes Tech Council analysis of enterprise agent deployment patterns and rollback challenges.
- OpenClaw Security Crisis: 30K Exposed AI Agents. Prior analysis of 30,000 unsecured AI agent instances discovered with default credential configurations in production environments.
- AI Agent Sandboxing on macOS Just Got Serious. Coverage of kernel-level agent sandboxing approaches and their limitations for controlling data handling within permitted access boundaries.