Fines of up to €35 million — enough to employ more than 700 full-time AI safety inspectors, if any existed to hire. Penalties reaching 7 percent of global annual turnover. The European Union’s AI Act is, by statute, the most punitive AI regulation ever written. As of March 2026, it is also functionally unenforceable across 70 percent of its own jurisdiction.

A March 2026 European Parliament Think Tank analysis quantifies the problem: only 8 of 27 member states have designated market surveillance authorities, seven months past the August 2025 deadline. Social scoring is prohibited. Manipulative AI systems are banned. Real-time biometric surveillance is restricted. These prohibitions are technically binding from Lisbon to Helsinki. If you discover a violation in Paris, Warsaw, or Amsterdam today, there is no one to call.

The toughest AI law on Earth currently has no police.

Nineteen Governments, Zero Investigators

The Commission’s own market surveillance authority registry shows which eight and which nineteen. Five member states (Cyprus, Ireland, Italy, Latvia, and Lithuania) have completed designations. Three more (Luxembourg, Slovenia, and Spain) show selections pending final approval. France, Germany, the Netherlands, Poland, and Belgium, the five largest AI markets in continental Europe, list nothing at all. The countries most likely to produce and deploy high-risk AI systems are the ones with zero enforcement apparatus.

Eight jurisdictions, seven months late, constitute the whole of the enforcement footprint.

For the nineteen member states with no designation, no public timeline exists.

That absence is not neutral — it is an incentive.

How the Void Feeds Itself

No single source has connected the supply-side enforcement vacuum to the demand-side deployment surge. Gravitee’s State of AI Agent Security 2026 survey of 900+ executives and practitioners found 80.9 percent of technical teams have pushed AI agents into testing or production — while only 14.4 percent secured full security approval.

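Cross the datasets. 80.9% of enterprise teams are running AI agents in testing or production, most of them without full security approval (Gravitee, State of AI Agent Security 2026). 70.4% of EU member states, 19 of 27, lack a designated external enforcer (EP Think Tank). Multiply the two, treating them as independent: 80.9% × (19 ÷ 27) ≈ 56.9%.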

More than half of enterprise AI deployments in the EU face neither internal approval nor external enforcement.
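The cross-multiplication above can be sketched in a few lines. This is a rough illustration, not a model from either report: the inputs are the figures quoted in this article, and the assumption that the two gaps are statistically independent is ours.

```python
# Figures quoted in the article; the independence assumption is ours.
TEAMS_DEPLOYING = 0.809   # Gravitee: teams with agents in testing or production
MEMBER_STATES = 27
DESIGNATED = 8            # EP Think Tank: states with a designated authority


def unwatched_share(deploying: float, designated: int, total: int) -> float:
    """Share of deployments facing neither check, assuming independent gaps."""
    no_authority = (total - designated) / total
    return deploying * no_authority


share = unwatched_share(TEAMS_DEPLOYING, DESIGNATED, MEMBER_STATES)
print(f"{share:.1%}")  # prints 56.9%
```

If deployment concentrates in the states without an authority, as the registry suggests, the true share would sit above this estimate.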

What the EP Think Tank and Gravitee reveal together is the Potemkin Compliance Effect: 57 percent of enterprise AI in the EU is technically regulated and practically unwatched, because the regulation’s reputation substitutes for its operation. The mechanism is precise: the AI Act requires conformity assessments against harmonized standards — but CEN and CENELEC have published only 15 of the 45 required standards, missing their own 2025 deadline. A company claiming “AI Act readiness” today is claiming conformity against standards that, in 67 percent of cases, do not yet exist. The claim is structurally unfalsifiable — not because verification is difficult, but because the thing to verify against has not been written.

This is not a gap in will. It is a gap in reality.

The dynamic is self-reinforcing. Enterprises cite the AI Act to satisfy board-level compliance questions without completing conformity assessments, partly because the standards to assess against are still under development. National governments cite the Commission’s enforcement role to defer their own designations. Each party treats the others’ activity as proof the system works. None tests whether it does. And while every participant points elsewhere, 88 percent of organizations reported confirmed or suspected AI agent security incidents in the past year: real harms accumulating in the space between the regulation’s reputation and its reach.

Sebastiano Toffaletti, Secretary General of DIGITAL SME, has advocated “delaying enforcement for SMEs until at least six months after all standards, sandboxes and guidance are operational,” warning that companies otherwise face “costly compliance guesswork” (EU AI Act Newsletter #89). The position diagnoses the void as a fixable design flaw. The Potemkin Compliance Effect suggests it is closer to an emergent equilibrium that benefits every participant except the populations the Act exists to protect. But equilibria can be modeled — and the mathematics of this one are worse than the politics.

When the Law’s Reputation Replaces Its Police

Up to this point, the enforcement gap reads as bureaucratic delay — slow, fixable, familiar. Every EU regulation runs late. Institutions need time to hire. The conventional wisdom says: be patient, enforcement will catch up, it always does.

Model the mathematics and the pattern inverts.

Enforcement probability per enterprise equals capacity divided by the product of regulated entities and jurisdictions served. The conventional wisdom assumes capacity and the regulated population grow at roughly the same rate — regulators staff up as the market matures. But the AI Act broke that assumption. As the Act’s reputation accelerates deployment — 80.9 percent of teams already active — the denominator expands. As those enterprises push into France, Poland, and Belgium, markets where no designated authority exists, the jurisdictional count grows with them. The numerator — actual enforcement capacity — is bounded by staffing, expertise, and the fact that harmonized standards remain two-thirds incomplete.
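Written out, the relationship described above looks like this. The symbols are our own shorthand, not notation from the Act or the cited reports: C is enforcement capacity, N is the number of regulated entities, and J is the number of jurisdictions those entities operate in.

```latex
P_{\text{enforce}} = \frac{C}{N \cdot J}
```

With C bounded and both N and J growing, the probability that any one enterprise is examined falls toward zero.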

Multiply the gaps: 8 designated jurisdictions out of 27 × 33% standards completion = effective enforcement coverage of 2.7 jurisdictions out of 27.

The real regulatory enforcement gap is not 70 percent. It is 90 percent.
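A minimal sketch of that coverage arithmetic, using only the counts quoted in this article. Discounting designated jurisdictions by the share of published standards is the article’s own simplification; the function and variable names are ours.

```python
DESIGNATED = 8            # member states with a designated authority
MEMBER_STATES = 27
STANDARDS_PUBLISHED = 15  # harmonized standards published by CEN and CENELEC
STANDARDS_REQUIRED = 45


def effective_coverage(designated: int, total_states: int,
                       published: int, required: int) -> tuple[float, float]:
    """Return (effective jurisdictions, share of the EU effectively covered)."""
    standards_ratio = published / required
    effective_states = designated * standards_ratio
    return effective_states, effective_states / total_states


effective_states, coverage = effective_coverage(
    DESIGNATED, MEMBER_STATES, STANDARDS_PUBLISHED, STANDARDS_REQUIRED
)
print(f"{effective_states:.1f} of {MEMBER_STATES} jurisdictions")  # 2.7 of 27
print(f"enforcement gap: {1 - coverage:.0%}")                      # 90%
```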

Now calculate what accumulates in that 90 percent void. The EU’s AI market comprises an estimated 10,000+ companies deploying or developing AI systems that would qualify as high-risk under the Act. Gravitee’s data shows 80.9 percent are already active. At 90 percent enforcement absence, that is 10,000 × 80.9% × 90%, or roughly 7,300 companies deploying high-risk AI with no functional oversight in any given month.

Seven months have passed since the August 2025 deadline. That is approximately 51,000 unaudited company-months of high-risk AI deployment already banked in the void. By the time the most optimistic enforcement timeline contemplates full coverage — late 2027 at earliest — the backlog will exceed 130,000 company-months. Every month of delay does not merely postpone enforcement. It manufactures the retroactive caseload that will overwhelm enforcers the moment they arrive.
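The backlog figures can be reproduced with a short sketch. It assumes, as the article does, that the number of unaudited companies stays constant from month to month; the inputs are the article’s estimates and the helper names are ours.

```python
import math

HIGH_RISK_COMPANIES = 10_000  # article's estimate of high-risk deployers
ACTIVE_SHARE = 0.809          # Gravitee: share already active
ENFORCEMENT_ABSENCE = 0.90    # effective enforcement gap derived above


def unaudited_per_month(companies: int, active: float, absence: float) -> float:
    """Companies deploying with no functional oversight in a given month."""
    return companies * active * absence


def backlog(per_month: float, months: int) -> float:
    """Accumulated company-months, assuming a constant monthly count."""
    return per_month * months


def months_to_reach(target: float, per_month: float) -> int:
    """Whole months needed for the backlog to reach the target."""
    return math.ceil(target / per_month)


per_month = unaudited_per_month(
    HIGH_RISK_COMPANIES, ACTIVE_SHARE, ENFORCEMENT_ABSENCE
)
print(f"{per_month:,.0f} companies per month")
print(f"{backlog(per_month, 7):,.0f} company-months after 7 months")
print(f"{months_to_reach(130_000, per_month)} months to pass 130,000")
```

Unrounded inputs give about 7,281 companies per month and about 50,967 company-months after seven months, which the article rounds to 7,300 and 51,000. At that constant rate the backlog passes 130,000 after 18 months, so any timeline that reaches late 2027 clears it comfortably.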

This is where the conventional reading, “slow but fixable,” collapses. In a normal regulatory delay, the gap shrinks as institutions mature. Here, the gap compounds. The Act’s reputation drives deployment, deployment outruns enforcement, and the resulting backlog makes future enforcement harder, not easier.

Before the Act, enterprises deploying high-risk AI had to build their own risk frameworks — internal governance was the only friction. After the Act, that friction vanished in perception. “Compliant with the AI Act” replaced “built an internal risk framework.” But compliance cannot be verified when enforcers are absent and standards are incomplete.

Regulation did not add a constraint. It removed one.

That sentence is the structural diagnosis. The AI Act did not fail to regulate — it succeeded in creating the belief that regulation exists, which is worse than no regulation at all, because it displaced the self-governance that preceded it. Each month enforcement remains absent across those nineteen jurisdictions, unaudited deployments compound — and each deployment makes the eventual arrival of enforcers more consequential.

Gravitee’s survey is self-reported; the EP Think Tank tracks formal notifications rather than informal readiness. Even so, the structural overlap — neither deployer nor regulator maintaining operational oversight — requires no methodological leap to identify.

Brussels Was Slow Before — and It Worked Fine

A senior EU policy official would build a strong case for patience. GDPR took effect in May 2018 and collected roughly €56 million in fines during its first nine months — 90 percent from a single Google penalty by France’s CNIL. Most regulators spent that first year staffing up. Kai Zenner, Digital Policy Adviser in MEP Axel Voss’s office, has argued that the AI Act’s Scientific Panel should prioritize recruiting “world-renowned AI researchers” over rushing national appointments — competence before speed (EU AI Act Newsletter #89).

Zenner’s argument is reasonable in isolation. But it overlooks what Gravitee’s deployment data makes unavoidable: 80.9 percent of enterprise teams are not waiting for world-renowned researchers to staff a Scientific Panel. They are shipping AI agents now, into jurisdictions with no oversight apparatus at all. Competence-first assumes the regulated market will hold still while institutions mature. The deployment surge says it will not.

That defense fractures on three structural points. First, GDPR enforced rules over data practices companies already performed — every organization had customer databases. AI Act conformity assessments cover systems enterprises are still building and deploying in real time. The target moves while the enforcer loads. Second, GDPR’s decentralized model worked because every member state already had a data protection authority, institutions predating the regulation by decades. The AI Act requires new surveillance bodies built from scratch in countries where none previously existed. Building an institution takes longer than expanding one. Third, and critically: GDPR did not generate a reputational signal that companies treated as a compliance substitute. No serious enterprise claimed “GDPR compliant” before appointing a data protection officer — the requirement was concrete, verifiable, binary. Companies are claiming AI Act readiness against standards that are 67 percent unwritten, in jurisdictions where the authority to audit that claim does not exist. The compliance claim and the compliance reality have fully decoupled.

The structural difference is decisive. GDPR graduated from slow enforcement to effective enforcement over four years. The AI Act risks graduating from no enforcement to retroactive enforcement — regulators arriving to audit not just current systems but an accumulated backlog of over 130,000 unaudited company-months of high-risk deployments. The question is no longer whether enforcement arrives, but whether you can prove what you did before it did.

Score Your Jurisdiction Before Enforcement Scores You

The following Enforcement Surface Score converts the void into a measurable risk input for compliance and legal teams:

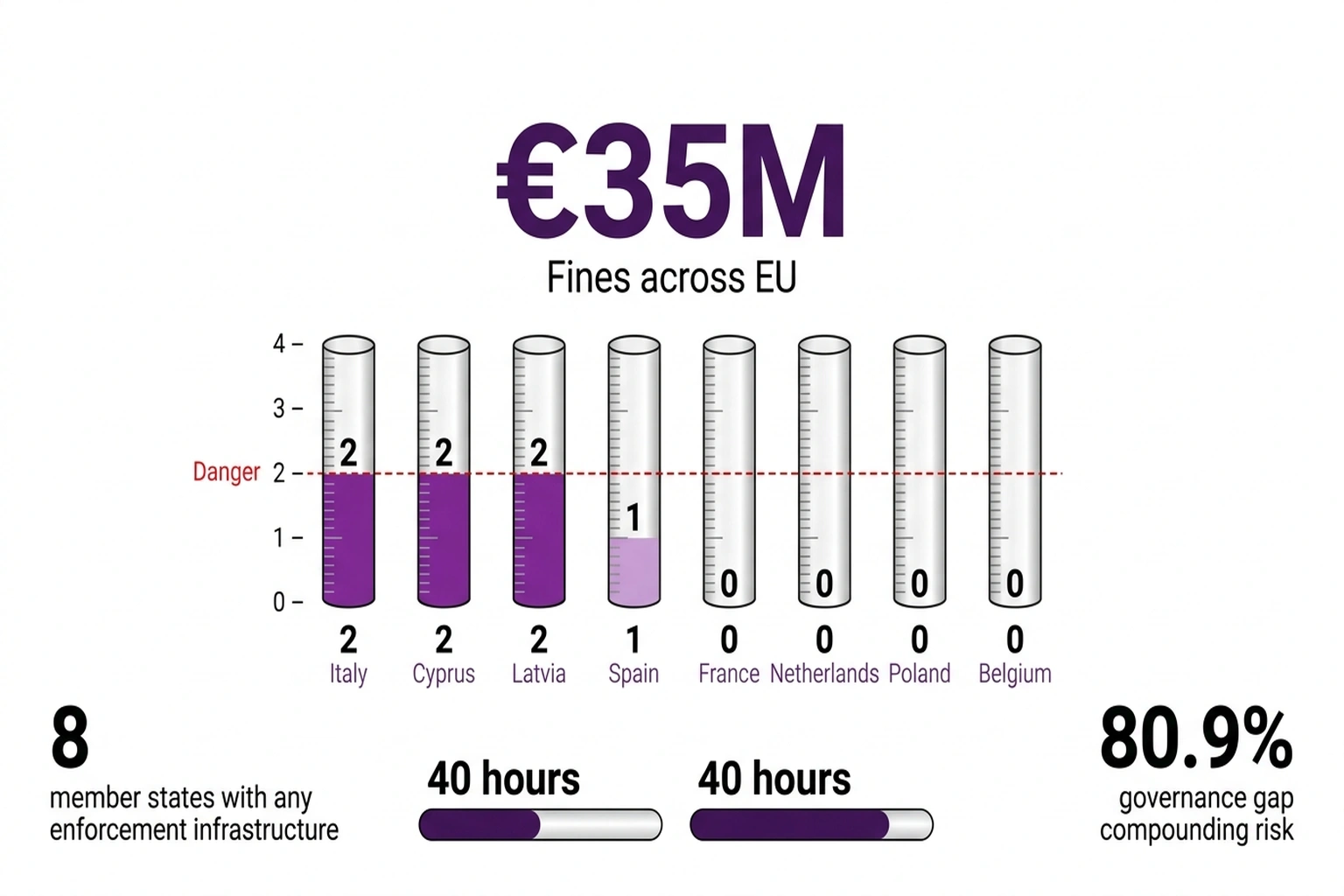

ENFORCEMENT SURFACE SCORE (0–4 per jurisdiction)

- +1 Designated market surveillance authority exists
- +1 Single point of contact notified to Commission
- +1 Regulatory sandbox operational or legislated
- +1 National adoption of ≥1 harmonized standard

Current scores (March 2026):

| Jurisdiction | Score |
| --- | --- |
| Italy | 2 |
| Cyprus | 2 |
| Latvia | 2 |
| Spain | 1 |
| France | 0 |
| Poland | 0 |
| Netherlands | 0 |
| Belgium | 0 |

If score < 2 AND deploying high-risk AI:

- Begin voluntary conformity documentation now
- Budget ~40 hours per high-risk system
- Designate an internal AI compliance contact
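For teams that want to track the score in code, here is a minimal sketch. The class, field names, and the example jurisdiction are hypothetical illustrations; only the four criteria, the threshold of 2, and the ~40-hour budget come from the scorecard.

```python
from dataclasses import dataclass

HOURS_PER_HIGH_RISK_SYSTEM = 40  # rough budget suggested in the scorecard


@dataclass(frozen=True)
class Jurisdiction:
    """One member state and the four scorecard criteria (field names are ours)."""
    name: str
    authority_designated: bool
    contact_notified: bool
    sandbox_ready: bool
    standard_adopted: bool

    def score(self) -> int:
        """Enforcement Surface Score, 0 to 4: one point per criterion met."""
        return sum((
            self.authority_designated,
            self.contact_notified,
            self.sandbox_ready,
            self.standard_adopted,
        ))


def needs_voluntary_documentation(j: Jurisdiction, deploys_high_risk: bool) -> bool:
    """Apply the scorecard rule: score below 2 AND deploying high-risk AI."""
    return deploys_high_risk and j.score() < 2


def documentation_budget_hours(high_risk_systems: int) -> int:
    """Rough documentation effort at ~40 hours per high-risk system."""
    return high_risk_systems * HOURS_PER_HIGH_RISK_SYSTEM


# Hypothetical jurisdiction: authority designated, nothing else in place.
example = Jurisdiction("Example State", True, False, False, False)
print(example.score())                               # 1
print(needs_voluntary_documentation(example, True))  # True
print(documentation_budget_hours(3))                 # 120
```

Which criteria a real member state meets has to be checked against the Commission registry; the sketch deliberately does not encode that data.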

Yet only 21.9 percent of organizations treat AI agents as independent identity-bearing entities (Gravitee 2026 Enterprise Agent Risk Report). In jurisdictions scoring 0 on the Enforcement Surface Score, that governance vacuum compounds unchecked. As Jorge Ruiz, Director of Product Marketing at Gravitee, put it: “Security must shift from periodic, manual audits to continuous, identity-aware enforcement.”

Calculate the cost of inaction, first for one company and then for the single market. Take a company with €200 million in annual turnover deploying high-risk AI across three jurisdictions scoring 0. The lowest penalty tier under Article 99, for supplying incorrect information to authorities, carries fines of up to 1 percent of global turnover: €2 million per jurisdiction for this company. That figure is a ceiling, not a floor, so it measures exposure rather than a guaranteed bill. One such violation in each market yields €6 million in annual penalty exposure during the enforcement void. Proactive conformity documentation costs a fraction of that figure.

Now scale that arithmetic. If even 10 percent of the estimated 7,300 companies currently deploying high-risk AI without functional oversight carry the same lowest-tier exposure upon enforcement arrival, the aggregate retroactive penalty exposure across the EU reaches approximately €4.4 billion, calculated as 730 companies × €6 million average exposure. That figure rivals the entire GDPR fine total of €4.5 billion accumulated over seven years. The AI Act’s enforcement void is not building a compliance backlog. It is building a penalty cliff that could match GDPR’s lifetime output in a single regulatory cycle.

The annual cost of treating the void as permission: €6 million in deferred penalty risk at the lowest tier for a single mid-cap deployer. The systemic cost: a single-market penalty exposure that rivals GDPR’s entire enforcement history, compressed into the first wave of retroactive audits.
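The exposure arithmetic can be checked with a short sketch. All inputs are the article’s illustrative figures, the 1 percent rate is the turnover-based figure for the lowest Article 99 tier, and the function names are ours. Integer arithmetic in basis points keeps the euro amounts exact.

```python
TURNOVER_EUR = 200_000_000     # illustrative mid-cap deployer
LOWEST_TIER_BPS = 100          # 1% of global turnover, in basis points
JURISDICTIONS = 3              # markets scoring 0 in the example
UNOVERSEEN_COMPANIES = 7_300   # article's estimate
SHARE_PENALISED_PCT = 10       # "even 10 percent" scenario


def exposure_per_company(turnover: int, rate_bps: int, jurisdictions: int) -> int:
    """Lowest-tier exposure: one violation in each jurisdiction."""
    per_jurisdiction = turnover * rate_bps // 10_000
    return per_jurisdiction * jurisdictions


def aggregate_exposure(companies: int, share_pct: int, per_company: int) -> int:
    """Total exposure if the given share of companies each face per_company."""
    penalised = companies * share_pct // 100
    return penalised * per_company


per_company = exposure_per_company(TURNOVER_EUR, LOWEST_TIER_BPS, JURISDICTIONS)
total = aggregate_exposure(UNOVERSEEN_COMPANIES, SHARE_PENALISED_PCT, per_company)
print(f"€{per_company / 1e6:.0f} million per company")  # €6 million
print(f"€{total / 1e9:.2f} billion across the EU")      # €4.38 billion
```

The aggregate applies the €200 million company’s exposure to every penalised firm, so it is a scenario, not a forecast; smaller firms would carry proportionally less.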

The UK’s parallel experience with AI copyright framework uncertainty offers a warning: regulatory voids do not resolve in favor of those who exploited them. The pattern echoes AI procurement rules that found 73 percent of systems carrying material risk while legislative frameworks stalled. What distinguishes this instance is the penalty structure waiting on the other side.

By August 2026, when the full risk-classification framework takes effect, the Commission faces a question the AI Act text does not answer: penalize 19 governments for institutional delay, or penalize the companies that treated the void as license. For compliance officers assessing regulatory enforcement readiness this quarter, the math is simpler. Score each market: does a designated authority exist, is it staffed, has it acted? In 19 member states, the honest answer to all three is no. The regulation is real. The regulator is not — yet.

References

- Enforcement of the AI Act — European Parliament Think Tank analysis of enforcement readiness across 27 member states

- EU AI Act Article 99: Fines — Penalty structure under the AI Act, including maximum fines of €35 million or 7% of global turnover

- Market Surveillance Authorities Under the AI Act — European Commission registry of designated national enforcement bodies

- State of AI Agent Security 2026 Report — Gravitee survey of 900+ executives on enterprise AI agent governance gaps

- EU AI Act Newsletter #89: Standards Acceleration Updates — Standards development status, expert commentary, and enforcement readiness

- GDPR Fines So Far — GDPR enforcement data for regulatory ramp-up comparison

- AI Procurement Rules: 73% Risk Rate — Decoded AI Tech analysis of procurement-stage risk findings