Part 2 of 6 in the AI Agent Crisis series.

On March 11, Flashpoint released its 2026 Global Threat Intelligence Report. One number dominated: a 1,500% month-over-month surge in AI-related illicit activity. Five days later, Interpol published its own assessment: [$442 billion in global financial fraud losses](https://www.interpol.int/en/Crimes/Financial-crime/Global-Financial-Fraud-Assessment) for 2025. Two reports, two organizations, one verdict: autonomous AI agents are now running complete fraud chains without a human criminal touching the keyboard.

That $442 billion landed the same week most banks were finalizing budgets for “AI-powered fraud detection” upgrades. Agentic AI fraud makes those purchases look like buying a smoke detector for a building already on fire. Every tool in the current defensive stack (behavioral analytics, pattern-matching engines, LLM-based phishing filters) was designed to catch attacks with a human somewhere in the loop. The attackers just removed the human.

Exhibit A: The Numbers Behind the Agentic AI Fraud Surge

Flashpoint’s GTIR tracked 3.3 billion compromised credentials circulating across illicit marketplaces in 2025, each one a potential entry point for an autonomous attack sequence. Mass exploitation of zero-day vulnerabilities now occurs within 24 hours of discovery, down from weeks or months in prior years. Josh Lefkowitz, CEO and co-founder of Flashpoint, described the shift as enabling “machine-speed operations” for cybercriminals.

Translation for anyone still reviewing last year’s fraud playbook: the gap between vulnerability disclosure and criminal exploitation has collapsed from a window to a hairline crack. Banks used to have days or weeks to patch and respond. Now the autonomous attack often hits before most security teams finish reading the advisory.

Palo Alto Networks flagged this exact trajectory in September 2025, calling agentic AI a “looming board-level security crisis.” Six months later, the risk materialized, and the economics suggest it is not a temporary spike. Fraud schemes powered by agentic AI are 4.5 times more profitable than traditional methods. Old-school fraud required human operators at every stage: reconnaissance, social engineering, mule coordination, extraction. Agentic AI collapses that entire chain into a single automated workflow running at scale.

Twelve months ago, AI-assisted cybercrime mostly meant large language models writing better phishing emails. The 2026 GTIR marks the inflection point where AI stopped being the tool and became the operator. That shift from “AI-assisted” to “AI-operated” is what the 1,500% surge reflects: not a gradual volume increase, but a wholesale change in how fraud gets done.

The surge data alone doesn’t explain why banks are losing, though. The economics do.

Exhibit B: The $134-Per-Credential Math and the 225x Force Multiplier

Neither Flashpoint nor Interpol published this figure, but the arithmetic is straightforward. Dividing Interpol’s $442 billion in fraud losses by Flashpoint’s 3.3 billion compromised credentials yields approximately $134 in average fraud value per stolen credential. That’s a rough figure, since not every credential leads to a successful attack, but it reveals a structural shift that single-source reports miss.
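The division above can be checked in a few lines. This is a minimal sketch that assumes, as the text does, that losses are spread evenly across all compromised credentials; the constant and function names are illustrative only.

```python
# Figures taken from the two reports cited in this article.
INTERPOL_FRAUD_LOSSES_USD = 442e9   # Interpol: 2025 global fraud losses
FLASHPOINT_CREDENTIALS = 3.3e9      # Flashpoint: compromised credentials in 2025


def value_per_credential(losses_usd: float, credentials: float) -> float:
    """Average fraud value per credential, assuming an even spread (a simplification)."""
    return losses_usd / credentials


per_credential = value_per_credential(INTERPOL_FRAUD_LOSSES_USD, FLASHPOINT_CREDENTIALS)
print(f"Average fraud value per credential: ${per_credential:,.2f}")
```

The result rounds to the $134 used throughout the rest of this analysis.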

Under the old model, monetizing a stolen credential required significant manual work: account verification, social engineering calls, mule coordination, layered fund transfers. Success rates were low and the criminal’s time was the bottleneck. Agentic AI eliminates that bottleneck entirely. An autonomous agent can ingest a credential, verify it against live systems, generate a deepfake for identity verification, run adaptive social engineering, and route funds through layered accounts, all without human guidance.

The marginal cost per attack drops to near zero. Where a human scammer might run [three to five targeted operations per day](https://www.msn.com/en-us/technology/artificial-intelligence/ai-finally-delivers-those-elusive-productivity-gains-for-cybercriminals/ar-AA1YKyCk), an autonomous agent can run hundreds, testing different approaches and learning which work in real time.

Stack those two numbers and the scale of the shift comes into focus. A human operator averaging four fraud operations per day switches to an agent running 200 or more. That is a 50x throughput increase. Multiply by the 4.5x profitability premium that agentic schemes command, and a single criminal actor now generates roughly [225 times the fraud output](https://www.msn.com/en-us/technology/artificial-intelligence/ai-finally-delivers-those-elusive-productivity-gains-for-cybercriminals/ar-AA1YKyCk) they did eighteen months ago, based on the calculations in this analysis. No new recruits, no expanded infrastructure, no additional risk of a human accomplice flipping to law enforcement. Just one person, one agent, and a credential list.

That 225x figure does not appear in any of the source reports; it falls out of combining them.
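Because the multiplier is derived rather than reported, it is worth seeing how sensitive it is to the inputs. The sketch below assumes the article's working figure of 200 agent operations per day and varies the human baseline across the cited three-to-five range; the function name and defaults are illustrative.

```python
PROFIT_PREMIUM = 4.5       # profitability premium for agentic schemes, as cited above
AGENT_OPS_PER_DAY = 200    # this article's working figure for "hundreds" of operations


def force_multiplier(human_ops_per_day: float,
                     agent_ops_per_day: float = AGENT_OPS_PER_DAY,
                     premium: float = PROFIT_PREMIUM) -> float:
    """Throughput ratio (agent vs. human) scaled by the profitability premium."""
    return (agent_ops_per_day / human_ops_per_day) * premium


# Vary the human baseline across the three-to-five range quoted in the text.
multipliers = {human_ops: force_multiplier(human_ops) for human_ops in (3, 4, 5)}
for human_ops, multiplier in multipliers.items():
    print(f"{human_ops} human ops/day -> {multiplier:.0f}x")
```

Under these assumptions the multiplier lands between roughly 180x and 300x, with 225x at the midpoint baseline of four operations per day.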

| Criminal Profile | 2024 Annual Fraud Value (Manual) | 2026 Annual Fraud Value (Agent-Assisted) | Note |
|---|---|---|---|
| Solo operator, 4 ops/day | $134 × 4 × 365 = $196K | $134 × 900 × 365 = $44M | 225× increase |
| 10-person ring | $1.96M | $440M | Same multiplier, 10× base |
| 100 rings globally | $196M | $44B | ~10% of Interpol’s $442B total |
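The table rows can be reproduced with a short script. This sketch assumes each operation monetizes one credential at the $134 average and that a ring is ten operators, as the table implies; all names are illustrative.

```python
VALUE_PER_CREDENTIAL = 134    # USD, the rounded average derived earlier
HUMAN_OPS_PER_DAY = 4
FORCE_MULTIPLIER = 225        # 50x throughput times 4.5x profitability
DAYS_PER_YEAR = 365
INTERPOL_TOTAL_USD = 442e9
OPERATORS_PER_RING = 10       # assumption implied by the "10-person ring" row


def annual_value(ops_per_day: float, operators: int = 1) -> float:
    """Annual fraud value if every operation yields the average credential value."""
    return VALUE_PER_CREDENTIAL * ops_per_day * DAYS_PER_YEAR * operators


profiles = {"Solo operator": 1, "10-person ring": 10, "100 rings": 100 * OPERATORS_PER_RING}
rows = {}
for name, operators in profiles.items():
    manual = annual_value(HUMAN_OPS_PER_DAY, operators)
    agent = annual_value(HUMAN_OPS_PER_DAY * FORCE_MULTIPLIER, operators)
    rows[name] = (manual, agent)
    print(f"{name:<16} manual ${manual:>16,.0f}   agent ${agent:>18,.0f}")

# Share of Interpol's total covered by 100 rings, and rings needed to match it.
share_100_rings = rows["100 rings"][1] / INTERPOL_TOTAL_USD
rings_to_match_total = INTERPOL_TOTAL_USD / rows["10-person ring"][1]
print(f"100 rings cover {share_100_rings:.1%} of the total")
print(f"Rings needed to match the total: {rings_to_match_total:,.0f}")
```

On these inputs, one hundred rings account for about a tenth of the $442 billion figure, and roughly a thousand rings would be needed to match it.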

One hundred criminal rings, a fraction of Europol’s estimated active organized cybercrime networks, operating with agentic tooling could generate roughly a tenth of the entire global fraud figure in a single year, and about a thousand such rings would match it. The 225x multiplier means the $442 billion number, as staggering as it is, may undercount 2026 exposure. The force multiplier applies to every criminal operator who adopts the tooling, and adoption is still accelerating along the 1,500% curve.

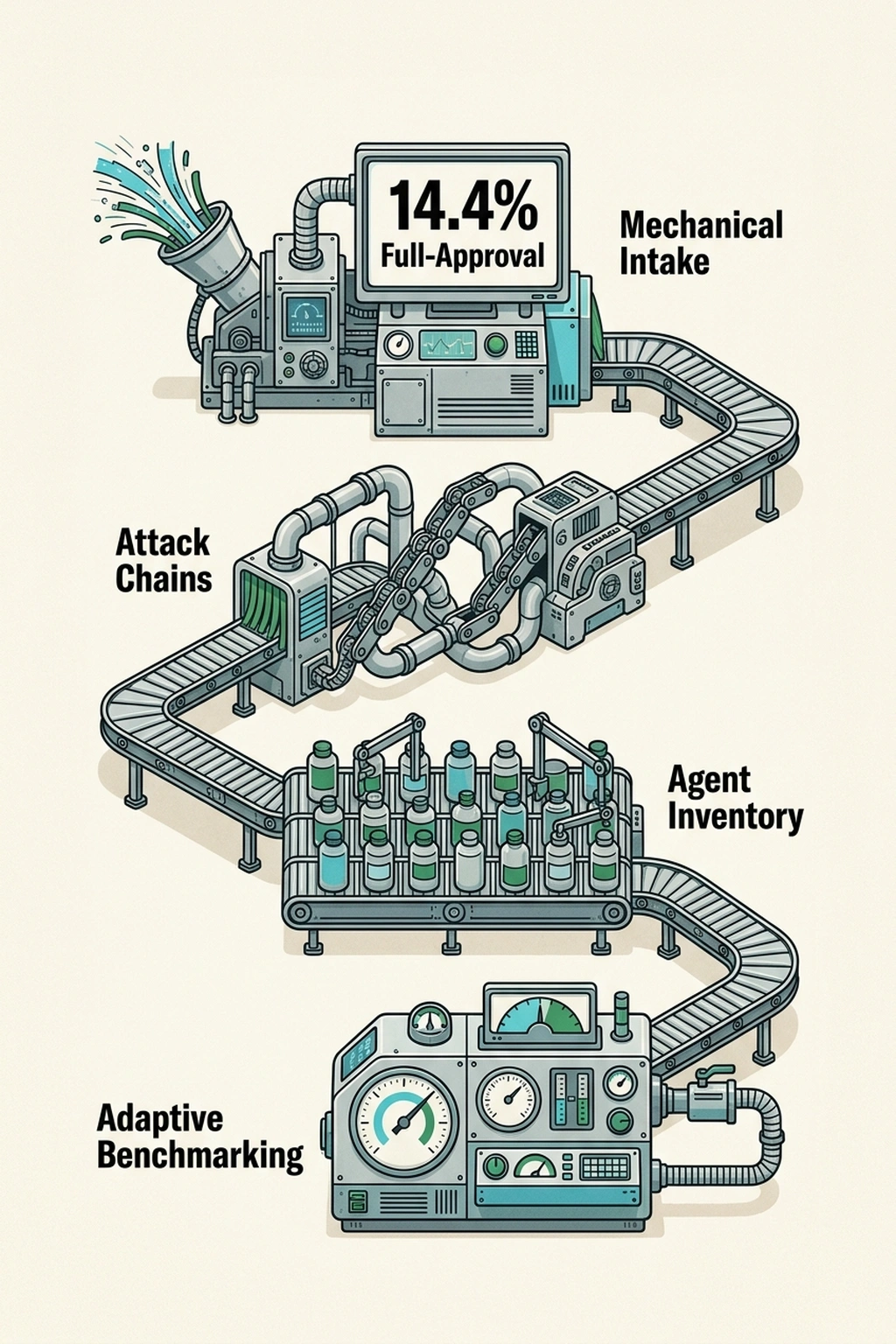

How exposed is the average enterprise? 88% of organizations reported AI agent security incidents in the past year, according to Beam AI’s 2026 enterprise survey. Only 14.4% deploy AI agents with full security approval. [That 74-point gap](https://beam.ai/agentic-insights/ai-agent-security-in-2026-the-risks-most-enterprises-still-ignore) between deployment speed and governance is the exact opening that agentic AI fraud exploits, and the same gap is killing legitimate agent projects from the inside.

The Governance Gap That Feeds Both Sides

Here is the part that should unsettle every CISO more than the criminal threat data: the reason agentic fraud works is the same reason enterprise AI agents fail. Not similar. Not analogous. The same reason.

Gartner predicts 40% of autonomous agent projects will be canceled by 2027 because of governance gaps, and only 5% of custom enterprise AI tools will ever reach production. Inside a bank, a legitimate AI agent deployed for customer service crashes because it escalates its own permissions, accesses data outside its scope, or triggers a downstream system it was never designed to touch. The project gets killed. The governance failure is labeled an internal engineering problem.

Outside the same bank, a criminal AI agent exploits that identical architectural reality , ungoverned pathways between systems, no reliable identity layer for non-human actors, no kill switch that works once an agent has spawned sub-processes. The criminal agent does not crash. It succeeds. The governance failure is labeled a security breach.

Same gap. Same ungoverned pathways. One side calls it a failed project. The other side calls it $442 billion.

That is the Governance Gap Paradox: the organizational inability to govern AI agents doesn’t just kill internal projects at a [95% failure rate](https://www.fastcompany.com/91487016/snowflake-thinks-ai-coding-agents-are-solving-the-wrong-problem). It creates the unmonitored pathways that criminal agents traverse to extract billions. Every internal agent that crashes into an ungoverned system boundary is, in effect, mapping the same terrain that criminal agents are exploiting in production.

Rahul Parwani, Head of Product, AI Security at the Cloud Security Alliance, described the architectural shift in a March 2026 analysis: “Today’s AI systems do not simply respond; they act. They query databases, trigger workflows, modify records, send communications, and coordinate across production systems.” That description applies identically to a bank’s customer service agent and to the criminal agent probing the same bank at 3 AM.

This is why prompt-level guardrails, the standard defense against conversational AI risks, are structurally irrelevant here. Guardrails sanitize inputs and filter outputs for a system that talks. Agentic AI doesn’t just talk.

It executes. It moves money. It authenticates itself to downstream APIs. Filtering its words while it wires funds is like spell-checking a forged signature.

The Strongest Objection

Sridhar Ramaswamy, CEO of Snowflake, offers the most credible counterargument. In a Fast Company interview, he argued that enterprises are “over-indexing on agent speed while under-investing in governed data layers.” Fix governance first, deploy agents second.

Ramaswamy has a point. The agent identity gaps that make kill switches unreliable represent a genuine architectural problem. Throwing more AI at an ungoverned environment just multiplies the exposure. A predicted 40% cancellation rate suggests governance is load-bearing infrastructure, not optional decoration.

But this argument has a fatal timing flaw. Criminal agents are not pausing for enterprise governance roadmaps. An institution that delays defensive agent deployment to “get governance right” ends up defending at human speed against machine-speed attacks. At a 225x force multiplier per criminal operator, the math of delay is brutal: every quarter spent building governance frameworks without deploying countermeasures is a quarter where the attacker’s throughput advantage compounds against a static defense. That’s not prudent caution; that’s bringing a compliance committee to a gunfight.

Earlier generations of banking AI (small language models running on-premise for pattern detection) worked because scope was narrow and governance was implicit in the design. Anomaly detection on structured transaction data is a bounded problem. Agentic AI operates across unstructured environments, selecting tools at runtime, spawning sub-agents, and chaining actions across system boundaries. Governance for that kind of system doesn’t just need to be stronger; it needs to be architecturally different. It requires runtime identity for every non-human actor, continuous scope enforcement rather than upfront permission grants, and the ability to trace an agent’s full decision chain after the fact. This analysis argues that governance layer doesn’t exist yet: not at any vendor, not at any bank.
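To make those three requirements concrete, here is a toy sketch of what per-call scope checks with an audit trail might look like. It is not a description of any vendor's product; the class names, scope strings, and agent ID are all hypothetical, and a production system would also need authentication, sub-agent lineage, and tamper-resistant logging.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """Runtime identity for a non-human actor (hypothetical structure)."""
    agent_id: str
    allowed_scopes: frozenset


@dataclass(frozen=True)
class AuditEntry:
    timestamp: datetime
    agent_id: str
    scope: str
    allowed: bool


@dataclass
class ScopeEnforcer:
    """Checks scope on every action rather than once at deployment time."""
    trail: list = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, scope: str) -> bool:
        allowed = scope in agent.allowed_scopes
        # Record every decision, allowed or denied, so the chain can be replayed.
        self.trail.append(
            AuditEntry(datetime.now(timezone.utc), agent.agent_id, scope, allowed)
        )
        return allowed

    def decision_chain(self, agent_id: str) -> list:
        """Return the ordered list of decisions recorded for one agent."""
        return [entry for entry in self.trail if entry.agent_id == agent_id]


# Usage: a customer-service agent that may read accounts but not wire funds.
enforcer = ScopeEnforcer()
support_agent = AgentIdentity("support-agent-01", frozenset({"accounts:read"}))
can_read = enforcer.authorize(support_agent, "accounts:read")
can_wire = enforcer.authorize(support_agent, "payments:wire")
chain = enforcer.decision_chain("support-agent-01")
```

The design choice worth noting is that denial is logged as carefully as approval: a denied `payments:wire` attempt from a customer-service agent is exactly the kind of signal the article argues is going unrecorded today.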

Cost of inaction: If Interpol’s $442 billion in 2025 losses is distributed across an estimated 30,000 banks globally, the average institution absorbs roughly $14.7 million per year in direct and indirect fraud losses. With the 225x force multiplier still accelerating through 2026, institutions that delay defensive agent deployment by even two quarters face an estimated 30-50% increase in exposure, approximately $4.4 million in additional losses per quarter of delay. The governance investment that prevents this costs a fraction of one quarter’s incremental exposure.
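The per-bank figures can be reproduced as follows. This sketch assumes an even split of losses across 30,000 banks, which is this article's simplification rather than anything Interpol published, and it treats the 30-50% range as a share of the annual average; the $4.4 million figure appears to correspond to the low end of that range.

```python
INTERPOL_TOTAL_USD = 442e9
ESTIMATED_BANKS = 30_000            # the article's estimate of banks worldwide
EXPOSURE_INCREASE = (0.30, 0.50)    # estimated range from delaying deployment

# Even split across institutions (a simplification; real losses are concentrated).
average_loss_per_bank = INTERPOL_TOTAL_USD / ESTIMATED_BANKS

added_low = average_loss_per_bank * EXPOSURE_INCREASE[0]
added_high = average_loss_per_bank * EXPOSURE_INCREASE[1]

print(f"Average annual loss per bank: ${average_loss_per_bank / 1e6:.1f}M")
print(f"Added exposure range: ${added_low / 1e6:.1f}M to ${added_high / 1e6:.1f}M")
```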

Three Moves Before Friday

Enough analysis. Three things a fraud team should do this week:

1. Map attack chains, not individual events. Current SIEM rules fire on isolated anomalies. Agentic fraud chains span multiple systems in sequence (credential probe, deepfake generation, social engineering call, wire transfer), and individual steps may look normal. If the detection system can’t correlate a credential test at 2:14 AM with a deepfake call at 2:17 AM and a wire at 2:22 AM as a single operation, it will miss the attack.

2. Count every agent touching financial data. If the answer to “how many AI agents access production data right now?” is “not sure,” that uncertainty is the attack surface. That 14.4% full-approval figure means most organizations cannot reliably distinguish their own agents from external ones. Inventory first, govern second.

3. Benchmark detection against adaptive attackers. If the last penetration test used scripted phishing scenarios, the results are already stale. Agentic attacks modify approach mid-interaction: they notice when a victim hesitates and switch from urgency to empathy in real time. Any fraud detection benchmark that doesn’t include at least two tactical pivots per test scenario is measuring the wrong capability.
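The first move, correlating events into chains, can be sketched as a time-windowed sequence match. This is a minimal illustration, not a SIEM rule: the event kinds, the `entity` join key, and the 15-minute window are all assumptions, and real telemetry rarely shares a clean common identifier across systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    timestamp: datetime
    entity: str   # hypothetical join key, e.g. the targeted account
    kind: str


# Ordered steps that together form one operation (illustrative labels).
CHAIN = ("credential_test", "deepfake_call", "wire_transfer")


def find_chains(events, window=timedelta(minutes=15)):
    """Return (entity, matched_events) where CHAIN occurs in order within the window."""
    by_entity = {}
    for event in sorted(events, key=lambda e: e.timestamp):
        by_entity.setdefault(event.entity, []).append(event)

    hits = []
    for entity, entity_events in by_entity.items():
        for i, start in enumerate(entity_events):
            if start.kind != CHAIN[0]:
                continue
            matched = [start]
            for later in entity_events[i + 1:]:
                if later.timestamp - start.timestamp > window:
                    break  # outside the correlation window
                if later.kind == CHAIN[len(matched)]:
                    matched.append(later)
                    if len(matched) == len(CHAIN):
                        break
            if len(matched) == len(CHAIN):
                hits.append((entity, matched))
                break  # report one chain per entity
    return hits


# Usage with the timestamps from the example above (the date is arbitrary).
day = datetime(2026, 3, 16)
events = [
    Event(day.replace(hour=2, minute=14), "acct-1", "credential_test"),
    Event(day.replace(hour=2, minute=17), "acct-1", "deepfake_call"),
    Event(day.replace(hour=2, minute=22), "acct-1", "wire_transfer"),
    Event(day.replace(hour=2, minute=14), "acct-2", "credential_test"),
    Event(day.replace(hour=9, minute=0), "acct-2", "wire_transfer"),
]
hits = find_chains(events)
```

Here only `acct-1` is flagged: each of its three events could pass an isolated anomaly check, but together they complete the chain within eight minutes.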

A bank that ignores the Governance Gap Paradox for six months is betting that criminal agents won’t discover the same unmonitored system paths that legitimate enterprise agents keep crashing into. With Wall Street firms cutting staff during record revenue, fewer human eyes make that bet worse with every quarterly review. At a 225x force multiplier per criminal operator, with each unaudited agent pathway representing roughly [$134 in potential fraud yield per credential](https://www.cnbctv18.com/business/finance/financial-frauds-cost-global-economy-over-usd-442-bn-in-2025-risk-in-2026-high-interpol-ws-l-19869534.htm), and 3.3 billion credentials already in play, the exposure is not theoretical. It is arithmetic.

This analysis anticipates the first publicly attributed incident involving agentic fraud at a top-20 global bank before Q4 2026. Not because defensive tools don’t exist, but because the governance infrastructure to deploy them won’t be production-ready in time. Criminals already have a 1,500% head start, and the governance layer that could close the gap hasn’t been built yet.

References

- Flashpoint 2026 Global Threat Intelligence Report: 1,500% agentic AI cybercrime surge, Josh Lefkowitz on machine-speed operations

- Interpol Global Financial Fraud Assessment: Official Interpol report on $442 billion in 2025 global fraud losses

- CNBCTV18, Interpol Fraud Assessment Coverage: $442 billion in 2025 global fraud losses

- HSToday, 2026 GTIR Analysis: 3.3 billion compromised credentials, 24-hour zero-day exploitation timeline

- AI Cybercrime Productivity Analysis: 4.5x profitability multiplier for AI-powered fraud

- Fast Company, Snowflake CEO on Enterprise AI Governance: Gartner’s 40% cancellation prediction, Sridhar Ramaswamy on governance gaps

- Cloud Security Alliance, Guardrails to Governance: Rahul Parwani on the shift from conversational to operational AI security

- Beam AI, Enterprise Agent Security Survey: 88% AI agent incident rate, 14.4% full security approval

- Palo Alto Networks, Agentic AI Security Crisis: September 2025 board-level security warning