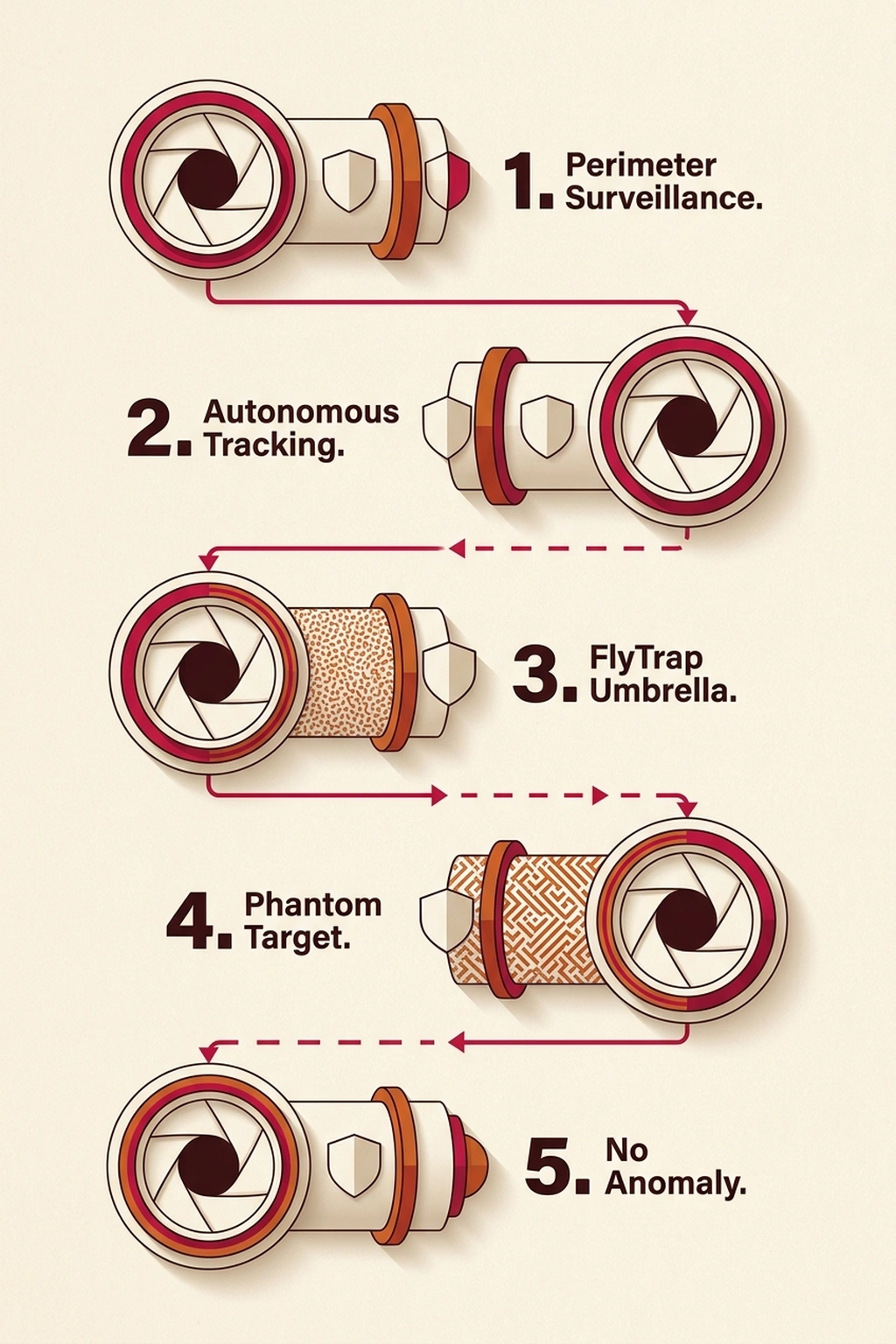

Before December 22, 2025, a UC Irvine research team completed a series of controlled experiments that should concern every agency relying on autonomous surveillance drones. Using an ordinary umbrella printed with a specifically designed visual pattern, the researchers caused autonomous drones to lose lock on human targets and fly confidently in the wrong direction. The research team presented the findings at the Network and Distributed System Security Symposium (NDSS) in San Diego, one of the top-tier academic venues for security research. The technique, dubbed “FlyTrap” by the researchers, is not a theoretical proof-of-concept. They demonstrated it successfully against three commercial drone platforms available to any buyer, including models deployed by law enforcement agencies and border patrol units. Autonomous drone security vulnerabilities of this type have no quick software fix, and the attack materials cost approximately the same as a restaurant meal.

How FlyTrap Exploits Neural Network Tracking

Modern autonomous drones rely on convolutional neural network (CNN)-based visual tracking to follow human targets. A camera feed enters the neural network, which identifies a human figure, estimates relative distance and bearing, and issues real-time flight commands to keep the subject centered in frame. The drone’s computer logic interprets what its camera captures and makes autonomous flight decisions based on that interpretation, with no human pilot required. Manufacturers market this capability under branding like “ActiveTrack” or “Follow Me,” and it has become a standard feature across consumer and prosumer drone platforms.
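The perceive-then-steer loop described above can be sketched in a few lines. This is a minimal illustration, not any manufacturer's implementation: the `Detection` type, the `follow_command` function, the gains, and the use of bounding-box height as a stand-in for distance are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical tracker output for one camera frame."""
    center_x: float  # horizontal box centre, normalised to [0, 1]
    height: float    # box height as a fraction of frame height


def follow_command(det, target_height=0.4, yaw_gain=1.0, forward_gain=2.0):
    """Turn one detection into (yaw_rate, forward_speed).

    Assumption: apparent box height is the only distance cue. A box
    smaller than target_height is read as "subject too far away",
    so the drone is told to move forward.
    """
    # Steer so the subject returns to the horizontal centre of the frame.
    yaw_rate = yaw_gain * (det.center_x - 0.5)
    # Close or open the gap until the box reaches the desired size.
    forward_speed = forward_gain * (target_height - det.height)
    return yaw_rate, forward_speed
```

The point of the sketch is that the flight command depends entirely on what the tracker reports. Nothing in the loop checks whether the reported box corresponds to a real person at the implied distance.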

FlyTrap attacks this interpretation layer directly rather than targeting the drone’s communications, GPS signal, or flight controller.

An adversarial pattern printed on the umbrella causes the drone’s neural network to interpret the static image as a person moving farther away, even when the actual target is stationary or walking in the opposite direction. The tracking algorithm receives visual input that registers as a legitimate target at increasing distance, and the drone responds by flying toward the false stimulus. The umbrella trick fools AI target-tracking systems by exploiting a fundamental property of how CNNs process visual data: the networks learn statistical associations between specific pixel patterns and spatial depth cues during training, and an adversarial pattern can present false cues that the system cannot distinguish from genuine visual information.
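A toy simulation shows why a false "moving away" cue matters to a follow controller. It assumes a simple proportional controller and a pinhole-style relation between distance and apparent size; the functions `honest`, `spoofed`, and `simulate` and all constants are hypothetical and do not reproduce the researchers' pattern or any real tracker.

```python
def honest(distance_m):
    """Apparent height of a real subject: shrinks as distance grows."""
    return min(1.0, 2.0 / distance_m)


def spoofed(distance_m):
    """Stand-in for an adversarial pattern: always looks far away,
    no matter how close the drone actually is."""
    return 0.1


def simulate(perceived_height, start_distance=10.0, steps=500, dt=0.1,
             target_height=0.4, forward_gain=5.0, min_distance=0.5):
    """Return the drone-to-object distance after the run.

    The object never moves. The drone flies forward whenever the
    perceived height is below target_height and backs off when above.
    """
    distance = start_distance
    for _ in range(steps):
        speed = forward_gain * (target_height - perceived_height(distance))
        # Clamp at min_distance to represent reaching the object.
        distance = max(min_distance, distance - speed * dt)
    return distance
```

With the honest cue the drone settles near 5 m, where the apparent height equals the target. With the spoofed cue the error never closes, so the drone keeps advancing until it hits the clamp, even though the object has not moved.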

What separates FlyTrap from conventional electronic countermeasures, and makes it considerably more dangerous from a defense planning perspective, is its physical simplicity. It requires no radio frequency jamming equipment, no specialized technical knowledge, and no access to the drone’s communication protocols, encryption keys, or flight controller firmware. A printed pattern on an off-the-shelf umbrella, with materials costing roughly $20, defeats tracking systems that cost thousands of dollars to develop and deploy. Unlike electronic warfare tools that produce detectable RF signatures and can trigger countermeasure alerts, FlyTrap produces no signal detectable by the drone’s telemetry systems. From the operator’s monitoring station, the drone appears to be functioning normally: it tracks a target with full confidence while actually pursuing a phantom.

Three Drones, Two Manufacturers, One Failure Mode

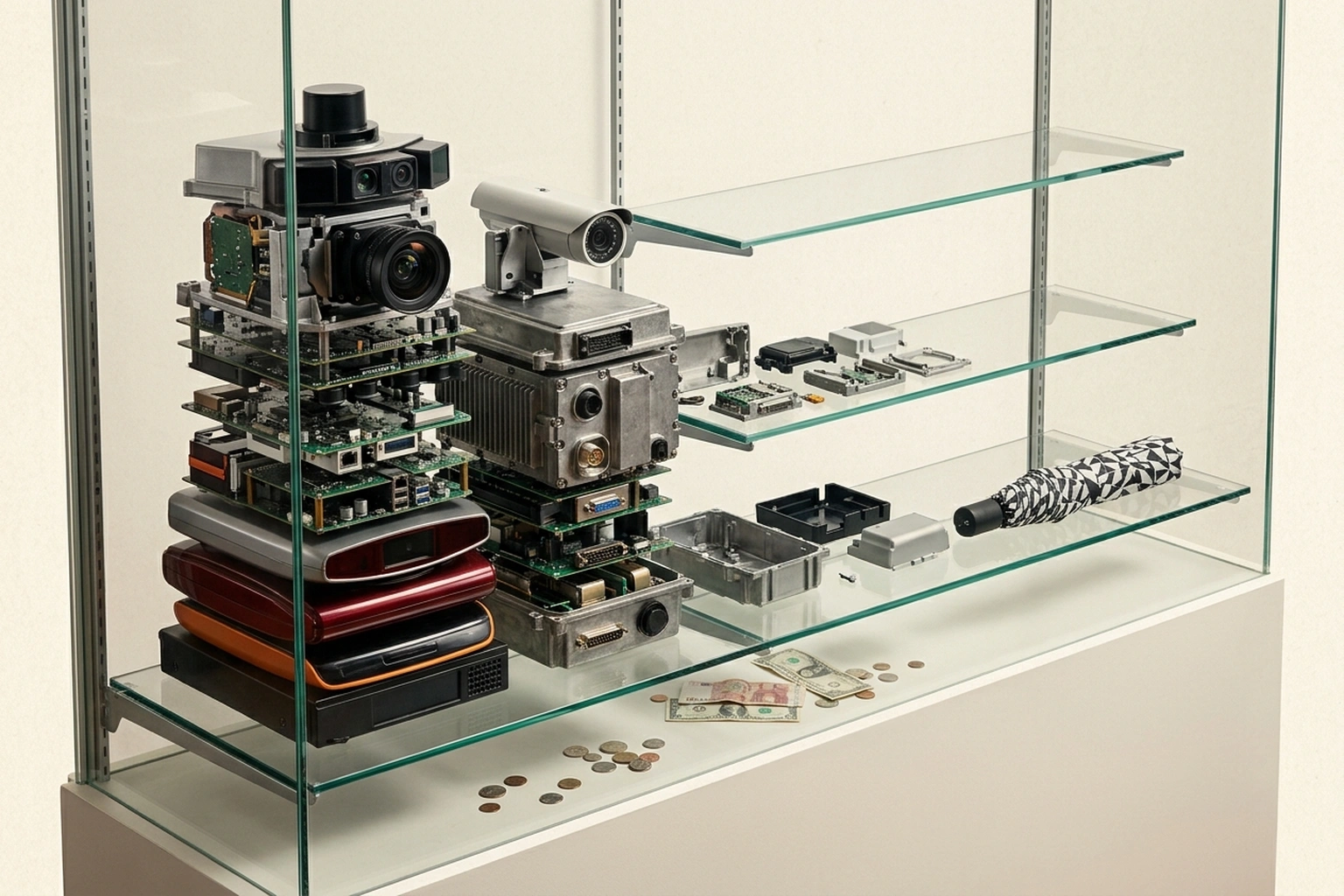

The UC Irvine team tested FlyTrap against three commercially available platforms: the DJI Mini 4 Pro, the DJI Neo, and the HoverAir X1. The researchers deliberately selected drones from two different manufacturers (DJI and HoverAir), demonstrating that the vulnerability exists at the architectural level of neural network tracking rather than in any single manufacturer’s software implementation. If the attack only worked against one vendor’s tracking algorithm, a firmware update from that vendor could resolve the issue. Cross-platform success indicates the problem resides in the shared approach to visual tracking that the entire commercial drone industry has adopted.

The adversarial umbrella pattern deceived all three platforms. Each drone lost effective tracking of the actual target and responded to the false visual stimulus embedded in the printed pattern. Consistent results across different hardware platforms, different camera sensor specifications, different onboard processing chips, and different manufacturer software stacks point to a vulnerability class inherent to the tracking approach rather than a product-specific bug that any single firmware update could resolve.

DJI has publicly stated that its products are not intended for military use. That legal disclaimer does not change the operational reality. The FlyTrap method can disable autonomous AI drones regardless of what the manufacturer’s terms of service specify about intended use.

Government Reliance on Vulnerable Consumer Hardware

Law enforcement and border security agencies routinely deploy commercial drones with autonomous tracking features for suspect monitoring, perimeter surveillance, and search-and-rescue operations.

Border patrol operations present a particularly concrete risk scenario. An autonomous surveillance drone assigned to track a person crossing terrain would lose its target entirely if that person deployed a FlyTrap-style umbrella. The drone would not report a tracking failure. It would continue flying with full confidence in a false direction, consuming battery life and operational time on a phantom target. The attack requires no electronic signature and produces no anomaly in the drone’s telemetry data, making post-incident analysis nearly impossible without reviewing raw camera footage frame by frame. An operator reviewing flight logs would see a normal tracking mission that simply ended with target loss attributed to range or obstruction, not adversarial manipulation.

Military drone systems typically employ more sophisticated tracking architectures, including multi-sensor fusion that combines visual, infrared, and radar tracking with hardened software stacks resistant to known adversarial techniques. But operational trends work against this advantage. Budget constraints, rapid deployment timelines, and commercial off-the-shelf (COTS) procurement policies push government agencies toward adapting consumer-grade drones rather than funding purpose-built platforms. Each consumer drone pressed into government service inherits every security flaw that platform carries, including susceptibility to FlyTrap-class adversarial attacks that the manufacturer never anticipated defending against.

Search-and-rescue (SAR) operations raise a different category of concern. If a printed pattern can misdirect autonomous tracking drones used to locate missing persons in wilderness terrain, the consequences extend beyond surveillance failure to potentially lethal operational delays. Rescue drones that follow a false visual trail while a subject remains unlocated in deteriorating weather represent a failure mode that no current drone manufacturer has publicly addressed. Unlike surveillance scenarios, where a missed target creates an intelligence gap, SAR failures create direct risk to human life, a liability exposure that procurement officers have not yet been forced to evaluate against adversarial threat models.

Why Software Patches Cannot Solve This

Adversarial attacks against neural networks do not constitute bugs in the traditional software sense. They exploit fundamental mathematical properties of how trained models process visual information. A CNN trained to recognize human figures learns statistical correlations between pixel arrangements and output predictions. FlyTrap patterns present input that falls within the model’s learned distribution while encoding entirely different spatial information: the visual equivalent of a grammatically correct sentence that means the opposite of what it appears to say.
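The general principle can be seen in a model far simpler than a CNN. The sketch below applies a gradient-sign perturbation (the idea behind the well-known fast gradient sign method) to a hypothetical linear scorer. It is a generic illustration of adversarial inputs, not the FlyTrap pattern-generation procedure, and the weights and inputs are made up.

```python
def score(weights, x, bias=0.0):
    """Linear 'detector': a positive score means 'target present'."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def sign(v):
    """Return -1, 0, or 1 according to the sign of v."""
    return (v > 0) - (v < 0)


def gradient_sign_attack(weights, x, epsilon):
    """Shift every input value by at most epsilon to lower the score.

    For a linear score the gradient with respect to the input is the
    weight vector itself, so stepping against sign(w) is the most
    damaging move available under a per-value budget of epsilon.
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]


# Made-up 100-value input and weights, for illustration only.
weights = [0.5, -0.5] * 50
x = [0.55, 0.45] * 50
x_adv = gradient_sign_attack(weights, x, epsilon=0.1)
print(score(weights, x) > 0, score(weights, x_adv) < 0)
```

No single input value moves by more than 0.1, yet the sum of many small, aligned shifts flips the decision. The model is doing exactly what it was trained to do, which is why this behaves like a property of the model rather than a bug.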

Retraining the model to recognize specific adversarial patterns provides only temporary relief. Generating new adversarial patterns requires minimal computational resources and only a basic open-source machine learning toolkit, while retraining neural networks and deploying updated firmware across distributed commercial drone fleets is expensive and slow. Each new adversarial pattern demands a full retraining cycle, validation testing, and a coordinated firmware update. An attacker needs only one new pattern, while a defender must anticipate all possible patterns, a mathematical asymmetry that structurally favors offense.

Adversarial training, a technique that incorporates known attack patterns into the training dataset to build model resistance, offers partial mitigation but introduces well-documented performance trade-offs that complicate deployment decisions. Models hardened against adversarial inputs typically show degraded accuracy on normal tracking tasks.

Multi-sensor fusion offers the most promising architectural countermeasure available with current technology. Combining visual neural network tracking with non-visual sensing modalities (LIDAR, acoustic tracking, thermal imaging, or radio-based localization) raises the attack cost significantly and forces adversaries to defeat multiple independent sensor systems simultaneously. An umbrella pattern that fools a camera cannot simultaneously fool a LIDAR range sensor or a thermal signature detector. However, adding multiple sensor modalities to consumer-priced drones substantially increases unit cost, weight, and power consumption. Whether $500-$2,000 consumer drone price points can absorb the additional hardware burden remains an unsettled question.
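One simple form of fusion is a cross-check between two independent range estimates. The sketch below is a hedged illustration only: the function name `fused_range`, the 25% disagreement threshold, and the policy of falling back to the LIDAR reading are assumptions, not a description of any deployed system.

```python
def fused_range(camera_range_m, lidar_range_m, max_relative_gap=0.25):
    """Combine a vision-derived range with a LIDAR range.

    Returns (range_m, suspicious). When the two sensors disagree by
    more than max_relative_gap, the vision estimate is treated as
    untrustworthy and the physically measured range is used instead.
    """
    gap = abs(camera_range_m - lidar_range_m) / max(lidar_range_m, 1e-6)
    if gap > max_relative_gap:
        # The camera says one thing and the laser another: flag the
        # track so the controller or operator can react.
        return lidar_range_m, True
    # Sensors agree: average them to reduce noise.
    return 0.5 * (camera_range_m + lidar_range_m), False
```

For example, `fused_range(12.0, 3.0)` returns `(3.0, True)`: a camera that believes the target is 12 m away while the laser measures 3 m is exactly the mismatch a "looks farther than it is" pattern would produce. The check costs little in software. The expense lies in carrying the second sensor.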

Broader Implications for Drone Security

FlyTrap fits within a growing body of research demonstrating physical-world adversarial attacks against AI systems. Researchers have previously shown adversarial patches that mislead autonomous vehicle vision systems, defeat facial recognition cameras, and fool automated license plate readers. What FlyTrap adds to this evidence base is a specific combination: extreme attack simplicity, real-world effectiveness across multiple platforms, and direct government security implications.

NASA and the National Science Foundation funded the UC Irvine research, indicating that federal agencies recognize drone tracking security as a national interest concern. That funding also lends institutional weight to the findings: this is not a hobbyist demonstration but federally supported security research disclosed through proper academic channels at a leading security conference.

Earlier analysis of how AI-driven threats routinely outpace defensive responses maps the same dynamic in digital security domains: attack tooling scales faster than defense mechanisms can adapt. FlyTrap transplants that exact asymmetry from digital space into the physical world. A $20 umbrella defeats a $2,000 drone. Printing a new adversarial pattern takes hours; patching a neural network against it takes months. Deploying the attack requires zero technical expertise; deploying the fix requires coordinated firmware updates across distributed global fleets.

From a containment standpoint, physical-world adversarial attacks present fundamentally harder mitigation challenges than their digital counterparts. Software-based AI containment approaches work because digital environments can restrict permissions, filter inputs, and monitor outputs. A drone operating in open air has no equivalent containment layer, and operators cannot sandbox it against visual stimuli encountered in the field.

Publication at NDSS is likely to accelerate procurement policy discussions within government agencies that rely on autonomous drone tracking. Based on current trends, within 12 to 18 months, federal and state procurement offices could begin requiring adversarial robustness testing as a certification prerequisite for drones deployed in law enforcement and border security roles. Whether those requirements arrive before adversarial attack patterns become commercially available to organized criminal networks, smuggling operations, and hostile non-state actors could determine how much operational damage this vulnerability class inflicts before effective countermeasures reach deployed government fleets. An umbrella is easy to carry, easy to discard, and leaves no forensic evidence of adversarial intent.

Critics of this framing point out that controlled laboratory experiments and bounded field tests may significantly overstate real-world operational risk: adversarial patterns optimized against specific drone models under known lighting conditions and fixed camera angles may degrade rapidly when confronted with variable weather, oblique viewing angles, or the multi-drone coordination that sophisticated agencies already deploy as standard protocol. The strongest counterargument is that the actual attack surface for FlyTrap may be considerably narrower than a three-platform study suggests, and that agencies with layered surveillance architectures (combining drone tracking with ground units, fixed cameras, and human observers) would detect target loss through redundant channels long before a phantom-tracking failure caused meaningful operational harm. If that is true, the more urgent policy response may be enforcing existing best practices around surveillance redundancy rather than treating single-sensor autonomous tracking as a capability that should ever have been relied upon in isolation.

References

- UC Irvine researchers expose critical security vulnerability in autonomous drones. EurekAlert university press release detailing full experimental results, drone test platforms, FlyTrap mechanism, NDSS presentation, and NASA/NSF funding disclosure.

- What is the ‘FlyTrap’ Method, and How Can It Disable Autonomous AI Drones? Military.com coverage of defense and law enforcement implications, DJI military-use disclaimers, and government drone deployment context.

- Umbrella Trick Can Fool AI Target-Tracking Drones, UC Irvine. DRONELIFE reporting on adversarial pattern cost, attack mechanism details, and neural network vulnerability analysis.