The numbers arriving from Bloomberg on February 19 stopped making sense somewhere around the tenth zero. OpenAI is finalizing commitments for a funding round that will likely exceed $100 billion, at a valuation pushing $850 billion. For perspective, a valuation of $850 billion rivals that of Tesla, and the pre-money valuation of $730 billion alone underscores the record scale at play. This report examines OpenAI’s Stargate infrastructure investment in 2026.

SoftBank alone committed $30 billion, spread across three $10 billion installments throughout the year, a sum on the scale of NASA’s entire annual budget. Amazon’s potential $50 billion contribution would represent the largest single corporate investment in AI infrastructure to date, comparable to the cost of building 10 modern international airports. But the funding round, historic as it may be, tells only half the story. The capital exists to support something far larger: a $1.4 trillion infrastructure commitment that OpenAI CEO Sam Altman has described as necessary to build the Stargate project, a network of AI data centers that would draw roughly 1.5 times New York City’s entire electrical demand (see References below).

The $100 Billion Question: Where Does the Money Come From

According to Bloomberg’s “OpenAI Funding on Track to Top $100 Billion in Latest Round,” the investor composition in this round reads like a who’s who of the AI supply chain. SoftBank’s $30 billion commitment positions Masayoshi Son as Stargate’s financial lead, with the Japanese conglomerate holding “financial responsibility” for the project while OpenAI retains operational control. Amazon’s potential $50 billion stake, still under negotiation, would give the e-commerce giant exceptional access to OpenAI’s compute infrastructure for AWS customers. NVIDIA’s contribution, initially reported at $20 billion and now potentially reaching $30 billion, ensures its hardware remains central to OpenAI’s training and inference workloads.

Microsoft’s role appears deliberately muted. The Redmond giant has invested “low billions” in this round, a striking contrast to the $13 billion it poured into OpenAI between 2019 and 2024. The shift reflects a strategic recalibration: Microsoft has secured its Azure exclusivity agreement through 2030, but OpenAI’s infrastructure diversification, with deals spanning Nvidia, AMD, Cerebras, and Broadcom, reduces dependency on any single partner.

The funding structure itself carries risk. Multiple tranches spread across the year mean OpenAI won’t receive the full $100 billion upfront. Investor commitments remain subject to due diligence, market conditions, and, critically, OpenAI’s ability to demonstrate progress toward profitability. The company is projected to lose approximately $14 billion in 2026, according to Bloomberg analyst estimates, despite generating an estimated $13.1 billion in revenue. In this analysis, the path from cash incinerator to sustainable business remains uncertain.

Stargate: The $500 Billion Foundation

According to OpenAI’s “Announcing The Stargate Project,” Stargate, announced in January 2025, represents the largest private infrastructure investment in American history. The initial commitment: $500 billion over four years, with $100 billion deployed immediately to build data center campuses in Abilene, Texas. The project’s equity backers include SoftBank, OpenAI, Oracle, and MGX, an Abu Dhabi-based sovereign wealth fund.

The technical specifications defy easy comprehension. Each Stargate campus will consume multiple gigawatts of power, requiring dedicated nuclear facilities and renewable energy contracts spanning decades. Oracle, Nvidia, and OpenAI will collaborate on the computing systems, while Microsoft continues its Azure partnership as a “consumption layer” rather than an exclusive infrastructure provider.

The geopolitical framing is explicit. OpenAI’s announcement described Stargate as infrastructure that would “secure American leadership in AI.” The Texas locations weren’t accidental: land there remains cheap, power is available, and local governments have offered aggressive tax incentives.

The $1.4 Trillion Reality Check

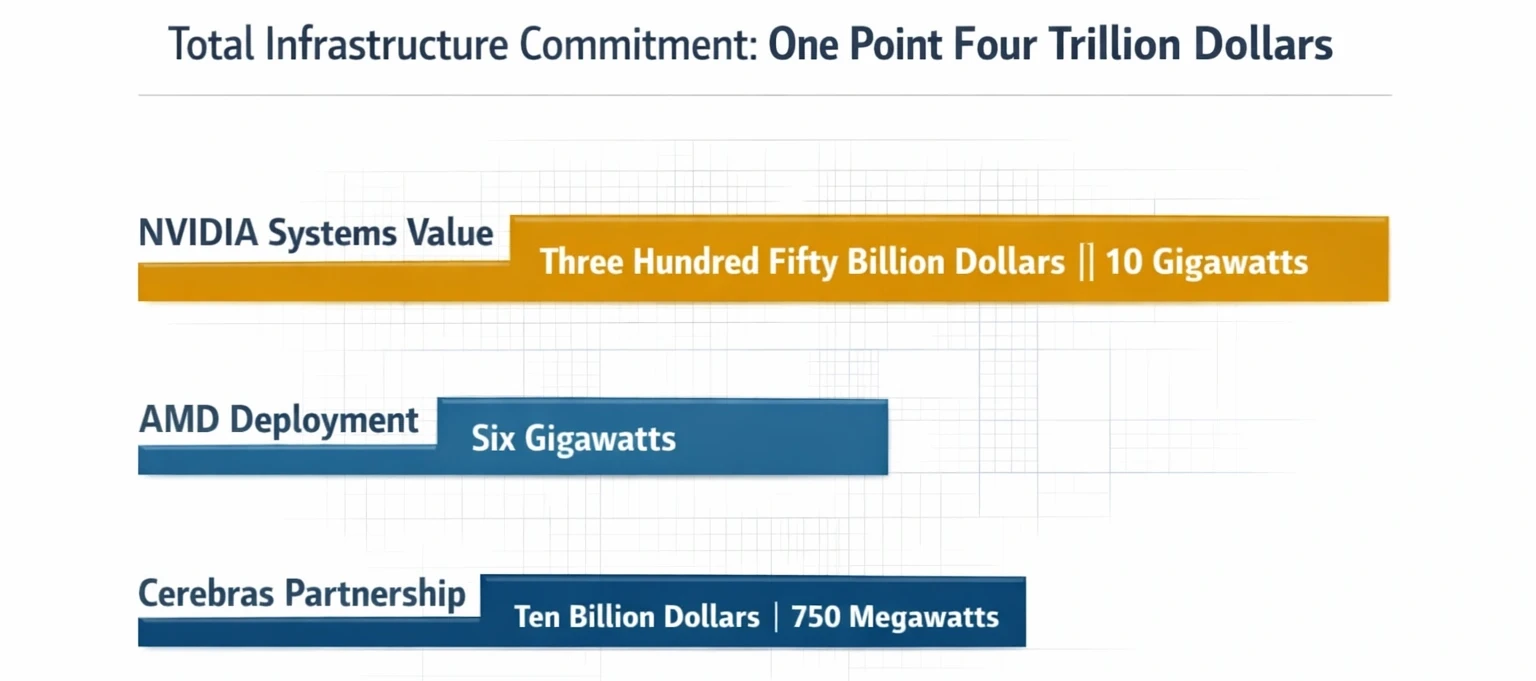

According to Forbes’ “Why OpenAI’s AI Data Center Buildout Faces A 2026 Reality Check,” the figures at this scale become genuinely staggering. Industry analysts estimate the combined obligations, covering Stargate and separate agreements for Nvidia GPUs, AMD accelerators, Cerebras systems, and custom Broadcom chips, at approximately $1.4 trillion over the next eight years, according to analysis from Tomasz Tunguz. That figure includes:

- NVIDIA systems: At least 10 gigawatts of capacity, valued at an estimated $350 billion based on industry benchmarks of $35 billion per gigawatt

- Cerebras partnership: $10 billion for 750 megawatts of wafer-scale compute, delivering low-latency inference from 2026 through 2028

- AMD agreement: A six-gigawatt deployment of Instinct AI GPUs beginning in the second half of 2026

- Broadcom collaboration: 10 gigawatts of custom AI accelerators designed by OpenAI and developed in partnership with Broadcom, with deployment starting in late 2026
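
The arithmetic behind the list above can be checked with a few lines of Python. This is a minimal sketch that uses only the figures quoted in the bullets. The function and variable names are illustrative, and applying one cost benchmark to a whole deployment is the article’s simplification, not a disclosed contract term.

```python
# Figures quoted in the list above (USD billions and gigawatts).
NVIDIA_GW = 10.0
BENCHMARK_USD_B_PER_GW = 35.0   # industry benchmark cited in the text
CEREBRAS_USD_B = 10.0
CEREBRAS_GW = 0.75              # 750 megawatts
AMD_GW = 6.0
BROADCOM_GW = 10.0


def implied_cost_usd_b(gigawatts: float, usd_b_per_gw: float) -> float:
    """Total cost implied by a flat per-gigawatt benchmark."""
    return gigawatts * usd_b_per_gw


def cost_per_gw(total_usd_b: float, gigawatts: float) -> float:
    """Per-gigawatt cost implied by a deal's headline value."""
    return total_usd_b / gigawatts


nvidia_total = implied_cost_usd_b(NVIDIA_GW, BENCHMARK_USD_B_PER_GW)
cerebras_per_gw = cost_per_gw(CEREBRAS_USD_B, CEREBRAS_GW)
total_gw = NVIDIA_GW + CEREBRAS_GW + AMD_GW + BROADCOM_GW

print(f"Nvidia implied total: ${nvidia_total:.0f}B")
print(f"Cerebras implied cost: ${cerebras_per_gw:.1f}B per GW")
print(f"Capacity across the four deals: {total_gw:.2f} GW")
```

On these inputs the Cerebras deal works out to a much lower implied cost per gigawatt than the Nvidia benchmark. The two numbers may not be directly comparable, since the scope of what each headline value covers is not stated in the sources.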

According to TechCrunch’s “OpenAI signs deal, worth $10B, for compute from Cerebras,” the power requirements alone represent an existential challenge. Ten gigawatts equals roughly 15 times the electricity consumption of Microsoft’s entire global data center footprint as of 2024. It exceeds New York City’s peak demand by 50%. Building that capacity requires not just chips and servers but transmission lines, substations, and generation facilities that take years to permit and construct.

The financing assumptions underlying these commitments are equally aggressive. OpenAI’s revenue grew approximately 6.5x in two years, from an estimated $2 billion in 2023 to roughly $13.1 billion in 2025, according to Bloomberg reporting. But maintaining that growth rate while simultaneously building the largest computing infrastructure ever constructed by a private company requires capital deployment at a pace no technology firm has ever attempted.
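
To put the growth claim in annual terms, the sketch below converts the two revenue estimates quoted above into a growth multiple and a compound annual growth rate. The helper names are illustrative, and the inputs are the article’s estimates rather than audited figures.

```python
def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate over a whole number of years."""
    return (end / start) ** (1 / years) - 1


REVENUE_2023_USD_B = 2.0    # estimate quoted in the text
REVENUE_2025_USD_B = 13.1   # estimate quoted in the text

multiple = growth_multiple(REVENUE_2023_USD_B, REVENUE_2025_USD_B)
annual_rate = cagr(REVENUE_2023_USD_B, REVENUE_2025_USD_B, years=2)

print(f"Growth multiple: {multiple:.2f}x")
print(f"Implied annual growth: {annual_rate:.0%}")
```

A 6.5x multiple over two years implies revenue growing by more than 2.5x each year, which is the pace the paragraph above describes as hard to sustain.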

Why the Infrastructure Race Matters

According to Tomasz Tunguz’s “OpenAI’s $1 Trillion Infrastructure Spend,” OpenAI isn’t building data centers for its own sake. The infrastructure exists to solve a specific problem: the gap between AI model capability and inference cost. Every query to GPT-5, every image generated by DALL-E, and every conversation with ChatGPT consumes compute resources that scale with model complexity. As models grow larger, the cost of serving each user increases proportionally.

Cerebras’s partnership illustrates the strategy. Cerebras builds wafer-scale chips (essentially, single silicon wafers containing hundreds of thousands of cores) designed specifically for AI inference. The company claims its systems deliver response times 10-20x faster than traditional GPU-based infrastructure. For OpenAI, that translates to faster ChatGPT responses, more natural real-time interactions, and the ability to serve more users without proportionally increasing costs.

Diversification across chip vendors serves multiple purposes. Dependency on Nvidia alone would give Jensen Huang’s company pricing power over OpenAI’s entire inference stack. For example, a hypothetical 10 percent increase in Nvidia’s GPU prices would add roughly $5 billion to OpenAI’s costs for every $50 billion it commits to Nvidia hardware, based on the calculations in this analysis. By spreading workloads across AMD, Cerebras, and custom Broadcom accelerators, OpenAI puts downward pressure on hardware costs and reduces the financial impact of supply-chain shocks.

But the strategy assumes something that remains unproven: that demand for AI inference will continue growing at current rates. If chatbot usage plateaus, or if competitors like Anthropic’s Claude and Google’s Gemini capture significant market share, OpenAI could find itself locked into infrastructure contracts worth hundreds of billions with insufficient revenue to service them.
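
The price-sensitivity example above is simple proportional arithmetic, sketched here under stated assumptions. The $50 billion base comes from the paragraph above and the $350 billion base from the Nvidia estimate earlier in the article. The function name is hypothetical, and real contracts may fix prices in ways this ignores.

```python
def added_cost_usd_b(committed_spend_usd_b: float, price_increase: float) -> float:
    """Extra cost from a uniform price increase applied to committed spend."""
    return committed_spend_usd_b * price_increase


PRICE_INCREASE = 0.10  # hypothetical 10 percent increase

# Base figures quoted in the article (USD billions).
for label, spend in [("$50B base", 50.0), ("$350B base", 350.0)]:
    extra = added_cost_usd_b(spend, PRICE_INCREASE)
    print(f"{label}: +${extra:.0f}B")
```

The exposure scales linearly with how much hardware is bought from a single vendor, which is the case for diversification the paragraph makes.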

The Profitability Paradox

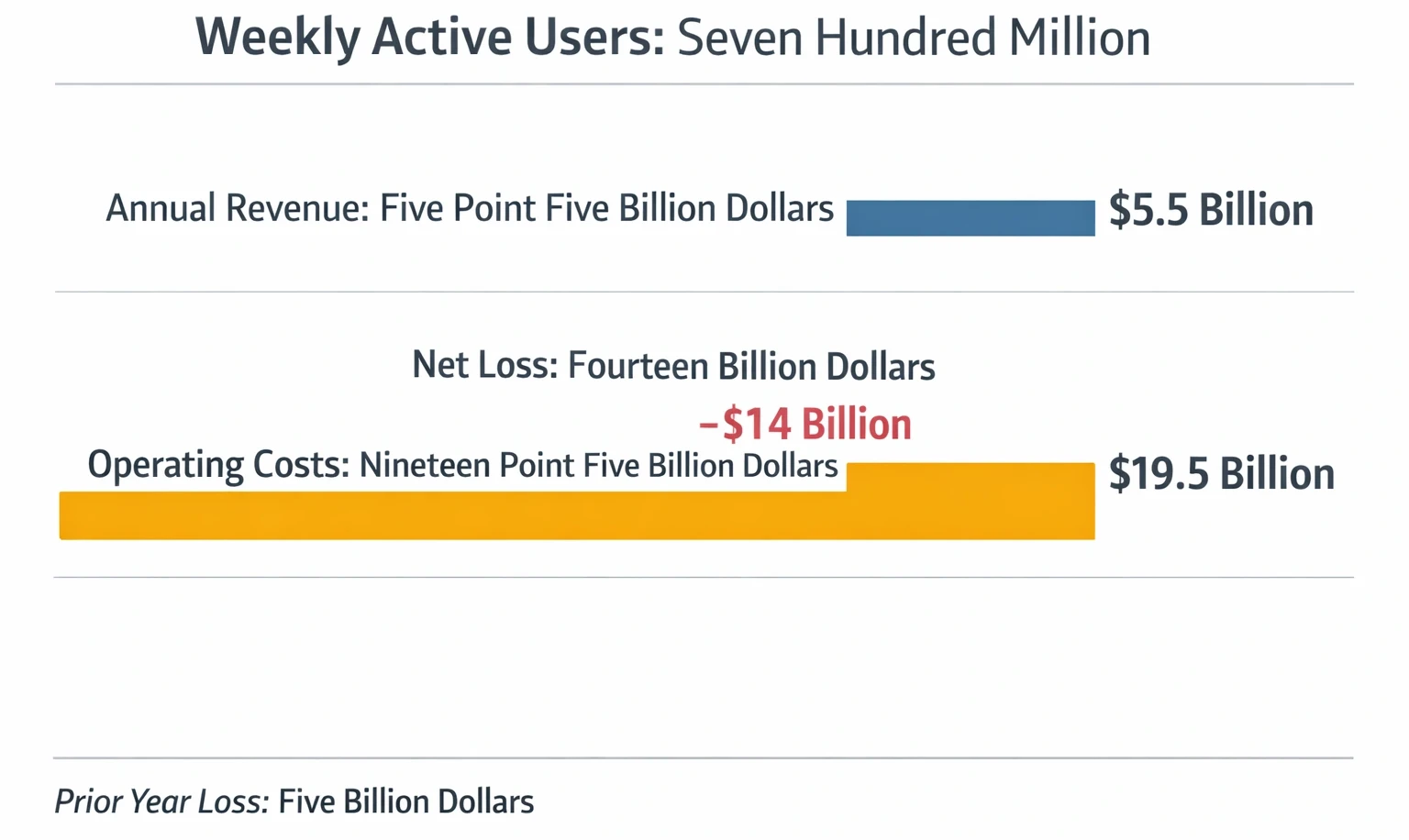

According to William Keating’s “Stargate. It’s Not All About You Microsoft. Or You Either Elon,” OpenAI’s financial trajectory presents a fundamental tension. Even with $13.1 billion in sales, the company’s costs still totaled approximately $21 billion, driven primarily by compute, salaries, and model training expenses. The resulting net loss of roughly $8 billion was larger in absolute terms than 2024’s $5 billion shortfall on $6 billion in revenue, but smaller relative to sales (about 60% of revenue versus about 83%), and the path to profitability remains murky.

Advertising in ChatGPT for free users signals one potential revenue lever. Displaying ads to the service’s 700 million weekly active users could generate billions in additional revenue, though it risks alienating users who selected ChatGPT specifically to avoid ad-supported alternatives. Premium subscriptions, currently priced at $20-$200 per month depending on features, provide stable revenue but haven’t scaled quickly enough to cover infrastructure costs.

The company’s valuation multiple reflects this uncertainty. At an $850 billion valuation and $13.1 billion in revenue, OpenAI is priced at approximately 65x current sales, based on the calculations in this analysis. By comparison, Amazon trades at 3x revenue, Microsoft at 13x, and Nvidia at 25x. This level of premium suggests that investors assume OpenAI will eventually capture a substantial share of the $400 billion annual enterprise AI market that Bloomberg Intelligence analysts project for 2030. But capturing that market requires infrastructure that doesn’t yet exist, funded by capital that hasn’t fully materialized, serving demand that may not materialize at the scale required.
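
The ratios in this section can be reproduced from the figures quoted above. The sketch below is a rough check rather than a financial model: the names are illustrative, and the inputs are the article’s estimates.

```python
def price_to_sales(valuation_usd_b: float, revenue_usd_b: float) -> float:
    """Valuation divided by annual revenue."""
    return valuation_usd_b / revenue_usd_b


def loss_to_revenue(loss_usd_b: float, revenue_usd_b: float) -> float:
    """Net loss expressed as a fraction of revenue."""
    return loss_usd_b / revenue_usd_b


# Figures quoted in the text (USD billions).
VALUATION = 850.0
REVENUE_2025, COSTS_2025 = 13.1, 21.0
REVENUE_2024, LOSS_2024 = 6.0, 5.0

loss_2025 = COSTS_2025 - REVENUE_2025
multiple = price_to_sales(VALUATION, REVENUE_2025)
margin_2025 = loss_to_revenue(loss_2025, REVENUE_2025)
margin_2024 = loss_to_revenue(LOSS_2024, REVENUE_2024)

print(f"Price-to-sales: {multiple:.1f}x")
print(f"2025 loss: ${loss_2025:.1f}B ({margin_2025:.0%} of revenue)")
print(f"2024 loss: ${LOSS_2024:.1f}B ({margin_2024:.0%} of revenue)")
```

On these inputs the loss grew in dollar terms between the two years while shrinking as a share of revenue, so the size of any improvement depends on which measure is used.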

What Happens If the Money Runs Out

According to OpenAI’s “Announcing The Stargate Project” and industry data, the infrastructure commitments OpenAI has signed carry real obligations. Chip suppliers expect payment. Power contracts require minimum offtake guarantees. Construction firms have signed fixed-price agreements for data center builds. If revenue growth stalls or funding rounds fail to close, the company faces a liquidity crisis that could force asset sales or, in extreme scenarios, bankruptcy.

Venture debt markets have already signaled caution. In late 2025, reports emerged that several major banks had declined to participate in OpenAI’s infrastructure financing, citing concerns about the company’s path to profitability. The current funding round relies primarily on equity commitments from strategic investors (SoftBank, Amazon, Nvidia) rather than traditional debt financing.

There’s a reason those investors remain committed despite the risks. OpenAI’s ChatGPT currently serves over 700 million weekly active users. Its GPT models power applications across virtually every major industry. The company’s brand recognition in AI exceeds even Google’s in consumer markets. If AI transforms the global economy as profoundly as its advocates predict, owning a stake in the leading infrastructure provider could generate returns that dwarf the $100 billion investment. The question isn’t whether OpenAI can raise $100 billion. The question is whether $100 billion is enough.

Critics of this framing point out that the entire premise of compute-as-moat may be fundamentally flawed: the rapid efficiency gains demonstrated by models like DeepSeek-R1 suggest that algorithmic improvements could dramatically reduce the compute required to achieve frontier AI performance, potentially stranding hundreds of billions in physical infrastructure before it is fully utilized. The strongest counterargument holds that OpenAI is not merely buying chips but locking in a self-reinforcing cycle: more compute attracts more users, more users generate more revenue and training data, and more data produces better models. On that view, the scale of investment is a strategic barrier to entry rather than a liability. If that virtuous cycle breaks down at any point, however, the fixed obligations of $1.4 trillion in hardware and power contracts leave almost no margin for course correction.

References

- OpenAI Funding on Track to Top $100 Billion in Latest Round: Bloomberg reporting on funding round structure, investor commitments, and valuation details (February 19, 2026)

- Announcing The Stargate Project: OpenAI’s official announcement of the $500 billion infrastructure initiative, including equity partners and initial deployment plans (January 2025)

- Why OpenAI’s AI Data Center Buildout Faces A 2026 Reality Check: Forbes analysis of infrastructure commitment and power consumption challenges (December 2025)

- OpenAI signs deal, worth $10B, for compute from Cerebras: TechCrunch coverage of Cerebras partnership, including 750MW deployment details (January 2026)

- OpenAI’s $1 Trillion Infrastructure Spend: Tomasz Tunguz analysis breaking down OpenAI’s chip partnerships across Nvidia, AMD, Broadcom, and Cerebras, with cost estimates

- Stargate. It’s Not All About You Microsoft. Or You Either Elon: William Keating analysis on competitive dynamics between key Stargate stakeholders