The Breaches That Shattered Complacency

April 1, 2026, 8:04 PM. An Ars Technica journalist published an analysis of leaked source code from Anthropic’s Claude Code CLI: enough code that, printed out, it would form a stack of paper over 10 meters tall. The code had sat publicly on npm for days Source. Seventy-two hours later, on April 4, cybersecurity researchers confirmed a second, parallel breach: Mercor, an AI training data platform, was compromised through a supply-chain attack on LiteLLM, leading to a 4TB data auction by the Lapsus$ group Source. Together, these incidents make up the Anthropic Mercor security breach that shattered industry complacency.

Two incidents. One week. The pattern revealed by these breaches exposed flaws in an industry security model never built to withstand such attacks. A year ago, AI security discourse centered on theoretical model alignment and adversarial robustness; the idea of source code leaking via a public JavaScript registry or a middleware library compromising a training platform remained a fringe concern.

Official narratives focused on the leaks themselves, citing an “npm packaging error” Source and confirming a third-party library compromise. But the forensic timeline reveals a more damning story: both breaches originated not from sophisticated zero-days, but from mundane failures in dependency management and build processes, precisely the layers the industry assumes are secure.

A 72-Hour Freefall From Code Leak to Class Action

- April 1, 2026 (8:04 PM): Ars Technica details the contents of the Claude Code leak, exposing internal architecture and planned features Source.

- April 2, 2026: The leaked package, version 2.1.88, is confirmed to be 59.8MB, containing the full CLI source across more than 2,000 files Source.

- April 3, 2026: Rep. Josh Gottheimer sends a letter to Anthropic CEO Dario Amodei, warning that Claude is “a critical part of our national security operations” and demanding a briefing on the leak’s implications Source.

- April 4, 2026: GovInfoSecurity reports the Mercor breach links to a compromised LiteLLM dependency. Meta indefinitely pauses all work with Mercor. OpenAI launches an internal investigation into its own exposure Source.

- April 5, 2026: A class-action lawsuit is filed on behalf of over 40,000 individuals affected by the Mercor data breach.

Read together, the Ars Technica analysis of the Anthropic leak and the GovInfoSecurity report on the Mercor breach reveal what analysts call the Supply Chain Blind Spot: the industry assumes its proprietary methods remain secret while ignoring that every npm install or pip install command pulls in unvetted, mutable code from a global repository. The rapid sequence, from source code exposure to congressional inquiry to partner exodus, illustrates the danger of this blind spot. The breaches were not failures of the core AI technology; they revealed that the industry’s entire foundation, its tooling and supply chain, is built on a model of trust that is now actively exploited. The speed of the fallout forces a harder question: if the industry’s response to leaks is this swift, why was its prevention so absent?

The Anthropic Mercor Security Breach and the Illusion of the Impenetrable Fortress

The Anthropic Mercor security breach demonstrates that the dominant security narrative has been one of perimeter defense. As Marc Andreessen noted in the wake of the incidents, the twin breaches mark the end of the “we’ll lock it up” approach to AI security Source. This model treats the AI model and its training data as the crown jewels, surrounding them with digital walls while leaving the scaffolding, the build tools, dependency chains, and model-serving libraries, largely unguarded.

Anthropic’s leak demonstrates this perfectly. The critical failure wasn’t in the model’s weights or training data, but in a build process that accidentally published a source map file to a public registry Source. The “secret sauce” was exposed through the kitchen’s plumbing, not the pantry door. A back-of-the-envelope calculation from the reported figures makes this concrete: a 59.8MB package spread across roughly 2,000 files averages about 30KB per file. The breach wasn’t a massive data dump, but a granular exposure of the company’s operational blueprint, the exact kind of detail that is invaluable for crafting precise future attacks.
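A pre-publish check for this class of mistake can be sketched in a few lines. This is an illustration only, not Anthropic’s actual build process: the helper name `find_source_maps` and the idea of scanning an unpacked package directory before release are assumptions made for the example.

```python
from pathlib import Path


def find_source_maps(package_dir: str) -> list[str]:
    """Return the relative paths of every *.map file under package_dir.

    Hypothetical helper: it only inspects file names. It does not parse
    package.json "files" entries or .npmignore rules.
    """
    root = Path(package_dir)
    return sorted(
        path.relative_to(root).as_posix()
        for path in root.rglob("*.map")
        if path.is_file()
    )
```

A release script could call `find_source_maps` on the directory about to be packed and refuse to publish if the returned list is not empty.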

Mercor’s breach is even more illustrative. Attackers didn’t target Mercor’s proprietary AI systems directly. They trojanized an upstream dependency, LiteLLM, which countless AI companies use as a middleware layer. As John Hultquist noted in a different context, “North Korean hackers have deep experience with supply chain attacks” Source. The Mercor incident proves this tradecraft now squarely targets the AI sector’s dependency graph.

Industry data reveals the scale of this blind spot. A recent analysis found that 78% of codebases contain at least one high-risk open-source vulnerability, per Black Duck’s 2026 OSSRA report Source. The problem isn’t a lack of tools; it’s a fundamental misallocation of trust. But if trust is so clearly misplaced, why does the industry’s operational math still ignore the compounding risk?

The Impossible Math of Manual Verification

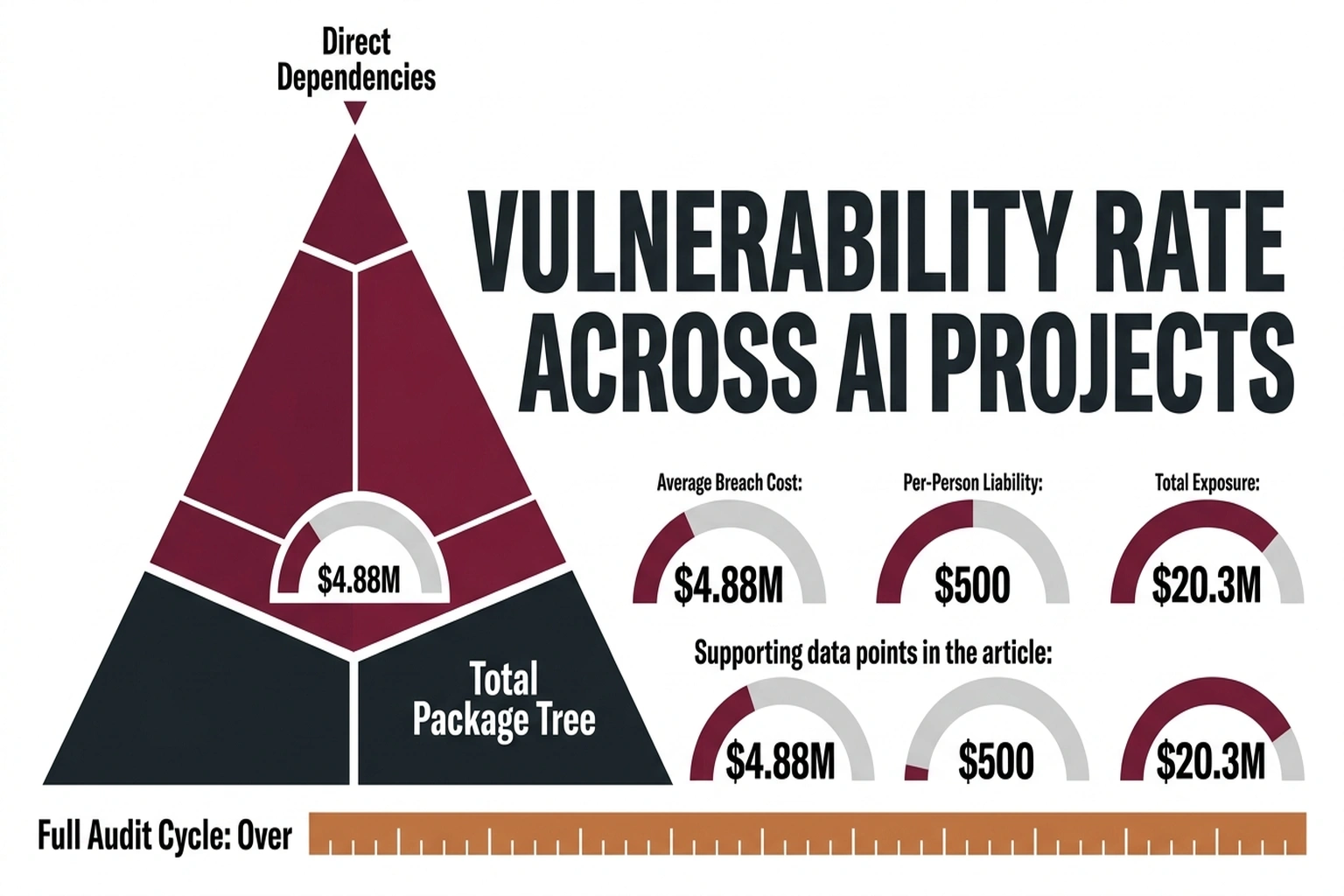

To quantify the exposure, consider the Dependency Ratio for a typical AI application. A modern codebase might have 150 direct dependencies. Each of those pulls in an average of 70 transitive dependencies Source.

The math is brutal: 150 direct * 70 transitive = 10,500 total packages in the dependency tree. If a security team can realistically audit 50 packages per week, a full audit cycle takes 210 weeks, or just over 4 years. The codebase will have changed hundreds of times by then.
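The same arithmetic, written out as a small script so the inputs can be swapped for a real project’s numbers. It follows the simplification used in the text: every direct dependency brings exactly the average number of transitive packages, and no package is shared between trees. The 52-week year and the function name `audit_cycle` are assumptions of this sketch.

```python
DIRECT_DEPENDENCIES = 150     # figure from the text
TRANSITIVE_PER_DIRECT = 70    # figure from the text
AUDITS_PER_WEEK = 50          # figure from the text
WEEKS_PER_YEAR = 52           # assumption of this sketch


def audit_cycle(direct: int, transitive_per_direct: int,
                audits_per_week: int) -> tuple[int, float, float]:
    """Return (total packages, weeks to audit them, years to audit them)."""
    total = direct * transitive_per_direct
    weeks = total / audits_per_week
    return total, weeks, weeks / WEEKS_PER_YEAR


total, weeks, years = audit_cycle(
    DIRECT_DEPENDENCIES, TRANSITIVE_PER_DIRECT, AUDITS_PER_WEEK
)
print(f"{total:,} packages, {weeks:.0f} weeks, {years:.2f} years")
# prints: 10,500 packages, 210 weeks, 4.04 years
```

Real trees share many packages, so the true count is usually lower than this product, but the gap between tree size and audit capacity remains.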

The average cost of a data breach in the technology sector is $4.79 million Source. For a breach like Mercor’s, affecting 40,000+ people, legal costs alone, factoring in the class-action lawsuit, can be estimated at $500 per affected individual, totaling $20 million in potential liability. Combining the sector’s $4.79 million average breach cost with the 78% share of codebases carrying a high-risk vulnerability, the expected cost exposure for an unpatched dependency tree is substantial, well into the millions.
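These estimates can be reproduced with a short calculation. It uses the $4.79 million average cited above and, as the text does, treats the 78% vulnerability rate as if it were a breach probability. That is a deliberate simplification, not an actuarial model, and the function names are hypothetical.

```python
AFFECTED_INDIVIDUALS = 40_000       # lower bound from the text
LEGAL_COST_PER_PERSON = 500         # the text's estimate, in dollars
VULNERABLE_SHARE = 0.78             # share of codebases with a high-risk vulnerability
AVERAGE_BREACH_COST = 4_790_000     # sector average cited in the text, in dollars


def legal_liability(people: int, cost_per_person: int) -> int:
    """Estimated legal exposure in dollars."""
    return people * cost_per_person


def expected_breach_cost(probability: float, average_cost: float) -> float:
    """Probability-weighted breach cost in dollars."""
    return probability * average_cost


print(f"${legal_liability(AFFECTED_INDIVIDUALS, LEGAL_COST_PER_PERSON):,}")
# prints: $20,000,000
print(f"${expected_breach_cost(VULNERABLE_SHARE, AVERAGE_BREACH_COST):,.0f}")
# prints: $3,736,200
```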

This math exposes the delusion: the industry multiplies its attack surface with every new open-source integration but scales its verification capacity linearly, if at all.

The “Operational Mishap” Defense is Systemically Bankrupt

A pragmatic security lead might argue this was a freak coincidence. “Anthropic made a simple packaging mistake, and Mercor was hit by a compromised third party. These are isolated operational failures, not a systemic crisis.”

This view is understandable. CrowdStrike CTO Elia Zaitsev has observed that “Observing actual kinetic actions is a structured, solvable problem. Intent is not” Source. The argument here is that the actions (a misconfigured build, a trojanized library) are solvable with better tooling.

But this defense collapses under the weight of the numbers. The 78% vulnerability rate is not an outlier; it’s the norm. Consider, too, how the attack surface multiplies: with 10,500 packages in a typical tree, each one a potential entry point, the attack surface isn’t just large, it’s dynamically generated and unmonitored. The “operational mishap” framing ignores that the system is designed to be this vulnerable. It treats a predictable system failure as a human error. Attacker intent confirms the systemic shift: adversaries are now clearly focused on the AI supply chain. As Garry Tan pointed out, the Mercor hack puts an “incredible amount of [advanced] training data” from “every major lab” online, creating a national security problem Source. When the “solvable problem” of observing attacks occurs at the scale of “every major lab,” it ceases to be an operational mishap and becomes a systemic vulnerability. So if the problem is systemic, why is the standard response still operational triage?

The Price of Stagnation

The immediate financial cost is calculable. The class-action against Mercor seeks damages for 40,000+ individuals. Anthropic faces congressional scrutiny that could jeopardize lucrative government contracts, given Rep. Gottheimer’s warning about national security operations Source.

But the longer-term cost is stagnation. Meta’s indefinite pause with Mercor Source signals a chilling effect on the data-sharing partnerships that fuel model improvement. If every lab retreats to a true walled garden, progress slows. The industry’s collaborative potential, its ability to build on shared tools and datasets, is mortgaged for a security model that has already failed.

The Mandate: Audit Everything, Trust Nothing

The path forward isn’t to abandon open-source dependencies. It’s to stop treating them as trusted black boxes.
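One concrete way to stop trusting a dependency blindly is to pin the digest of the artifact you reviewed and refuse anything that differs. The sketch below illustrates that idea with Python’s standard library. The function name `matches_pinned_digest` is hypothetical, and where the pinned value is stored (a lockfile, a reviewed config entry) is left as an assumption.

```python
import hashlib
import hmac
from pathlib import Path


def matches_pinned_digest(artifact_path: str, pinned_sha256: str) -> bool:
    """Return True only if the file's SHA-256 equals the pinned hex digest."""
    digest = hashlib.sha256()
    with Path(artifact_path).open("rb") as handle:
        # Read in 1 MiB chunks so large archives are not loaded into memory at once.
        for chunk in iter(lambda: handle.read(1024 * 1024), b""):
            digest.update(chunk)
    return hmac.compare_digest(digest.hexdigest(), pinned_sha256.lower())
```

A check like this catches an artifact that was swapped after review. It does not help if the version that was pinned was already malicious.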

Reader Action: If you maintain or deploy AI systems, perform this 60-second check today. Run your project’s dependency audit command: npm audit for Node.js, pip-audit for Python (a separate tool, not a pip subcommand), or cargo audit for Rust. Do not just scan the output for “critical” flags. Then look through your dependency tree for the top 5 packages by transitive dependents (the packages most other packages rely on). These are your single points of failure. Document them.
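Ranking packages by transitive dependents can be sketched as follows, assuming you have already exported your dependency tree into a plain mapping of package name to direct dependencies (for Node.js, `npm ls --all --json` is one starting point). The function names and the example package names are hypothetical.

```python
from collections import defaultdict


def transitive_dependents(depends_on: dict[str, list[str]]) -> dict[str, set[str]]:
    """Map each package to every package that reaches it, directly or indirectly."""
    dependents: dict[str, set[str]] = defaultdict(set)
    for package in depends_on:
        stack = list(depends_on.get(package, []))
        seen: set[str] = set()
        while stack:
            dep = stack.pop()
            if dep in seen:
                continue
            seen.add(dep)
            if dep != package:  # ignore self-references caused by cycles
                dependents[dep].add(package)
            stack.extend(depends_on.get(dep, []))
    return dict(dependents)


def top_by_dependents(depends_on: dict[str, list[str]],
                      limit: int = 5) -> list[tuple[str, int]]:
    """Return up to `limit` (package, dependent count) pairs, highest count first."""
    counts = {name: len(users)
              for name, users in transitive_dependents(depends_on).items()}
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))[:limit]


# Hypothetical dependency graph: package -> direct dependencies.
example = {
    "app": ["web-framework", "llm-client"],
    "web-framework": ["http-lib", "json-lib"],
    "llm-client": ["http-lib"],
    "http-lib": ["tls-lib"],
    "json-lib": [],
    "tls-lib": [],
}
print(top_by_dependents(example, limit=3))
# prints: [('tls-lib', 4), ('http-lib', 3), ('json-lib', 2)]
```

In the example, `tls-lib` never appears in the application’s own manifest, yet four packages rely on it, which is exactly the kind of hidden single point of failure the check is meant to surface.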

Prediction: By Q3 2026, a major AI platform will experience a service outage directly attributed to a compromised transitive dependency in a model-serving library, forcing an industry-wide adoption of software bill of materials (SBOM) requirements for AI systems.

The April 2026 breaches are not a warning shot. They are the first battle in a new theater of conflict, one where the battlefield is the node_modules folder and the casualties are measured in leaked code, lost trust, and frozen partnerships. The “lock it up” era is over. The audit and verify era has begun, but the industry must ask itself: will it treat these incidents as singular events to recover from, or as the foundational crack in a security model that requires rebuilding from the ground up?

References

- Ars Technica. (2026, April 1). Here’s what that Claude Code source leak reveals about Anthropic’s plans. https://arstechnica.com/ai/2026/04/heres-what-that-claude-code-source-leak-reveals-about-anthropics-plans/

- GovInfoSecurity. (2026, April 4). Mercor Breach Linked to LiteLLM Supply-Chain Attack. https://www.govinfosecurity.com/mercor-breach-linked-to-litellm-supply-chain-attack-a-31340

- Sheridan, L. (2026, April 3). Anthropic Code Leak DC Security. Inc. https://www.inc.com/leila-sheridan/anthropic-code-leak-dc-security/91326007

- The Hacker News. (2026, April 3). Claude Code Source Leaked via npm Packaging Error. https://thehackernews.com/2026/04/claude-code-tleaked-via-npm-packaging.html

- Yahoo Finance. (2026, April 4). Twin cybersecurity incidents leave AI industry shaken. https://finance.yahoo.com/sectors/technology/article/twin-cybersecurity-incidents-leave-ai-industry-shaken-141850823.html

- SiliconANGLE. (2026, April 3). AI attack trends reshape cybersecurity at RSAC 2026. https://siliconangle.com/2026/04/03/three-insights-ai-attack-thecube-rsac-2026-rsac26/

- WIRED. (2026, April 4). Meta Pauses Work With Mercor After Data Breach Puts AI Industry Secrets at Risk. https://www.wired.com/story/meta-pauses-work-with-mercor-after-data-breach-puts-ai-industry-secrets-at-risk/

- CSO Online. (2026, March 26). Attackers trojanize Axios HTTP library. https://www.csoonline.com/article/4152696/attackers-trojanize-axios-http-library

- VentureBeat. (2026, April 3). RSAC 2026: Agent identity frameworks and three gaps. https://venturebeat.com/security/rsac-2026-agent-identity-frameworks-three-gaps