Affiliate Disclosure: This article contains affiliate links. We may earn a commission if you purchase through these links, at no additional cost to you. This helps us continue publishing free content. See our full disclosure.

Part 6 of 7 in The Cost of AI series.

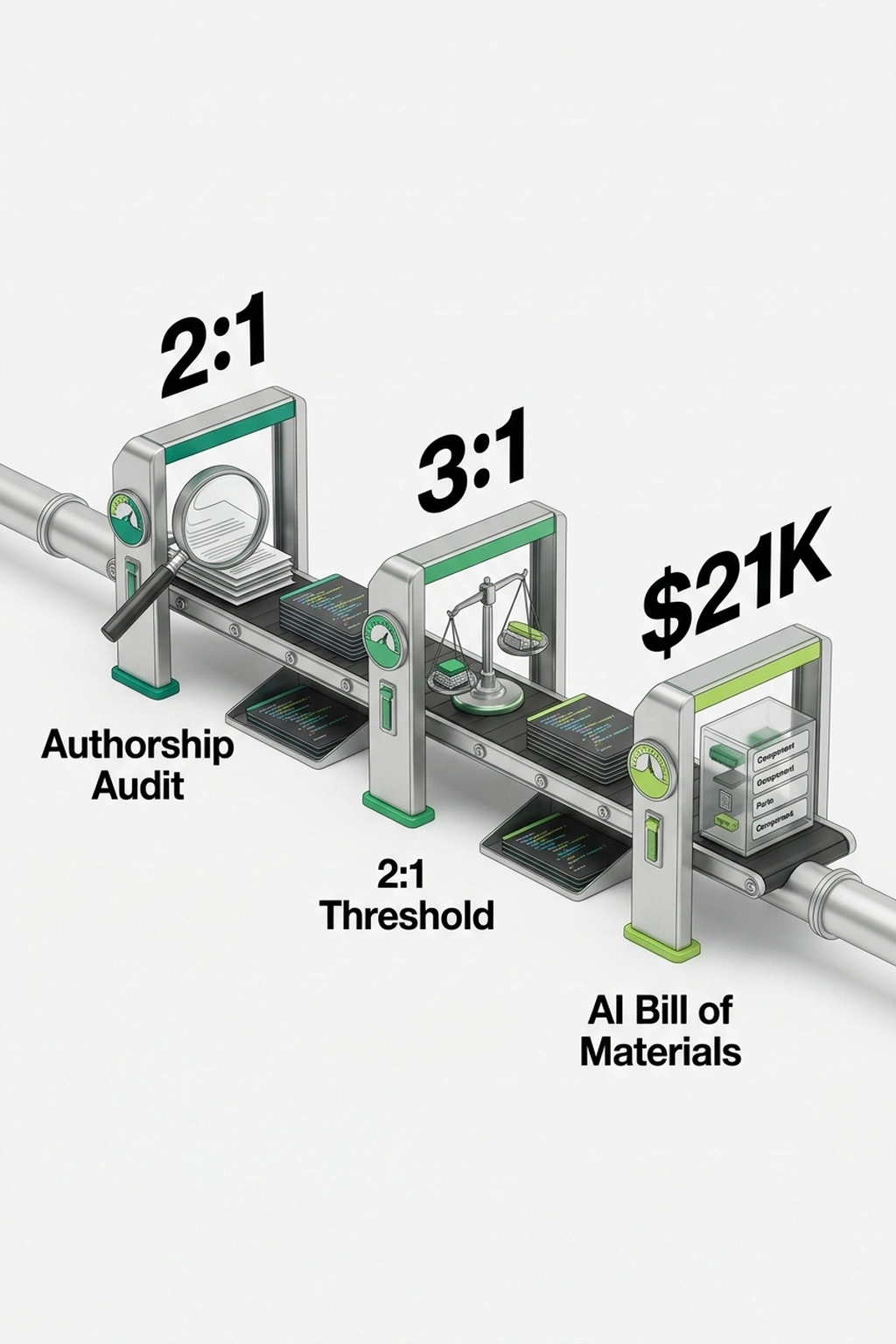

For every AI model an enterprise knowingly deploys, shadow AI introduces nearly three ungoverned AI components into the codebase unseen. That ratio, roughly 3:1, comes from more than 500 scans run during Snyk’s Evo AI-SPM early access, across organizations already operating CNAPP platforms, endpoint detection, and cloud security posture management (Snyk). Every security stack reported a governed AI environment. Every stack was wrong.

Security architectures embed assumptions about who writes code. Twelve months ago, the foundational assumption (humans author production software, AI assists) still held. MCP servers numbered in the hundreds, not thousands.

AI coding agents had not yet colonized commit histories. No vendor offered a scanner for AI-authored production code because the category barely existed. The assumption expired in under a year. The architectures built on it did not update, and the resulting AI agent security gap now stretches wider with each autonomous commit.

Jason Langston, Director of Product Security at WEX, set up Evo in an afternoon. The report surfaced what his codebases actually contained, and the distance between “in place” and “approved” became the real finding. “Being able to put our arms around the full breadth of what was actually in place was a super helpful foundation to start from,” Langston said (Snyk). Multiply that experience across an industry where executive confidence in AI governance vastly outpaces actual deployment security (Gravitee). The industry calls this gap shadow AI. A more precise name is forming, and nothing in the current security stack is built to see it.

42.8% of AI Agent Activity Runs Unmonitored

In Gravitee’s February 2026 survey of 900+ executives and practitioners, 82% of executives rated their AI governance as adequate. Only 14.4% of their agents had actually launched with full security approval (Gravitee). That 68-point gap between confidence and reality is not a rounding error; it is the signature of a category that security leaders have not yet learned to measure. Among the 80.9% of teams past planning into testing or production, only 47.1% of AI agents receive active monitoring, while 88% of organizations confirmed or suspected AI-related security incidents during the same period (Gravitee).

Those incidents have a productivity engine behind them. BlackFog’s January 2026 research surveyed 2,000 respondents across industries, roughly the workforce of a mid-size tech company. The results: 60% of employees willingly accept security risk for faster output, with 49% using AI tools their employer never sanctioned (BlackFog). BlackFog frames this as an employee behavior problem: workers choosing speed over safety. But Snyk’s scan data complicates that framing considerably. The untracked components surfacing in those 500+ scans were not introduced by employees making rogue choices with unauthorized tools. They were generated by AI coding agents operating within workflows the organization had sanctioned. Blaming employee risk tolerance, as BlackFog’s framing implies, points remediation at hiring and training when the actual vector is the toolchain itself. Netskope’s 2026 Cloud and Threat Report measured the downstream damage: 223 genAI data policy violation incidents per month per organization on average. That figure doubled year-over-year, with source code accounting for 42% of violations (Netskope).

Combine the two Gravitee figures. If 80.9% of teams are past planning into testing or production while only 47.1% of agents get active monitoring, then 80.9% × (1 − 0.471) = 42.8% of organizational AI activity is running without active security monitoring, assuming the two shares can simply be multiplied. Gravitee did not publish that number. Its data produces it when the two figures are combined, and it quantifies what CNAPP gaps leave exposed when monitoring coverage trails deployment velocity by this margin (Gravitee).
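The arithmetic is small enough to check directly. The sketch below is a minimal illustration, assuming (as the paragraph above does) that the share of teams past planning and the share of monitored agents can be multiplied as if independent; the function name is hypothetical and does not come from Gravitee's report.

```python
def unmonitored_share(deployed_share: float, monitored_share: float) -> float:
    """Share of activity that is deployed but not actively monitored.

    Assumption: the two shares are independent, so they can be multiplied.
    """
    for value in (deployed_share, monitored_share):
        if not 0.0 <= value <= 1.0:
            raise ValueError("shares must be fractions between 0 and 1")
    return deployed_share * (1.0 - monitored_share)


# Gravitee figures quoted in the text: 80.9% past planning, 47.1% monitored.
gap = unmonitored_share(0.809, 0.471)
print(f"{gap:.1%}")  # 42.8%
```

If the independence assumption does not hold for a given organization, the result is an estimate rather than a measurement.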

Nearly half of organizations, 45.6%, rely on shared API keys for agent-to-agent authentication, meaning a single compromised credential unlocks the entire agent chain (Gravitee). James Simcox, Chief Operations and Product Officer at Equals Money, describes this as “a dangerous blind spot where unmanaged tools connect to enterprise data and systems without oversight” (Okta). At a fintech like Equals Money, that blind spot carries compliance weight measured in regulatory fines, not remediation hours.

Executive confidence decomposes neatly once the framing clicks. Those executives assessed coverage of known categories (antivirus, network monitoring, endpoint detection) with justified accuracy. Then they treated that assessment as total coverage. NIST’s AI Risk Management Framework explicitly cautions against conflating category-level assurance with system-level governance when autonomous agents expand the attack surface beyond defined perimeters (NIST AI RMF). The gap between accurate category assessment and total security coverage widens every time an AI agent acts on credentials designed for human users.

Knowing the gap exists is the easy part. Knowing what fills it is harder, and the answer is not another deployment scanner, because the ungoverned AI components Snyk found were not deployed by humans at all.

Shadow AI: Your Production Code Has No Author

Snyk’s marketing frames Evo as a supply chain security product. The scan data points somewhere more uncomfortable. When those 500+ scans surfaced the 3:1 component ratio, the discovery was not rogue AI models running on unauthorized servers. The scans found software components (libraries, packages, code modules) that autonomous coding agents had introduced into production codebases without appearing on any asset inventory.

“Agentic architectures turn governance into a software supply chain problem,” said Manoj Nair, Chief Innovation Officer at Snyk (Snyk). Nair has a product to sell, and his framing is worth examining rather than quoting reflexively. He describes a supply chain problem because Snyk sells supply chain tools. But that framing undersells his own finding: these are not unauthorized dependencies smuggled in by careless developers. Coding tools such as Claude Code, Cursor, and Devin wrote these components autonomously, rewriting production code with no human author in the commit chain.

Until this quarter, most engineering teams assumed shadow AI meant unauthorized ChatGPT tabs: an HR problem, not a supply chain one. Snyk’s 500+ scans eliminated that framing.

What organizations label shadow AI is not unauthorized model deployment. It is unauthorized authorship of production code.

Cross-reference with Qualys, which cataloged more than 10,000 active public MCP servers within one year of the protocol’s introduction, with 53% relying on static secrets (Qualys). Each MCP server is a junction where an AI agent connects to enterprise applications: databases, APIs, internal tools. When 53% of those junctions authenticate with credentials that never rotate, the invisible rewrite gets an equally invisible highway. “Most organizations have zero visibility into where they are, what they expose, or how they can be abused,” said Krishna Anumalasetty, Product Management for AI Platform & Security at Qualys (Qualys).

Snyk’s 3:1 ratio, Gravitee’s 42.8% oversight gap, and Qualys’s 10,000-server wiring layer reveal the same phenomenon from three angles. Call it The Invisible Rewrite: AI coding agents do not assist development so much as silently replace the authorship chain of production code. CNAPP tools track where AI models run. SCA tools track which dependencies humans chose. Neither detects ungoverned AI components that no human authored, reviewed, or approved, a gap in AI agent security that no product category was designed to address. “AI agents don’t operate at the network, endpoint, or device layer; they live in the application layer and use multiple non-human identities with broad, long-lived privileges,” said Harish Peri, SVP & GM of AI Security at Okta (Okta). Peri’s point lands because the application layer is precisely where authorship happens, and precisely where current security tools carry zero detection capability.

Enterprise security stacks compound independent detection layers under a shared assumption: every layer addresses some nonzero fraction of the same threat taxonomy. For known vulnerability classes, each layer catches problems with probability p, yielding compound detection of 1 − (1 − p)^k, where k is layer count. With p = 0.9 and k = 3, detection reaches 99.9%. Defense-in-depth works because every layer shares the same detection taxonomy: they all look for the same category of threat from different angles. AI-authored components break this assumption at the taxonomic level, not the implementation level. “AI-authored component” does not exist as a detection category in any shipping CNAPP, SCA, or SAST product. Each layer’s detection probability q ≈ 0 not because the tools are poorly tuned, but because the category falls entirely outside every tool’s threat model. Compound detection becomes 1 − (1 − 0)^3 = 0%. Three layers at zero yield zero. Ten layers yield identical zero. Defense-in-depth assumes depth: multiple angles on the same problem. When the problem sits outside every angle simultaneously, the architecture produces confidence without coverage (Okta).
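The compound-detection formula above can be evaluated in a few lines. This is a minimal sketch of 1 − (1 − p)^k under the same independence assumption the paragraph states; the function name is illustrative only.

```python
def compound_detection(p: float, k: int) -> float:
    """Probability that at least one of k independent layers detects a threat,
    when each layer detects it with probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability between 0 and 1")
    if k < 0:
        raise ValueError("k must be a non-negative layer count")
    return 1.0 - (1.0 - p) ** k


print(f"{compound_detection(0.9, 3):.1%}")   # 99.9%
print(f"{compound_detection(0.0, 3):.1%}")   # 0.0%
print(f"{compound_detection(0.0, 10):.1%}")  # 0.0%
```

The last two lines show the point of the argument: when per-layer probability is zero, adding layers changes nothing.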

More investment, more layers, identical blindness, which raises the question of whether the instinct to scan harder can survive contact with that math.

More Scanners, Same CNAPP Gap

Scan everything: that is the instinctive response to an invisible threat, and Veracode’s 2026 State of Software Security data argues against it. Across 1.6 million applications tested on the Veracode platform, security debt (known vulnerabilities left unresolved for more than a year) now affects 82% of companies, up from 74% the prior year. High-risk vulnerabilities climbed from 8.3% to 11.3% (The Register). More vulnerabilities are being created than fixed (The Register).

AI-assisted scanning tools that surface vulnerabilities faster than human reviewers can triage do not reduce risk; they produce a backlog that degrades signal quality. If a team already carries 82% security debt on code with known authors, adding thousands of findings from AI-generated code with no attribution does not close the gap. It widens it. Remediation capacity was already saturated before AI-authored components entered the equation.

Yet waiting carries a calculable cost that Veracode’s own data quantifies, even though the report itself does not make this calculation. A mid-size application carrying 200 known dependencies at Snyk’s 3:1 ratio harbors roughly 600 untracked components. At Veracode’s 11.3% high-risk rate, that implies 68 high-risk vulnerabilities per application that no scanner was configured to find, accumulating debt at a rate no human team budgeted for (The Register).

Calculate the cost of inaction: 68 untracked high-risk vulnerabilities per application, each requiring remediation under conditions where no human author exists to assess intent, scope, or blast radius. Standard vulnerability remediation assumes a known author who can explain the code’s purpose and narrow the fix. When authorship is absent, triage expands to include forensic analysis (determining what the code does, why it was introduced, and what depends on it) before remediation can begin. At roughly $320 per vulnerability under those conditions, a team that ignores the 3:1 ratio bleeds ~$21,800 per application per year in security debt that no dashboard displays and no budget anticipates. A five-application portfolio, modest by enterprise standards, crosses six figures annually.
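The estimate chains four inputs, so it is worth making each one explicit. The sketch below reproduces the article's arithmetic only; the function and field names are hypothetical, and the $320 per-vulnerability cost is the article's own rough figure, not a value from Veracode or Snyk.

```python
def untracked_exposure(
    known_dependencies: int,
    untracked_ratio: float,
    high_risk_rate: float,
    cost_per_vulnerability: float,
) -> dict:
    """Estimate untracked components, high-risk findings, and yearly cost."""
    untracked = known_dependencies * untracked_ratio
    # Round to whole vulnerabilities, as the text does (67.8 becomes 68).
    high_risk = round(untracked * high_risk_rate)
    return {
        "untracked_components": untracked,
        "high_risk_vulnerabilities": high_risk,
        "annual_cost": high_risk * cost_per_vulnerability,
    }


# Inputs from the text: 200 dependencies, 3:1 ratio, 11.3% high-risk, ~$320 each.
per_app = untracked_exposure(200, 3.0, 0.113, 320.0)
print(per_app["high_risk_vulnerabilities"])       # 68
print(f"${per_app['annual_cost']:,.0f}")          # $21,760
print(f"${5 * per_app['annual_cost']:,.0f}")      # $108,800
```

Changing any single input shows how sensitive the six-figure total is to the assumed ratio and per-vulnerability cost.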

The strongest counterargument holds that this gap is temporary. Snyk’s Evo launch, Qualys TotalAI, and Okta’s agent discovery tools demonstrate that AppSec vendors are already building AI-authored code detection into their products. Within normal product cycles, the argument runs, these capabilities will ship as standard SCA features, making the 3:1 ratio a solvable problem on existing procurement timelines and the urgency overstated. On a two-year horizon, this argument carries genuine force. On a twelve-month horizon, it collides with Veracode’s 82% security debt rate. Organizations that cannot remediate vulnerabilities in code with known authors and clear attribution will not remediate faster when AI-generated findings (authorless, context-free, and orders of magnitude more numerous) flood the same triage queue. New detection categories do not create new remediation capacity. The tools may ship on schedule. The teams using them were already underwater before the new detection category existed. Adaptation without capacity is detection without consequence, and the debt compounds while the roadmaps execute.

Neither more scanning nor less scanning resolves this.

Scanning the right layer does, and that starts with knowing the ratio.

The Two-Command Diagnostic

Run this in any production repository:

git log --all --format='%an' | sort | uniq -c | sort -rn | head -20

If AI coding tools (Claude Code, GitHub Copilot, Cursor) appear in the top five commit authors, or if generic bot accounts dominate, the authorship chain is already fractured. A second command confirms the supply chain impact:

diff <(jq -r '.packages | keys[] | select(. != "") | sub("^.*node_modules/"; "")' package-lock.json | sort -u) \
     <(sort -u approved-dependencies.txt) | grep -c '^<'

Compare actual dependencies against deliberately approved packages (here, approved-dependencies.txt, assumed to list one package name per line). The gap is the number of components no human selected: the ungoverned AI components that existing CNAPP platforms never surface.

What Snyk measured across 500+ enterprise scans amounts to The Authorship Ratio: discovered components divided by deliberately approved dependencies. Any team can measure it at the repository level. Below 1.5:1: typical human-authored dependency drift. Above 2:1: AI agents have expanded the attack surface beyond what current AI agent security tooling detects. Above 3:1: Snyk’s available data indicates the codebase has undergone an Invisible Rewrite, and the governance model is tracking a fiction (Snyk).
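The ratio and its bands can be computed from two package lists. The sketch below is one possible reading, not Snyk's method: it assumes "discovered components" means packages present in the lockfile but absent from the approved list, consistent with the 200-to-600 example earlier. The function names and band labels are hypothetical, and the text does not define a band between 1.5:1 and 2:1.

```python
def authorship_ratio(installed: set[str], approved: set[str]) -> float:
    """Untracked components per deliberately approved dependency."""
    if not approved:
        raise ValueError("approved list is empty; the ratio is undefined")
    untracked = installed - approved
    return len(untracked) / len(approved)


def classify(ratio: float) -> str:
    """Map a ratio onto the bands described in the text."""
    if ratio > 3.0:
        return "invisible rewrite"
    if ratio > 2.0:
        return "escalate: expanded attack surface"
    if ratio < 1.5:
        return "typical dependency drift"
    return "between bands (not defined in the text)"


# Illustrative data: 2 approved packages, 5 more found in the lockfile.
approved = {"express", "lodash"}
installed = approved | {"pkg-a", "pkg-b", "pkg-c", "pkg-d", "pkg-e"}

ratio = authorship_ratio(installed, approved)
print(f"{ratio:.1f}:1 -> {classify(ratio)}")
```

Transitive dependencies pulled in by approved packages will inflate the number, so a team may want to approve at the lockfile level or filter them out before reading the result.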

What to Do Before Your Next Deploy

- Run the authorship audit now. Before the ratio compounds further, execute the two-command diagnostic above on every production repository. Record the ratio. Any repo exceeding 2:1 warrants escalation to the security lead before the next deploy. Expect the repositories handling customer data or payment flows to show the highest ratios, since those codebases attract the most automation pressure.

- Tag AI-authored commits at the CI gate. Require coding agents to sign commits with a distinct identity (e.g., claude-code-bot, not a developer’s name). If the CI pipeline cannot distinguish human from agent commits today, that is the first configuration change, before adding any new scanner. A team that implements author tagging first gains the attribution layer every downstream tool will eventually require.

- Generate an AI bill of materials in CI/CD. Snyk’s Evo Discovery Agent and Qualys TotalAI both automate component inventory at the authorship layer. Add one as a pipeline step so untracked components surface before merge, not after incident. The goal is not to block AI-authored code; it is to close the CNAPP gap by making the invisible rewrite visible at the point where governance decisions still matter.

- Rotate shared agent API keys. Gravitee’s finding that 45.6% of organizations rely on shared keys means a single compromise cascades. Move to scoped, short-lived tokens per agent identity. If the infrastructure does not support per-agent credentials yet, consolidate shared keys in a credential manager, document which are shared, and set a 90-day rotation ceiling.

- Cap the remediation queue before expanding scan scope. Veracode’s data shows 82% of companies already carry unresolved security debt. Adding AI-generated findings to a saturated queue buries signal. Set a policy: no new scan category is enabled until the existing high-risk backlog drops below a threshold the team defines. Otherwise the new detection capability produces alerts without outcomes.

- Audit git log author distributions monthly. Any repository where bot-authored commits exceed roughly 30% of total likely needs a manual review gate before the ratio compounds further. For teams already managing vibe-coded applications, the authorship audit doubles as a supply chain audit.
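The monthly author audit in the last item can be scripted once agents commit under distinct identities. The sketch below is a rough starting point under stated assumptions: the agent identity set is hypothetical (claude-code-bot is the example name from the list above), the 30% threshold is the article's rough figure, and the helper names are illustrative.

```python
import subprocess
from collections import Counter

# Hypothetical: the distinct identities your coding agents commit under.
AGENT_AUTHORS = {"claude-code-bot"}


def read_authors(repo: str = ".") -> list[str]:
    """Return one author name per commit, across all branches."""
    result = subprocess.run(
        ["git", "-C", repo, "log", "--all", "--format=%an"],
        capture_output=True, text=True, check=True,
    )
    return [line for line in result.stdout.splitlines() if line]


def bot_commit_share(authors: list[str], agent_authors: set[str]) -> float:
    """Fraction of commits whose author is a known agent identity."""
    if not authors:
        return 0.0
    counts = Counter(authors)
    bot_commits = sum(n for name, n in counts.items() if name in agent_authors)
    return bot_commits / len(authors)


def needs_review_gate(share: float, threshold: float = 0.30) -> bool:
    """True when agent-authored commits exceed the review threshold."""
    return share > threshold


# Illustrative data instead of a live repository: 6 human, 4 agent commits.
sample = ["alice"] * 6 + ["claude-code-bot"] * 4
share = bot_commit_share(sample, AGENT_AUTHORS)
print(f"{share:.0%} agent-authored, review gate: {needs_review_gate(share)}")
```

Against a real repository, pass `read_authors()` in place of the sample list. The result is only as good as the tagging: agent commits made under a developer's name will be counted as human.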

If current vendor trajectories hold, authorship attribution will ship as a default SCA feature within twelve months. By March 2027, the 3:1 ratio will either become a standard metric in SCA reports or it will have climbed higher as AI coding agents grow more autonomous and more embedded in CI/CD pipelines. The results will surface a conversation nobody wants: how much production code no human ever approved. Run the scan. Calculate the ratio. If the number doesn’t concern the team, try the repo that handles customer data. Snyk’s 500+ scans already answered whether ghost components exist in governed environments. The remaining question is simpler and worse: whether anyone on the team can name the author of the code running in production right now.

References

- Snyk Launches Agent Security Solution: Primary data on 500+ Evo scans, 3:1 component ratio, and agent security architecture.

- State of AI Agent Security 2026 Report: Gravitee survey of 900+ executives: 82% confidence, 14.4% approval, 88% incidents.

- MCP Servers: The New Shadow IT for AI: Qualys analysis of 10,000+ MCP servers and enterprise visibility gaps.

- Okta Secures the Agentic Enterprise: Agent discovery and unsanctioned AI usage identity risk data.

- BlackFog Research: Unauthorized AI tools Threat Grows: 2,000-respondent study on unsanctioned AI tool usage and risk tolerance.

- Veracode: Security Debt and AI-Driven Development: 1.6M application analysis showing 82% security debt rate.

- NIST AI Risk Management Framework (AI RMF 1.0): Federal guidance on governing AI systems, including autonomous agent risk categorization.

- Netskope Cloud and Threat Report 2026: GenAI data policy violations doubling YoY, source code exposure data.