
Sam Altman called concerns about AI data center water usage “totally fake” in February 2026. That same month, The Atlantic revealed that Elon Musk’s xAI Colossus facility in Memphis had erected enormous natural-gas turbines to power its AI supercomputer. Scale alone made the story impossible to ignore: those turbines generated enough electricity to power a mid-sized American city, around the clock. One CEO called the concerns imaginary. Community opposition to AI data centers was about to demonstrate that they were anything but fake.

Backlash arrived with teeth, and with zoning authority. In March, the city council of San Marcos, Texas blocked a proposed hyperscale campus that would have demanded a significant share of the city’s electrical capacity. That vote was part of a cascade: an estimated $98 billion in data center projects stalled in Q4 2025, and Sightline Climate estimates that 30–50 percent of 2026 capacity may not deliver on schedule.

This analysis argues that silicon is not the bottleneck. Neither is power generation, nor capital. The evidence suggests that local opposition has become the binding constraint on the largest infrastructure buildup in technology history, and the one constraint that no purchase order can resolve.

The Cancellation Cascade

Industry conferences obsessed over power constraints and chip supply; zoning boards merited a footnote, if that. Community opposition did not register as a risk category until it swallowed $98 billion.

In October 2025, one U.S. data center project was canceled. Across December and January, twenty-six more followed.

Sixty days. Most hyperscale data center proposals take longer than that just to complete environmental review. The opposition cycle now runs shorter than the approval cycle.

Divide the $98 billion in stalled Q4 projects across roughly ninety calendar days, and this analysis arrives at a figure no source has published: approximately $1.09 billion per day in challenged infrastructure investment. It is hard to name another category of American commercial construction that has seen capital disrupted at that velocity in recent memory.
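The daily figure is a simple division. A minimal sketch, assuming the $98 billion is spread evenly across the quarter; it shows both the text's 90-day rounding and the 92 calendar days of an actual October-to-December quarter, which lowers the rate slightly:

```python
# Back-of-envelope stall rate: stalled capital divided evenly over the quarter.
STALLED_Q4_USD = 98e9  # $98B in stalled Q4 2025 projects (figure cited in the text)


def daily_stall_rate(stalled_usd: float, days: int) -> float:
    """Average stalled investment per calendar day, assuming an even spread."""
    return stalled_usd / days


for days in (90, 92):  # 90 = the text's rounding; 92 = Oct + Nov + Dec
    rate = daily_stall_rate(STALLED_Q4_USD, days)
    print(f"{days} days: ${rate / 1e9:.2f}B per day")
```

Either way the rate lands near $1.1 billion per day; the even-spread assumption is the main simplification, since real cancellations arrived in clusters.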

San Marcos was not unique. New Brunswick, New Jersey rejected a proposed campus. Montour County, Pennsylvania organized against expansion. Communities near Edwardsville, Illinois blocked development plans. The geographic range is the signal: red counties and blue cities, rural and suburban, all running the same arithmetic independently and arriving at the same answer. Each concluded that the resource cost did not favor residents and reached for the one authority no purchase order can override: the zoning vote.

Taken one at a time, these votes resemble protest.

Add up the vetoes and something structural emerges. That raises a harder question: what exactly did the unauthorized turbines in Memphis teach America’s suburbs about what “structural” looks like?

What the Turbines Taught America’s Suburbs

For communities watching one CEO dismiss their resource concerns as “totally fake” while another CEO’s facility ran natural-gas turbines generating electricity equivalent to the demand of 200,000 American homes, the conclusion was immediate: their only leverage exists before the first permit clears. It sits at the zoning board, not the courthouse.

Memphis became the template for resident pushback against AI server farms nationwide. It is not a protest movement but a risk-management strategy, executed by residents who read their utility bills more carefully than any hyperscaler read its community impact assessments. The implication is that every new campus proposal now arrives pre-rejected unless it closes a gap no hyperscaler has budgeted for: social license.

The Social License Gap

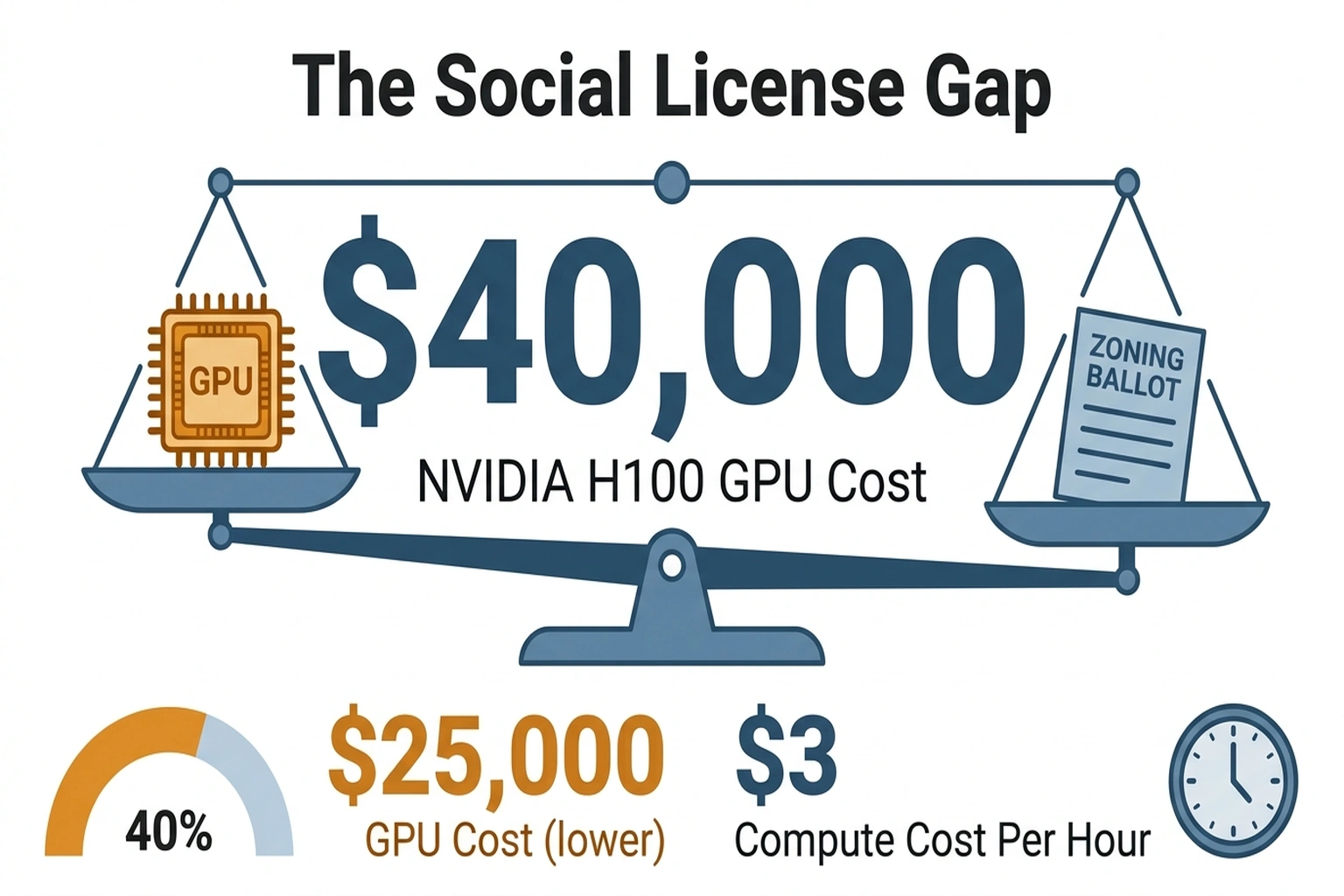

Data Center Frontier’s cancellation data and Sightline Climate’s delivery estimates reveal what this analysis calls the Social License Gap: the delta between capital committed to AI infrastructure and the community consent required to deploy it. Hyperscalers budgeted for gigawatts, GPUs, and power purchase agreements. No one budgeted for the one prerequisite that capital allocation cannot acquire: a zoning vote.

Each NVIDIA H100 GPU costs between $25,000 and $40,000; renting cloud compute runs at [roughly $3 per hour per instance](https://aws.amazon.com/ec2/pricing/on-demand/); and a single hyperscale campus houses thousands of these cards. Those unit economics create a brutal asymmetry: every month of operation compounds revenue into further capacity expansion, while every month of delay earns nothing. The hyperscalers’ own financial logic (build fast, monetize immediately) is precisely what makes a single zoning vote a billion-dollar risk event.
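The asymmetry can be made concrete with the paragraph's own unit prices. The sketch below is illustrative only and rests on labeled assumptions that are not published hyperscaler figures: one rented instance is treated as one GPU billed at $3 per hour, utilization is 100%, a month is 720 hours, and the campus holds a hypothetical 10,000 GPUs.

```python
# Illustrative unit economics of delay. Every parameter here is a labeled assumption.
HOURLY_RATE_USD = 3.0      # assumption: $3/hour, treated as one GPU per instance
HOURS_PER_MONTH = 24 * 30  # assumption: 720-hour month at 100% utilization
CAMPUS_GPUS = 10_000       # hypothetical campus size ("thousands of these cards")


def monthly_revenue_per_gpu(rate: float = HOURLY_RATE_USD,
                            hours: int = HOURS_PER_MONTH) -> float:
    """Rental revenue one fully utilized GPU could earn in a month."""
    return rate * hours


def payback_months(gpu_cost: float) -> float:
    """Months of full utilization needed to recover the card's purchase price."""
    return gpu_cost / monthly_revenue_per_gpu()


def revenue_forgone(months_delayed: int, gpus: int = CAMPUS_GPUS) -> float:
    """Revenue that never materializes while the capacity cannot come online."""
    return months_delayed * gpus * monthly_revenue_per_gpu()


for cost in (25_000, 40_000):  # the H100 price range cited in the text
    print(f"${cost:,} card pays back in {payback_months(cost):.1f} months")
print(f"12-month delay forgoes ${revenue_forgone(12) / 1e6:.0f}M")
```

Under these assumptions a card pays for itself in roughly one to one and a half years, so a delay of similar length erases roughly a full payback cycle; lower utilization or lower rental rates would stretch every figure.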

No return matters if the project never opens.

Speed separates this from ordinary NIMBYism. Standard infrastructure opposition follows a negotiation arc: protests, concessions, compromise. Data center resistance is skipping the arc entirely. When San Marcos calculated the electrical load a facility would demand, no concession made the numbers work for residents. Electrical math was binary, and the council voted accordingly.

Communities are not negotiating for better terms.

They are questioning the premise. So far, the industry’s answer has rested on demand projections that not even Goldman Sachs can verify.

The Demand Nobody Can Prove

One narrative underpins every project: these data centers power the AI revolution, and the revolution justifies the cost. That narrative now runs into a problem the industry prefers not to quantify. Twenty-six canceled projects suggest communities have already quantified it and concluded that the revolution’s returns accrue to shareholders, not to the towns absorbing its resource costs.

“We still do not find a meaningful relationship between productivity and AI adoption at the economy-wide level,” wrote Ronnie Walker, senior U.S. economist at Goldman Sachs, in a March 2026 research note analyzing fourth-quarter earnings. Walker was summarizing a quarter in which a record 70% of S&P 500 management teams [discussed AI on earnings calls](https://fortune.com/2026/03/03/goldman-earnings-ai-anxiety-no-meaningful-impact-productivity-economy-30-percent-in-2-areas/), yet fewer than half offered hard productivity numbers. “The dramatic change in recent weeks in the narrative in markets from ‘The economy is strong’ to ‘We are all becoming unemployed’ is truly remarkable,” observed Torsten Slok, Chief Economist at Apollo Global Management (Fortune). The boardroom is convinced. The data is not.

Macro data reveals no signal. The micro data is worse.

Mercor’s APEX evaluation, the most extensive AI agent benchmark to date, tested 33 professional scenarios across investment banking, consulting, and corporate law and found that leading AI models fail more than 75% of complex workplace tasks. The best-performing model achieved 24% accuracy. These are the agentic workloads builders are constructing data centers to serve.

Mercor’s APEX evaluation is the sharpest data point critics have, but it deserves scrutiny. Read alongside Goldman’s finding of no meaningful productivity signal, it looks damning. That reading overlooks how APEX was built: it tested AI agents against scenarios modeled on existing human workflows, tasks structured around human cognitive strengths and professional conventions. Measuring AI against workflows designed for human cognition resembles benchmarking early automobiles on horse trails. The more relevant question, whether AI reshapes the workflows themselves, is one that neither APEX nor Goldman’s macro lens captures.

Missing evidence extends beyond software agents. According to Arise Networks’ State of Clinical AI Report 2026, ninety-five percent of FDA-cleared medical AI devices have never reported a single patient outcome, despite operating in a market the same report estimates at $70 billion. The most heavily regulated sector deploying AI has no systematic way to show whether its products actually improve patient outcomes.

Run that figure past any city council being asked to sacrifice its water table for a data center. Walker’s Goldman analysis is not a contrarian take. The evidence suggests it reflects the consensus of the datasets cited here that measure outcomes rather than intentions, and zoning boards, unlike boardrooms, vote on outcomes.

“Totally fake” is not a rebuttal. It is a confession that the evidence was never collected. That leaves the industry with one remaining defense: that the technology is improving so fast the question will answer itself.

The Efficiency Defense

Santa Clara holds the strongest counterargument. NVIDIA’s Nemotron 3 Super delivers roughly 10% higher throughput per GPU, and higher intelligence, than comparable open-source models at identical computational cost, and that is a single generation. Compound a 10% gain across three or four releases, and the same rack of GPUs serves roughly 1.3 to 1.5 times today’s workload on the same energy draw; larger per-release gains would compound faster. If the trajectory holds, today’s capacity fears may look quaint in three years.
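The compounding claim is easy to check. A minimal sketch, assuming each future release repeats the same ~10% per-GPU throughput gain; that is an assumption for illustration, since actual gains per release may be larger or smaller:

```python
# Compound a fixed per-generation throughput gain across several releases.
def compounded_throughput(gain_per_gen: float, generations: int) -> float:
    """Throughput multiple relative to today after `generations` releases."""
    return (1 + gain_per_gen) ** generations


for gens in (1, 2, 3, 4):
    print(f"{gens} release(s): {compounded_throughput(0.10, gens):.2f}x")
```

At 10% per release, three or four generations yield roughly 1.33x to 1.46x; reaching genuine multiples of today's workload would take much larger gains per generation.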

The catch: efficiency gains in computing have historically triggered more consumption, not less. This is Jevons paradox applied to silicon. More efficient models enable applications that were previously uneconomical, spawning workloads that devour the capacity the efficiency freed up. Hyperscalers know this. It is why they are still building.
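Jevons paradox has a compact textbook form. The sketch below assumes a stylized constant-elasticity demand curve in which the price of a unit of AI work falls in proportion to efficiency; the elasticity values are hypothetical, not measured for AI workloads. Under that model, total energy scales as efficiency raised to (elasticity − 1), so efficiency gains raise total consumption whenever demand elasticity exceeds 1.

```python
# Stylized Jevons-paradox model (textbook constant-elasticity form, illustrative only).
def energy_use(efficiency: float, elasticity: float, scale: float = 1.0) -> float:
    """Total energy consumed for AI work under constant-elasticity demand.

    Assumes the price of one unit of work is proportional to 1 / efficiency,
    so demand Q = scale * efficiency ** elasticity and energy E = Q / efficiency.
    """
    demand = scale * efficiency ** elasticity
    return demand / efficiency


for elasticity in (0.5, 1.0, 1.5):  # hypothetical demand elasticities
    before = energy_use(1.0, elasticity)
    after = energy_use(2.0, elasticity)  # efficiency doubles
    print(f"elasticity {elasticity}: energy x{after / before:.2f}")
```

Doubling efficiency cuts energy use to about 0.71x when demand is inelastic (0.5), leaves it flat at exactly 1, and raises it to about 1.41x when demand is elastic (1.5). The paradox holds only in that last regime.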

NVIDIA’s own efficiency gains simultaneously argue against the “demand will moderate” thesis and confirm the permanent-expansion logic that fuels community resistance. The industry’s best technical defense of its infrastructure pipeline also validates the communities’ fear that this buildup has no natural endpoint.

Residents hearing “the buildings will get more efficient” correctly translate it as “but they will never stop building.”

That means the next zoning board will price indefinite expansion into its vote, and the carrying cost of that calculus lands squarely on the hyperscaler’s balance sheet.

The Price of Building Without Asking

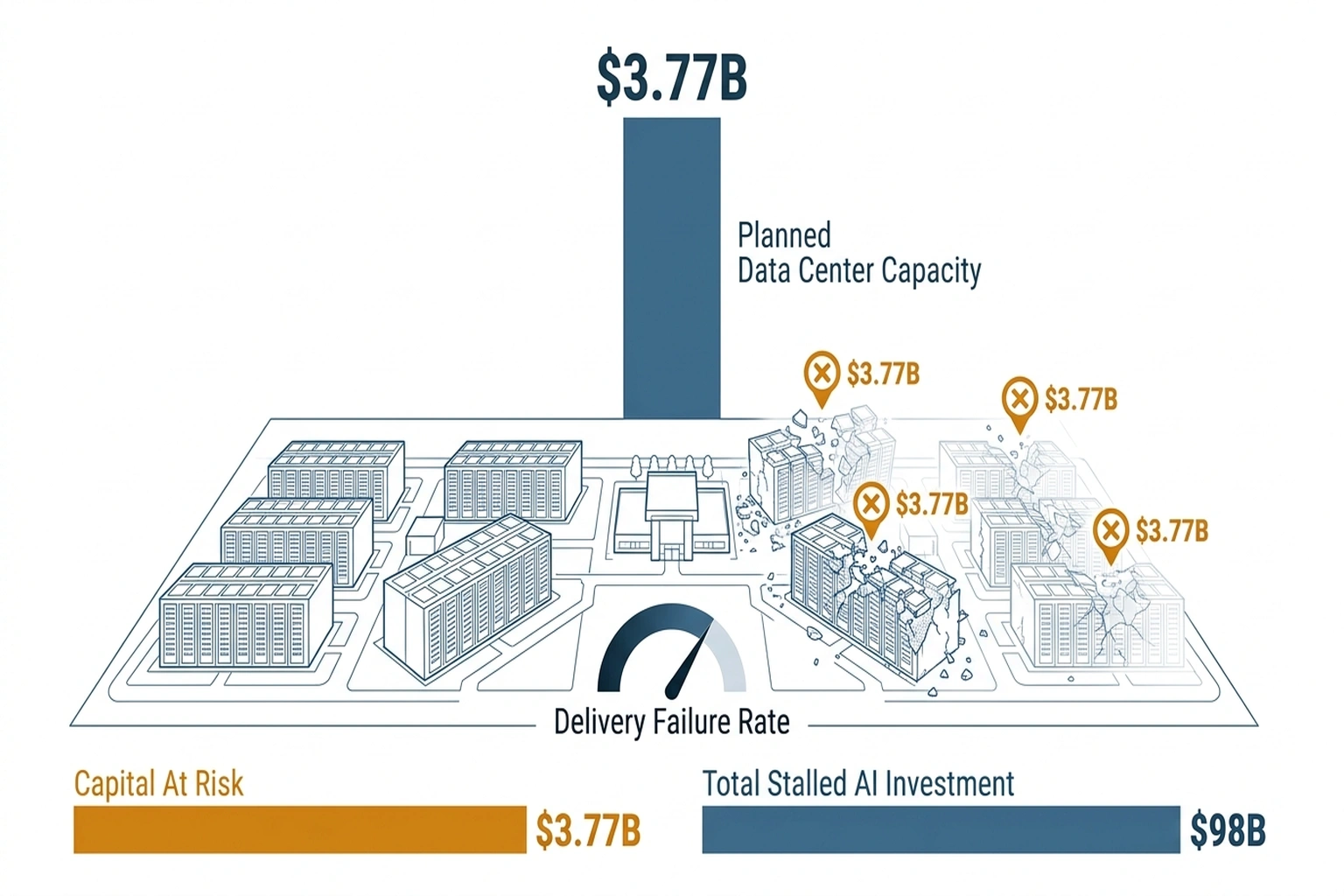

By this analysis’s calculation, spreading $98 billion in stalled investment across twenty-six canceled projects puts an average of $3.77 billion in planned capital deployment behind each community veto. No source has published that figure, and it dwarfs any conceivable community engagement budget.
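The per-veto figure is a mean. A minimal sketch, assuming all $98 billion is attributed evenly to the twenty-six December-January cancellations; counting the October cancellation as a twenty-seventh project shows how sensitive the average is to that choice:

```python
# Average stalled capital per canceled project (the text's per-veto figure).
STALLED_USD = 98e9      # stalled investment cited in the text
CANCELED_PROJECTS = 26  # cancellations across December and January


def average_per_project(stalled_usd: float, projects: int) -> float:
    """Mean stalled capital per canceled project, assuming an even split."""
    return stalled_usd / projects


for count in (CANCELED_PROJECTS, CANCELED_PROJECTS + 1):  # +1 adds October's cancellation
    avg = average_per_project(STALLED_USD, count)
    print(f"{count} projects: ${avg / 1e9:.2f}B per canceled project")
```

The mean moves from about $3.77 billion to about $3.63 billion, and it hides the spread between small and hyperscale projects, which the cited data does not break out.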

Consider a hypothetical hyperscaler with $20 billion in planned 2026 data center capacity. Sightline Climate’s 30–50% delivery-shortfall estimate puts $6–10 billion of that plan at risk. At an 8% [cost of capital](https://www.federalreserve.gov/releases/h15/), every year that at-risk capital sits idle costs $480–800 million in carrying costs alone, before counting lost revenue from delayed AI services; an 18-month delay pushes the total to roughly $720 million–$1.2 billion.
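A minimal sketch of that arithmetic, assuming simple (non-compounding) interest at the text's 8% rate on the hypothetical $20 billion plan. It reproduces the $480–800 million range as one year of carrying cost on the full $6–10 billion at risk, and shows how an 18-month delay scales it:

```python
# Carrying cost of at-risk capital: simple (non-compounding) annual interest.
PLANNED_USD = 20e9      # hypothetical hyperscaler's 2026 data center plan
COST_OF_CAPITAL = 0.08  # 8% annual cost of capital, as assumed in the text


def carrying_cost(at_risk_usd: float, years: float,
                  rate: float = COST_OF_CAPITAL) -> float:
    """Financing cost of capital that sits idle, using simple interest."""
    return at_risk_usd * rate * years


for shortfall in (0.30, 0.50):  # Sightline Climate's delivery-shortfall range
    at_risk = PLANNED_USD * shortfall
    one_year = carrying_cost(at_risk, 1.0)
    eighteen_months = carrying_cost(at_risk, 1.5)
    print(f"{shortfall:.0%} at risk (${at_risk / 1e9:.0f}B): "
          f"${one_year / 1e6:.0f}M per year, "
          f"${eighteen_months / 1e6:.0f}M over 18 months")
```

Simple interest keeps the sketch transparent; compounding, or a higher cost of capital, would push every figure up.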

A hyperscaler in that position that treats community engagement as a communications afterthought rather than a capital priority burns roughly $500 million to $800 million per year in carrying costs on stalled projects. That figure does not include a single dollar of lost AI service revenue. Do nothing, and the math does itself.

Run those numbers past a CFO. Then run them again.

The pattern echoes what emerged when Anthropic partnered with Rwanda to build AI capacity: infrastructure built with local stakeholders ships. Infrastructure built around them becomes litigation. The companies now co-investing in local grid upgrades, guaranteeing water-neutral operations, and structuring municipal revenue sharing are breaking ground while competitors compile legal filings.

Stakeholder calculus is already splitting:

- For hyperscaler executives, this zoning resistance has repriced community engagement from a communications function to a capital expenditure. Every dollar not spent on engagement is a bet that the zoning board will say yes anyway , a bet that failed twenty-six times in two months.

- For municipal leaders, every AI data center vote now sets precedent. San Marcos’s decision will appear in council briefing packets across every mid-size American city facing a similar proposal this year.

- For infrastructure investors, any project timeline without a social license risk assessment is fiction. Sightline’s 30–50% figure is a portfolio-level repricing event, and the first firms to internalize it will hold the same advantage that early failure-proofing of AI projects gives firms over those that learned the $7.2 million lesson late.

This analysis calculated the $1.09 billion daily stall rate, the $3.77 billion per-veto figure, and the $480–800 million carrying-cost estimate independently. Sightline Climate’s 30–50% delivery shortfall is a forward-looking projection; actual outcomes depend on regulatory and market responses through 2027. This analysis weights Data Center Frontier’s aggregation of cancellation events and Sightline Climate’s delivery projections heavily; both rely on industry self-reporting, and independent verification would require project-level disclosure that no hyperscaler currently provides.

Altman called the concerns “totally fake.” San Marcos officials did not bother to debate the point. By Q4 2026, this analysis expects every major hyperscaler either to have built a community engagement apparatus larger than its government affairs office or to have watched public resistance to AI infrastructure add billions in stalled assets to the write-down column. In Memphis, unauthorized turbines still hum behind the fence line. Families breathing that exhaust are not waiting for a CEO to tell them whether their concerns are real.


References

- Community Opposition Emerges as New Gatekeeper for AI Data Center Expansion. Data Center Frontier, citing Sightline Climate and Data Center Watch research on $98B in stalled projects and a 30–50% delivery shortfall.

- AI Data Centers’ Insatiable Energy Demands. The Atlantic investigation of xAI’s Memphis Colossus facility, its unauthorized turbines, and electricity consumption equivalent to 200,000 homes.

- OpenAI’s Sam Altman Blasts AI Concerns Around Water Usage as “Fake”. New York Post, February 2026.

- Goldman Finds “No Meaningful Relationship” Between AI and Productivity. Fortune analysis of Goldman Sachs Q4 research by senior economist Ronnie Walker.

- AI Agents Benchmark 2026: Why APEX Test Shows 75% Failure Rate. Mercor’s APEX evaluation testing AI agents across 33 professional scenarios.

- 95% of FDA-Cleared Medical AI Devices Have Never Reported a Patient Outcome. Arise Networks’ State of Clinical AI Report 2026 on medical AI’s outcome-reporting vacuum.

- NVIDIA Nemotron 3 Super: The New Leader in Open, Efficient Intelligence. Artificial Analysis benchmarks on ~10% throughput gain and higher intelligence per GPU.

- Helios Real-Time Video Generation on a Single GPU. Apatero analysis of single-GPU AI workloads on H100 hardware.

- Selected Interest Rates (H.15). Federal Reserve Statistical Release, providing cost-of-capital benchmark data.

- Amazon EC2 On-Demand Pricing. Amazon Web Services official documentation for cloud compute pricing.