10 min read · 2,438 words

Part 2 of 7 in the The Cost of AI series.

A 5,000-token coding problem became a case study in AI reasoning costs , a 5x cost difference generated entirely behind the API wall , when submitted to the same GPT-5 endpoint at two reasoning levels. At minimal reasoning, the API produced roughly 2,000 output tokens for approximately $20 in total cost. At high reasoning, it burned through 10,000 invisible thinking tokens before producing the same answer , and the bill arrived at roughly $120. Same model, same prompt, same visible output.

Developer Simon Willison, who documented this opacity during GPT-5’s preview period, described the result as “an absurd choice: pay for thinking they can’t see or turn reasoning down and lose accuracy”. That absurdity has since scaled industry-wide. Three frontier labs shipped configurable reasoning controls in the past six months, multi-agent deployments grew 327% in under four months on Databricks alone, and AI reasoning costs are compounding across enterprise pipelines in ways vendor pricing pages never surface.

Three Models, Three Reasoning Dials

Mistral Small 4, released in March 2026, collapses four separate models , Magistral, Devstral, Pixtral, and Mistral Small , into a single 119-billion-parameter mixture-of-experts architecture with 6 billion active per token. A new reasoning_effort parameter governs output depth: set to "none", responses match the lightweight chat of Mistral Small 3.2; set to "high", the model produces verbose step-by-step analysis previously exclusive to the dedicated Magistral reasoning model. Mistral reports 40% lower latency and 3x higher throughput at the fast setting(https://mistral.ai/news/mistral-small-4). Token volume at the reasoning end remains unquantified.

Alibaba’s Qwen3 follows a different architectural approach. Its hybrid thinking mode toggles between /think and /no_think commands mid-conversation, with performance that “scales in direct correlation with the computational reasoning budget allocated”. For the flagship 235B-A22B variant , 22 billion of 235 billion parameters active per token , total cost depends entirely on how many reasoning tokens the model generates per query. A complex mathematics problem can consume a thinking allocation that dwarfs the final answer by 15:1 or more(https://qwenlm.github.io/blog/qwen3/).

GPT-5.4 mini scores 54.4% on SWE-Bench Pro at $0.75 per million input tokens. That marks a 19% relative improvement over GPT-5 mini’s 45.7%. Its sibling GPT-5.4 nano drops cost further to $0.20 per million input tokens for lighter workloads. Across the OpenAI family, reasoning tokens bill as output tokens at the standard rate, and volume scales non-linearly with depth. As with Mistral and Qwen, the pricing page advertises the per-token rate but not the per-query token generation.

Every major provider has converged on reasoning as a tunable parameter rather than a model choice , a shift Sebastian Raschka documented as nine distinct inference-scaling techniques now deployed across the industry. Convergence creates a cost category that sits outside traditional API budgeting , one where the unit price holds steady while unit volume is governed by a configuration that many engineering teams never explicitly set.

What the Price-Per-Token Metric Hides About AI Reasoning Costs

Marc Kean Paker, founder of benchmarking platform OpenMark, identified the core mechanism: a standard model returns 200 tokens for a given prompt, while a reasoning model returns 3,000 thinking tokens plus the same 200-token answer. Per-token price stays identical. Token volume does not. Paker documented enterprises spending $10,000 per month on API calls when $2,000 would produce equivalent output quality, simply because reasoning was left at default settings across production pipelines.

Mapping these multipliers against current pricing data reveals a consistent pattern:

| Model | Input ($/M tokens) | Output ($/M tokens) | Reasoning Factor |

|---|---|---|---|

| GPT-5 (high reasoning) | $1.25 | $10.00 | ~5x |

| GPT-5.4 mini | $0.75 | $4.50 | Not disclosed |

| GPT-5.4 nano | $0.20 | $1.25 | Not disclosed |

| Claude Sonnet 4.5 | $3.00 | $15.00 | Variable (extended thinking) |

| Cohere Command A | $2.50 | $10.00 | Non-reasoning baseline |

| Mistral Small 4 | Open-source | Apache 2.0 | Configurable |

| Recommendation | Route by query complexity | Budget ≥3x standard for reasoning queries | Monitor per agent, not per token |

GPT-5 via Implicator analysis; GPT-5.4 via Adam Holter; Claude via Airbyte; Cohere via Artificial Analysis; Mistral is self-hosted under Apache 2.0.

Reading the table vertically exposes the real variable: not the input or output rate, but how many output tokens a single query generates. CloudZero’s inference cost analysis confirms this pattern, finding that the true cost of a resolved AI task is often 10 to 50 times higher than the posted per-call price once token volume is factored in. Cohere Command A is expensive by the pricing page but predictable in volume as a non-reasoning model. GPT-5 appears cheaper per token until reasoning activates and output quintuples. The evidence suggests tokens-per-query , the figure on no vendor pricing page , is the actual cost driver.

Twelve months ago, reasoning lived in separate model families. OpenAI maintained o1 and o3 as dedicated reasoning products. Mistral sold Magistral apart from Small. Enterprises chose a model for reasoning or for speed, never both on the same endpoint. Reasoning as a tunable parameter is a Q1 2026 development, and billing infrastructure has not caught up to the configurability.

Where Agents Compound the Bill

Most analysis of reasoning costs treats the problem as per-query: select the right reasoning level, pay accordingly. Dael Williamson, Databricks EMEA CTO, observed that “AI agents already run critical enterprise parts, but organizations gaining real value treat governance and evaluation as foundations, not afterthoughts”. With multi-agent adoption surging, each agent in a pipeline runs its own reasoning loop , and costs compound multiplicatively, not additively.

Naveen Mathews Renji at Stevens Institute of Technology measured the scale of this compounding. An unconstrained agent solving software engineering problems costs $5 to $8 per task. A Reflexion loop running 10 cycles , a standard pattern for self-correcting agents , consumes approximately 50x the tokens of a single linear pass. Renji coined the term “Unreliability Tax” for this phenomenon: agents reason harder when tasks are hardest, and cost predictability inverts precisely when budgets need it most.

Standard reasoning-cost analysis focuses on the per-query multiplier , 5x, manageable, seemingly configurable. But teams running three-agent pipelines where each agent reasons independently encounter something structurally different , what the combined data from Implicator, Stevens, and Databricks suggests is a “Reasoning Tax Spiral.” Towards Data Science quantified this effect: a four-agent document analysis workflow consumes 3.5x the tokens of a single-agent implementation before accounting for retries, as each agent’s reasoning tokens inflate the input context for subsequent agents. An organization monitoring per-token price sees stability; an organization monitoring per-pipeline cost sees an exponential curve. The parameter designed to provide control becomes the mechanism through which costs escape it.

The $492,750 Bill for Doing Nothing

Using Anthropic’s published rate of $3 input / $15 output per million tokens for Claude Sonnet 4.5, the Reasoning Tax Spiral produces a calculation no vendor provides.

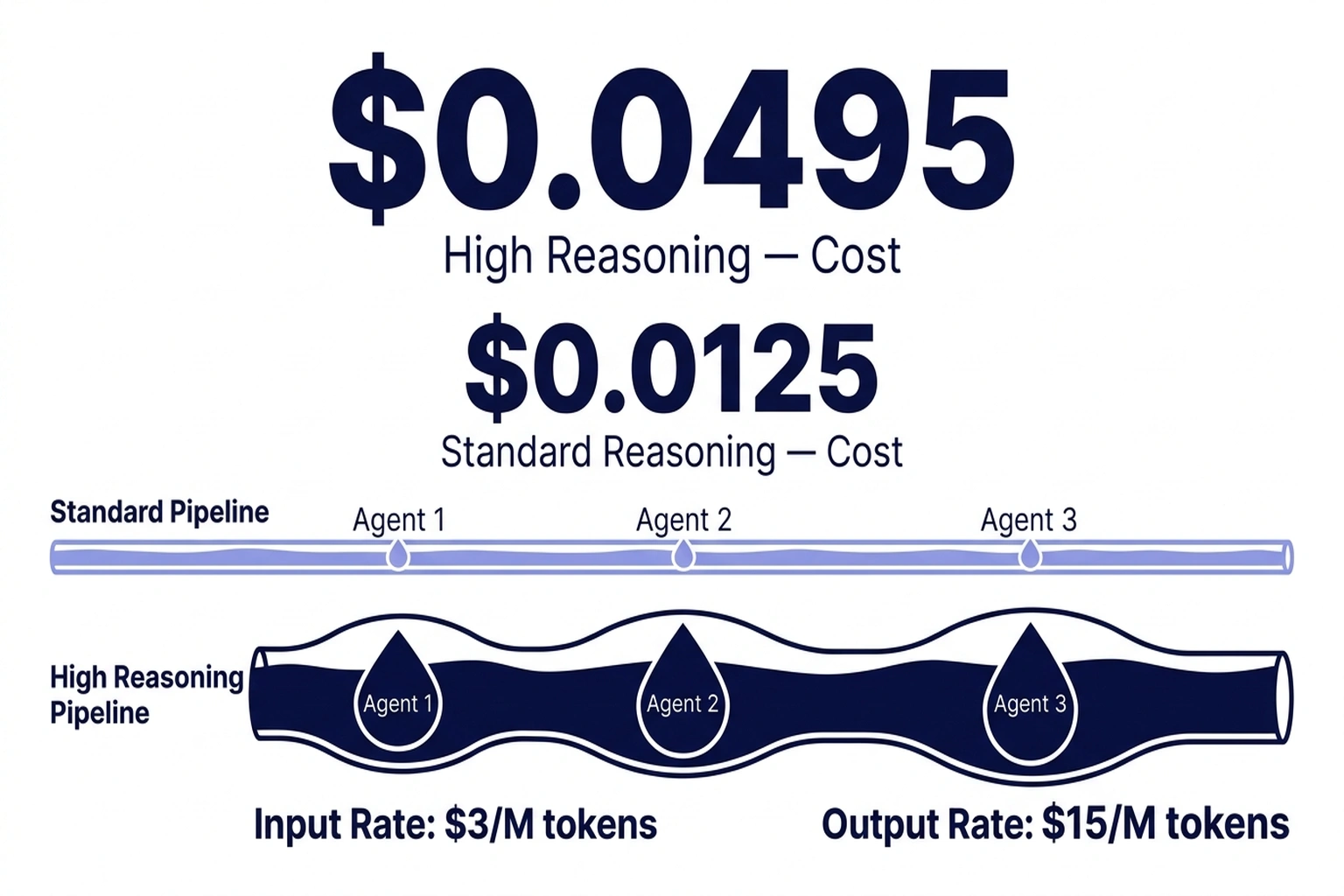

A single agent at standard reasoning , 500 input tokens, 200 output tokens , costs $0.0045 per query. At high reasoning , 500 input, 3,200 output (3,000 thinking plus 200 answer) , the same agent costs $0.0495, following the standard token billing formula: (input_tokens/1M × input_price) + (output_tokens/1M × output_price). An 11x increase from token volume alone, with no change to the published per-token rate(https://www.silicondata.com/blog/llm-cost-per-token).

Scale to a three-agent pipeline where each agent reasons at high depth and previous answers cascade into subsequent inputs:

- Agent 1: $0.0495

- Agent 2 (700 input + 3,200 output): $0.0501

- Agent 3 (900 input + 3,200 output): $0.0507

- Pipeline total: $0.1503 per query

At standard reasoning, the identical pipeline costs $0.0153 per query , nearly 10x less. Processing 10,000 daily queries, the annual gap reaches $492,750 , a figure no vendor pricing calculator surfaces and one derived from the calculations in this analysis rather than any single external source.

Calculations above use Anthropic’s published rates with a standard 500-token input prompt and a 365-day calendar year. Actual costs vary by model, provider, caching configuration, and prompt complexity. Pricing data relies on published API rates and third-party cost reports; independent verification of reasoning token volumes would require API-level telemetry that providers do not currently expose.

For teams doing nothing , leaving reasoning at default across all agents , that $492,750 in annual overhead equals four senior engineering salaries(https://www.bls.gov/ooh/computer-and-information-technology/software-developers.htm) consumed by invisible token spend. Previously published analysis of how JSON structure alone triples LLM output costs through structural token overhead underscores a compounding effect: stacking reasoning tokens on top of format overhead creates dual waste that neither cost category captures independently.

Beyond direct cost, reasoning token accumulation creates a quality ceiling. Analysis shows that model accuracy drops from 93% to 78.3% as context reaches 1 million tokens, a phenomenon called context rot. In multi-agent pipelines where reasoning tokens expand every agent’s input window, the additional tokens may not only cost more but actively degrade the accuracy they were generated to improve.

Performance Returns That Justify the Premium

A strong counterargument exists, supported by production data. Symphonize’s enterprise cost analysis found a legal RAG agent with deep reasoning generates $5.2 million in additional revenue on a $196,000 build cost. That represents a 26x return(https://www.symphonize.com/tech-blogs/costs-of-building-ai-agents-what-decision-makers-need-to-know). Customer service chatbots with reasoning enabled save $480,000 on a $110,000 investment. That translates to a 4.3x ROI in year one(https://www.symphonize.com/tech-blogs/costs-of-building-ai-agents-what-decision-makers-need-to-know). GPT-5.4 mini’s 19% accuracy improvement on SWE-Bench Pro represents quality gains that reduce downstream error correction costs, often making the reasoning premium net-positive on high-stakes queries.

Where this argument breaks down is in application scope. LeanLM’s enterprise cost analysis found that organizations overspend 50–90% on inference by defaulting to frontier models for tasks that produce identical results on cheaper models , classification, extraction, and summarization that make up the bulk of enterprise API calls. OpenAI’s own guidance acknowledges that chain-of-thought reasoning is overhead for routine tasks, where standard models answer more efficiently. Applying maximum reasoning uniformly is, this analysis argues, the cost equivalent of shipping every package overnight: reliable, but irrational when the majority of shipments arrive at the same destination by ground. The available evidence suggests reasoning pays for itself selectively , on the fraction of queries where accuracy measurably improves , not universally.

Three Budget Controls That Work Now

Reasoning Routing

Reasoning routing delivers the highest immediate return. Mistral Small 4’s reasoning_effort parameter and Qwen3’s /think toggle exist for this purpose: baseline queries at minimal reasoning, deep analysis reserved for complexity-flagged inputs. Even coarse routing that separates classification and lookup queries from open-ended analysis addresses the majority of unnecessary reasoning spend without measurable accuracy loss on routine tasks.

Per-Agent Cost Attribution

Per-agent cost attribution is the second priority. Most teams track aggregate API spend without isolating costs by pipeline stage. An observability stack built on OpenTelemetry’s GenAI semantic conventions can trace reasoning token allocation per agent per query, exposing which stages generate disproportionate AI reasoning costs and which maintain flat spend regardless of depth. Without this attribution, the $492,750 gap is invisible until the quarterly bill arrives.

Multi-Model Routing

Multi-model routing completes the strategy. GPT-5.4 nano at $0.20 per million input tokens handles classification and retrieval at a fraction of reasoning model costs. Prompt caching provides additional savings , Anthropic’s implementation delivers 90% cost reduction on repeated context at scale, directly offsetting reasoning overhead in pipelines with shared prompt templates. Reserving reasoning-depth models for queries where step-by-step analysis demonstrably improves accuracy captures the performance premium without applying the 5x multiplier across every API call.

For CFOs, the action is demanding per-pipeline cost reporting rather than per-API aggregates. For engineering leads, reasoning routing is a Q2 project with immediate ROI. For ML architects, the task is designing multi-model strategies that allocate reasoning depth by query complexity , treating reasoning as a tiered service, not a global setting.

FinOps Foundation data shows 98% of organizations now actively manage AI spend, up from 31% two years prior , making reasoning cost management a near-certain line item at enterprises deploying multi-agent systems by Q4 2026. Based on the 327% growth trajectory in multi-agent adoption and the compounding nature of reasoning costs within those pipelines, current trends suggest at least two major cloud providers will launch reasoning-specific billing dashboards before year-end. Willison’s $20-versus-$120 query was an early signal. At multi-agent scale, that 5x difference does not shrink , it compounds with every additional agent, every reasoning-heavy turn, every pipeline stage. The Reasoning Tax Spiral is a pricing structure operating exactly as designed, and whether enterprise finance teams learn the difference between $20 and $120 before the quarterly bill arrives , or after , is now a $492,750 question.

What to Read Next

- TurboQuant’s 6x Compression Creates More GPU Demand

- GPT-5.4 Mini vs Nano: Small Model Costs Hide a 33-Point Cliff

- Qwen 3.5 Benchmark Win Hides a 15th-Place User Verdict

References

- What GPT-5 Actually Costs: Breaking Down OpenAI’s Complex Pricing Structure , GPT-5 reasoning token pricing, 5x cost multiplier analysis, Simon Willison findings

- Mistral Small 4 Announcement , Reasoning_effort parameter, 119B MoE architecture, model consolidation details

- Qwen3 Blog , Hybrid thinking mode, /think toggle, scalable reasoning budget architecture

- GPT-5.4 Mini and Nano: Benchmarks, Pricing , SWE-Bench Pro scores, GPT-5.4 mini and nano token pricing

- The Price Per Million Tokens Is Lying to You , Token volume deception in reasoning models, enterprise overspend analysis

- Databricks Report: Rapid Rise of Multi-Agent AI Systems , 327% multi-agent growth, Dael Williamson commentary on governance

- The Hidden Economics of AI Agents , Per-task agent costs, Reflexion loop 50x token consumption, Unreliability Tax concept

- Best AI Agent Frameworks for 2026 , Anthropic Claude Sonnet 4.5 pricing, prompt caching cost reduction data

- Cohere Command A Model Analysis , Command A pricing benchmarks as non-reasoning baseline

- Costs of Building AI Agents , Enterprise AI agent ROI data, legal RAG and chatbot return analysis

- Categories of Inference-Time Scaling for Improved LLM Reasoning , Sebastian Raschka analysis of nine inference-scaling techniques across the industry

- Your Guide To Inference Cost , CloudZero analysis showing resolved AI task costs 10–50x posted per-call price

- The Multi-Agent Trap , Towards Data Science, multi-agent token compounding analysis (3.5x multiplier)

- Understanding LLM Cost Per Token , Standard token billing formula and cost calculation methodology

- LLM Cost Optimization: Why Enterprises Overspend , LeanLM analysis of 50–90% enterprise overspend on inference

- Using Reasoning for Routine Generation , OpenAI guidance on reasoning overhead for routine tasks

- State of FinOps 2026 , FinOps Foundation report, 98% of organizations now manage AI spend

- FinOps for AI Overview , FinOps Foundation working group on AI-specific cost management

- Software Developers: Occupational Outlook , U.S. Bureau of Labor Statistics, senior engineering salary benchmarks