Keith Ferrazzi’s research team had expected the usual suspects: bad data, wrong algorithms, budget shortfalls. After surveying more than 2,000 workers across the United States and Europe in fall 2025, their Harvard Business Review study surfaced a different culprit: eight in ten employees reported deep anxiety not about AI’s capabilities, but about what AI meant for their professional standing. That anxiety helps explain the AI project failure rate that now tops 80%.

Corporate AI investment hit $252.3 billion globally in 2024. RAND Corporation research puts the failure rate for AI projects at 80.3%, which the same analysis characterizes as roughly double the failure rate of non-AI technology projects. Multiply those two figures and roughly $202.6 billion of that annual spend produced no lasting business value. Neither RAND nor Stanford calculated that number, but based on the calculations in this analysis, it reframes AI adoption from a growth story into the most expensive category of failed corporate initiatives in modern business history. (https://www.pertamapartners.com/insights/ai-project-failure-statistics-2026)
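As a quick check on that multiplication, here is a minimal Python sketch. The variable names are illustrative, the inputs are simply the two figures quoted above, and it assumes the failure rate applies evenly to every dollar invested, which is a simplification rather than something either source claims.

```python
# Figures quoted in the text; variable names are illustrative.
investment_bn = 252.3   # global corporate AI investment in 2024, in $ billions
failure_rate = 0.803    # AI project failure rate attributed to RAND

# Assumption: the failure rate applies evenly across all dollars invested.
no_value_bn = investment_bn * failure_rate

print(f"Spend with no lasting value: ${no_value_bn:.1f} billion")
```

The product is an estimate only: project failure rates are counted per project, not per dollar, so the true figure could be higher or lower.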

The AI Project Failure Rate: When Half a Trillion Dollars Buys Abandoned Software

Twelve months ago, S&P Global Market Intelligence data showed that 17% of companies had walked away from most of their AI programs. By mid-2025, that abandonment rate had spiked to 42%, roughly a 2.5x jump in a single year. Enterprise generative AI spending surged from $11.5 billion to $37 billion during the same window. Companies spent faster while failing faster.

Each failure carries real weight. Large enterprises absorbed an average of $7.2 million per failed AI initiative in 2025, with the typical firm abandoning 2.3 initiatives annually, for a calculated waste figure of $16.56 million per company. (https://www.pertamapartners.com/insights/ai-project-failure-statistics-2026) MIT’s NANDA research confirmed the severity: only 5% of generative AI pilot programs achieved rapid revenue acceleration, with the vast majority delivering no measurable bottom-line impact. “Pick one pain point, execute well, and partner smartly with companies who use their tools,” said lead author Aditya Challapally. Companies following that formula “have seen revenues jump from zero to $20 million in a year.”
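The per-company waste figure is a simple product of the two averages quoted above. The sketch below reproduces it and derives the monthly equivalent; the variable names are illustrative, and treating both averages as applying to the same "typical" firm is an assumption of this analysis.

```python
# Averages quoted in the text; variable names are illustrative.
cost_per_failure_m = 7.2   # average cost per failed initiative, in $ millions
abandoned_per_year = 2.3   # initiatives abandoned per firm per year

annual_waste_m = cost_per_failure_m * abandoned_per_year
monthly_waste_m = annual_waste_m / 12

print(f"Annual waste per firm:  ${annual_waste_m:.2f} million")
print(f"Monthly waste per firm: ${monthly_waste_m:.2f} million")
```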

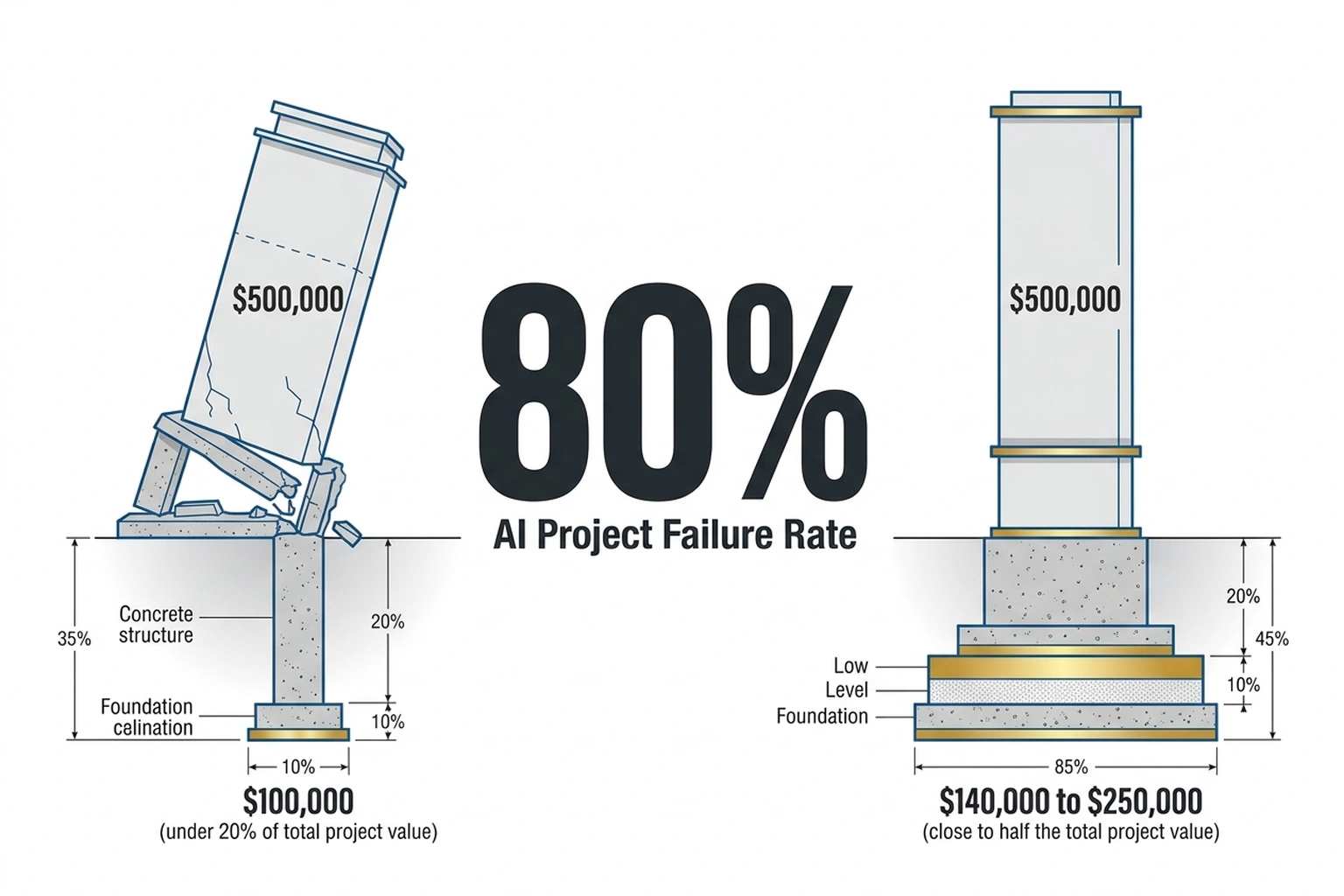

Mid-market firms and small businesses face the same pattern at smaller scale: a single abandoned $500,000 AI initiative represents three to five employees’ annual salaries spent building something nobody would use. The technology works in specific hands. But what separates the 5% that succeed isn’t what most leaders assume.

Everyone Blames the Wrong Line Item

CTO post-mortems consistently blame data pipelines, model accuracy, or integration complexity. Deloitte’s 2026 State of AI report exposes a structural gap underneath those explanations: only 20% of companies have mature governance models for their AI deployments, even as 42% of leaders describe their strategy as “highly prepared.” That 22-point confidence gap (executives who believe they’re ready while their organizations demonstrably aren’t) is the first diagnostic clue that the real barrier isn’t technical. (https://www.gao.gov/products/gao-24-106309)

Enterprise technology failures of this magnitude recall the ERP implementation wave of the late 1990s, when roughly 90% of implementations finished late or over budget and organizations discovered that buying SAP was the easy part; getting employees to change how they worked was the hard part. AI adoption is following the same arc, more than two decades later, at roughly ten times the cost. And the organizational symptom looks strikingly similar: not rejection of the new system, but passive non-adoption that quietly drains every initiative’s ROI.

In 2025, 76% of enterprise AI use cases came from vendors rather than internal builds, up from 53% the year before. That migration looks like a market preference, but this analysis argues it functions as an organizational confession: companies are outsourcing enterprise AI adoption because they cannot execute internally. When firms announced AI-driven layoffs during record revenue quarters, remaining employees’ relationship with AI calcified from skepticism into active resistance. Others circumvented official programs entirely, building shadow AI tools when the sanctioned versions never materialized.

The Angst-Adoption Paradox

Here is where the conventional narrative breaks. Ferrazzi, Eatough, Smith, and Waters didn’t just find that employees resist AI. Their research revealed that the employees who use AI the most are the most resistant to its organizational integration.

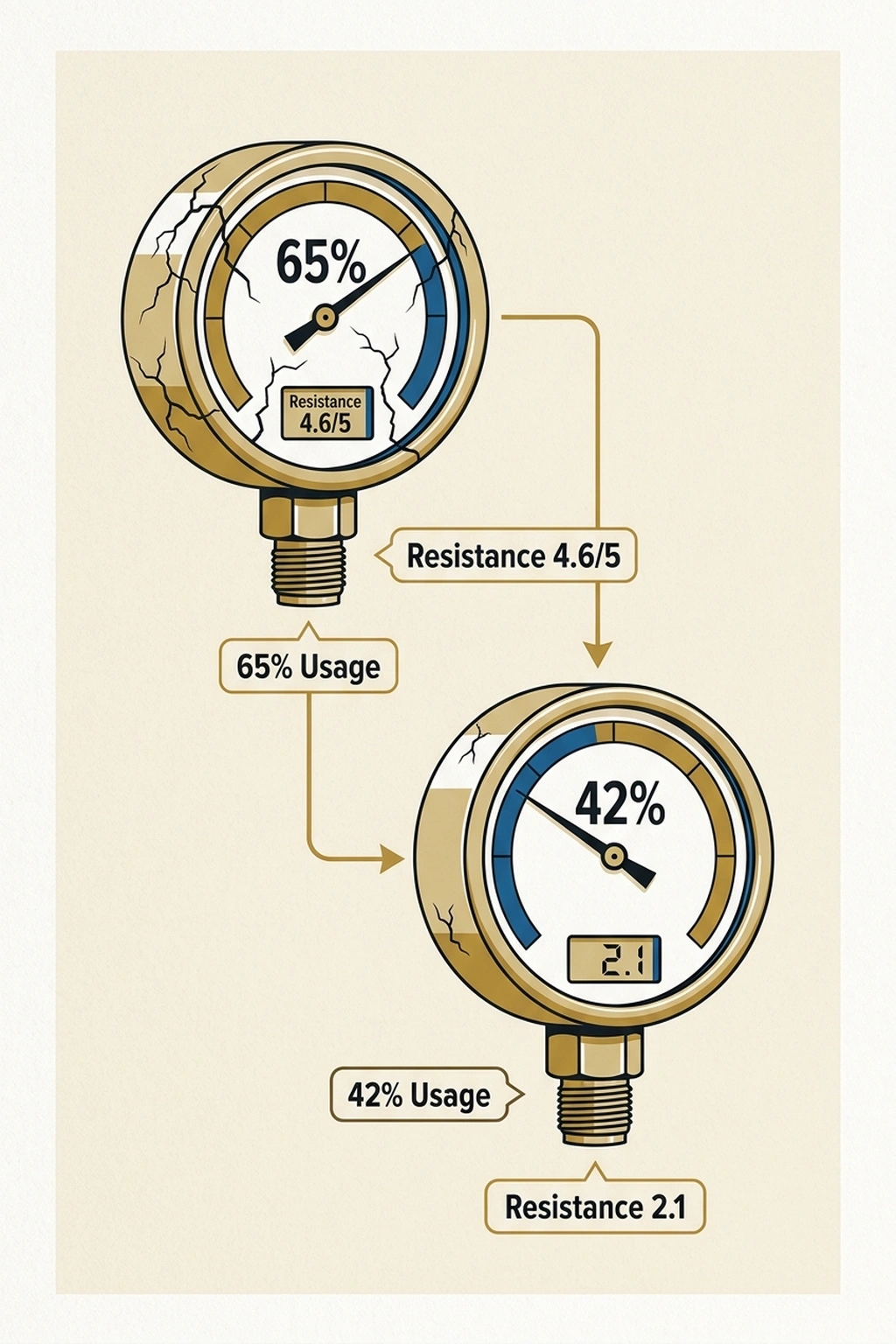

High-angst workers used AI for 65% of their job tasks, far more than the 42% usage rate among low-angst peers. Yet those same heavy users scored 4.6 out of 5 on resistance to organizational AI changes, compared to 2.1 for workers who felt secure. Sixty-five percent worried about “being replaced by someone who knows how to use AI better.” (https://hbr.org/2026/02/why-ai-adoption-stalls-according-to-industry-data) Sixty-one percent feared AI would make colleagues “think I don’t bring unique value.” (https://hbr.org/2026/02/why-ai-adoption-stalls-according-to-industry-data) Forty-four percent believed AI was “making them dumber,” a response from power users, not holdouts.

The evidence suggests the Angst-Adoption Paradox works as follows: employees adopt AI tools because they fear falling behind, then resist the organizational integration that would make those tools productive, because full integration threatens the professional identity that defines their worth. Usage goes up. Commitment stays flat. Projects report “adoption” while delivering no returns. Ferrazzi and co-authors coined the term “AI angst” to describe this pattern, and the data behind it explains why throwing money at better models hasn’t moved the needle.

The 80.3% failure rate for AI initiatives and the roughly 80% employee angst rate emerge from independent studies: one compiled by RAND across hundreds of deployments, the other surfaced by an HBR survey of 2,000 workers. That two unrelated research efforts converged on the same number isn’t statistical noise. The evidence suggests it is a signal the industry has been ignoring while debugging data pipelines.

Data Quality Deserves the Argument

Dismissing technical barriers would be dishonest. Informatica’s CDO Insights 2025 survey found that 43% of organizations named data quality and readiness as their top AI barrier, ahead of technical maturity and skills shortages. RAND data, as compiled by Pertama Partners, consistently shows that most AI projects encounter significant data quality issues, with data preparation consuming much of the average project timeline.

Bad foundations produce bad models, regardless of how psychologically prepared the workforce feels. Data-infrastructure advocates argue, with justification, that organizations with clean, well-governed data rarely face adoption problems. A World Economic Forum analysis supports this: 68% of AI-first organizations report mature data governance frameworks, compared with just 32% of other organizations, suggesting the causation runs from data quality to organizational success, not the reverse. The argument deserves respect because the data behind it is real.

But successful AI projects encounter those same data quality problems, and solve them. Successful projects, per compiled industry data, invest roughly two to three times more of their budget in organizational foundations (governance, change management, executive alignment) than failed ones. On a $7.2 million initiative, that investment gap exceeds $2 million. Success doesn’t stem from cleaner data at the outset. It stems from the organizational will to fix dirty data before building on top of it. Data quality is a genuine engineering challenge. Data quality as the root cause, this analysis argues, is a misdiagnosis: a symptom of the organizational dysfunction the Angst-Adoption Paradox creates.

These findings rely primarily on self-reported survey data from HBR, S&P Global, and Deloitte. Independent verification through longitudinal project-level tracking would strengthen the causal link between workforce angst and project failure. The correlation, however, is striking enough to warrant immediate organizational attention.

What the Winning 5% Actually Spend Money On

Three patterns separate successful AI projects from the more than 80% that fail, and none of them involves choosing a better model (Why Most Enterprise AI Projects Fail):

Organizational investment before engineering. According to the compiled research cited in this analysis, successful projects devote close to half their total budget to foundations (data governance, change management, and executive alignment), compared to under a fifth for failed ones. For a mid-size company running a $500,000 AI initiative, that means allocating $140,000 to $250,000 to people and process before writing a single line of model code.

Narrow scope with executive continuity. Per industry analyses, projects with sustained executive sponsorship succeed at substantially higher rates than those that lose C-suite attention within six months. MIT’s NANDA research finding that purchased solutions with vendor partnerships outperform internal builds reflects the same principle: narrower scope means fewer organizational fault lines to manage.

Identity-preserving role design. The HBR study categorized workers into four profiles: Visionary (roughly 40% of most workforces), Disruptor (30%), Endangered (20%), and Complacent (10%). Nearly a third of any workforce is simultaneously enthusiastic about and threatened by AI. Designing roles that position AI as an amplifier of existing expertise, rather than a replacement for it, directly addresses the angst that drives the Paradox.

Cost of inaction: a large enterprise running 10 AI initiatives at $7.2 million each with an 80% failure rate writes off $57.6 million annually in abandoned projects. Redirecting 25% of each project budget ($1.8 million) to organizational readiness (governance, change management, angst assessment) and reducing the failure rate to even 60% (still a majority of projects failing) saves $14.4 million per year. That saving is eight times the $1.8 million redirected on a single project, although the redirect across all ten projects totals $18 million of reallocated, not additional, spend.
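The scenario arithmetic can be checked with a short sketch. The function and variable names are hypothetical, the inputs are the figures from the scenario, and the assumption that a budget redirect moves the failure rate from 80% to 60% is the scenario's premise, not a measured result.

```python
def annual_writeoff_m(initiatives: int, cost_m: float, failure_rate: float) -> float:
    """Expected yearly write-off in $ millions for a portfolio of initiatives."""
    return initiatives * cost_m * failure_rate


INITIATIVES = 10
COST_M = 7.2            # cost per initiative, in $ millions
REDIRECT_SHARE = 0.25   # share of each budget moved to organizational readiness

baseline_m = annual_writeoff_m(INITIATIVES, COST_M, 0.80)
improved_m = annual_writeoff_m(INITIATIVES, COST_M, 0.60)  # assumed outcome
savings_m = baseline_m - improved_m

redirect_per_project_m = REDIRECT_SHARE * COST_M
redirect_total_m = redirect_per_project_m * INITIATIVES

print(f"Baseline write-off:   ${baseline_m:.1f}M")
print(f"Savings at 60%:       ${savings_m:.1f}M")
print(f"Redirect per project: ${redirect_per_project_m:.1f}M")
print(f"Redirect, all ten:    ${redirect_total_m:.1f}M")
print(f"Savings / per-project redirect: {savings_m / redirect_per_project_m:.1f}")
print(f"Savings / total redirect:       {savings_m / redirect_total_m:.1f}")
```

The last two lines show that any headline ratio depends on the denominator: the savings compared with one project's redirect, or with the redirect summed over the whole portfolio. Because the redirect is a reallocation inside existing budgets rather than new spending, neither ratio is a conventional return on investment.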

For CTOs, the data argues for reallocating 25-30% of AI project budgets from engineering to organizational readiness. For CHROs, measuring workforce AI angst is now a leading indicator of project survival. For CFOs, the $16.56 million annual waste figure belongs in the risk register, not the innovation slide deck.

Concretely, three actions available this week:

- Survey before selecting tools. Run a 10-question AI angst assessment across the team using the HBR study’s five-point scale. Categorize each member into one of four profiles: Visionary (high belief, low risk), Disruptor (high belief, high risk), Endangered (low belief, high risk), or Complacent (low belief, low risk). If Endangered workers exceed 30% of the team, invest in psychological safety before deploying new AI tools.

- Audit the budget split. Pull every active AI initiative’s budget and calculate the ratio of organizational investment (training, governance, change management) to technical investment (infrastructure, models, data engineering). If the organizational share is under 25%, redirect resources before the next quarter; the success-factor data is unambiguous on this point.

- Interview five frontline users about fears, not features. Ask what they worry AI will change about their role, their professional value, or their team dynamics. Record whether concerns cluster around competency (“AI makes me look less skilled”), identity (“AI threatens what makes me unique”), or security (“AI might replace me”). Each cluster requires a different organizational response, and the distribution predicts adoption failure more accurately than any technical readiness assessment.
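The first two actions above reduce to two small checks, sketched here in Python. Everything named in the code is hypothetical: the study's scoring rules are not reproduced in this article, so the cutoff of 3.0 on the five-point scale, the sample team, and the sample budget are assumptions for illustration. Only the 30% and 25% thresholds come from the text.

```python
from collections import Counter

CUTOFF = 3.0             # assumed midpoint of the five-point scale; not from the study
ENDANGERED_LIMIT = 0.30  # threshold quoted in the text
MIN_ORG_SHARE = 0.25     # threshold quoted in the text


def classify(belief: float, risk: float, cutoff: float = CUTOFF) -> str:
    """Map averaged belief and risk scores (1 to 5) to one of the four profiles."""
    high_belief = belief > cutoff
    high_risk = risk > cutoff
    if high_belief:
        return "Disruptor" if high_risk else "Visionary"
    return "Endangered" if high_risk else "Complacent"


def endangered_share(scores: list[tuple[float, float]]) -> float:
    """Fraction of respondents who fall into the Endangered profile."""
    counts = Counter(classify(belief, risk) for belief, risk in scores)
    return counts["Endangered"] / len(scores)


def organizational_share(org_spend: float, tech_spend: float) -> float:
    """Organizational spend as a fraction of the total initiative budget."""
    return org_spend / (org_spend + tech_spend)


# Hypothetical team of five: (belief, risk) averages per person.
team = [(4.5, 1.5), (4.2, 4.0), (2.0, 4.5), (1.5, 4.8), (2.0, 1.0)]
needs_safety_work = endangered_share(team) > ENDANGERED_LIMIT

# Hypothetical initiative: $100,000 organizational, $400,000 technical.
share = organizational_share(100_000, 400_000)
needs_rebalancing = share < MIN_ORG_SHARE

print(f"Endangered share: {endangered_share(team):.0%}, act first: {needs_safety_work}")
print(f"Organizational share: {share:.0%}, rebalance: {needs_rebalancing}")
```

A real assessment would need the study's actual questionnaire and scoring. The point of the sketch is only that both checks are cheap enough to run before any tool selection begins.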

At $16.56 million in annual AI waste for the average large enterprise (roughly $1.38 million per month), every month of ignoring workforce psychology costs more than an entire year’s organizational readiness program. A company doing nothing about AI angst is not saving money. It is spending $1.38 million per month in a way that strongly suggests the next initiative will join the failure pattern that now characterizes four out of five deployments.

The strongest counterargument is that the 80% failure statistic aggregates heterogeneous project types, and organizations with mature data infrastructure report significantly lower failure rates. Critics also note that many “failed” projects generated valuable institutional learning that later informed successful deployments, meaning the $7.2 million per-failure figure may overstate true economic loss by conflating sunk costs with zero-value outcomes.

Expect at least one major consulting firm, likely Deloitte or McKinsey, to add a dedicated “workforce psychological readiness” metric to its annual State of AI assessment within the next twelve months. That metric doesn’t exist in any standard enterprise survey today, and its absence helps explain why the $7.2 million average cost per failed initiative keeps compounding: the evidence suggests organizations are paying full price for initiatives they diagnose as technology problems while the actual fault line runs through their workforce. Every large enterprise abandoning 2.3 initiatives annually at $7.2 million each is not running an AI program. It is running a $16.56 million annual transfer from its balance sheet to vendors, consultants, and sunk infrastructure, with workforce angst as the silent authorizing signature on every check.

References

- Why AI Adoption Stalls, According to Industry Data. Ferrazzi, Eatough, Smith, and Waters’ survey of 2,000+ workers revealing the AI angst phenomenon and the usage paradox

- AI Project Failure Statistics 2026: The Complete Picture. Pertama Partners’ compilation of RAND, MIT Sloan, and Deloitte data on the 80.3% failure rate and $7.2M per-failure cost

- MIT Report: 95% of Generative AI Pilots at Companies Are Failing. MIT NANDA research on pilot-to-production failure rates and Aditya Challapally’s analysis of success patterns

- Why Most Enterprise AI Projects Fail - And the Patterns That Actually Work. S&P Global Market Intelligence data on the 42% abandonment rate and Informatica CDO Insights on data quality barriers

- The State of AI in the Enterprise 2026. Deloitte survey on governance maturity gaps and the strategic readiness disconnect

- 2025: The State of Generative AI in the Enterprise. Menlo Ventures data on the $37B enterprise GenAI market and the shift from build to buy

- Economy - The 2025 AI Index Report. Stanford HAI data on $252.3 billion global corporate AI investment in 2024

- ERP Failure In The 90s Vs. Today. Panorama Consulting analysis of 1990s ERP implementation failure rates and organizational change patterns

- Why Data Readiness Is a Strategic Imperative for Businesses. World Economic Forum data on data governance maturity gaps between AI-first and other organizations