Part 3 of 6 in the AI Agent Crisis series.

Part 4 of 6 in the Healthcare AI series.

February 4, 2026: Gravitee’s State of AI Agent Security report surfaced a number that should have halted every healthcare CISO mid-morning: 92.7% of healthcare organizations reported confirmed or suspected security incidents tied to AI agents in the preceding twelve months. Five weeks later, on March 12, Google’s Cloud Threat Horizons team confirmed the primary vector: 83% of cloud intrusions in H2 2025 began with identity compromise. Between those two dates, no governance framework for clinical AI systems shipped.

Healthcare now reports a higher AI agent incident rate than any other sector, exceeding the cross-industry average of 88% by nearly five points. What explains the gap isn’t deployment volume; it’s data sensitivity. Scheduling systems tied to patient identifiers, diagnostic tools reading electronic health records (EHRs), billing agents traversing insurance databases: every unmonitored agent touching these systems creates federal exposure under HIPAA (Health Insurance Portability and Accountability Act). Where a compromised agent in retail might leak purchase histories, a compromised clinical agent leaks Social Security numbers, diagnoses, and prescription records: data that cannot be rotated or reissued.

Gravitee’s survey of 900+ executives and technical practitioners paints a picture of an industry that deployed before it secured. Across all sectors, only 14.4% of AI agents go live with full security or IT approval. In healthcare, the exposure is more specific: 42.41% of deployed agents operate entirely without security monitoring: not reduced logging, not partial oversight, but zero visibility into what those agents access or transmit.

Those two numbers frame a governance collapse across the full deployment pipeline. If the cross-sector approval rate holds in healthcare, so that 14.4% of agents receive full security approval and 42.41% receive zero monitoring, the remaining 43.19% of clinical AI agents occupy a gray zone: partially reviewed, partially logged, fully trusted with patient data(https://www.elisity.com/blog/ai-agent-network-security-microsegmentation-2026)(https://www.gravitee.io/state-of-ai-agent-security). About one in seven agents gets proper vetting(https://www.elisity.com/blog/ai-agent-network-security-microsegmentation-2026). More than four in ten get none at all(https://www.gravitee.io/state-of-ai-agent-security). A similar share gets something in between that no one can consistently define(https://www.elisity.com/blog/ai-agent-network-security-microsegmentation-2026).

Jorge Ruiz, Gravitee’s Director of Product Marketing, described the risk in the report summary: “Shadow AI becomes a back door into the enterprise” when agents operate beyond security oversight. A hospital running 50 deployed AI agents at the sector’s unmonitored rate has approximately 21 agents accessing patient data with no audit trail(https://www.gravitee.io/state-of-ai-agent-security), an invisible risk surface that expands with every new deployment.
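The split described above is simple arithmetic, and a short sketch makes its assumptions visible. It treats the cross-sector 14.4% approval rate and healthcare's 42.41% unmonitored rate as non-overlapping shares of the same agent population, which is this article's framing rather than something either report states. The function and constant names are illustrative.

```python
# Shares taken from the figures quoted above (percent of deployed agents).
FULLY_APPROVED_PCT = 14.4   # cross-sector rate, ASSUMED to hold in healthcare
UNMONITORED_PCT = 42.41     # healthcare-specific rate


def gray_zone_pct(approved: float = FULLY_APPROVED_PCT,
                  unmonitored: float = UNMONITORED_PCT) -> float:
    """Share of agents that are neither fully approved nor fully unmonitored."""
    return round(100.0 - approved - unmonitored, 2)


def unmonitored_agents(fleet_size: int,
                       unmonitored: float = UNMONITORED_PCT) -> int:
    """Estimated number of agents in a fleet running with no monitoring."""
    return round(fleet_size * unmonitored / 100.0)


print(gray_zone_pct())         # 43.19
print(unmonitored_agents(50))  # 21
```

Swapping in an organization's own agent inventory for `fleet_size` gives a first-order count of agents to look for, not a measurement of how many actually exist.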

Monitoring gaps alone don’t explain a 93% incident rate. How these agents authenticate, and what access they inherit the moment they go live, reveals a structural failure no amount of logging can remedy.

Where 42% of Healthcare AI Agents Disappear

IBM’s 2025 Cost of a Data Breach report found that among organizations experiencing AI-related breaches, 97% lacked proper AI access controls. In healthcare, where agents routinely traverse EHR systems, billing databases, and insurance platforms, the absence of individual agent identities transforms every shared credential into a lateral movement opportunity. An agent authenticated with a shared key accesses patient records indistinguishably from every other agent using that key: no forensic trail, no individual attribution, no way to isolate a compromised entity after the fact.

Most healthcare CISOs operate under a threat model focused on external attackers: ransomware gangs, nation-state actors, credential-stuffing operations. Google’s Threat Horizons report confirmed these threats are real: North Korean state-sponsored group UNC4899 exploited overprivileged automated service accounts, the same type of standing access healthcare AI agents typically receive, to breach cloud environments.

This is where the conventional threat model breaks. Gravitee’s incident data and Google’s identity data, read together, do not describe an outside force penetrating healthcare defenses. They describe an attack surface being manufactured from within, one unmonitored agent deployment at a time. That 92.7% incident rate is not evidence that healthcare is under siege. It is evidence that healthcare organizations are building the corridors their adversaries will walk through, then leaving 42% of the doors unguarded.

Thomas Nuth, Head of Product Marketing at Tenable and contributor to the Cloud Security Alliance’s 2026 report, framed the shift directly: “You are managing a perimeter that has shifted from human users to a 100-to-1 ratio of machine and non-human identity counts.” In healthcare, where each AI agent may connect to scheduling, clinical, and billing systems simultaneously, that ratio means the majority of identities accessing patient data are now non-human, and the majority of those non-human identities were never individually credentialed.

What Gravitee’s sector data and Google’s identity compromise findings expose, taken together, constitutes a 42% Blind Zone: the share of clinical AI agents operating with access to protected health information and zero security oversight. Neither report named this gap. Both documented it.

$8.09 Million, and the Number Nobody Ran

IBM’s 2025 Cost of a Data Breach report placed the average healthcare data breach at $7.42 million, the highest of any industry for the fourteenth consecutive year, while breaches involving shadow AI added an average of $670,000 per incident. Combined: an AI-agent-involved healthcare breach costs an estimated $8.09 million(https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls). Neither IBM nor Gravitee produced this number, because neither connected healthcare’s sector-specific breach cost to the shadow AI exposure premium. For a hospital system where nearly half of deployed agents run without monitoring, a single agent-triggered breach could exceed the entire annual IT security budget.

Now apply that figure to the sector. The American Hospital Association counts roughly 6,100 hospitals in the United States(https://www.aha.org/statistics/fast-facts-us-hospitals). At a 92.7% incident rate, approximately 5,655 of them experienced a confirmed or suspected AI agent security incident in the past twelve months(https://www.gravitee.io/blog/state-of-ai-agent-security-2026-report-when-adoption-outpaces-control)(https://www.aha.org/statistics/fast-facts-us-hospitals). If even 5% of those incidents escalated to a reportable breach (a conservative assumption given that 42% of agents operate without any monitoring to catch escalation early), the sector’s annual AI-agent-related breach exposure exceeds $2.28 billion(https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls)(https://www.gravitee.io/state-of-ai-agent-security). That is a single-year estimate. Healthcare has been deploying AI agents for three.
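The sector estimate chains four inputs, and writing the chain out shows how sensitive the result is to each one. The sketch below uses only the figures quoted above; the 5% escalation rate is this article's assumption rather than a measured value, and the calculation treats each affected hospital as one incident. Function and constant names are illustrative.

```python
HEALTHCARE_BREACH_COST = 7_420_000  # IBM 2025 healthcare average, USD
SHADOW_AI_PREMIUM = 670_000         # IBM 2025 shadow AI add-on, USD
US_HOSPITALS = 6_100                # AHA count
INCIDENT_RATE = 0.927               # Gravitee healthcare incident rate
ESCALATION_RATE = 0.05              # ASSUMPTION: share of incidents that become breaches


def cost_per_breach() -> int:
    """Healthcare breach average plus the shadow AI premium."""
    return HEALTHCARE_BREACH_COST + SHADOW_AI_PREMIUM


def sector_exposure(hospitals: int = US_HOSPITALS,
                    incident_rate: float = INCIDENT_RATE,
                    escalation_rate: float = ESCALATION_RATE) -> float:
    """Estimated annual breach exposure in USD, one incident per affected hospital."""
    affected_hospitals = hospitals * incident_rate
    breaches = affected_hospitals * escalation_rate
    return breaches * cost_per_breach()


print(f"Per breach: ${cost_per_breach():,}")
print(f"Sector exposure: ${sector_exposure():,.0f}")
```

Because the estimate is linear in `escalation_rate`, halving that assumption to 2.5% halves the exposure, which is worth keeping in mind when quoting the headline figure.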

Twelve months ago, these numbers would have remained hypothetical. Flashpoint’s 2026 Global Threat Intelligence Report documented a 1,500% surge in AI-related illicit activity between November and December 2025, rising from 362,000 mentions to more than 6 million, alongside 3.3 billion compromised credentials fueling identity-based attacks. Josh Lefkowitz, Flashpoint’s co-founder and CEO, described the convergence: “Cybercrime has reached a point of total convergence, where the silos that once separated malware, identity, and infrastructure have consolidated into a single, high-velocity threat engine”. Healthcare, with its interconnected patient data systems and AI agents operating across them, sits at the center of that engine.

A hospital system operating 200 AI agents with 42% unmonitored has roughly 84 autonomous systems accessing protected health information without audit trails(https://www.gravitee.io/state-of-ai-agent-security). At $8.09 million per breach, the cost of a single undetected compromise exceeds what most mid-sized hospital systems spend on information security annually(https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls). An organization that ignores the 42% Blind Zone is not saving money on security; it is deferring an $8 million liability it has not budgeted for.

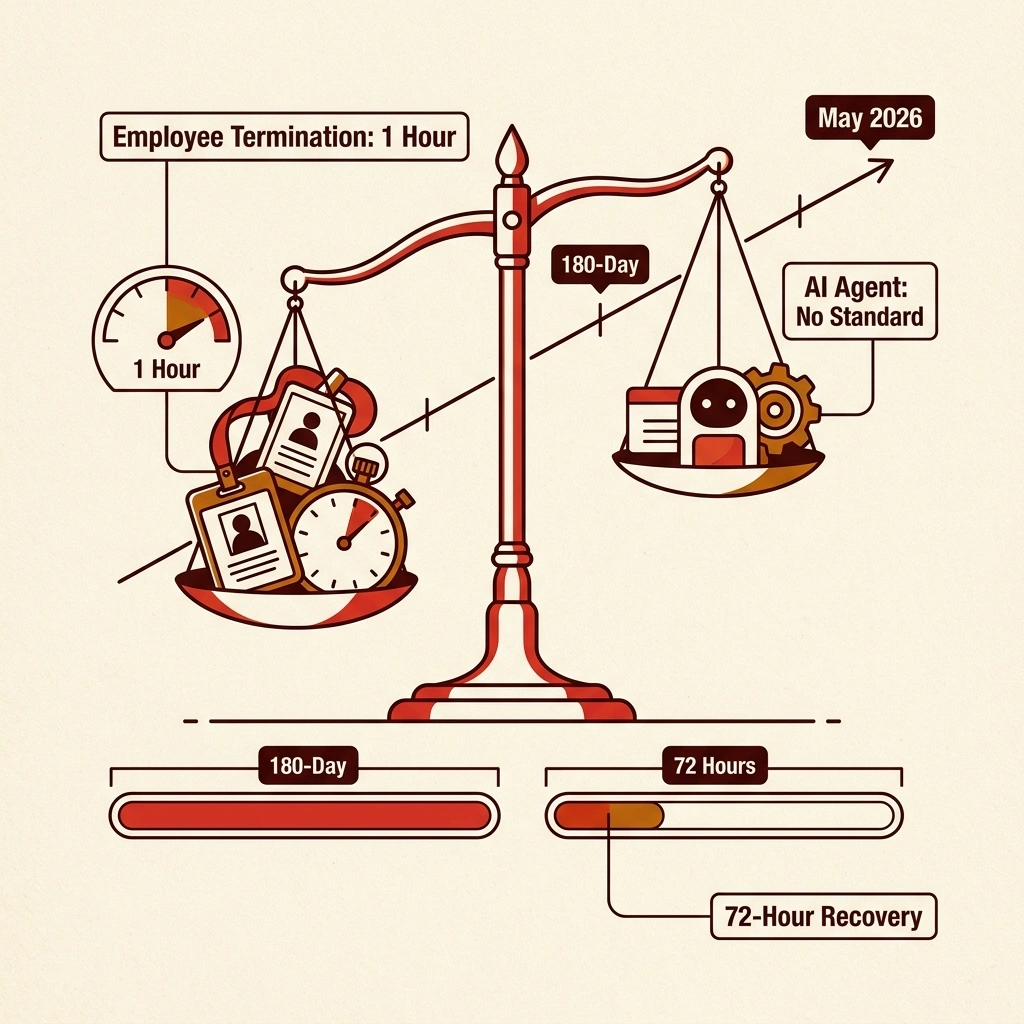

HIPAA’s May 2026 Deadline Ignores Its Biggest Threat

HHS (Department of Health and Human Services) issued a Notice of Proposed Rulemaking on December 27, 2024, to update the HIPAA Security Rule for the first time in over a decade. Expected finalization: May 2026, with a 180-day compliance window. New mandates include mandatory encryption for electronic protected health information (PHI) at rest and in transit, multi-factor authentication for all PHI access, annual penetration testing, vulnerability scanning every six months, and system recovery within 72 hours of an incident.

Not one provision in the proposed rule mentions AI agents, non-human identities, or autonomous systems. Access revocation is required within one hour of employee termination, but no equivalent standard exists for decommissioning an AI agent that has exceeded its authorized scope. Network segmentation is now mandatory, but no guidance addresses how to segment agent-to-agent communication channels. While FDA’s PCCP guidance addresses AI-powered medical devices, it does not extend to the scheduling, billing, and records-access agents where Gravitee’s data shows the highest incident concentrations.

Defenders of the current framework raise a reasonable objection: HIPAA’s existing access controls, audit requirements, and “minimum necessary” standard for data access apply to any system touching electronic protected health information, whether human or autonomous. Derek Gunny, Product Specialist at Verkada, described the trajectory optimistically: “AI-powered systems are shifting security from a reactive cost center to a proactive intelligence capability.” HIPAA’s technology-neutral language was, in fact, designed to survive generational shifts, so the framework’s theoretical coverage extends to AI agents by default.

In practice, that theoretical coverage collapses against the evidence, and the enforcement arithmetic exposes why. Regulators at the Office for Civil Rights (OCR) levied more than $6.6 million in HIPAA violation fines in 2025 alone, with individual penalties reaching $3 million per incident. Set that against the sector-wide exposure: $2.28 billion in estimated annual AI-agent-related breach costs across U.S. hospitals. OCR’s entire 2025 enforcement output represents 0.29% of the breach liability accumulating in the 42% Blind Zone(https://www.healthcarelawinsights.com/2026/02/major-hipaa-security-rule-changes-on-the-horizon-is-your-healthcare-organization-ready/)(https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls). Technology-neutral governance assumes someone is enforcing the governance. When fewer than one in six AI agents receives security approval before deployment, the distance between HIPAA’s theoretical scope and its real-world application is not a gap; it is the operating theater of the 42% Blind Zone, policed by a regulator outmatched by a factor of 345 to 1(https://www.healthcarelawinsights.com/2026/02/major-hipaa-security-rule-changes-on-the-horizon-is-your-healthcare-organization-ready/)(https://newsroom.ibm.com/2025-07-30-ibm-report-13-of-organizations-reported-breaches-of-ai-models-or-applications,-97-of-which-reported-lacking-proper-ai-access-controls).
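The two enforcement ratios come from a single division, shown here as a sketch. It uses $6.6 million as a floor for the 2025 fines (the source says "more than") and the article's own $2.28 billion exposure estimate, so both outputs inherit the assumptions behind that estimate. The names are illustrative.

```python
OCR_FINES_2025 = 6_600_000       # floor: "more than $6.6 million", USD
SECTOR_EXPOSURE = 2_280_000_000  # this article's estimate, USD


def enforcement_coverage(fines: float, exposure: float) -> tuple[float, float]:
    """Return (fines as a percent of exposure, exposure-to-fines ratio)."""
    return fines / exposure * 100.0, exposure / fines


pct, ratio = enforcement_coverage(OCR_FINES_2025, SECTOR_EXPOSURE)
print(f"{pct:.2f}% of estimated exposure")  # 0.29% of estimated exposure
print(f"{int(ratio)} to 1")                 # 345 to 1
```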

Three Moves Before the Final Rule

Problems identified in AI agent identity security, specifically the inability to revoke misbehaving agents in real time, compound in healthcare, where each agent may traverse multiple HIPAA-protected databases before anyone detects anomalous behavior. Thomas Nuth put the operational stakes plainly: “These agents are the new insider threat. If an agent is overprivileged, an attacker can use it to infiltrate data at machine speed.” Three structural changes can begin closing the 42% Blind Zone before the updated HIPAA Security Rule takes effect:

- Assign every AI agent an individual, unique identity. Shared credentials are not a convenience tradeoff; they are a forensic dead end. Each agent accessing PHI should authenticate with its own credential set (managed through a dedicated credential vault, not shared environment variables), scoped to the minimum data necessary for its function, with session logs attributable to that specific agent. The 97% of AI-breached organizations that lacked proper AI access controls cannot attribute a breach to a specific agent, or revoke one without revoking all.

- Require pre-deployment security review for every agent touching patient data. Current rates (14.4% of agents receiving full security approval) do not indicate process inefficiency. They indicate a policy vacuum. No AI agent should access electronic health records, billing databases, or patient scheduling systems without documented authorization from security or IT leadership, with scope limitations recorded and enforceable.

- Implement real-time behavioral monitoring with automated revocation. Logging what agents did after a breach is not monitoring. Monitoring means detecting when an agent accesses data outside its authorized scope and revoking access before the session completes. Agents in the 42% cohort with zero oversight represent the highest-risk segment, but partial oversight, which applies to another 43% of deployments, is not meaningfully safer if it cannot trigger automated response.
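The three moves can be sketched together as a toy, in-memory gateway. This is a minimal illustration under stated assumptions, not a reference design: `AgentIdentity`, `AgentGateway`, and the resource names are hypothetical, and a real deployment would back the token store with a credential vault and ship the audit log to a monitoring system.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class AgentIdentity:
    """One credential per agent, scoped to the resources it was approved for."""
    agent_id: str
    allowed_resources: frozenset
    approved_by: str  # who signed off on the pre-deployment review
    token: str = field(default_factory=lambda: secrets.token_urlsafe(32))
    revoked: bool = False


class AgentGateway:
    """Hypothetical gateway that every agent request passes through."""

    def __init__(self):
        self._agents = {}    # token -> AgentIdentity
        self.audit_log = []  # (agent_id, resource, outcome)

    def register(self, agent_id, allowed_resources, approved_by):
        # Move 2: no credential is issued without a named approver.
        if not approved_by:
            raise PermissionError(f"{agent_id}: no security approval on record")
        # Move 1: each agent gets its own token, never a shared key.
        identity = AgentIdentity(agent_id, frozenset(allowed_resources), approved_by)
        self._agents[identity.token] = identity
        return identity.token

    def access(self, token, resource):
        identity = self._agents.get(token)
        if identity is None or identity.revoked:
            agent_id = identity.agent_id if identity else "unknown"
            self.audit_log.append((agent_id, resource, "denied"))
            return False
        if resource not in identity.allowed_resources:
            # Move 3: an out-of-scope request revokes this agent only, immediately.
            identity.revoked = True
            self.audit_log.append((identity.agent_id, resource, "revoked"))
            return False
        self.audit_log.append((identity.agent_id, resource, "allowed"))
        return True


gateway = AgentGateway()
scheduler = gateway.register("scheduler-01", {"scheduling"}, approved_by="security-team")
billing = gateway.register("billing-01", {"billing"}, approved_by="security-team")

gateway.access(scheduler, "scheduling")  # in scope
gateway.access(scheduler, "ehr")         # out of scope: scheduler-01 is revoked
gateway.access(scheduler, "scheduling")  # refused from now on
gateway.access(billing, "billing")       # other agents are unaffected
```

Because every log entry carries an `agent_id`, the out-of-scope request is attributable to one agent, and revoking it leaves `billing-01` running. With a shared key, neither would be possible.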

The Clinical AI Agent Risk Score (calculate yours before the HIPAA deadline):

| Question | If yes | If no |
|---|---|---|
| Do all AI agents have individual credentials (not shared keys)? | +0 | +3 |
| Is every agent pre-approved by security before deployment? | +0 | +3 |
| Can you terminate a misbehaving agent in <60 seconds? | +0 | +2 |
| Do you monitor agent data access in real time? | +0 | +3 |
| Can you isolate an agent from network access? | +0 | +2 |
| Total | 0 = prepared | >6 = in the 42% Blind Zone |

A score above 6 places the organization in the cohort the data above describes: a sector where 92.7% reported AI agent incidents and an AI-involved breach costs an estimated $8.09 million. Print this table and hand it to the CISO with the HIPAA deadline circled.
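For teams that would rather script the checklist than print it, the scoring reduces to a few lines. This sketch mirrors the weights and the threshold of 6 from the table above. The dictionary keys are illustrative names chosen here, and treating a missing answer as "no" is an added assumption.

```python
# (answer key, points added when the answer is "no"), mirroring the table.
RISK_QUESTIONS = [
    ("individual_credentials", 3),
    ("pre_approved_by_security", 3),
    ("terminate_within_60s", 2),
    ("real_time_monitoring", 3),
    ("network_isolation", 2),
]
BLIND_ZONE_THRESHOLD = 6


def risk_score(answers: dict) -> int:
    """Sum the penalty for each question answered "no".

    Missing answers count as "no" (ASSUMPTION: unknown means unprepared).
    """
    return sum(points for key, points in RISK_QUESTIONS
               if not answers.get(key, False))


def in_blind_zone(score: int) -> bool:
    """True when the score is strictly above the table's threshold."""
    return score > BLIND_ZONE_THRESHOLD


answers = {
    "individual_credentials": False,
    "pre_approved_by_security": True,
    "terminate_within_60s": False,
    "real_time_monitoring": False,
    "network_isolation": True,
}
score = risk_score(answers)
print(score, in_blind_zone(score))  # 8 True
```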

A 180-day compliance window following the HIPAA Security Rule update will force healthcare organizations to demonstrate technical safeguards they do not currently have. Organizations that begin closing the 42% Blind Zone now, by credentialing agents individually, gating deployments through security review, and implementing behavioral monitoring with revocation capability, will be building toward compliance. Organizations that wait for the final rule will be building controls under deadline pressure, against a threat surface that grows with every new agent deployment.

The 42% Blind Zone is not a statistic. It is the space between what healthcare organizations have deployed and what they can see. Every agent operating in that space accesses patient data that cannot be rotated or reissued, generates liability that has already been quantified, and operates under a regulatory framework that has not yet acknowledged it exists. Whether the final rule closes this gap matters less than how many $8 million incidents will occur in the months before it tries.

References

- Gravitee State of AI Agent Security 2026 Report (blog summary). 92.7% healthcare incident rate, Jorge Ruiz shadow AI quote, survey findings

- Gravitee State of AI Agent Security Report. Healthcare sector breakdown including 42.41% unmonitored agent rate, 900+ respondent methodology

- Google Cloud Threat Horizons H1 2026 (Security Boulevard analysis by Jack Poller). 83% identity-based cloud intrusions, UNC4899 campaign details

- AI Agent Network Security: Microsegmentation (Elisity). Cross-industry 88% incident average, 14.4% security approval rate

- Cloud Security Alliance: State of Cloud and AI Security 2026. Thomas Nuth analysis on 100:1 machine-to-human identity ratio and insider threat framing

- IBM 2025 Cost of a Data Breach Report. $7.42M healthcare breach average, $670K shadow AI premium, 97% lacking AI access controls

- Flashpoint 2026 Global Threat Intelligence Report (PRWeb). 1,500% AI illicit activity surge, 3.3B compromised credentials, Josh Lefkowitz quote

- 5 HIPAA Security Rule Changes in 2026 (CBIZ). Proposed encryption, MFA, penetration testing mandates and access revocation timeline

- 2026 HIPAA Security Rule Update (Medcurity). May 2026 finalization timeline, 180-day compliance window

- Major HIPAA Security Rule Changes (Healthcare Law Insights). OCR enforcement fines totaling $6.6M in 2025, per-violation penalty range

- How AI Is Reshaping Healthcare Security in 2026 (Security Info Watch). Derek Gunny quote on AI-powered proactive security capabilities

- AHA Hospital Statistics (American Hospital Association). 6,100 hospitals count (primary source)