In late November 2025, Andrej Karpathy coded the way most senior engineers had for decades: 80% manual keystrokes, 20% AI assist. By mid-December, those numbers had completely reversed. “This is easily the biggest change to my basic coding workflow in 2 decades of programming,” said Karpathy, former director of AI at Tesla. That personal inflection point now has a market-wide counterpart: SemiAnalysis data shows Claude Code accounts for 4% of all public commits on GitHub, a number climbing fast enough to rewrite the economics of an entire industry.

Behind that percentage sits a $2.5 billion annual revenue stream, a $380 billion parent company, and a growth curve the developer tools market has never produced. Claude Code’s footprint on GitHub is only the surface metric. For small development teams and freelance engineers, the deeper signal reads in dollars: the global AI coding tools market hit $7.37 billion in 2025, and Anthropic, barely two years into selling developer products, already claims the largest share.

From 3 Commits to 135,000: The Growth Nobody Saw Coming

Claude Code launched as a research preview in early 2025. Within thirteen months, the tool grew 42,896-fold, processing more than 135,000 commits per day by February 2026. A viral post from Claude Code creator Boris Cherny in January 2026 drew 4.4 million views on X, pushing adoption beyond what even bullish analysts expected. “While you blinked, AI consumed all of software development,” said Dylan Patel, co-founder of SemiAnalysis, when publishing the data.

Growth at that pace bears no resemblance to GitHub Copilot’s path. Copilot reached 20 million total users by mid-2025 through line-by-line code suggestions inside IDEs, building market share gradually over three years. Claude Code operates from the terminal, autonomously executing multi-step workflows: reading entire codebases, planning changes, and writing across multiple files without constant developer oversight. SemiAnalysis projects that Claude Code’s output on GitHub will exceed 20% of daily commits by December 2026.

A concept SemiAnalysis calls “task horizon” (the duration an AI agent can autonomously operate before breaking down) explains why the shift feels so sudden. When that horizon stretched from minutes to hours around December 2025, delegable work grew exponentially, not linearly. Copilot helps engineers type faster. An extended task horizon replaces entire implementation cycles. Karpathy’s experience captures the difference: he went from using AI as an autocomplete to programming primarily in English, describing objectives rather than writing syntax.

The $19 Billion Bet

Anthropic’s annualized revenue hit $19 billion in March 2026, up from $9 billion just three months earlier. After closing a $30 billion Series G at a $380 billion valuation in February, the company commands more capital than any AI lab in history. Claude Code alone generates $2.5 billion annually: a single developer tool producing more revenue than most publicly traded SaaS companies earn in total.

Revenue growing faster than public commit share reveals something the raw numbers obscure. The 4% figure tracks only public repositories, while enterprise teams run Claude Code on private codebases invisible to outside measurement. Anthropic’s leap from $9 billion to $19 billion in three months suggests private adoption vastly exceeds what public data captures. Claims of 54% coding market share, if accurate, mean Anthropic has overtaken Microsoft’s Copilot operation in under two years, market capture at a speed rivaled only by mobile platforms and cloud computing.

Platform disruptions follow a recognizable pattern: the new entrant initially complements the incumbent before structurally replacing it. AI coding tools entered as autocomplete assistants, adopted by 84% of developers according to Stack Overflow’s 2025 survey. Now they are evolving into autonomous agents that handle entire workflows end-to-end, and that transition changes the math for every team making headcount decisions.

A Productivity Story That Doesn’t Add Up

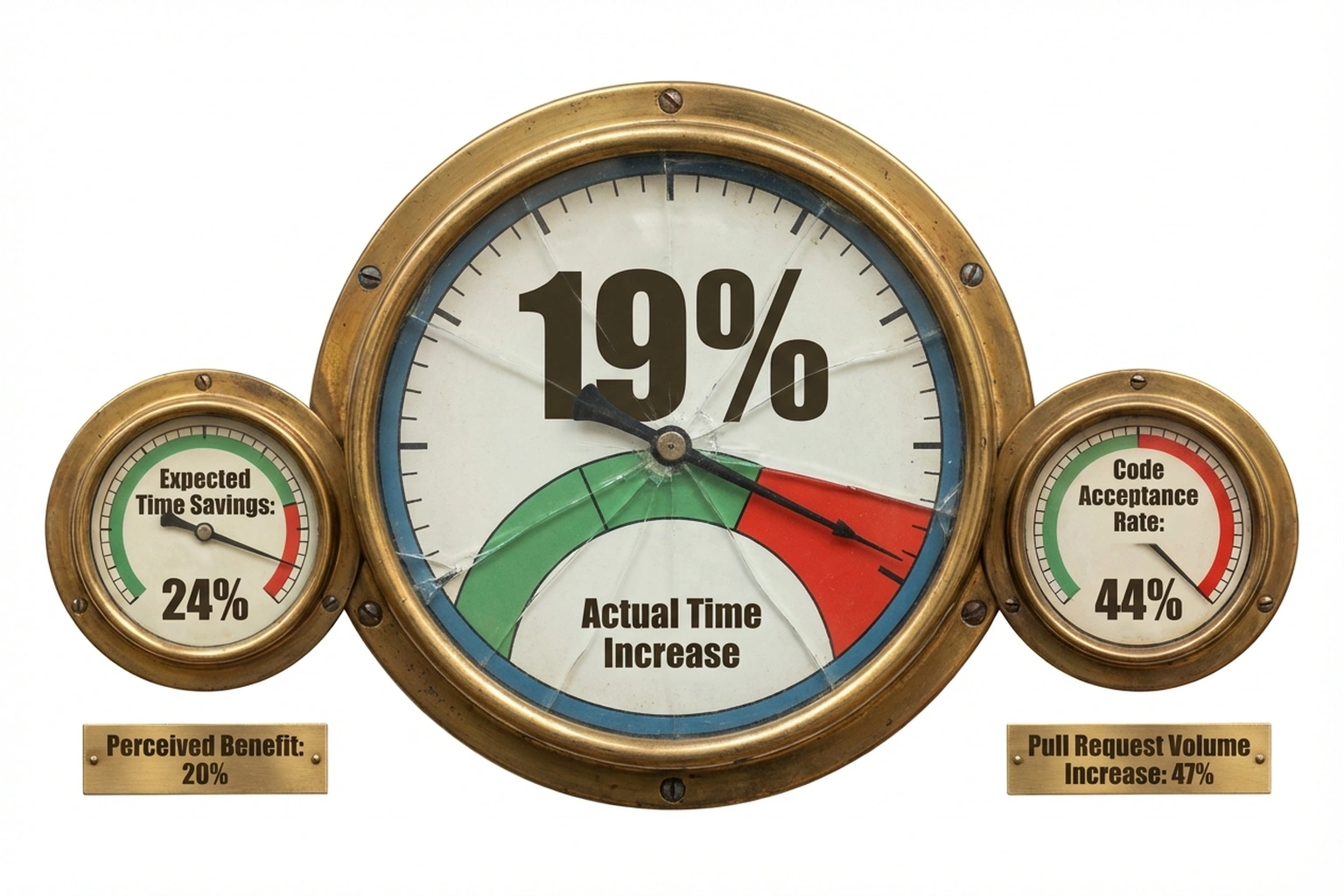

Growing adoption should mean growing productivity. Independent data tells a different story. METR, the AI evaluation organization, tested 16 experienced open-source developers on 246 real coding tasks and found that those using AI tools took 19% longer to complete issues than developers working without AI assistance.

Before starting tasks, developers predicted AI would save them 24% of their time. After finishing, they still believed it had helped by 20%. Neither estimate matched reality. Developers accepted less than 44% of generated code, spending considerable time reviewing and testing suggestions that were ultimately rejected. It is a pattern of overconfidence with real cost implications for teams budgeting around perceived AI benefits.

Faros AI’s larger-scale telemetry study, tracking 10,000 developers across 1,255 teams, complicates the picture further. Developers on high-AI-adoption teams handled 47% more pull requests daily and completed 21% more tasks. But code reviews took 91% longer, pull request sizes ballooned 154%, and bug rates rose 9%. Faros’s conclusion was blunt: “Developers are completing a lot more tasks with AI, but organizations aren’t delivering any faster.”

Combining these findings exposes what this article calls the 40-Point Illusion, a measurable feedback loop driving the entire AI coding tools market. METR shows developers expected AI to save them 24% of their time. In reality, it made them 19% slower.

That is a gap of roughly 40 percentage points (24 + 19 = 43) between perception and reality. This perception gap is the mechanism that produces the Faros paradox: organizations adopt AI tools based on developer self-reports of productivity gains, the same self-reports METR showed to be off by about 40 points. Developers report feeling more productive, management increases AI investment, output volume rises, review burden and bugs rise with it, and net delivery speed stays flat, yet developers still report feeling productive. The loop is self-reinforcing and invisible from the inside.

Neither SemiAnalysis nor METR nor Faros published this connection. But the math they independently provide tells the same story. Volume and velocity are not the same thing, and the gap between them has a price tag.

Here is a calculation none of these sources provides. Claude Code generates $2.5 billion annually from approximately 49.3 million commits per year (135,000 daily × 365). That works out to roughly $50 per Claude Code commit. A human developer earning the $120,000 U.S. median salary produces approximately 750 commits per year (industry-standard 3 per working day), putting the cost at roughly $160 per human commit. At first glance, Claude Code commits cost users one-third as much. But Faros data complicates that ratio: if each AI-generated commit carries 9% more bugs and requires 91% longer review, the effective value per commit drops sharply. A commit that ships faster but breaks more and demands more senior engineering time to review is not cheaper; it shifts costs from generation to verification. For a 5-person team, the 40-Point Illusion amounts to budgeting for AI-enhanced output while actually paying for AI-generated review overhead.
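The per-commit arithmetic above can be reproduced in a few lines. This is a back-of-envelope sketch that uses only the figures quoted in this article; the 250 working days per year is an assumption inferred from "750 commits per year at 3 per day", and dividing total revenue by public commits ignores private-repository usage, so the AI figure is best read as an upper bound.

```python
# Back-of-envelope cost per commit, using only figures quoted in the article.
CLAUDE_CODE_ANNUAL_REVENUE = 2.5e9    # USD per year
CLAUDE_CODE_DAILY_COMMITS = 135_000   # public GitHub commits per day
HUMAN_SALARY = 120_000                # USD per year (median figure cited above)
HUMAN_COMMITS_PER_DAY = 3
WORKING_DAYS_PER_YEAR = 250           # assumption: implied by 750 commits/year


def cost_per_commit(annual_cost: float, annual_commits: float) -> float:
    """Annual spend divided by annual commit count."""
    return annual_cost / annual_commits


ai_commits_per_year = CLAUDE_CODE_DAILY_COMMITS * 365
human_commits_per_year = HUMAN_COMMITS_PER_DAY * WORKING_DAYS_PER_YEAR

ai_cost = cost_per_commit(CLAUDE_CODE_ANNUAL_REVENUE, ai_commits_per_year)
human_cost = cost_per_commit(HUMAN_SALARY, human_commits_per_year)

if __name__ == "__main__":
    print(f"AI commits per year:    {ai_commits_per_year:,}")
    print(f"Cost per AI commit:     ${ai_cost:,.2f}")
    print(f"Cost per human commit:  ${human_cost:,.2f}")
    print(f"AI / human cost ratio:  {ai_cost / human_cost:.2f}")
```

The ratio comes out a little under one-third, which is where the "one-third as much" claim comes from. It says nothing about review or bug costs, which is the point of the paragraph above.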

What Small Development Teams Should Prepare For

For a three-to-ten-person development shop, the practical implications break into three phases that mirror previous technology platform shifts.

Phase one, augmentation, is already underway. Engineers add AI tools to existing workflows, handling more tickets per sprint while team structure stays intact. Fifty-one percent of professional developers now use AI tools daily, and most small teams sit at this stage. Monthly costs run a few hundred dollars per seat, and the perceived productivity gain feels real even when independent data like METR’s study suggests otherwise.

Phase two is automation. As task horizons extend, entire categories of work (scaffolding new projects, generating boilerplate, writing test suites) move to AI agents entirely. Teams that once needed a junior developer for routine implementation may not replace departing ones. Security risks compound at this stage, as autonomous coding tools introduce vulnerabilities most small shops lack the expertise to address.

Phase three is displacement. If SemiAnalysis projections hold and 20% of daily commits come from Claude Code by December 2026, the logical consequence for startups with limited runway is fewer engineering hires. Wall Street firms are already cutting thousands of positions during record revenue years, a pattern that typically reaches smaller companies 12 to 18 months later. Meanwhile, vibe coding (AI-generated applications deployed without engineering review) has emerged as the biggest shadow IT challenge, and the same dynamic will compress freelance rates as clients recalibrate what implementation work actually costs.

Engineers who invest in skills AI cannot easily replicate, such as system architecture, security review, and client communication, will remain in demand. The window for preparation narrows with every quarterly revenue report.

The Strongest Case Against Alarm

Not everyone reads these numbers as a displacement story, and the strongest counter-argument warrants genuine engagement. Karpathy himself frames AI coding as making programming “more fun” by removing tedious boilerplate, not by removing programmers. Developer demand remains strong globally, and the broader pattern of software expanding into every industry continues generating new roles faster than automation can eliminate existing ones.

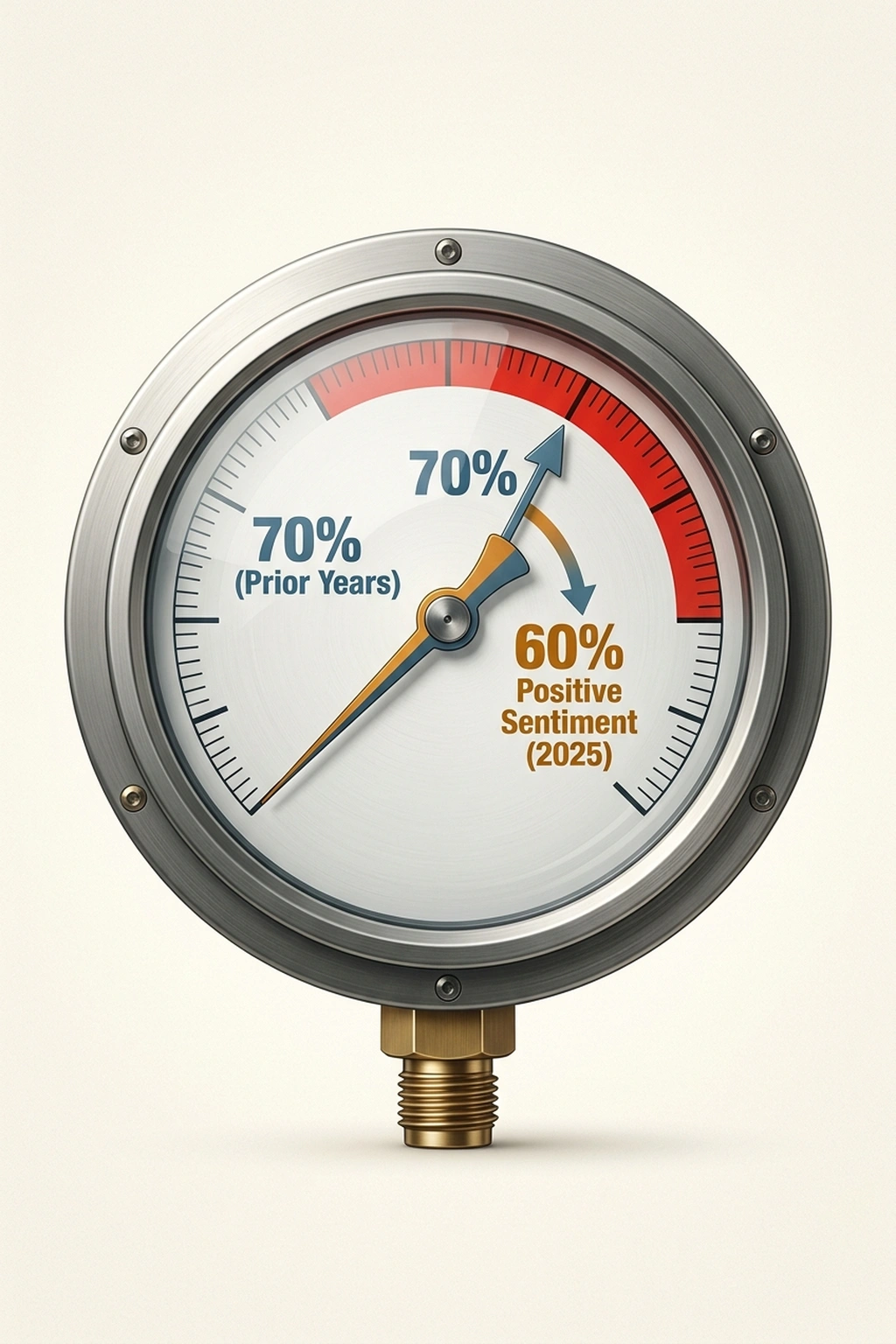

Developer trust in AI tools is actually declining even as usage rises. Positive sentiment dropped to 60% in Stack Overflow’s 2025 survey, down from over 70% in prior years, with more developers actively distrusting AI output than trusting it. Karpathy’s own “slopacolypse” warning, his prediction that AI-generated content will flood digital platforms with low-quality output, applies to code repositories too. If machine-generated code requires extensive human review, the net effect may shift what engineers do rather than eliminate the need for them.

Historical precedent backs this view. Compilers didn’t eliminate assembly programmers; they created application developers. Cloud platforms didn’t eliminate operations engineers; they birthed the DevOps discipline. Automated testing didn’t replace QA teams; it redefined their work. Every previous automation wave in software ultimately expanded the profession, often in directions nobody anticipated. Dismissing this track record requires stronger evidence than an adoption curve, however dramatic.

Strong as that argument is, it underestimates the speed differential. Past automation waves played out over decades; the industry had time to retrain, redistribute, and create new work categories. Claude Code went from research preview to 4% of public commits in thirteen months. Small development teams operating on annual budgets don’t have the luxury of waiting for labor markets to rebalance. They face hiring decisions next quarter, not next decade.

The Budget Meeting Nobody Has Scheduled

Here is the cost model a 5-person development team needs before next quarter.

Before AI tooling: 5 engineers × $120,000 salary = $600,000 annual labor cost. Standard output: ~3,750 commits per year, reviewed through normal code review processes.

After AI tooling adoption: Add Claude Code at approximately $200 per seat per month ($12,000 annually across five seats). Faros data predicts the team will produce 47% more pull requests, roughly 5,500 commits. But reviews will take 91% longer. If senior engineers previously spent 10 hours per week on review, that rises to about 19 hours. At $75 per hour, the additional 9 hours per week cost approximately $35,100 annually per senior engineer. For two senior reviewers on a 5-person team: $70,200 in additional review overhead. Bug rates rise 9%, adding estimated QA and hotfix costs of $15,000-$25,000 annually based on industry incident response benchmarks. Net delivery speed: unchanged.

The 40-Point Illusion in dollars: $12,000 in AI tooling + $70,200 in review overhead + ~$20,000 in bug costs = approximately $102,200 in annual hidden costs for a team that delivers at the same velocity. That is not a productivity investment. It is a volume tax, and the 40-Point Illusion ensures the team won’t notice until someone runs the numbers.
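For teams that want to plug in their own numbers, the cost model above fits in a small script. This is a sketch, not a benchmark: the defaults are the figures used in this article, the class and field names are made up for illustration, and the `round_hours` flag exists only because the article rounds 19.1 review hours down to 19 before multiplying.

```python
from dataclasses import dataclass


@dataclass
class TeamCostModel:
    """Hidden annual cost of AI tooling for a small team (illustrative names)."""
    engineers: int = 5
    seat_cost_per_month: float = 200.0   # approximate per-seat price used above
    senior_reviewers: int = 2
    review_hours_per_week: float = 10.0  # baseline review load per reviewer
    review_slowdown: float = 0.91        # Faros: reviews take 91% longer
    hourly_rate: float = 75.0
    weeks_per_year: int = 52
    bug_cost: float = 20_000.0           # midpoint of the $15,000-$25,000 estimate

    def tooling_cost(self) -> float:
        return self.engineers * self.seat_cost_per_month * 12

    def extra_review_hours(self, round_hours: bool = True) -> float:
        extra = self.review_hours_per_week * self.review_slowdown
        # The article rounds 9.1 extra hours to 9; disable to keep the fraction.
        return round(extra) if round_hours else extra

    def review_overhead(self, round_hours: bool = True) -> float:
        per_reviewer = (
            self.extra_review_hours(round_hours)
            * self.hourly_rate
            * self.weeks_per_year
        )
        return per_reviewer * self.senior_reviewers

    def total_hidden_cost(self, round_hours: bool = True) -> float:
        return self.tooling_cost() + self.review_overhead(round_hours) + self.bug_cost


if __name__ == "__main__":
    model = TeamCostModel()
    print(f"Tooling:         ${model.tooling_cost():,.0f}")
    print(f"Review overhead: ${model.review_overhead():,.0f}")
    print(f"Bug costs:       ${model.bug_cost:,.0f}")
    print(f"Total:           ${model.total_hidden_cost():,.0f}")
```

With the defaults, the script reproduces the $102,200 total. Without rounding, the review overhead is slightly higher, so the article's figure is, if anything, on the conservative side of its own assumptions.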

This week, run a review tax audit on your last 30 merged pull requests. Pull time-to-merge and review round counts from GitHub or GitLab analytics. Split them into AI-assisted versus human-authored commits. If AI-assisted PRs take 2x longer to merge, and the available Faros data indicates they will, schedule a 30-minute meeting with your engineering lead to decide whether the team needs a dedicated AI-output review process separate from standard code review. The bottleneck is no longer generation. It is verification. Most teams have not noticed yet.
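One way to run that audit, once the pull request data has been exported, is sketched below. Everything here is an assumption for illustration: the `PullRequest` record, its field names, and the sample timestamps are hypothetical, and how a PR gets flagged as AI-assisted (a label, a commit trailer, a self-report) is left to the team. The script compares median time-to-merge and median review rounds between the two groups.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class PullRequest:
    """Hypothetical export record; populate from your own GitHub/GitLab data."""
    opened_at: datetime
    merged_at: datetime
    review_rounds: int
    ai_assisted: bool  # assumption: the team tags AI-assisted PRs somehow


def hours_to_merge(pr: PullRequest) -> float:
    return (pr.merged_at - pr.opened_at).total_seconds() / 3600


def review_tax(prs: list[PullRequest]) -> dict[str, float]:
    """Compare median merge time and review rounds, AI-assisted vs human."""
    ai = [p for p in prs if p.ai_assisted]
    human = [p for p in prs if not p.ai_assisted]
    if not ai or not human:
        raise ValueError("need at least one PR in each group")
    ai_hours = median(hours_to_merge(p) for p in ai)
    human_hours = median(hours_to_merge(p) for p in human)
    return {
        "ai_median_hours": ai_hours,
        "human_median_hours": human_hours,
        "merge_time_ratio": ai_hours / human_hours,
        "ai_median_rounds": median(p.review_rounds for p in ai),
        "human_median_rounds": median(p.review_rounds for p in human),
    }


# Made-up sample data, only to show the shape of the input.
SAMPLE_PRS = [
    PullRequest(datetime(2026, 1, 5, 9), datetime(2026, 1, 5, 15), 1, False),
    PullRequest(datetime(2026, 1, 6, 9), datetime(2026, 1, 6, 19), 2, False),
    PullRequest(datetime(2026, 1, 5, 9), datetime(2026, 1, 6, 1), 3, True),
    PullRequest(datetime(2026, 1, 7, 9), datetime(2026, 1, 8, 5), 4, True),
]

if __name__ == "__main__":
    for key, value in review_tax(SAMPLE_PRS).items():
        print(f"{key}: {value:.2f}")
```

Medians are used rather than means so that one PR left open over a holiday does not dominate a 30-PR sample. A `merge_time_ratio` near or above 2 is the threshold the paragraph above suggests for scheduling the review-process conversation.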

Engineering managers do not forward think pieces to their VPs. They forward spreadsheets. The numbers above are the spreadsheet.

References

- Andrej Karpathy coding workflow notes: Original X post documenting the 80/20 workflow reversal in December 2025

- Andrej Karpathy Admits Software Development Has Changed for Good: Extended quotes on programming in English and the professional identity impact

- Claude Code accounts for 4% of GitHub’s public commits: GIGAZINE analysis including the “task horizon” framework

- Anthropic revenue, valuation & funding: Financial data: $2.5B Claude Code revenue, 54% coding market share

- GitHub Copilot Statistics 2026: AI coding tools market size ($7.37B) and Copilot’s 20M user base

- 4% of GitHub Commits Are Now Made by Claude Code: Growth data: 42,896x increase, 135K daily commits, Boris Cherny’s viral post

- Dylan Patel on Claude Code data: SemiAnalysis co-founder’s analysis framing the 4% milestone

- Claude Code is the Inflection Point: Full SemiAnalysis report projecting 20%+ daily commits by end of 2026

- Anthropic ARR surges to $19 billion: Revenue trajectory from $9B to $19B in three months

- Anthropic raises $30 billion Series G: $380B valuation and funding round details

- Developers remain willing but reluctant to use AI: Stack Overflow 2025 Survey: 84% adoption rate, 51% daily usage among professional developers

- Measuring the Impact of Early-2025 AI on Experienced Developer Productivity: METR’s randomized controlled trial finding AI tools made experienced developers 19% slower

- METR study: Is AI-assisted coding overhyped?: Analysis of the 24% expected speedup vs 19% actual slowdown and 44% code acceptance rate

- What METR’s Study Missed About AI Productivity in the Wild: Faros AI telemetry across 10,000 developers: 47% more PRs but no delivery speed improvement

- Karpathy’s Claude Code Field Notes: Programming “more fun,” “slopacolypse” prediction, and agent workflow analysis

- AI | 2025 Stack Overflow Developer Survey: Positive sentiment declining to 60%, growing accuracy trust concerns