Roughly one in five student interactions with AI education tools involves problematic behavior (cheating, self-harm queries, bullying, or violence), according to data from 1.2 million conversations across more than 1,300 school districts. Securly’s analysis, covering December 2025 through February 2026, offers the most complete dataset available on student safety with AI education tools in 2026, and it should settle any debate about whether this problem deserves serious attention in the current school year. Monitoring technology catches the worst interactions right now. Policy frameworks prevent most problems right now. Almost nobody deploys either. In a country where schools took a decade to figure out social media policies, the clock on AI safety is running considerably faster, and the gap between capability and adoption, not the AI itself, is where the real failure sits.

What 1.2 Million Student AI Conversations Reveal

Securly, a student safety company managing 14 million school-issued devices and reviewing 75 million daily student activities, ran the analysis from December 1, 2025 through February 20, 2026. Breaking down that 20% figure exposes two very different problems.

According to Securly’s data as analyzed by Education Week, 95% of deflected queries were students trying to get AI to finish homework: garden-variety academic dishonesty. Annoying for teachers, but not dangerous. Far more alarming, roughly 1 in 50 interactions were flagged for violence, cyberbullying, or self-harm, totaling more than 24,000 queries in under three months. Smaller but notable categories include approximately 1% involving sexual content, 1% firearms, and 0.5% each for drugs and hate speech. Standard internet searches get flagged as potentially unsafe at a rate of just 0.4%, meaning AI conversations surface concerning content at roughly five times the rate of regular browsing. ChatGPT leads student AI use at 42% of all interactions, followed by Securly’s AI Chat at 28%, Google Gemini at 21%, and education tools like MagicSchool and SchoolAI at 9%.

That tool breakdown matters because ChatGPT and Gemini are general-purpose models with zero classroom awareness. Neither suits a twelve-year-old researching self-harm or testing violent scenarios, and a recent Center for Countering Digital Hate investigation found that 8 of 10 major AI chatbots regularly assisted researchers posing as 13-year-olds in planning violent attacks. When the tools themselves cooperate with dangerous requests, the monitoring layer is not optional. It is the only barrier between a curious student and content no adult would want them reading.
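The figures in this section are easy to sanity-check. The short Python sketch below recomputes them from the rounded numbers quoted above; the variable names are illustrative, and the inputs are the article's reported figures, not Securly's raw data.

```python
# Rounded figures quoted in the article; not Securly's raw dataset.
TOTAL_CONVERSATIONS = 1_200_000
SAFETY_FLAG_RATE = 1 / 50      # flagged for violence, cyberbullying, or self-harm
SEARCH_FLAG_RATE = 0.004       # 0.4% of standard internet searches

# Share of student AI interactions by tool, in percent.
TOOL_SHARE = {
    "ChatGPT": 42,
    "Securly AI Chat": 28,
    "Google Gemini": 21,
    "Education tools (MagicSchool, SchoolAI)": 9,
}

# Number of conversations implied by the 1-in-50 rate.
safety_flagged = round(TOTAL_CONVERSATIONS * SAFETY_FLAG_RATE)

# How much more often AI conversations are flagged than ordinary searches.
ai_vs_search = SAFETY_FLAG_RATE / SEARCH_FLAG_RATE

# Combined share of the two general-purpose chatbots.
general_purpose_share = TOOL_SHARE["ChatGPT"] + TOOL_SHARE["Google Gemini"]

print(f"Safety-flagged conversations: {safety_flagged:,}")
print(f"AI flag rate vs. search flag rate: {ai_vs_search:.1f}x")
print(f"Share on general-purpose models: {general_purpose_share}%")
print(f"Tool shares sum to: {sum(TOOL_SHARE.values())}%")
```

On these inputs, the 1-in-50 rate matches the 24,000-query total, and ChatGPT and Gemini together account for 63% of student AI interactions.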

What Securly AI Monitoring and GoGuardian Filters Actually Catch

When districts actively set AI usage policies, monitoring works. Securly CEO Tammy Wincup told Education Week that 80% of student AI conversations stay within district policy when guardrails are in place. Securly’s deflection feature, rolled out in November 2025, intercepts off-policy prompts before they reach the AI model. In a pilot across 450 districts during the 2024-25 school year, the platform enabled over 250,000 safe AI conversations.

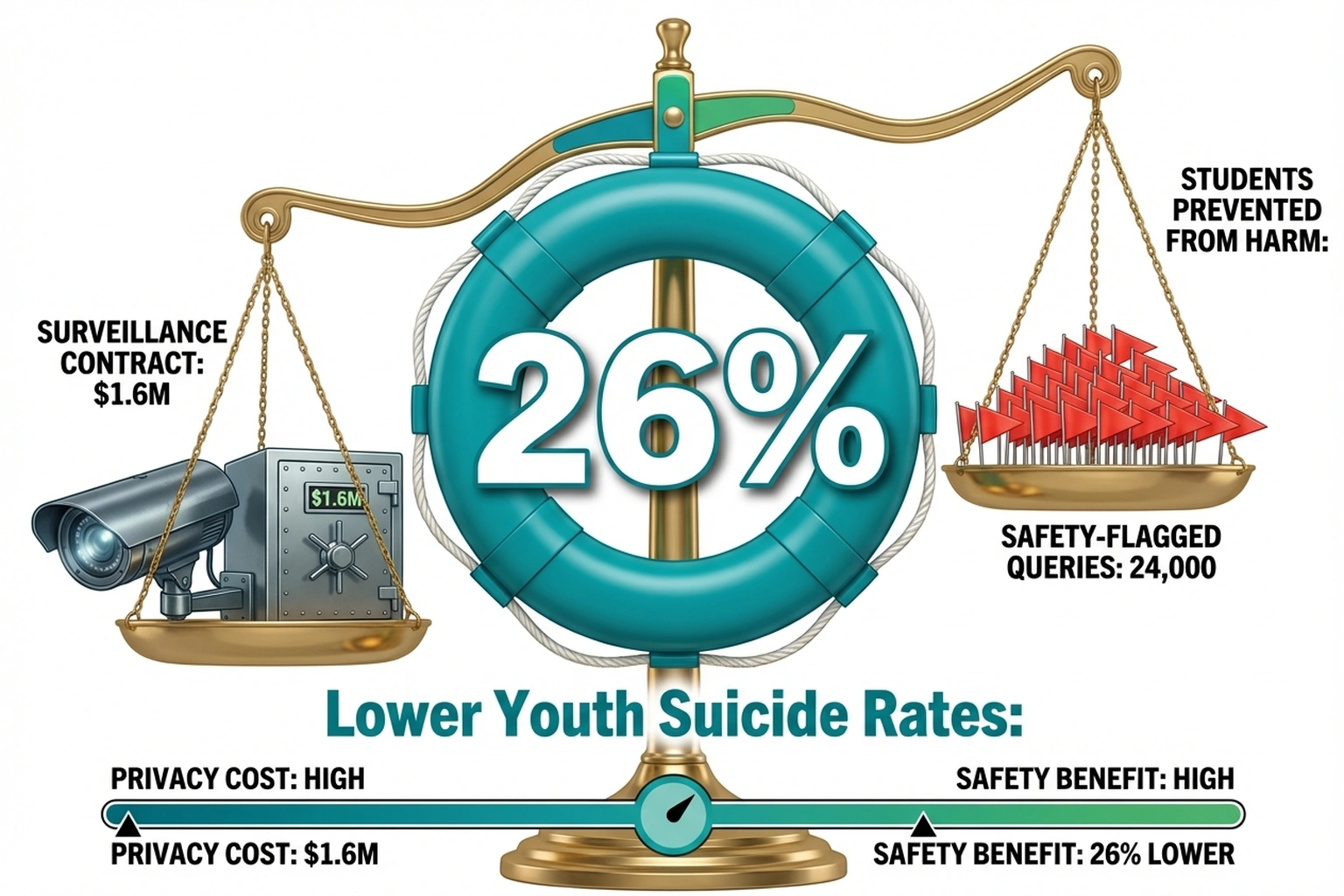

GoGuardian’s Beacon product covers a broader base: 25 million students (roughly half of all U.S. K-12 learners), with 51,000+ educators using the system. Beacon’s natural language processing detects self-harm signals in conversations on ChatGPT, Gemini, and Talkie-AI. GoGuardian reports that Beacon has kept an estimated 18,623 students from physical harm since March 2020, with counties using it seeing 26% lower youth suicide rates during 2021-2022. Both platforms meet FERPA and COPPA (Children’s Online Privacy Protection Act) compliance baselines, a detail that matters given the privacy concerns emerging from districts buying tools with little due diligence.

Verdict: both platforms demonstrate that monitoring student AI interactions at scale works right now. But Lightspeed Systems, a third major player, reports that despite 85% of schools blocking most generative AI, schools still recorded more than 10,000 safety incidents on AI sites in the past year. Blocking alone is not enough: students route around restrictions the way water routes around rocks. Active monitoring paired with clear policies is the combination that actually produces results. Call it the Governance Gap Multiplier: each percentage point of districts without AI policies multiplies the hidden incident count, because unmonitored students don’t generate fewer safety events; they generate undetected ones.

Quantify the multiplier. Securly flagged 24,000 safety incidents across monitored districts in three months. Only 23% of districts actively monitor AI use. If the remaining 77% generate incidents at even half the monitored rate (a conservative assumption, given that unmonitored environments typically show higher-risk behavior), the true national incident count is approximately 24,000 + (24,000 × 0.5 × 77/23) ≈ 64,000 safety incidents per quarter, or roughly 256,000 per school year. About 40,000 of those quarterly incidents, or roughly 160,000 a year, would go undetected. At an average intervention cost of $500 per safety event (counselor time, parent notification, documentation), the unmonitored 77% faces approximately $80 million in undetected risk annually.
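The estimate can be reproduced in a few lines, which also makes it easy to vary the assumptions. The sketch below is illustrative only: the function and constant names are hypothetical, the half-rate and $500 figures are the assumptions stated above, and the scaling treats every district as the same size.

```python
# Inputs are the article's figures plus its stated assumptions.
MONITORED_INCIDENTS_PER_QUARTER = 24_000  # flagged by Securly in about three months
MONITORED_SHARE = 0.23                    # districts that actively monitor AI use
RELATIVE_RATE = 0.5                       # assumed: unmonitored rate is half the monitored rate
COST_PER_EVENT_USD = 500                  # assumed average intervention cost
QUARTERS_PER_YEAR = 4                     # the article's annualization factor


def estimate_incidents(monitored, monitored_share, relative_rate):
    """Return (total, undetected) incidents per quarter.

    Scales the monitored count to the unmonitored share of districts,
    assuming all districts are the same size.
    """
    unmonitored_share = 1 - monitored_share
    undetected = monitored * relative_rate * unmonitored_share / monitored_share
    return monitored + undetected, undetected


total_q, undetected_q = estimate_incidents(
    MONITORED_INCIDENTS_PER_QUARTER, MONITORED_SHARE, RELATIVE_RATE
)
total_year = total_q * QUARTERS_PER_YEAR
# Cost is applied only to incidents that nobody detects.
undetected_cost = undetected_q * QUARTERS_PER_YEAR * COST_PER_EVENT_USD

print(f"Incidents per quarter: {total_q:,.0f}")
print(f"Incidents per year: {total_year:,.0f}")
print(f"Undetected incidents per year: {undetected_q * QUARTERS_PER_YEAR:,.0f}")
print(f"Undetected risk per year: ${undetected_cost:,.0f}")
```

Under these inputs the cost line, computed only on the undetected incidents, comes to about $80 million a year. Setting `RELATIVE_RATE` to 1.0 (unmonitored districts matching the monitored rate) lifts the quarterly total to roughly 104,000, which shows how sensitive the estimate is to that single assumption.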

Classroom AI Governance Is Mostly Missing

Only 23% of districts surveyed (across 8,200+ schools) actively filter or block AI tools, according to Securly. More than three-quarters of districts have students chatting with AI models under no formal governance framework at all. For anyone who builds software, the analogy writes itself: this is shipping a production application with no input validation and hoping users behave. (Spoiler: they do not.)

Consequences are already visible. At Delevan Drive Elementary in Los Angeles, fourth-graders using Adobe Express for Education to create AI-generated book covers received sexualized imagery of women in lingerie instead of a children’s book illustration. Parent Jody Hughes told CalMatters: “These tech companies are making things marketed to kids that are not fully tested.” Adobe pulled the feature for review, but the damage had already occurred, and this incident happened in a district that at least had AI tools deployed for education. Districts with zero governance have no mechanism to catch similar failures before parents discover them.

Privacy compounds the problem. In Houston, Fort Bend ISD approved a GoGuardian contract worth up to $1.6 million over five years, but basic data ownership questions remain unresolved. Tech expert Juan Guevara Torres asked FOX 26: “Who is gonna own the data that is coming from those devices?” Crime Stoppers CEO Rania Mankarios stressed that extra caution is required because the data belongs to children. Most districts purchasing monitoring tools cannot answer either question; they buy the software and skip the policy work that gives it purpose.

States Start Writing Classroom AI Governance Rules

Some states are finally codifying requirements. Ohio now mandates every school district adopt a formal AI policy by July 1, 2026, with a model policy covering AI literacy, FERPA-compliant data protection, and governance workgroups that include educators, board members, and students. California released revised AI guidelines in early 2026, developed with 50 teachers, administrators, and experts, building on earlier legislation through SB 1288 and AB 2876.

Approaches vary dramatically: some states pursue strict prohibitions below certain grade levels, others create voluntary pilot programs with privacy safeguards. The fragmented response mirrors broader patterns of governments making AI policy decisions without adequate frameworks, a failure mode hardly unique to education. Ohio’s approach at least provides a template: mandate the policy, provide a model, set a deadline. Most states have not gotten that far.

The Privacy Counterargument Schools Cannot Ignore

Digital rights advocates make a genuine case against monitoring expansion. GoGuardian’s $1.6 million Fort Bend contract places surveillance infrastructure on devices children use daily, and as crime prevention expert Rania Mankarios noted, “the data belongs to children.” The Electronic Frontier Foundation has consistently argued that school monitoring tools create dragnet surveillance that chills student expression, disproportionately flags minority students, and normalizes institutional surveillance from childhood. When GoGuardian’s Beacon fires at 2 AM for a student expressing distress to ChatGPT, the safety benefit is clear. When the same system logs every student’s academic AI conversation for five years, the privacy cost is less obvious but potentially larger.

The counterevidence: 26% lower youth suicide rates in counties using Beacon, according to GoGuardian, and an estimated 18,623 students kept from physical harm. Privacy costs are real. So are the 24,000 safety-flagged queries in three months. The question is not monitoring versus privacy; it is whether districts can deploy monitoring with data minimization, retention limits, and student-accessible audit logs that make surveillance accountable rather than ambient.

AI Education Tools Student Safety 2026: Five Questions for Parents

Districts rarely volunteer details about how AI operates on student devices. Based on gaps in current reporting, five questions deserve direct answers:

- What AI tools can students access on school-issued devices? Many districts genuinely do not know. Given that 42% of student AI use flows through ChatGPT, a consumer-grade platform with no classroom guardrails, the answer matters more than most administrators realize.

- Does the district have a written AI governance policy? No policy means membership in the 77% majority running without one. Ohio’s July 2026 mandate proves districts can do this; most states simply have not required it yet.

- What monitoring software runs on student devices, and what triggers an alert? Securly, GoGuardian, and Lightspeed handle AI conversations differently. Monitoring without policy is surveillance without purpose.

- Who controls the data from student AI conversations? This remains the hardest question for most districts to answer, and it should concern any parent whose child uses a school-issued device daily.

- What happens when a safety alert fires? With 58% of schools reporting rising student mental health support requests, adding AI-generated alerts to understaffed counseling systems raises a practical follow-up: when an alert fires at 2 AM for a student expressing suicidal ideation to ChatGPT, who picks up the phone?

Running Out of Runway

LaShawn Chatmon, CEO of the National Equity Project, told CalMatters: “Educators have a narrow window to set norms before they harden.” That window is closing faster than most administrators realize. Schools eventually built workable frameworks for internet filtering, social media policies, and device management, but slowly, with students absorbing every year of delay. AI in classrooms moves at a different speed, and the vibe-coding phenomenon shows exactly what happens when powerful tools spread faster than governance can follow.

The reality for student safety with AI education tools in 2026 is stark: twenty-four thousand safety-flagged student queries in three months is not a number that shrinks with time. Monitoring tools are ready. Policies, in most places, are not. Every semester without a governance framework is another semester of hoping that AI chatbots designed for adults will somehow know they are talking to a child.

References

- Real-Time Data Shows Exactly How Students Use AI on School Technology. Education Week analysis of Securly’s 1.2 million student interaction dataset (March 2026).

- Popular School Safety Platform Expands To Manage Student AI Use In K-12 Classrooms. Securly press release with platform scale, pilot results, and district survey data.

- GoGuardian Beacon Strengthens Student Safety with Critical AI Chat Oversight. GoGuardian announcement detailing Beacon AI chat monitoring, student safety outcomes, and suicide rate data.

- AI Images Scandalized a California Elementary School. CalMatters investigation of the Delevan Drive incident and California’s state policy response.

- Ohio Unveils Model AI Policy for Use by K-12 Schools. Government Technology coverage of Ohio’s July 2026 AI policy mandate for all school districts.

- Houston-Area Districts Confirm AI Monitoring Tools on Student Devices. FOX 26 investigation into district monitoring purchases and unresolved data privacy concerns.

- How Popular AI Chatbots Are Enabling the Next Generation of School Shooters. Center for Countering Digital Hate study testing 10 AI platforms with minor-age profiles.

- AI Risks and Safety in Schools. Lightspeed Systems data on school AI blocking rates and safety incident volume.