Seventy-four percent of senior fraud and anti-money laundering (AML) leaders now rank AI-driven fraud as a top threat, yet 67% admit their organizations lack the infrastructure to fight back with AI of their own, according to DataVisor’s March 2026 Fraud & AML Executive Report. That gap between fear and readiness is where small language models for banking fraud detection gain ground. Rather than waiting years to deploy trillion-parameter systems that cannot realistically run on-premise and may never clear a compliance review, a growing number of banks are betting on models under 15 billion parameters: compact enough to run inside their own data centers, and fast enough to flag a suspicious wire transfer before it clears.

The shift is not theoretical. TD Bank Group expects to cut insurance claims costs by $150 million through AI deployment, and is targeting 1 billion Canadian dollars (~$731 million) in annual AI-driven value across its operations. TD is hardly alone: 68% of financial institutions increased fraud-detection spending year over year, with 40% investing specifically in deep learning systems. But the trillion-dollar question is not whether banks will adopt AI. It is which kind of AI they will actually deploy, and whether that AI can operate inside their own walls rather than in someone else’s cloud.

Why Small Language Models Outperform Bigger AI in Banking Fraud Detection

Fraud detection operates on milliseconds. A wire transfer flagged 800 milliseconds after initiation might as well not be flagged at all. SLMs (small language models), typically ranging from 1 to 15 billion parameters, respond in 50 to 200 milliseconds on on-premise hardware, with recent fine-tuned variants pushing latency below 50ms. Compare that to large language models (LLMs) at 800ms or more for equivalent real-time chatbot queries, and the performance gap becomes a compliance gap: regulators increasingly expect near-instant transaction screening.

Speed alone would be enough to justify the shift, but cost is the accelerant. Running a million monthly fraud-screening conversations through a frontier LLM generates bills between $15,000 and $75,000. The same workload through a fine-tuned SLM costs $150 to $800, roughly a 100x saving when like ends of the two ranges are compared. The 180x ratio cited later in this article comes from a separate per-query comparison ($450 versus $2.50 for 10,000 queries).
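As a quick check on those ranges, the sketch below pairs the quoted monthly bills in each possible way. It uses only the dollar figures above; the function name `savings_ratio_bounds` and the dictionary keys are illustrative and do not come from any cited source.

```python
def savings_ratio_bounds(llm_low, llm_high, slm_low, slm_high):
    """Return LLM-to-SLM cost ratios for each pairing of the range endpoints."""
    return {
        "worst_case": llm_low / slm_high,   # cheapest LLM bill vs. priciest SLM bill
        "low_ends": llm_low / slm_low,      # both at the low end of their ranges
        "high_ends": llm_high / slm_high,   # both at the high end of their ranges
        "best_case": llm_high / slm_low,    # priciest LLM bill vs. cheapest SLM bill
    }


# Monthly figures quoted in the text (USD per million conversations).
ratios = savings_ratio_bounds(15_000, 75_000, 150, 800)
for name, value in ratios.items():
    print(f"{name}: {value:.2f}x")
```

Pairing like ends gives roughly 94x to 100x, while the full spread runs from about 19x to 500x depending on which ends of the two ranges are matched.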

None of that math accounts for the compliance premium. Financial institutions operating under the Bank Secrecy Act, GDPR, or Canada’s PIPEDA face hard constraints on where customer data can travel. An SLM running on a single GPU inside a bank’s own infrastructure eliminates the data-residency question entirely. For institutions still rebuilding trust after regulatory enforcement (TD Bank itself settled enforcement actions with U.S. regulators in October 2024 over AML program failures), that compliance flexibility is not optional.

Accuracy tells a more complex story. A 3.8B-parameter model like Phi-3 scores 69.0% on MMLU versus GPT-4’s 86.4%, a meaningful gap on general knowledge benchmarks. But fraud detection is not a general knowledge task. Fine-tuned on proprietary transaction histories, an SLM narrows that gap dramatically on domain-specific pattern recognition while maintaining its latency and cost advantages. Mistral 7B, another popular SLM candidate, achieves 81.0% on HellaSwag (commonsense reasoning) while processing at 200-400 tokens per second, roughly 15x faster than frontier LLMs at 15-20 tokens per second.

The AI Readiness Gap: $485 Billion in Losses, Not Enough Defense

DataVisor coined the term “AI Readiness Gap” to describe the growing disconnect between attacker capabilities and institutional defenses. Generative AI now enables deepfakes, synthetic identities, coordinated fraud rings, and automated scam campaigns at industrial scale. Global fraud losses reached $485.6 billion in 2023, a figure that predates the current wave of AI-powered attack tools.

On the defense side, the picture is worse than the headline numbers suggest. DataVisor’s report identifies legacy infrastructure, organizational silos, and outdated operating models as the primary friction points. One bright spot: 81% of financial organizations are considering or implementing a unified approach across fraud and AML, and 74% say a unified, end-to-end view of risk would materially improve detection.

But “considering” is doing heavy lifting in that sentence. PYMNTS Intelligence and Block surveyed 200 executives at U.S. financial institutions and found that 71% of total fraud incidents and dollar losses now stem from unauthorized-party schemes, up from 48% the prior year. Meanwhile, 20% of institutions, especially smaller and regional banks, still lack behavioral analytics entirely: not advanced AI, not frontier models, but any form of behavioral analysis at all.

Taken together, the readiness gap data and the SLM performance benchmarks point to what amounts to a Deployability Threshold: the point at which a model’s ability to actually run inside a bank’s compliance perimeter matters more than its benchmark accuracy. A 3.8B model scoring 69% on MMLU but running at 50ms on-premise behind an air gap beats a frontier model scoring 86% that requires cloud API calls a compliance officer will never approve. The winning variable in banking fraud detection is not intelligence; it is deployability. And deployability favors small.
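One way to read that threshold is as a two-stage selection: hard deployment constraints filter the candidates first, and benchmark accuracy only ranks whatever survives. The following is a minimal sketch of that idea, not any bank’s actual procedure. The `Candidate` class, the `pick_deployable` function, and the 200ms latency budget are assumptions made for illustration; the scores and latencies are the figures quoted in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    benchmark_score: float   # e.g. MMLU percentage
    latency_ms: float        # end-to-end response time
    runs_on_premise: bool    # can it run inside the compliance perimeter?


def pick_deployable(candidates, max_latency_ms, require_on_premise=True):
    """Apply hard deployment constraints first, then rank by benchmark score."""
    eligible = [
        c for c in candidates
        if c.latency_ms <= max_latency_ms
        and (c.runs_on_premise or not require_on_premise)
    ]
    # Returns None when no candidate satisfies the constraints.
    return max(eligible, key=lambda c: c.benchmark_score, default=None)


candidates = [
    Candidate("small on-premise model", 69.0, 50, True),
    Candidate("frontier cloud model", 86.4, 800, False),
]

# Assumed latency budget of 200 ms (hypothetical, for illustration only).
choice = pick_deployable(candidates, max_latency_ms=200)
print(choice.name if choice else "no deployable model")
```

Under these assumed constraints the higher-scoring model never reaches the ranking step, which is the point of the argument: accuracy only matters among models that can be deployed at all.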

For institutions lagging behind, SLM-based fraud detection offers a realistic on-ramp. An SLM fine-tuned on proprietary transaction data can run on existing server hardware, requires no cloud API dependency, and deploys in weeks rather than quarters. DataVisor’s own customers report a 50% detection lift while reducing false positives when they move from rule-based systems to AI-powered detection.

TD Bank’s Billion-Dollar AI Bet

TD Bank Group is the most visible test case for AI-driven fraud defense at scale. During the bank’s Q1 2026 earnings call on February 26, 2026, Group President and CEO Raymond Chun stated the bank is “accelerating AI deployments and reducing the cost of delivery by scaling the technology.” CFO Kelvin Tran explained that “AI is helping power these savings as we scale through repeatable patterns, driving faster deployment and reduced costs.”

Concrete numbers back the ambition. TD targets 500 million Canadian dollars (~$365 million) in annualized revenue uplift from AI, plus another 500 million Canadian dollars in annualized cost savings, together making up the bank’s C$1 billion medium-term AI value target. Group Head of U.S. Retail Leo Salom confirmed that machine learning models were “implemented in our transaction monitoring system last year” with additional deployments planned over coming quarters.

For a bank that launched a new KYC platform for business users in February 2026 as part of its AML remediation, the progression from rule-based monitoring to ML-powered transaction screening represents more than a technology upgrade. After the 2024 regulatory settlement, TD’s aggressive AI investment doubles as a compliance recovery strategy, demonstrating to regulators that the bank’s systems can now detect the patterns its old infrastructure missed.

Broader cost savings reinforce the ambition. TD is targeting annualized cost reductions of 2.2 billion to 2.5 billion Canadian dollars (~$1.6 billion to $1.8 billion) across the organization, with AI acting as a force multiplier across fraud detection, claims processing, and customer service automation. At those numbers, even a modest improvement in fraud-detection accuracy (say, catching an extra 5% of synthetic identity applications) could pay for the entire SLM deployment many times over.

Why On-Premise Deployment Matters More Than Model Size

The AI banking security conversation often fixates on parameter counts, but deployment location may matter more for institutional adoption. A TD survey conducted by Léger (December 18, 2025 – January 5, 2026, n=1,517 adults and 262 business owners) found that 75% of Canadians feel more vulnerable to financial fraud due to AI advancements and 82% believe scams are becoming harder to detect.

Customer trust demands that banks keep sensitive transaction data in-house. An SLM deployed on-premise satisfies that requirement while still delivering meaningful analytical capability. Models like Phi-3 (3.8B parameters) score 69.0% on MMLU and 82.0% on GSM8K: not frontier-model territory, but more than sufficient for pattern matching against known fraud typologies when fine-tuned on domain-specific data.

| Decision Factor | Cloud LLM (e.g., GPT-5.4) | On-Premise SLM (e.g., Phi-3, Mistral 7B) |
|---|---|---|
| Latency | 200-800ms (network + inference) | 50ms (local inference) |
| Data sovereignty | Transaction data leaves premises | All data stays on-premise |
| Cost at scale (3-year) | Higher (API fees compound) | 30-50% lower above 60% GPU utilization |
| MMLU accuracy | 86.4% (GPT-4) | 69.0% (Phi-3), but fine-tuning narrows gap on domain tasks |
| Regulatory compliance | Requires data processing agreements | Inherently compliant (no third-party data transfer) |
| Deployment speed | Hours (API integration) | Weeks (infrastructure + fine-tuning) |
| Fine-tuning on proprietary data | Limited or prohibited by vendor | Full control |

For institutions weighing GPU infrastructure investment, the economics favor on-premise SLMs over cloud LLMs at scale. At consistent GPU utilization above 60-70%, on-premise deployment saves 30% to 50% over cloud LLM costs across a three-year horizon. Banks processing millions of daily transactions easily clear that utilization threshold. Cloud GPU providers like RunPod offer on-demand GPU instances that let institutions prototype SLM fine-tuning and inference workloads before committing to permanent on-premise hardware, a useful middle step for teams still sizing their deployment.
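The utilization threshold follows from a simple cost structure: on-premise spending is mostly fixed, while cloud spending scales with usage. The sketch below shows that reasoning under a deliberately simplified model. The function names are hypothetical and the dollar inputs are placeholders chosen for illustration. They are not real pricing and were not taken from any source cited here.

```python
def three_year_costs(utilization, onprem_fixed_3yr, cloud_cost_at_full_load_3yr):
    """Compare 3-year costs: on-premise treated as fixed, cloud scaling with usage."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    cloud = cloud_cost_at_full_load_3yr * utilization
    # Positive means on-premise is cheaper; negative means cloud is cheaper.
    savings_fraction = 1.0 - onprem_fixed_3yr / cloud
    return {"on_prem": onprem_fixed_3yr, "cloud": cloud,
            "savings_fraction": savings_fraction}


def break_even_utilization(onprem_fixed_3yr, cloud_cost_at_full_load_3yr):
    """Utilization at which both options cost the same under this simple model."""
    return onprem_fixed_3yr / cloud_cost_at_full_load_3yr


# Placeholder inputs (hypothetical): $400k fixed on-premise, $1M cloud at full load.
ONPREM, CLOUD_FULL = 400_000, 1_000_000
print(f"break-even utilization: {break_even_utilization(ONPREM, CLOUD_FULL):.0%}")
for u in (0.3, 0.65, 0.8):
    result = three_year_costs(u, ONPREM, CLOUD_FULL)
    print(f"utilization {u:.0%}: on-premise savings {result['savings_fraction']:+.0%}")
```

Below the break-even point the fixed hardware cost dominates and cloud is cheaper; above it, savings grow with every additional point of utilization. A real sizing exercise would also need staffing, power, and hardware refresh costs, which this sketch leaves out.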

Business Owners Are Already Under Fire

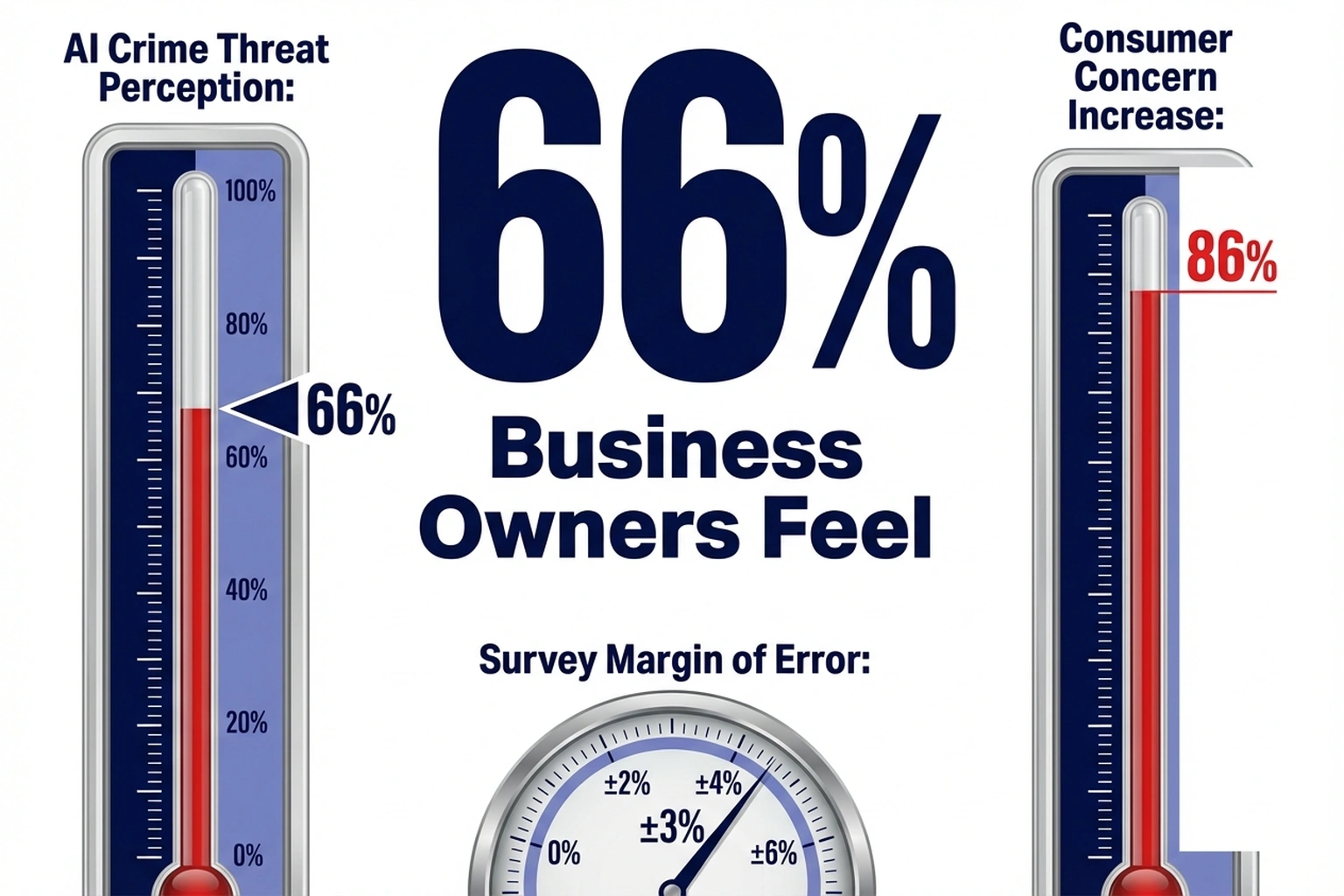

Consumer vulnerability grabs headlines, but the business side of AI fraud may be more urgent. TD’s Léger survey, with a margin of error of ±2.5%, found that 66% of business owners feel more exposed to fraud, 61% view AI-driven crime as a major threat, and 46% endured at least one fraud attempt in the past year. Among consumers, the mood is grimmer still: 86% express greater fraud concerns than five years ago, and 41% never seek fraud prevention education or advice, leaving banks as the de facto first line of defense whether they like it or not.

At the same time, 46% of financial institutions cite regulatory compliance challenges and 41% point to faster or more diverse payment types as factors complicating their defense posture. Fraud does not just drain accounts: 50% of institutions report damaged customer loyalty and 44% cite brand and reputation harm.

As attackers adopt generative AI tools to create deepfake identities and automate social engineering at scale (the kinds of attacks documented in Decoded AI Tech’s analysis of AI phishing doubling to one attack every 19 seconds), the banks that match AI with AI, quickly and affordably, will be the ones that survive the next cycle of fraud escalation. For companies still running on the hyperscaler-dependent infrastructure model, the urgency is existential.

What Comes Next for SLM-Based Fraud Detection

Half of surveyed institutions plan to expand cloud-based fraud platforms and 51% expect increased outsourcing, moves that seem counterintuitive if on-premise SLMs are the winning architecture. The reality is more layered. Most institutions will run hybrid deployments: SLMs handling real-time transaction screening on-premise, larger cloud-hosted models tackling complex investigations and cross-institutional pattern analysis. A bank might use a 7B-parameter model to screen every incoming wire transfer in under 50 milliseconds, then route flagged transactions to a larger cloud model for deeper contextual analysis, getting speed where speed matters and analytical depth where latency is tolerable.
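The hybrid pattern described above reduces to a two-tier router: every transaction passes through the fast local screen, and only flagged ones pay the latency cost of escalation. Here is a minimal sketch of that control flow. The function names, the 0.8 flag threshold, and the toy scoring rule are all hypothetical stand-ins; a real deployment would call an on-premise model and a reviewed escalation path instead.

```python
from typing import Callable


def route_transaction(
    txn: dict,
    local_score: Callable[[dict], float],
    escalate: Callable[[dict], str],
    flag_threshold: float = 0.8,  # assumed cut-off, for illustration only
) -> str:
    """Screen every transaction locally; escalate only the ones that get flagged."""
    score = local_score(txn)
    if score < flag_threshold:
        return "cleared"
    return escalate(txn)


def toy_local_score(txn: dict) -> float:
    """Stand-in for the on-premise SLM: a fixed rule, not a real model."""
    return 0.95 if txn["amount"] > 10_000 and txn["new_payee"] else 0.1


def toy_escalate(txn: dict) -> str:
    """Stand-in for the slower, deeper analysis tier."""
    return "held_for_review"


large_wire = {"amount": 25_000, "new_payee": True}
small_payment = {"amount": 120, "new_payee": False}
print(route_transaction(large_wire, toy_local_score, toy_escalate))
print(route_transaction(small_payment, toy_local_score, toy_escalate))
```

Passing the two tiers in as callables keeps the routing logic independent of whichever models sit behind them, so either tier can be swapped without touching the screening path.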

Where adoption stalls, the barrier is rarely technical. Eighty percent of FinTechs and large banks already run advanced behavioral analytics, but mid-tier and community banks face a different calculus: limited ML engineering talent, tight IT budgets, and boards that view AI as a risk rather than a risk-mitigation tool. For these institutions, the $2.50-per-month entry point of a fine-tuned open-source SLM (at roughly 10,000 queries a month) could be the difference between having AI fraud defense and having none at all.

Financial institutions currently average 158 AI models in production, expected to reach 176 within one year. Adding specialized SLMs for fraud, AML, and KYC tasks fits naturally into that model-proliferation trajectory, particularly when a fine-tuned 7B-parameter model costs $2.50 per month for 10,000 queries versus $450 for the same workload on a frontier model.

Banks that wait for “perfect” frontier-model fraud solutions will lose ground to competitors deploying imperfect-but-fast on-premise alternatives today. By the time a 1-trillion-parameter model clears a compliance review, a fine-tuned 7B model will have screened millions of transactions and improved through production feedback loops. TD Bank’s C$1 billion AI target, DataVisor’s documented readiness gap, and the 180x cost advantage driving SLM adoption in fraud detection all point in the same direction: smaller, faster, closer to the data. By late 2026, based on current adoption trajectories, most top-20 North American banks will likely have at least one on-premise SLM in their fraud-detection pipeline.

Critics of this framing point out that the Deployability Threshold argument may underweight the compounding risk of deploying models with materially lower general reasoning capacity against adversaries who will actively probe and exploit those capability gaps. The strongest version of this objection holds that fraud rings already test defenses systematically, and a 17-point MMLU gap between an SLM and a frontier model could translate directly into blind spots that sophisticated attackers, not benchmark designers, will find first. On this view, the 180x cost advantage is real but potentially a false economy if it purchases speed and compliance at the price of the very detection accuracy the system exists to provide.

References

- DataVisor Report Finds “AI Readiness Gap”: Crowdfund Insider coverage of DataVisor’s 2026 Fraud & AML Executive Report revealing the disconnect between AI-driven fraud threats and institutional defense capabilities.

- TD Bank Eyes $150M in Claims Cost Reductions, with Help from AI: CIO Dive reporting on TD Bank’s AI-driven insurance claims cost reduction targets announced during Q1 2026 earnings.

- TD Bank Scales AI to Fix AML Program: PYMNTS coverage of TD Bank’s C$1 billion AI value target, with executive quotes from Raymond Chun, Kelvin Tran, and Leo Salom.

- 68% of Banks Turn to AI as Fraud Outpaces Rule-Based Systems: PYMNTS Intelligence and Block survey of 200 U.S. financial institution executives on fraud-detection spending and AI adoption trends.

- Small language models: IBM analysis of SLM vs LLM performance benchmarks, latency comparisons, and cost economics.

- The Hidden Power of Small Language Models in Banking: Lumenalta overview of SLM deployment in banking, AI model proliferation statistics, and global fraud loss figures.

- 3 in 4 Canadians Feel More Vulnerable to AI-Powered Fraud, TD Survey Finds: TD Bank press release with Léger survey data on consumer and business fraud vulnerability perceptions.