Part 3 of 6 in the Healthcare AI series.

FDA AI Medical Device PCCP Guidance, Explained

On December 3, 2024, the FDA released final guidance under Docket FDA-2022-D-2628, titled “Marketing Submission Recommendations for a Predetermined Change Control Plan for Artificial Intelligence-Enabled Device Software Functions” (McDermott+). By January 14, 2025, the Center for Devices and Radiological Health (CDRH) had scheduled a public webinar to brief manufacturers on implementation details. The FDA issued the draft in April 2023, but the final version introduced a significant scope change: coverage broadened from machine-learning-only devices to all AI-enabled device software functions, with AI now defined as a “machine-based system that can make predictions, recommendations, or decisions.”

That scope shift has practical consequences. Rule-based clinical decision support systems now fall under the same regulatory protocol as deep learning models. With 295 AI/ML device clearances in 2025 alone and a median review time of 142 days per submission, the PCCP framework addresses a genuine regulatory bottleneck. But the compliance requirements inside the document’s three mandatory components are steeper than the summary suggests.

What Healthcare AI Compliance Looked Like Before PCCPs

Before this framework, every significant modification to an AI-enabled medical device triggered a new marketing submission — a 510(k) clearance, De Novo classification, or Premarket Approval (PMA) supplement. For machine learning models designed to improve with new training data, that review cycle created a structural mismatch: by the time one model update cleared the FDA, the next iteration was already queued for deployment. A radiology AI tool trained on chest X-rays could see a six-month review delay during which newer, higher-quality training data accumulates — meaning authorization arrives only after the model has already become obsolete.

Predetermined Change Control Plans (PCCPs) break that cycle. An authorized plan, submitted alongside the original marketing application, covers specified future modifications without requiring a new premarket submission. Eligible pathways include Traditional and Abbreviated 510(k)s, De Novo classifications, and all PMA variants — Original, Modular, 180-day, Panel Track, and Real-Time. One exclusion stands out: Special 510(k)s are not eligible.

Minor updates that do not affect safety or effectiveness still follow standard Quality System Regulation (QSR) maintenance procedures. PCCPs apply specifically to modifications that would otherwise require premarket review — algorithm retraining, performance threshold adjustments, and input data pipeline changes among them.

Three Components of an FDA AI Medical Device PCCP

Each submission mandates three components, and each carries distinct evidentiary demands.

Description of Modifications

Manufacturers must define anticipated changes, including their scope and alignment with the device’s intended use. Joint guiding principles — developed by the FDA, Health Canada, and the UK’s Medicines and Healthcare products Regulatory Agency (MHRA) and published in October 2023 — specify that covered modifications must remain “limited to modifications within the intended use or intended purpose of the original” device. Expanding clinical scope — moving from chest CT analysis to abdominal imaging, for example — falls outside an authorized PCCP and requires a fresh submission.

Specificity matters here. A PCCP that describes modifications too broadly — “the model may be retrained on new data” — provides insufficient boundaries for CDRH review. Descriptions must identify specific modification types, their triggers, and the performance envelope within which changes operate.
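One way to picture that level of specificity is as a structured record rather than free text. The sketch below is illustrative only: the class names, fields, and threshold values are hypothetical and are not taken from the guidance or from any FDA template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerformanceEnvelope:
    """Bounds a modified model must stay within (illustrative metrics)."""
    min_sensitivity: float
    min_specificity: float

    def contains(self, sensitivity: float, specificity: float) -> bool:
        return (sensitivity >= self.min_sensitivity
                and specificity >= self.min_specificity)


@dataclass(frozen=True)
class PlannedModification:
    """One anticipated change, described narrowly enough to be reviewed."""
    modification_type: str   # what kind of change is planned
    trigger: str             # the event that starts the change
    envelope: PerformanceEnvelope
    intended_use: str        # must match the originally authorized use

    def is_bounded(self, authorized_use: str) -> bool:
        # Bounded here means: type and trigger are filled in, and the
        # intended use is unchanged from the original device.
        return (bool(self.modification_type.strip())
                and bool(self.trigger.strip())
                and self.intended_use == authorized_use)


# Hypothetical example values, chosen only for illustration.
retrain = PlannedModification(
    modification_type="retrain on additional chest CT studies",
    trigger="quarterly, once 5,000 new labeled studies are available",
    envelope=PerformanceEnvelope(min_sensitivity=0.92, min_specificity=0.88),
    intended_use="chest CT analysis",
)

print(retrain.is_bounded("chest CT analysis"))      # True
print(retrain.is_bounded("abdominal CT analysis"))  # False
print(retrain.envelope.contains(0.94, 0.90))        # True
```

A record like this makes the out-of-scope case explicit: a change of intended use fails the check and falls back to a fresh submission.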

Modification Protocol

The guidance requires four specific elements: data management practices, re-training practices, performance evaluation protocols, and update procedures. For AI diagnostic tools — 71.5% of 2025 clearances targeted radiology — the data management element carries particular weight. Training data composition directly shapes model performance across patient demographics, and the final guidance explicitly flags this dependency. CDRH will likely reject modification protocols that fail to specify how new training data will be validated for demographic representation.
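A demographic representation check on incoming training data could look something like the following minimal sketch. The function name, the record layout, the reference shares, and the 5-point tolerance are all assumptions made for illustration; the guidance does not prescribe a specific test or threshold.

```python
from collections import Counter


def representation_gaps(records, attribute, reference_shares, tolerance=0.05):
    """Return subgroups whose share in `records` differs from the reference
    share by more than `tolerance` (absolute difference).

    records:          list of dicts, one per training case
    attribute:        key to group by, e.g. "sex"
    reference_shares: {subgroup: expected share in the intended-use population}
    """
    counts = Counter(r[attribute] for r in records)
    total = sum(counts.values())
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[group] = (observed, expected)
    return gaps


# Hypothetical batch: 30 female and 70 male cases against a 50/50 reference.
records = [{"sex": "F"}] * 30 + [{"sex": "M"}] * 70
gaps = representation_gaps(records, "sex", {"F": 0.5, "M": 0.5})
print(gaps)  # {'F': (0.3, 0.5), 'M': (0.7, 0.5)}
```

A non-empty result would be a signal to rebalance or supplement the batch before retraining, and a record worth keeping as protocol evidence.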

Impact Assessment

In practice, the Impact Assessment is arguably the most demanding of the three components. Manufacturers must compare modified and unmodified device versions, evaluate benefits and risks including “unintended bias,” assess verification and validation adequacy, analyze modification interactions, describe cumulative impact, and (new in the final version) account for intended-use populations by ethnicity, gender, and disease severity. According to the PCCP guiding principles, manufacturers must also implement mechanisms to detect and halt changes that fail performance standards: effectively, a kill switch for underperforming updates.

Cumulative impact analysis grows progressively harder with each successive update. A device that has gone through three retraining cycles must demonstrate that the combined effect of all modifications still meets original performance specifications — not just that the latest change passes in isolation. If update two degrades performance on a subpopulation that update one improved, teams must detect, document, and resolve that interaction before the third update proceeds.
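The two checks described above, comparing every version against the original device and catching an update that reverses an earlier gain, can be sketched in a few lines. This is a simplified illustration under stated assumptions: one metric per subgroup, hypothetical subgroup names and values, and function names invented for the example.

```python
def below_baseline(versions, margin=0.0):
    """Subgroups where any later version falls below the original device.

    versions: list of (label, {subgroup: metric}) in release order; the
    first entry is treated as the originally authorized baseline.
    """
    _, baseline = versions[0]
    findings = []
    for label, metrics in versions[1:]:
        for subgroup, base_value in baseline.items():
            value = metrics.get(subgroup)
            # A missing subgroup result counts as a failure to demonstrate.
            if value is None or value < base_value - margin:
                findings.append((label, subgroup))
    return findings


def reversed_gains(versions):
    """Subgroups where an update gives back a gain made by the previous one."""
    flags = []
    for (_, before), (_, middle), (label, after) in zip(
            versions, versions[1:], versions[2:]):
        for subgroup in sorted(before.keys() & middle.keys() & after.keys()):
            if middle[subgroup] > before[subgroup] and after[subgroup] < middle[subgroup]:
                flags.append((label, subgroup))
    return flags


# Hypothetical sensitivity figures per age group across two updates.
versions = [
    ("original", {"age<40": 0.90, "age>=40": 0.91}),
    ("update 1", {"age<40": 0.93, "age>=40": 0.91}),
    ("update 2", {"age<40": 0.89, "age>=40": 0.94}),
]

print(below_baseline(versions))  # [('update 2', 'age<40')]
print(reversed_gains(versions))  # [('update 2', 'age<40')]
```

In this made-up history, update 2 looks fine on the older group in isolation, yet both checks flag the younger group, which is the kind of interaction that has to be resolved before a third update proceeds.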

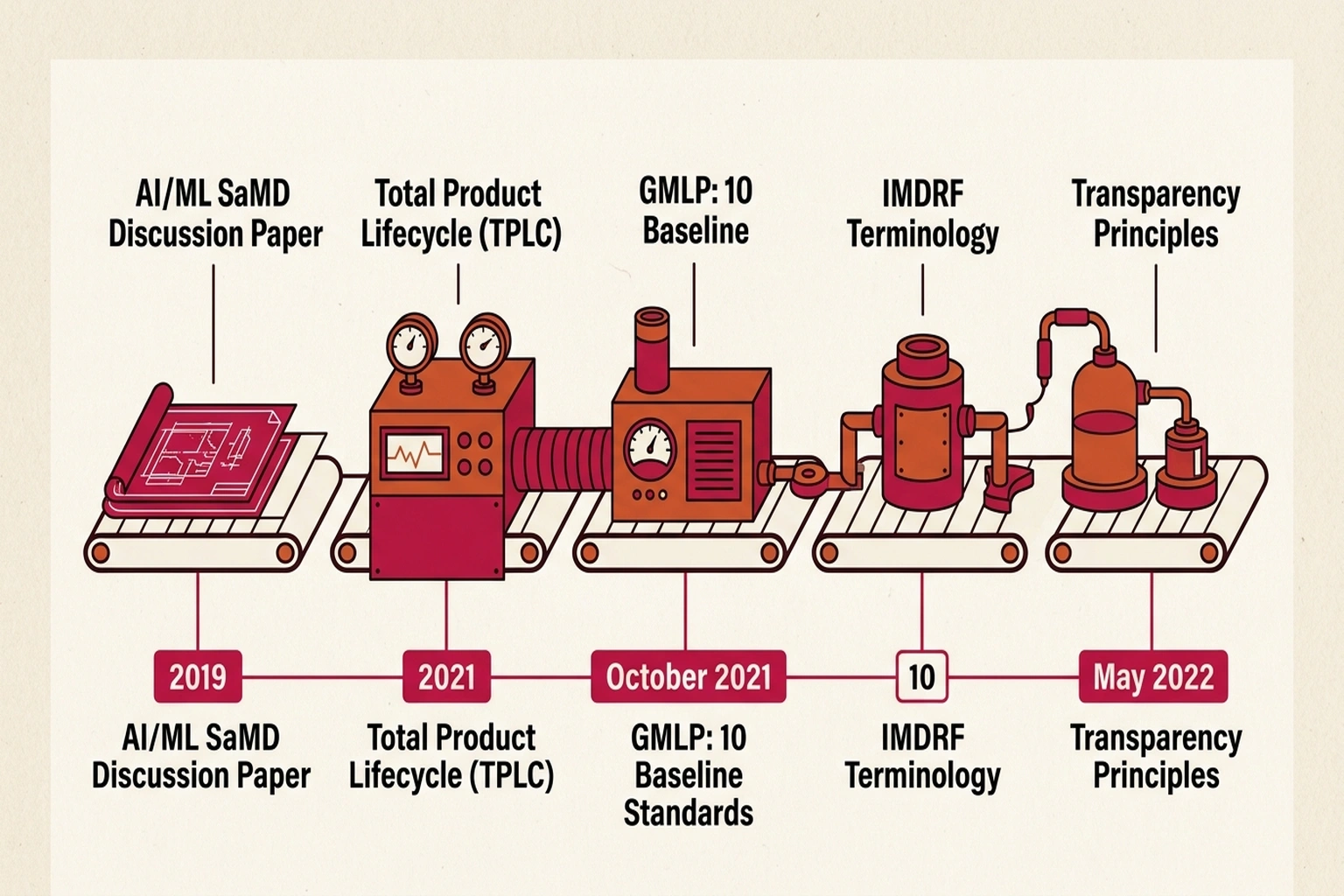

FDA AI Regulation: A Seven-Year Buildup

None of this materialized overnight. Regulatory scaffolding dates to April 2, 2019, when the FDA published its AI/ML Software as a Medical Device (SaMD) Discussion Paper, introducing the Total Product Lifecycle (TPLC) approach — the principle that AI devices require continuous oversight, not a single clearance gate. Subsequent milestones include the January 2021 AI/ML SaMD Action Plan and the October 2021 Good Machine Learning Practice (GMLP) guiding principles, the latter a trilateral effort with Health Canada and MHRA establishing 10 baseline standards for AI medical device development.

International regulatory alignment accelerated after 2022. The International Medical Device Regulators Forum (IMDRF) published standardized terminology for ML-enabled medical devices in May 2022. In June 2024, the same trilateral group released Transparency principles for ML-enabled devices, defining transparency as “the degree to which appropriate information about a MLMD is clearly communicated to relevant audiences.” Each document laid the groundwork for the PCCP framework’s five guiding principles: Focused and Bounded, Risk-based, Evidence-Based, Transparent, and Total Product Lifecycle Perspective (PCCP Guiding Principles). Across those five principles, one theme recurs: manufacturers must treat AI device modification not as a software release cycle but as a regulated clinical intervention.

Why Adoption Remains Low

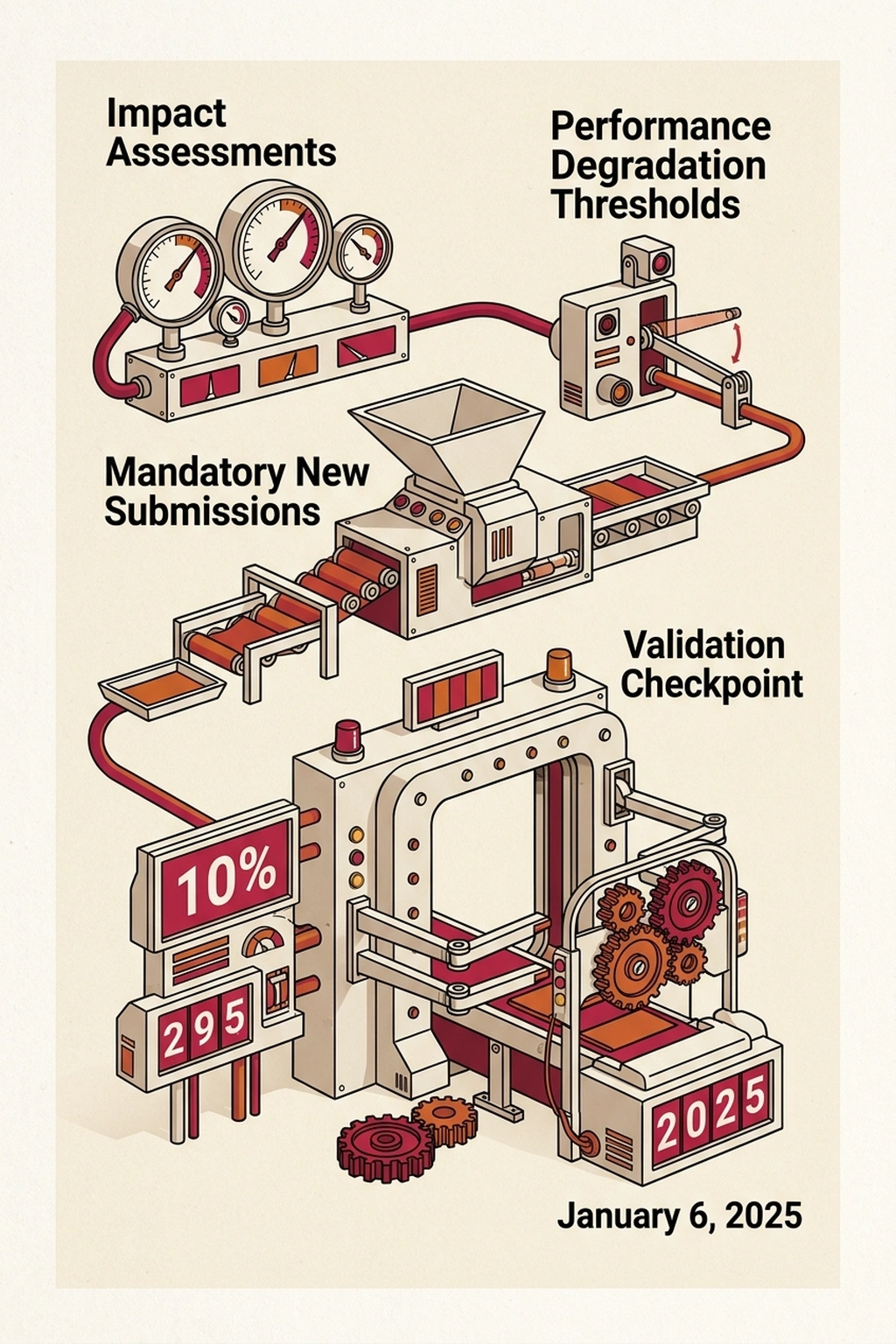

Adoption data reveals what this analysis calls the Compliance Paradox — the framework designed to accelerate AI device updates is itself too demanding for most teams to adopt. Only 10% of AI/ML devices cleared in 2025 included authorized PCCPs, despite the availability of PCCP guidance for AI medical devices since December 2024.

Calculate the cost of not having a PCCP. With a median review time of 142 days per submission, and assuming a round trip for submission and response, the regulatory queue imposes 142 × 2 = 284 days of model obsolescence per update cycle. A diagnostic AI tool that improves accuracy by 3 percentage points per retraining cycle loses 3 × (284/365) ≈ 2.3 percentage points of available accuracy improvement per year to regulatory delay. Over three years without a PCCP, that adds up to roughly 7 percentage points of accuracy that existed in the training data but never reached patients. At scale, 295 devices × 142 days of median delay comes to roughly 42,000 device-days per year of regulatory-imposed accuracy lag.
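For readers who want to check the arithmetic, the short script below reproduces it. The inputs are the article's own figures and assumptions (a doubled round trip, a 3-point gain per retraining cycle); the variable names are illustrative, and the three-year figure is a simple sum rather than a compounded one.

```python
MEDIAN_REVIEW_DAYS = 142   # median review time per submission
CLEARANCES_2025 = 295      # AI/ML device clearances in 2025
GAIN_PER_CYCLE_PTS = 3.0   # assumed accuracy gain per retraining cycle, in points

# Round trip for submission and response (an assumption of this analysis).
round_trip_days = MEDIAN_REVIEW_DAYS * 2

# Share of a year spent waiting, applied to the per-cycle gain.
lag_pts_per_year = GAIN_PER_CYCLE_PTS * round_trip_days / 365

# Three years of the same annual lag, summed (not compounded).
lag_pts_three_years = lag_pts_per_year * 3

# One median review period for every device cleared in 2025.
device_days = CLEARANCES_2025 * MEDIAN_REVIEW_DAYS

print(round_trip_days)               # 284
print(f"{lag_pts_per_year:.2f}")     # 2.33
print(f"{lag_pts_three_years:.2f}")  # 7.00
print(device_days)                   # 41890
```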

| Metric | With PCCP | Without PCCP |
|---|---|---|
| Update review time | Pre-authorized (days) | 142 days median |
| Updates per year | 4-6 (continuous) | 1-2 (queue-limited) |
| Accuracy lag | Minimal | ~2.3 points/year |
| Bias audit frequency | Per-update (continuous) | Per-submission (annual) |

Several friction points explain the low adoption.

Cumulative impact documentation is the primary barrier. Assessing a single model retrain is manageable. Documenting how sequential modifications interact — the compounding validation challenge — is considerably harder. Medical AI teams building adaptive diagnostic tools, like those pursuing cross-hospital generalization in radiology, face particular difficulty when each performance evaluation must account for every prior update in the device’s modification history. Few manufacturers have automated pipelines capable of that level of regression tracking. Most are still running manual validation cycles that scale poorly beyond two or three sequential modifications.

Bias evidence is the second friction point. Demonstrating performance parity across ethnicity, gender, and disease severity for each modification demands training datasets many manufacturers have not assembled at sufficient scale. Without minimum dataset sizes specified in the guidance, the evidentiary bar remains implicit — but the rejection risk is concrete.

Labeling creates a third ongoing obligation. Manufacturers must update device labeling and public-facing documents as each modification occurs, turning what begins as a one-time submission into a continuous disclosure program. For SaMD products — the majority of 2025 clearances — that obligation extends for the life of the device.

One path through this friction: engaging CDRH through pre-submission feedback programs before filing a formal PCCP. Early alignment on modification scope, protocol specificity, and impact assessment methodology could significantly reduce rejection risk — but it requires manufacturers to invest months of regulatory dialogue before their first authorized plan takes effect.

The strongest counterargument to expanding PCCP adoption comes from patient safety advocates: a 10% adoption rate may reflect appropriate caution, not regulatory friction. Each authorized PCCP is a pre-approved pathway for modifying a device that touches patient care — and a poorly specified PCCP that permits unchecked model drift could cause more harm than the 142-day review delay it eliminates. The FDA’s own guidance requires kill switches for underperforming updates precisely because pre-authorization without adequate monitoring creates a new risk category: silent degradation under regulatory cover.

That argument carries real weight for high-risk devices. For the 71.5% of clearances targeting radiology — where a false negative means a missed tumor — conservative review timelines are defensible. But the Compliance Paradox persists: the devices most likely to benefit from continuous updates (those processing diverse patient populations where retraining improves equity) are also the devices facing the steepest Impact Assessment burden (documenting cumulative effects across demographics). The framework incentivizes exactly the wrong behavior: manufacturers retrain less often to avoid compliance complexity, producing devices that drift further from current clinical practice with each passing quarter.

Cost of inaction for manufacturers delaying PCCP adoption: each year without an authorized plan is a year of 142-day submission cycles instead of pre-authorized updates. At an estimated $150,000 per 510(k) submission (legal, testing, documentation) and 2 submissions per year, the direct regulatory cost is $300,000 annually — plus the opportunity cost of 284 days of accuracy lag while competitors with authorized PCCPs iterate continuously.

The data point to a clear conclusion: the PCCP framework targets the right problem but sets a compliance bar higher than many healthcare AI teams anticipated. Manufacturers that treat the Impact Assessment as a formality, rather than as an ongoing evidence-generation program, are likely to see their plans rejected or scoped so narrowly they become functionally useless.

What the Next Guidance Will Demand

On January 6, 2025, the FDA published a draft guidance on AI-Enabled Device Software Lifecycle Management. If finalized, that document would establish post-market monitoring expectations for devices operating under authorized PCCPs — including how frequently manufacturers must reassess Impact Assessments and what performance degradation thresholds trigger mandatory new submissions. Without clear reassessment intervals, manufacturers face uncertainty about when voluntary re-evaluation becomes a regulatory requirement.

For healthcare AI security teams already monitoring escalating attack surfaces in medical systems, each PCCP-authorized model update adds another validation checkpoint where assumptions about safety and demographic equity could fail silently. Based on current regulatory momentum — seven years of incremental framework-building, three aligned international regulators, and a device market producing nearly 300 clearances per year — the lifecycle management guidance is likely to formalize reassessment intervals by Q4 2026, making PCCP adoption less optional than the current 10% uptake suggests.

References

- FDA Issues Final Guidance on Predetermined Change Control Plans for AI-Enabled Devices. McDermott+ analysis of the December 3, 2024 final guidance, covering three PCCP components, bias mitigation additions, and labeling requirements.
- Small Change: FDA’s Final PCCP Guidance Ditches ML and Adds Some Details. FDA Law Blog analysis by Lisa M. Baumhardt on scope expansion from ML to all AI, Special 510(k) exclusion, and Modification Protocol requirements.
- 2025 Year in Review: AI/ML Medical Device 510(k) Clearances. Innolitics statistical breakdown of 295 clearances, 10% PCCP adoption rate, and specialty distribution data.
- How the FDA’s PCCP Framework Supports AI-Enabled Medical Devices. Intertek regulatory overview of PCCP’s three components and QSR maintenance boundaries.
- FDA: Artificial Intelligence in Software as a Medical Device. FDA primary regulatory timeline from the 2019 Discussion Paper through 2025 lifecycle management draft.
- PCCP Guiding Principles for ML-Enabled Medical Devices. Joint FDA, Health Canada, and MHRA document defining five core PCCP principles and modification boundaries.
- Transparency for ML-Enabled Medical Devices: Guiding Principles. June 2024 trilateral transparency framework with six disclosure dimensions.
- IMDRF Machine Learning-Enabled Medical Devices: Key Terms and Definitions. International terminology standardization document published May 2022.