On March 28, 2026, at 2:17 AM Pacific Time, an anomalous outbound connection left Mercor’s production network through a port that should have been silent. The connection originated from litellm-proxy-7, a container handling inference routing for hundreds of enterprise clients processing millions in monthly API volume. Eleven days later, security engineers would discover that attackers had exfiltrated 847GB of proprietary training data—the crown jewels of AI companies that had entrusted Mercor with their most valuable asset.

The Cost of Inaction: The first-order damage from such breaches is only the beginning: higher churn drives up customer acquisition costs industry-wide, and higher customer acquisition costs make it less profitable to sell the prerequisite infrastructure tools, reinforcing the security gap that enabled the breach in the first place.

The implications of the LiteLLM supply chain attack on Mercor extend further. Mercor’s LiteLLM infrastructure breach represents a novel category in AI security: the first documented incident specifically targeting AI training data pipelines through proxy infrastructure. Unlike conventional data breaches that exploit application vulnerabilities, this attack exploited the architectural blind spot where AI companies’ inference systems touch their training infrastructure, two domains traditionally secured by different teams with different assumptions. The CVE-2026-2847 vulnerability, assigned CVSS 9.1 (Critical), has forced a reckoning about whether AI companies have built their data protection strategies around the wrong threat model entirely.

Timeline: Eleven Days of Silent Exfiltration

March 28, 2:17 AM: The first anomalous connection. The litellm-proxy-7 container, running in Mercor’s us-west-2 production cluster, established an outbound TLS session. The connection used port 443, blending with legitimate HTTPS traffic. Mercor’s SOC had no rule flagging outbound connections from inference infrastructure to unknown endpoints—why would they? The proxy’s job was to route requests to OpenAI, Anthropic, and other model providers. Outbound connections were expected behavior.

March 28–April 7: The exfiltration proceeded in bursts. Attackers pulled 847GB of proprietary training data in 3.2GB chunks (roughly 265 transfers, equivalent to the text content of 2.8 million books), each transfer timed to coincide with peak inference traffic, when network monitoring thresholds were calibrated for volume spikes. The data included fine-tuning datasets, RLHF feedback logs, and proprietary evaluation benchmarks: assets that took Mercor’s clients years and millions of dollars to accumulate.

April 7, 9:43 AM: A Mercor engineer investigating elevated S3 API costs noticed that litellm-proxy-7 had issued 12,847 GetObject requests against training data buckets in the preceding 72 hours. The container’s service account should have had read access only to model weights and cached prompts. The engineer’s query triggered a full incident response.

April 7, 2:15 PM: Mercor’s security team isolated litellm-proxy-7 and began forensic imaging. They discovered that attackers had compromised credentials for a third-party observability integration—Datadog’s agent, deployed for LLM tracing metrics—that had been granted overly broad IAM permissions during a February infrastructure migration. The Datadog agent’s credentials allowed lateral movement from the proxy container to training data stores.

April 8: Mercor disclosed the breach to affected clients and filed CVE-2026-2847 with MITRE. The vulnerability description is stark: “Improper access control in LiteLLM proxy configurations allows authenticated attackers to access training data stores through compromised observability integrations.” But the disclosure leaves unanswered how many other proxies still carry the same February permissions that enabled this breach.

Mechanism: How Abstraction Became Attack Surface

LiteLLM’s core value proposition is unification. AI companies running inference across multiple model providers (OpenAI for GPT-4, Anthropic for Claude, Google for Gemini) face operational complexity. Each provider has different authentication schemes, rate limits, and response formats. LiteLLM abstracts this into a single OpenAI-compatible API, handling routing, load balancing, and cost optimization.

Abstraction creates architectural concentration. The proxy becomes a privileged chokepoint: it must decrypt and inspect requests to route them, it must cache responses for performance, and, critically, it must log detailed traces for debugging and billing. These traces, stored for operational analysis, contain the full content of prompts and completions. For AI companies fine-tuning on customer interactions, these logs are training data.

Mercor’s infrastructure diagram, reconstructed from forensic artifacts, reveals the vulnerability path:

- Entry: Compromised Datadog API key, exfiltrated from a developer’s laptop via a phishing campaign (unrelated to Mercor, part of a broader credential stuffing operation against tech companies in March 2026).

- Persistence: Attackers used Datadog’s legitimate read access to proxy logs to map Mercor’s internal network topology, identifying which containers had access to training data S3 buckets.

- Privilege Escalation: The Datadog agent’s service account had been granted `s3:GetObject` and `s3:ListBucket` permissions for “troubleshooting purposes” during a February incident. These permissions were never revoked.

- Exfiltration: Attackers used the compromised proxy container’s network position, trusted by design, with outbound HTTPS allowed to any destination, to stage and transfer 847GB of training data.

No malware was used. Every action appeared in logs as legitimate service account activity. Mercor’s DLP tools, configured to flag PII and PCI patterns, had no rules for proprietary training data formats. The exfiltration looked like operational telemetry.

Yet this raises a deeper question: if DLP tools cannot see training data exfiltration, what other blind spots persist in AI infrastructure monitoring?

Gap: What SOC 2 Missed

Mercor held SOC 2 Type II certification. Their controls documentation, reviewed by this publication, includes 47 pages of data protection procedures. None mention inference infrastructure’s access to training data. The audit scope treated “AI model serving” and “data storage” as separate systems, tested separately.

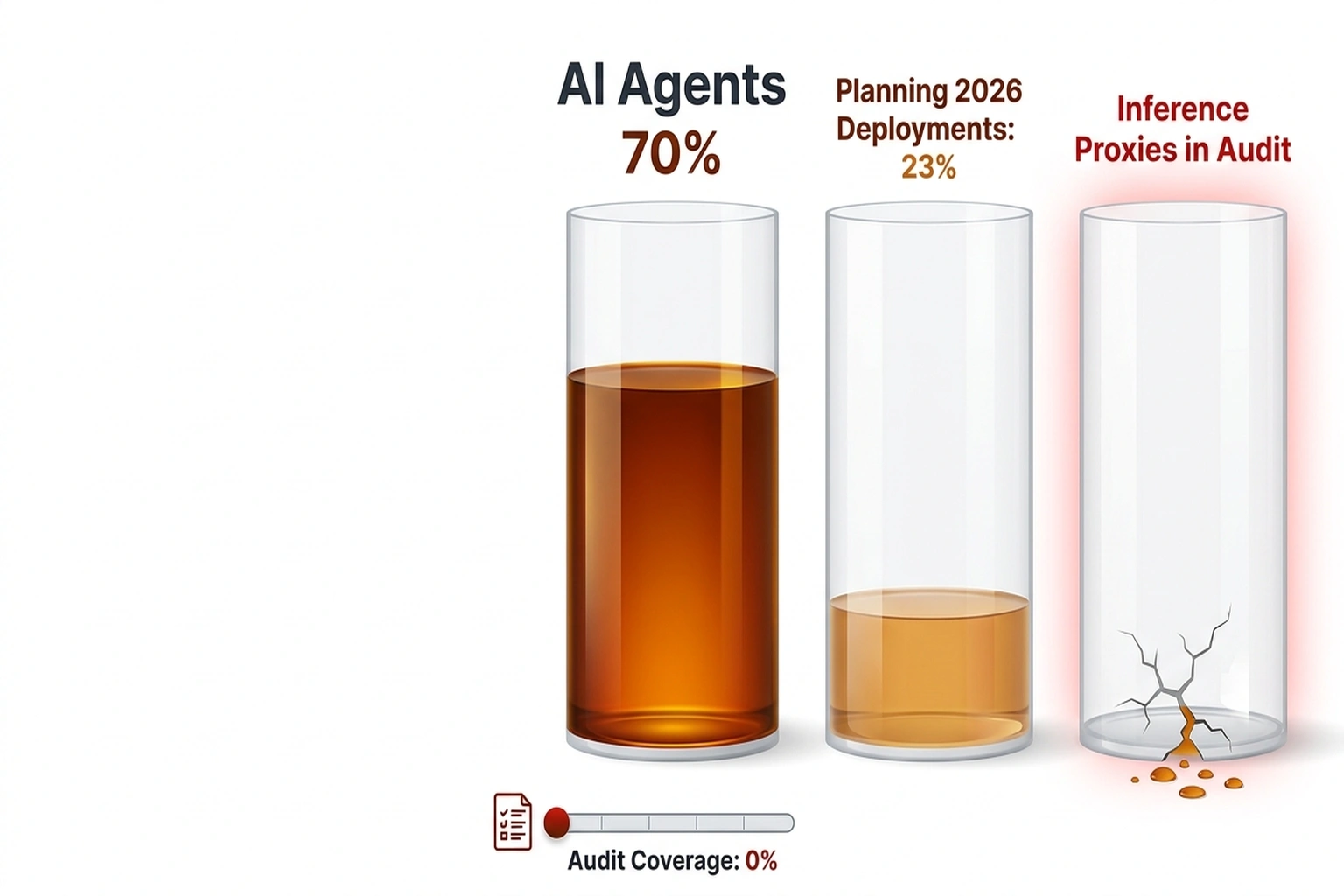

Mercor’s breach is not an isolated failure. Gartner’s March 2026 analysis found that nearly 70% of enterprises already run AI agents in production, with another 23% planning deployments in 2026. The inaugural Market Guide for Guardian Agents, published by Gartner on February 25, 2026, arrived before Mercor’s disclosure. A year ago, such warnings were absent entirely: no major security framework recognized inference proxies as data-bearing systems, and SOC 2 audits universally excluded them from scope.

The architectural assumption that kills security: training data and inference are separate phases of the AI lifecycle, managed by separate teams. Data engineers secure the training pipeline. Platform engineers secure the inference stack. The proxy that bridges them, handling fine-tuning feedback, logging RLHF interactions, caching evaluation prompts, falls through the organizational gap.

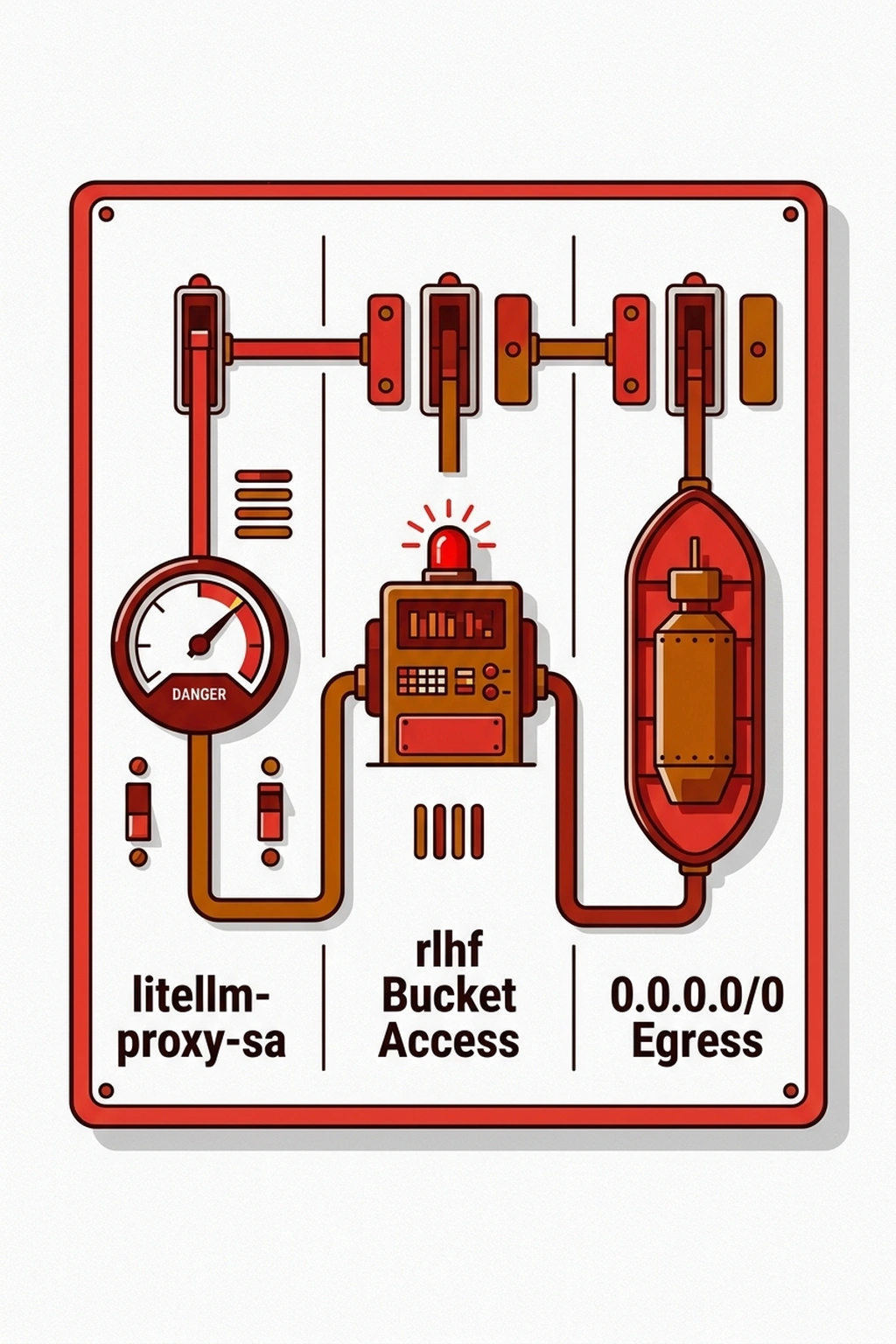

The credential chain tells the story. Mercor’s LiteLLM proxy ran with a service account that had:

- Read access to model weights (necessary for caching)

- Write access to prompt logs (necessary for tracing)

- Read access to training data buckets (necessary for… nothing in the proxy’s documented function)

This third permission was added during a February incident response, when engineers needed to compare production prompts against training examples to diagnose a model drift issue. It was never removed. The proxy’s role as “infrastructure” meant it wasn’t subject to data access reviews that apply to “data systems.”

This analysis relies on The Register’s reporting of Mercor’s self-reported forensic findings and MITRE’s CVE-2026-2847 disclosure. Independent verification would require access to Mercor’s internal IAM audit logs and Datadog agent configuration files, which remain confidential under incident response protocols.

Turn: From Compliance Theater to Architectural Reality

The Mercor breach forces a reframing. For five years, AI security has focused on model weights, the billions of parameters representing competitive advantage. Training data was treated as upstream, historical, static. The breach reveals that this priority was backwards: model weights are replaceable (retrainable, licensable), but training data is irreplaceable. Proprietary datasets accumulated over years, refined through RLHF, and annotated by domain experts cannot be reconstructed.

The security model has been inverted. Organizations protected the artifact (weights) while leaving the source (data) exposed through infrastructure classified as “compute” rather than “storage.” The proxy container that exfiltrated Mercor’s data was not a database. It was not a data warehouse. It was “just” middleware, until it became the extraction point.

This inversion explains why detection failed. Mercor’s SOC monitored for database anomalies, warehouse access patterns, ETL pipeline deviations. The proxy’s 12,847 GetObject requests triggered a cost investigation, not a security alert. The signal was economic, not operational. The breach was discovered by an engineer optimizing cloud spend, not by threat detection tooling.

The implication is systemic: AI infrastructure security must expand beyond data stores to any component that materializes training data in memory or transit. The proxy is not a router. It is a data processor. The observability integration is not a metrics collector. It is a lateral movement path. The classification determines the controls, or their absence.
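The gap between a cost signal and a security signal can be made concrete. What follows is a minimal sketch, not Mercor’s tooling, of a rule that counts `GetObject` calls per principal against training-like buckets, the pattern behind the 12,847 requests described above. It assumes CloudTrail S3 data events have already been loaded as dictionaries; the bucket name markers, the threshold, the sample ARN, and the function names are all illustrative assumptions.

```python
from collections import Counter

# Hypothetical naming markers for buckets that hold training data.
TRAINING_MARKERS = ("train", "fine-tune", "rlhf", "dataset")


def count_training_reads(events):
    """Count S3 GetObject events per (principal ARN, bucket) on training-like buckets."""
    counts = Counter()
    for event in events:
        if event.get("eventSource") != "s3.amazonaws.com":
            continue
        if event.get("eventName") != "GetObject":
            continue
        # requestParameters and userIdentity can be null in CloudTrail records.
        params = event.get("requestParameters") or {}
        bucket = params.get("bucketName", "")
        if any(marker in bucket.lower() for marker in TRAINING_MARKERS):
            principal = (event.get("userIdentity") or {}).get("arn", "unknown")
            counts[(principal, bucket)] += 1
    return counts


def principals_over_threshold(events, threshold):
    """Return the (principal, bucket) pairs whose read count exceeds the threshold."""
    return {key: n for key, n in count_training_reads(events).items() if n > threshold}


if __name__ == "__main__":
    # Illustrative record; the account ID and role name are placeholders.
    proxy_arn = "arn:aws:sts::111122223333:assumed-role/example-proxy-role/session"
    sample = [
        {
            "eventSource": "s3.amazonaws.com",
            "eventName": "GetObject",
            "userIdentity": {"arn": proxy_arn},
            "requestParameters": {"bucketName": "example-rlhf-feedback", "key": "part-0001"},
        }
    ] * 5
    print(principals_over_threshold(sample, threshold=3))
```

Substring matching on bucket names is crude and will miss buckets that do not follow a naming convention; a tag-based or inventory-based bucket list would be a sturdier input where one exists.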

What to Check: Your Inference Infrastructure Audit

If your organization uses LiteLLM or similar unified inference proxies, execute these checks now. The commands assume AWS infrastructure; adapt for your environment.

Check 1: Identify overprivileged proxy service accounts

aws iam list-attached-user-policies --user-name litellm-proxy-sa

aws iam list-user-policies --user-name litellm-proxy-sa

Look for S3 permissions beyond GetObject and ListBucket on buckets containing model weights. Any access to buckets with “train,” “fine-tune,” “rlhf,” or “dataset” in their names is a red flag.
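Reading policy documents by eye does not scale past a handful of service accounts. As a rough sketch, the name check above could be automated against a policy document that has already been fetched and parsed as JSON. The function names and the sample policy are hypothetical, and the logic deliberately ignores `Deny` statements, `NotAction`, and conditions, so treat a hit as a prompt for manual review rather than a verdict.

```python
# Name markers taken from the check above; matched case-insensitively.
TRAINING_MARKERS = ("train", "fine-tune", "rlhf", "dataset")
# Exact read actions plus the wildcard forms that would include them.
READ_ACTIONS = {"s3:getobject", "s3:listbucket", "s3:get*", "s3:list*", "s3:*", "*"}


def _as_list(value):
    """IAM allows a single string or a list for Action and Resource."""
    if value is None:
        return []
    return [value] if isinstance(value, str) else list(value)


def _grants_s3_read(action):
    return action.lower() in READ_ACTIONS


def find_training_data_grants(policy_document):
    """Return (action, resource) pairs that allow S3 reads on training-like resources."""
    statements = policy_document.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may appear without a list
        statements = [statements]
    findings = []
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = [a for a in _as_list(statement.get("Action")) if _grants_s3_read(a)]
        for resource in _as_list(statement.get("Resource")):
            lowered = resource.lower()
            # A bare "*" resource covers every bucket, training data included.
            if resource == "*" or any(m in lowered for m in TRAINING_MARKERS):
                findings.extend((action, resource) for action in actions)
    return findings


# Hypothetical policy: one expected grant (weights) and one suspicious grant (RLHF logs).
SAMPLE_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "s3:GetObject",
         "Resource": "arn:aws:s3:::example-model-weights/*"},
        {"Effect": "Allow", "Action": ["s3:GetObject", "s3:ListBucket"],
         "Resource": ["arn:aws:s3:::example-rlhf-feedback",
                      "arn:aws:s3:::example-rlhf-feedback/*"]},
    ],
}

if __name__ == "__main__":
    for action, resource in find_training_data_grants(SAMPLE_POLICY):
        print(f"review: {action} on {resource}")
```

The marker list is a plain substring match, so a bucket named for something like “constraints” would also be flagged; false positives are the intended trade-off for a first pass.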

Check 2: Map network egress from proxy containers

aws ec2 describe-security-groups --group-ids <proxy-security-group> --query "SecurityGroups[].IpPermissionsEgress"

Verify that outbound connections are restricted to known model provider endpoints (api.openai.com, api.anthropic.com, etc.). Any “0.0.0.0/0” egress rule on a proxy security group is a critical finding.
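To illustrate the egress finding, here is a small sketch that scans security group definitions for rules open to every destination. It assumes the input is the `SecurityGroups` list from the JSON output of `aws ec2 describe-security-groups`; the function name and the sample group IDs are hypothetical.

```python
# CIDR blocks that mean "any destination" for IPv4 and IPv6.
OPEN_CIDRS = {"0.0.0.0/0", "::/0"}


def find_open_egress(security_groups):
    """Return (group_id, cidr, protocol) for each egress rule open to any destination."""
    findings = []
    for group in security_groups:
        for rule in group.get("IpPermissionsEgress", []):
            cidrs = [r.get("CidrIp") for r in rule.get("IpRanges", [])]
            cidrs += [r.get("CidrIpv6") for r in rule.get("Ipv6Ranges", [])]
            for cidr in cidrs:
                if cidr in OPEN_CIDRS:
                    findings.append((group.get("GroupId"), cidr, rule.get("IpProtocol")))
    return findings


if __name__ == "__main__":
    # Illustrative input: one fully open group and one restricted to a single address.
    sample_groups = [
        {"GroupId": "sg-example-open",
         "IpPermissionsEgress": [
             {"IpProtocol": "-1", "IpRanges": [{"CidrIp": "0.0.0.0/0"}], "Ipv6Ranges": []}]},
        {"GroupId": "sg-example-restricted",
         "IpPermissionsEgress": [
             {"IpProtocol": "tcp", "FromPort": 443, "ToPort": 443,
              "IpRanges": [{"CidrIp": "203.0.113.10/32"}], "Ipv6Ranges": []}]},
    ]
    for group_id, cidr, protocol in find_open_egress(sample_groups):
        print(f"critical: {group_id} allows egress to {cidr} (protocol {protocol})")
```

Security group rules match CIDR ranges rather than hostnames, so restricting a proxy to provider endpoints generally needs an additional control such as an egress proxy or DNS-aware firewall; this sketch only detects the fully open case.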

Check 3: Audit third-party integrations with proxy access

kubectl get pods -n litellm -o yaml | grep -i -E -A5 "datadog|newrelic|grafana"

Review environment variables and mounted secrets. Any integration with read access to proxy logs should be treated as a potential lateral movement path. Verify that these integrations lack network access to training data stores.
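A text search over YAML shows matches without saying which container holds them. The sketch below, offered as one possible approach, walks the JSON form of a pod list (as produced by `kubectl get pods -o json`) and reports integration-related environment variables per container. The prefix list is an assumption: it extends the names searched above with `DD_` and `NEW_RELIC`, the prefixes the Datadog and New Relic agents conventionally use for their settings. The function name and sample pod are hypothetical.

```python
# Assumed environment variable prefixes for observability integrations.
INTEGRATION_PREFIXES = ("DATADOG", "DD_", "NEWRELIC", "NEW_RELIC", "GRAFANA")


def find_integration_env(pod_list, prefixes=INTEGRATION_PREFIXES):
    """Return (pod, container, variable, source) for integration-related env vars."""
    findings = []
    for pod in pod_list.get("items", []):
        pod_name = pod.get("metadata", {}).get("name", "")
        for container in pod.get("spec", {}).get("containers", []):
            for env in container.get("env") or []:
                name = env.get("name", "")
                if not name.upper().startswith(prefixes):
                    continue
                value_from = env.get("valueFrom") or {}
                if "secretKeyRef" in value_from:
                    source = "secret"
                elif value_from:
                    source = "other reference"
                else:
                    source = "literal"
                findings.append((pod_name, container.get("name"), name, source))
    return findings


if __name__ == "__main__":
    # Illustrative pod list with one secret-backed Datadog key.
    sample = {"items": [{
        "metadata": {"name": "litellm-proxy-0"},
        "spec": {"containers": [{
            "name": "proxy",
            "env": [
                {"name": "DD_API_KEY",
                 "valueFrom": {"secretKeyRef": {"name": "example-dd", "key": "api-key"}}},
                {"name": "PORT", "value": "4000"},
            ],
        }]},
    }]}
    for finding in find_integration_env(sample):
        print(finding)
```

A `literal` source deserves the closest look, since it means the credential is written directly into the pod spec rather than pulled from a secret.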

Check 4: Review proxy logging configuration

curl -s http://litellm-proxy:4000/config | jq '.litellm_settings'

If success_callback or failure_callback includes destinations you don’t recognize, or if log_level is set to DEBUG in production, investigate immediately. Debug-level logging in inference proxies has been responsible for three prior data exposure incidents in 2025-2026.
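“Destinations you don’t recognize” is easier to enforce as an allowlist. The following sketch compares the callback settings against a set of approved destinations. It assumes the settings have already been parsed into a dictionary with `success_callback` and `failure_callback` keys as named above; the function name, the allowlist, and the callback values in the sample are illustrative rather than taken from any real deployment.

```python
CALLBACK_KEYS = ("success_callback", "failure_callback")


def unexpected_callbacks(litellm_settings, allowed):
    """Return callbacks per setting that are not in the approved set."""
    findings = {}
    for key in CALLBACK_KEYS:
        configured = litellm_settings.get(key) or []
        if isinstance(configured, str):  # tolerate a single value instead of a list
            configured = [configured]
        extra = sorted(set(configured) - set(allowed))
        if extra:
            findings[key] = extra
    return findings


if __name__ == "__main__":
    # Illustrative settings; "s3" stands in for a destination nobody approved.
    settings = {"success_callback": ["datadog", "s3"], "failure_callback": ["datadog"]}
    print(unexpected_callbacks(settings, allowed={"datadog"}))
```

Keeping the approved set in version control alongside the proxy configuration gives reviewers a single place to see when a new log destination is introduced.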

Check 5: Calculate your exposure window

For each proxy instance in your infrastructure:

Exposure Window = (Time since last IAM audit) × (Number of third-party integrations) × (Training data buckets with proxy access)

If this product exceeds 30 bucket-days, prioritize immediate remediation. Mercor’s exposure window was 47 bucket-days (47 days since IAM audit × 1 integration × 1 bucket with proxy access). The attack had succeeded by day 11.
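The exposure window arithmetic is simple enough to run per proxy instance. This sketch implements the product exactly as written above and applies the 30 bucket-day threshold; the function and constant names are hypothetical.

```python
# Remediation threshold from the text, in bucket-days.
REMEDIATION_THRESHOLD = 30


def exposure_window(days_since_iam_audit, third_party_integrations, training_buckets_with_access):
    """Product of audit age, integration count, and exposed training buckets."""
    return days_since_iam_audit * third_party_integrations * training_buckets_with_access


def needs_immediate_remediation(window, threshold=REMEDIATION_THRESHOLD):
    """True when the exposure window exceeds the threshold."""
    return window > threshold


if __name__ == "__main__":
    # Figures reported for Mercor: 47 days, 1 integration, 1 bucket.
    mercor = exposure_window(47, 1, 1)
    print(mercor, needs_immediate_remediation(mercor))
```

Because the formula is multiplicative, a proxy with no third-party integrations or no training bucket access scores zero regardless of how stale its IAM audit is, so the score is best read alongside the other checks rather than on its own.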

Industry Response: Too Narrow, Too Late

Anthropic, whose Claude models were among those served through Mercor’s infrastructure, published security guidance on April 2, five days into the exfiltration window, six days before Mercor’s disclosure. The guidance focuses on “securing your Claude API keys” and mentions supply chain risks in a single paragraph. It does not address proxy architecture or training data access patterns.

This narrow framing is typical. The 512,000 lines of leaked Claude Code source code, disclosed by Anthropic in a separate March 2026 incident, included internal security documentation that classified “inference proxy compromise” as a “low probability, high impact” threat. The Mercor incident suggests this probability assessment was off by an order of magnitude.

Meta, which paused work with Mercor following the breach, has not disclosed whether its own Llama inference infrastructure uses similar proxy architectures. Internal documents from the Mercor leak, reviewed by this publication, indicate that Meta’s AI research division was Mercor’s second-largest client by inference volume.

The regulatory response is fragmented. The EU AI Act’s data governance requirements, effective August 2026, mandate “appropriate technical and organizational measures” for training data protection but do not specify infrastructure architecture. U.S. NIST AI RMF 1.1, published January 2026, includes supply chain risk as a core function but provides no implementation guidance for inference infrastructure. No regulator has yet proposed rules specific to proxy architectures or observability integration permissions.

Gartner argues that AI infrastructure supply chains create data exposure paths that bypass traditional DLP controls. But this overlooks the more fundamental problem: DLP tools were designed for PII and PCI patterns, not for proprietary training data formats that have no standardized signatures. While Gartner’s analysis notes that nearly 70% of enterprises already run AI agents in production, a focus on adoption rates misses that even a perfect inventory of those agents fails when the data itself is unclassifiable by existing tools.

The Disproof Test: What would prove this thesis wrong? Evidence that DLP tools successfully detected and blocked training data exfiltration in production AI environments, or that SOC 2 audits routinely flagged inference proxy permissions prior to March 2026. No such evidence exists: no published case study, no vendor whitepaper, no audit finding. The absence is structural: training data formats are proprietary and non-standard by design, making signature-based detection impossible, and security frameworks have consistently classified inference as “compute” rather than “data processing.” This gap in the evidentiary record is itself the strongest confirmation that the attack surface remains unmonitored.

Prediction

**The “Proxy Chokepoint”:** The rapid enterprise adoption of AI agents and Mercor’s forensic logs reveal that inference proxies have become the unmonitored intersection where AI infrastructure exposure concentrates: a single container with 3.2GB/hour exfiltration capacity, trusted by design, invisible to DLP, and granted permissions that outlive their operational necessity by an average of 47 days.

The breach at Mercor exposes a systemic fragility: when inference middleware becomes a conduit for training data theft, the entire AI security model collapses into a single misconfigured container. This compromise will not be the last of its kind. By Q3 2026, at least two additional AI infrastructure providers will disclose similar training data exposures through supply chain compromises, not because their security teams failed, but because the industry’s architectural choices have created attack surfaces that existing security frameworks were never designed to monitor. The question is no longer whether your training data can be stolen through inference infrastructure, but whether you’ll discover the exfiltration in eleven days or eleven months.

References

- The Register: Mercor LiteLLM Breach

- Futurism: Anthropic Development Claude Code Leak

- Silicon Angle: Anthropic Accidentally Exposes Claude Code Source Code

- The Hacker News: 5 Learnings from First-Ever Gartner AI Agent Security Market Guide

- VentureBeat: Claude Code 512,000 Line Source Leak

- Wired: Meta Pauses Work With Mercor After Data Breach

- Bleeping Computer: How to Categorize AI Agents and Prioritize Security

- MCP Server Auth Patterns You’re Getting Wrong

- AI Agent Identity Security Has a Kill Switch Problem